Author: Denis Avetisyan

New research demonstrates how symbolic machine learning can unlock interpretable models from complex, chaotic time series data.

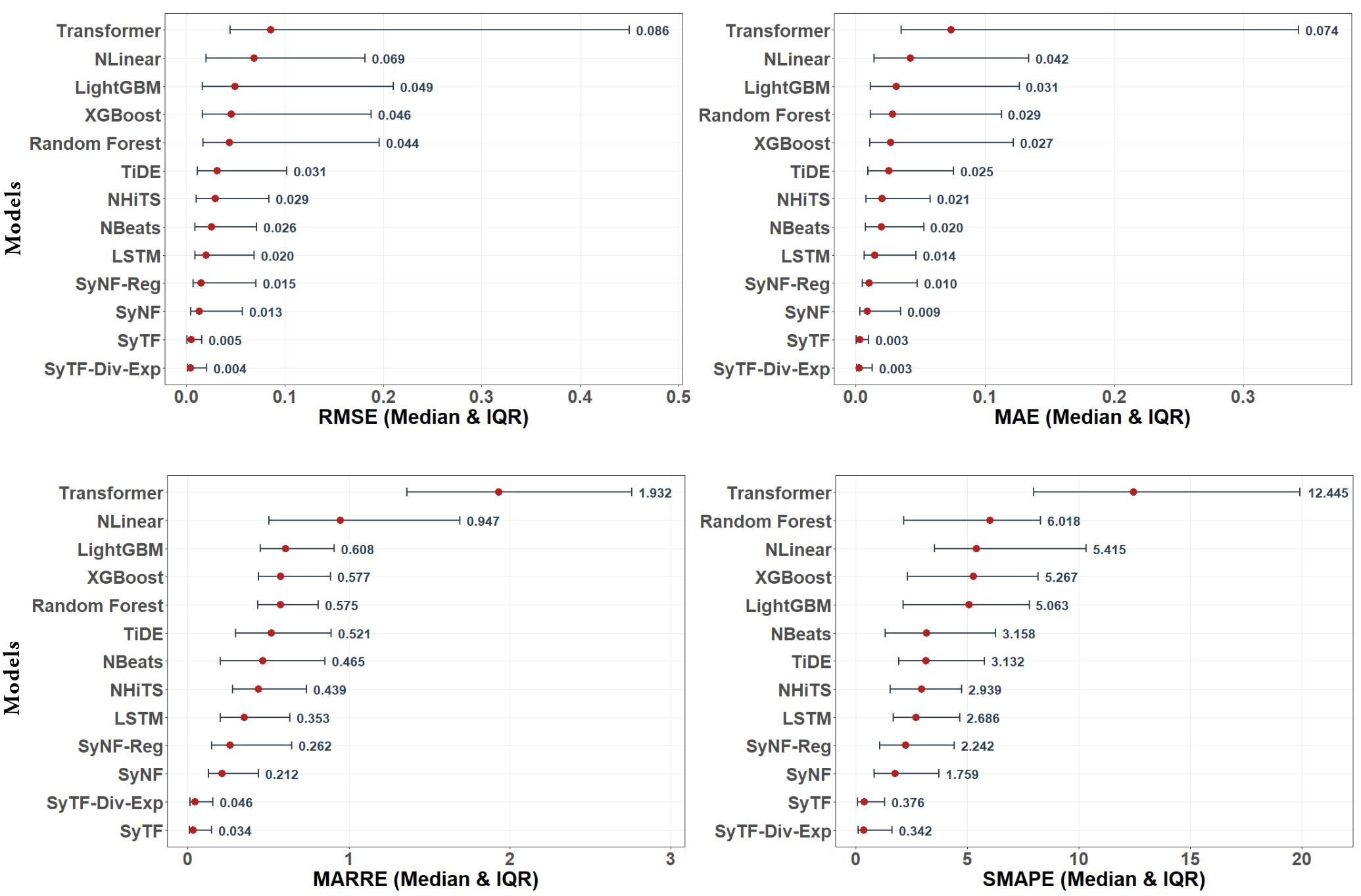

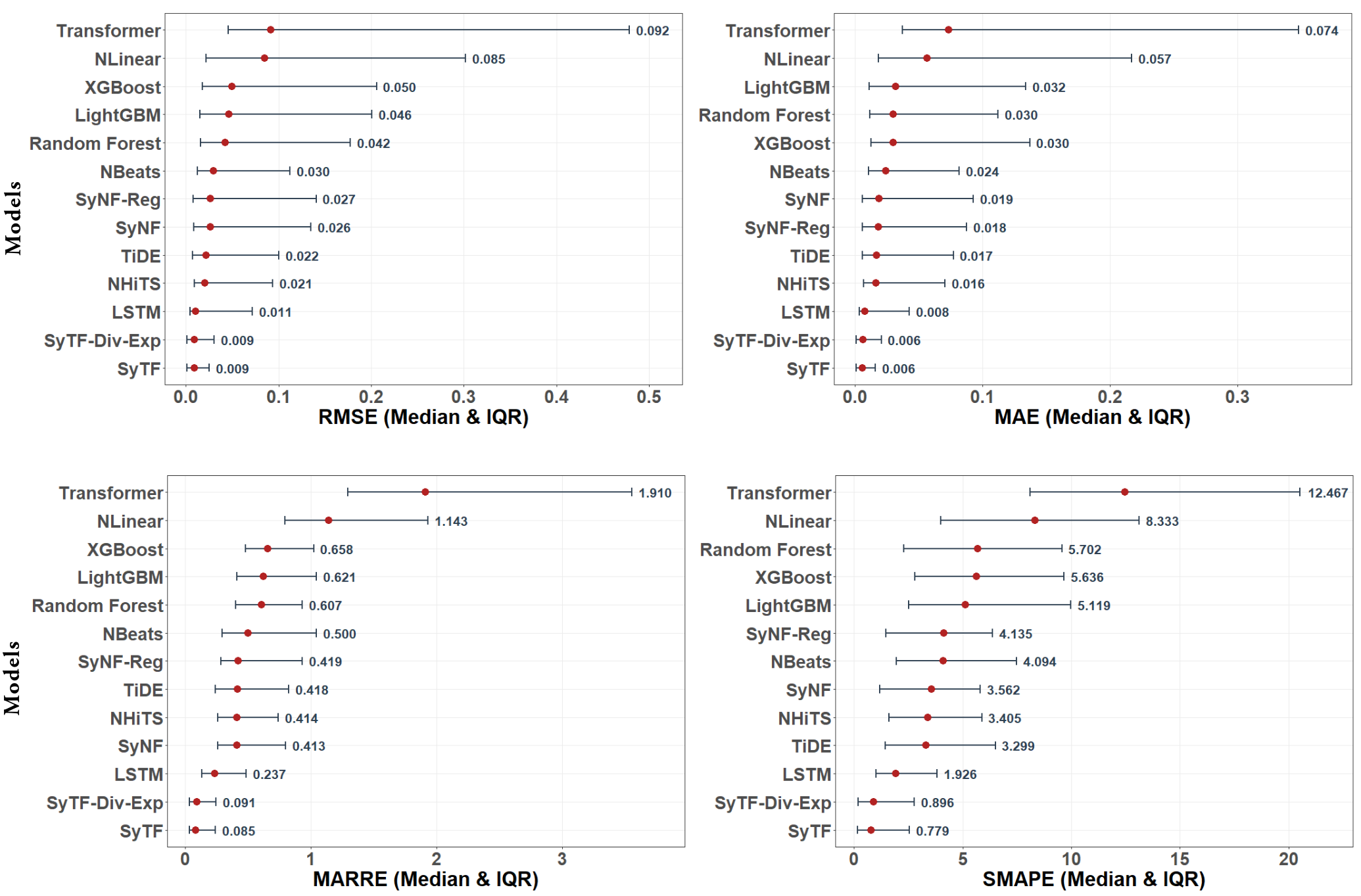

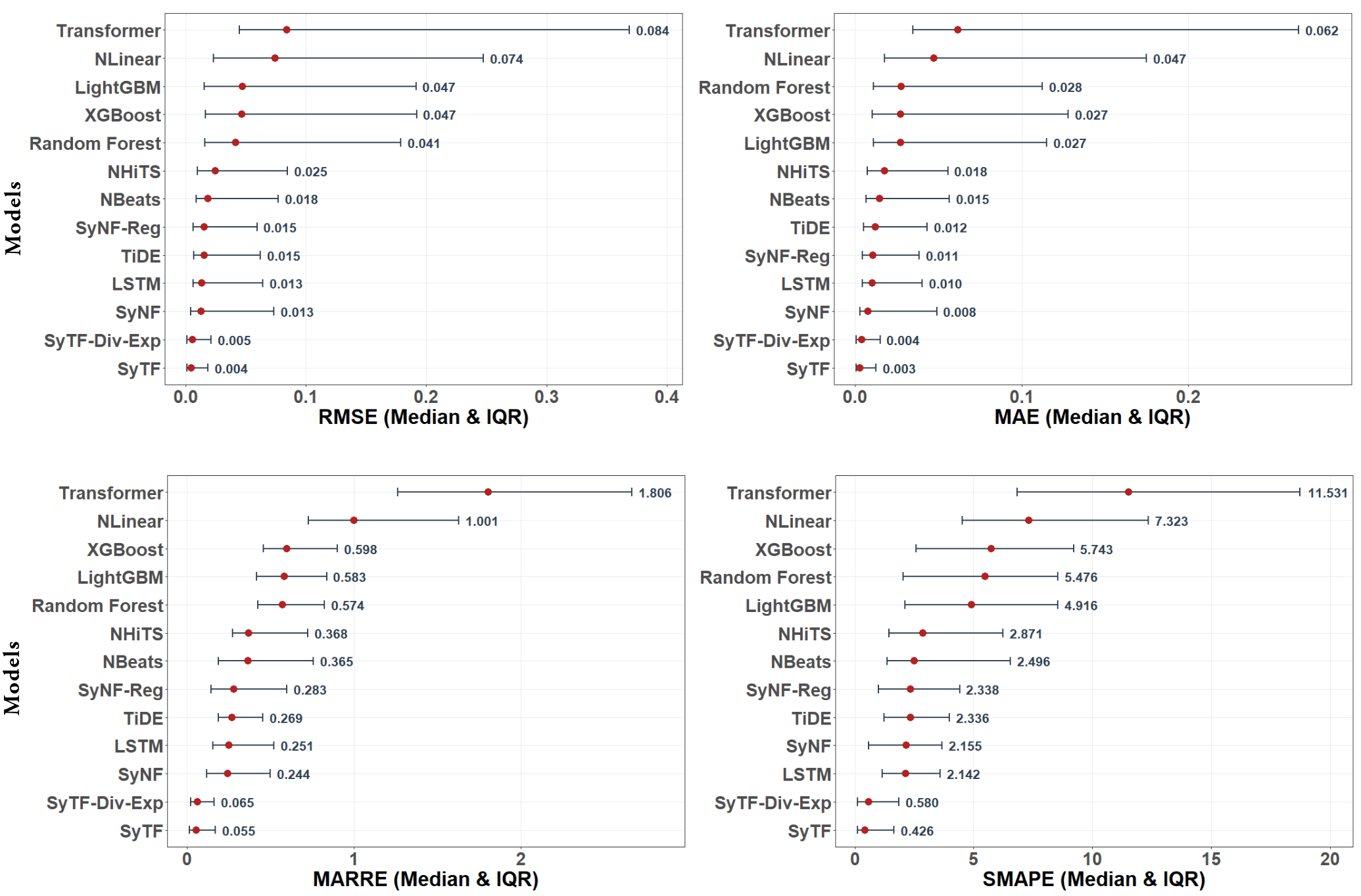

This review benchmarks symbolic regression and neural-symbolic methods for forecasting chaotic systems and discovering underlying dynamical equations.

Despite advances in forecasting, chaotic time series remain challenging due to sensitivity to initial conditions and inherent nonlinearities, often leaving modern deep learning approaches as ‘black boxes’ lacking scientific transparency. This work, ‘Turning Time Series into Algebraic Equations: Symbolic Machine Learning for Interpretable Modeling of Chaotic Time Series’, introduces and benchmarks two symbolic machine learning approaches – a neural-symbolic forecaster and a symbolic tree forecaster – capable of learning explicit algebraic equations directly from chaotic data. Results demonstrate competitive one-step-ahead accuracy across a diverse suite of benchmarks, including both synthetic and real-world chaotic systems, while simultaneously providing interpretable equations revealing underlying dynamics. Can these methods unlock a new paradigm for understanding and forecasting complex dynamical systems, bridging the gap between prediction and mechanistic insight?

Unraveling Chaos: The Limits of Prediction

The inherent difficulty in forecasting chaotic systems stems from an extreme sensitivity to initial conditions, famously dubbed the “butterfly effect.” Systems like the Lorenz and Rössler models, though defined by deterministic equations, demonstrate that even infinitesimally small differences in starting values can lead to vastly divergent outcomes over time. This isn’t merely a limitation of measurement; it’s a fundamental property of the system itself. Because perfect knowledge of initial conditions is impossible, long-term prediction becomes exponentially less accurate; the system’s future state is effectively unknowable beyond a certain horizon. This sensitivity doesn’t imply randomness, but rather a complex, interwoven dynamic where tiny, unobservable perturbations amplify, rendering extended forecasts unreliable and highlighting the limits of predictability in many natural and engineered processes.
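The divergence described above is easy to reproduce numerically. The sketch below is illustrative only: it uses the logistic map with r = 4 as a one-dimensional stand-in for the Lorenz and Rössler systems (an assumption made for brevity), and the function names `logistic_orbit` and `separation` are hypothetical.

```python
def logistic_orbit(x0, r=4.0, steps=60):
    """Iterate x[t+1] = r * x[t] * (1 - x[t]) and return the whole orbit."""
    orbit = [x0]
    for _ in range(steps):
        orbit.append(r * orbit[-1] * (1.0 - orbit[-1]))
    return orbit


def separation(x0, eps=1e-10, r=4.0, steps=60):
    """Distance between two orbits whose starting points differ by eps."""
    a = logistic_orbit(x0, r, steps)
    b = logistic_orbit(x0 + eps, r, steps)
    return [abs(u - v) for u, v in zip(a, b)]


gaps = separation(0.2)
# The initial gap is about 1e-10; it grows until it is comparable
# to the size of the attractor itself.
print(gaps[0], max(gaps))
```

Both orbits follow the same deterministic rule, yet the gap between them grows from a value far below any realistic measurement precision to something of order one within a few dozen steps.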

Conventional time series forecasting relies heavily on identifying and extrapolating patterns from past data, a strategy that proves insufficient when applied to chaotic systems. These methods, such as autoregressive integrated moving average (ARIMA) models, assume a degree of stationarity or predictable trend, but chaotic systems, by definition, are exquisitely sensitive to initial conditions. Even minuscule errors in data collection or model parameterization can rapidly amplify, leading to forecasts that diverge dramatically from actual outcomes. While these traditional techniques can offer short-term predictions or useful approximations, their inability to fully represent the nonlinear dynamics inherent in chaos severely limits their long-term predictive power, particularly in phenomena where feedback loops and complex interactions dominate. This limitation underscores the need for novel forecasting approaches that move beyond simple pattern recognition and embrace the inherent unpredictability of chaotic processes.

Many seemingly disparate real-world systems, ranging from large-scale climate patterns like the El Niño-Southern Oscillation to the spread of infectious diseases such as Dengue Fever, are now understood to operate with characteristics of chaos. This means that even with precise measurements, long-term prediction is inherently limited due to extreme sensitivity to initial conditions and complex, nonlinear interactions. Consequently, traditional forecasting techniques, often reliant on linear assumptions, prove inadequate when applied to these systems. This necessitates the development of novel approaches, incorporating concepts from chaos theory and nonlinear dynamics, to improve risk assessment and, where possible, to provide more reliable short-term predictions. These new methodologies move beyond simply predicting what will happen, and instead focus on characterizing the range of possible outcomes and quantifying the associated uncertainties.

The Ghosts in the Machine: Limitations of Existing Models

Empirical models, such as autoregressive integrated moving average (ARIMA) or various machine learning regressions, achieve predictive accuracy by directly fitting parameters to observed data within a defined calibration period. However, these models lack an underlying representation of the system’s governing dynamics; they identify correlations rather than causal mechanisms. Consequently, their performance deteriorates rapidly when applied to data outside the calibration window, particularly in chaotic systems where sensitivity to initial conditions and nonlinear interactions amplify prediction errors. The absence of structural validity means these models cannot extrapolate beyond the observed data distribution and are unable to capture the inherent unpredictability of chaotic behavior, making them unsuitable for long-term forecasting or scenario analysis.

Mechanistic models, derived from established physical laws, necessitate the definition of numerous parameters to accurately represent system behavior. These parameters often lack directly observable values and must be estimated through techniques like optimization or data assimilation. The accuracy of these estimations is heavily influenced by the quality and quantity of available data, and the model’s sensitivity to parameter variations. Furthermore, strong a priori assumptions regarding model structure and parameter ranges are frequently required to constrain the estimation process and avoid non-unique or unstable solutions. This reliance on prior knowledge and the challenges of accurate parameter estimation significantly limit the applicability of mechanistic models, particularly when dealing with complex systems or data-scarce environments.

Analytical indicators, such as Lyapunov exponents and fractal dimensions, and phase-space reconstruction techniques rely on either complete knowledge of the system’s governing equations or the assumption of relatively simple dynamics. When applied to systems with complex nonlinear couplings – where interactions between variables are not easily defined or modeled – these methods become computationally expensive and prone to error. Specifically, accurate calculation of Lyapunov exponents requires high-precision data and robust numerical integration, while phase-space reconstruction suffers from the “curse of dimensionality” as the required data volume grows exponentially with system complexity. Consequently, while providing valuable insights in controlled environments, their practical utility diminishes significantly when dealing with real-world scenarios characterized by incomplete information and intricate nonlinear interactions.
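Phase-space reconstruction is usually done with time-delay embedding. The following is a minimal sketch of that step, assuming a scalar series and analyst-chosen embedding dimension `dim` and lag `tau`; the helper name `delay_embed` is hypothetical.

```python
def delay_embed(series, dim, tau):
    """Return delay vectors [x[t], x[t+tau], ..., x[t+(dim-1)*tau]]."""
    span = (dim - 1) * tau  # samples consumed by one delay vector
    return [
        [series[t + k * tau] for k in range(dim)]
        for t in range(len(series) - span)
    ]


vectors = delay_embed([0, 1, 2, 3, 4, 5], dim=3, tau=2)
print(vectors)  # [[0, 2, 4], [1, 3, 5]]
```

Each extra embedding dimension shortens the usable record by `tau` samples and spreads the remaining points over a larger space, which is one concrete way the data requirement mentioned above shows up.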

Vector-Field Reconstruction (VFR) techniques estimate the local dynamics of a system by approximating the vector field from observed data points. The accuracy of VFR is highly sensitive to data quality and density; sparse data leads to increased uncertainty in the reconstructed vector field, while noise introduces errors in the estimated flow direction and magnitude. In chaotic systems, the inherent sensitivity to initial conditions exacerbates these issues, as even small errors in the reconstructed vector field can lead to significant divergence between the reconstructed trajectory and the true system dynamics. Consequently, VFR-based forecasts are typically limited to short timescales and require substantial, high-quality data to maintain reasonable accuracy, a condition rarely met in many practical applications.

Rewriting the Rules: Data-Driven Equation Discovery

Data-Driven Equation Discovery (DDED) represents a shift in modeling techniques by directly inferring mathematical equations from observed data without relying on pre-existing assumptions about the underlying system. Traditional methods require researchers to formulate a model structure based on domain expertise; DDED circumvents this by employing algorithms to search the space of possible mathematical expressions and identify those that accurately describe the data. This is achieved through techniques like symbolic regression, where the algorithm aims to find a function $f(x)$ that minimizes the error between predicted and observed values, represented as $\min_f \sum_{i=1}^{n} (y_i - f(x_i))^2$. The resulting equation provides an explicit, interpretable representation of the governing dynamics, potentially revealing fundamental relationships previously unknown or difficult to discern.
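To make the squared-error objective concrete, the sketch below fits a one-step map to a chaotic series by ordinary least squares over a fixed library of terms (1, x, x²). This is a deliberately simpler stand-in for symbolic regression, not the method benchmarked in the paper: the candidate terms are fixed in advance, the training data come from a logistic map with r = 3.9 (an assumption), and the helper names are hypothetical.

```python
def solve_linear(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):  # back substitution
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def fit_quadratic_map(series):
    """Least-squares fit of x[t+1] = c0 + c1 * x[t] + c2 * x[t]**2."""
    rows = [[1.0, x, x * x] for x in series[:-1]]
    targets = series[1:]
    # Normal equations: (A^T A) c = A^T y
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    aty = [sum(r[i] * y for r, y in zip(rows, targets)) for i in range(3)]
    return solve_linear(ata, aty)


# Synthetic training data from a known map, so the answer can be checked.
series = [0.2]
for _ in range(200):
    series.append(3.9 * series[-1] * (1.0 - series[-1]))

coeffs = fit_quadratic_map(series)
print(coeffs)  # close to [0, 3.9, -3.9]
```

On noise-free data the fit recovers the generating equation x[t+1] = 3.9·x[t] − 3.9·x[t]² up to rounding. Symbolic regression addresses the harder case where the set of terms is not known beforehand.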

Neural-Symbolic Regression (NSR) integrates the strengths of both neural networks and symbolic regression to improve equation discovery. Neural networks excel at learning complex, non-linear relationships from data, providing a robust feature extraction mechanism. Symbolic regression then leverages these learned features to identify explicit mathematical expressions – such as $y = ax^2 + bx + c$ – that accurately model the underlying system. This combination addresses limitations of traditional symbolic regression which can struggle with high-dimensional or noisy data, and avoids the “black box” nature of neural networks by providing interpretable symbolic equations as output. The process typically involves the neural network transforming raw data into a lower-dimensional representation, which is then used as input for the symbolic regression algorithm, enabling the discovery of governing equations from observational data.

Symbolic Regression (SR) operates by systematically exploring a space of mathematical expressions to identify the model that best describes a given dataset. This is achieved through techniques like genetic algorithms or evolutionary strategies, which iteratively refine candidate equations based on their accuracy in predicting observed data. The process involves defining a set of mathematical operators (e.g., addition, subtraction, multiplication, division, trigonometric functions) and constants, then combining these to generate numerous potential equations. A fitness function, typically measuring the error between the equation’s predictions and the actual data, is used to evaluate each candidate. Equations with lower error are retained and combined, mutated, or otherwise modified to create new candidates in subsequent generations, effectively searching for the optimal f(x) that minimizes the discrepancy between predicted and observed values. SR differs from traditional regression methods by not requiring a pre-defined functional form, allowing it to discover equations directly from data.
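The search over expression trees can be sketched in a few lines. To keep the result deterministic, the example below replaces the genetic or evolutionary search described above with brute-force enumeration of all trees up to depth 2; the operator set, the terminals, and the tie-break on tree size are illustrative assumptions, and the function names are hypothetical.

```python
import itertools

OPS = {
    "+": lambda a, b: a + b,
    "-": lambda a, b: a - b,
    "*": lambda a, b: a * b,
}
TERMINALS = ["x", 1.0]


def evaluate(expr, x):
    """Evaluate a tree: 'x', a float constant, or (op, left, right)."""
    if isinstance(expr, tuple):
        op, left, right = expr
        return OPS[op](evaluate(left, x), evaluate(right, x))
    return x if expr == "x" else expr


def size(expr):
    """Number of nodes in the tree, used as a simplicity measure."""
    if isinstance(expr, tuple):
        return 1 + size(expr[1]) + size(expr[2])
    return 1


def enumerate_trees(depth):
    """All expression trees whose depth is at most `depth`."""
    if depth == 0:
        return list(TERMINALS)
    smaller = enumerate_trees(depth - 1)
    trees = list(TERMINALS)
    for op, left, right in itertools.product(OPS, smaller, smaller):
        trees.append((op, left, right))
    return trees


def fitness(expr, xs, ys):
    """Sum of squared errors between the tree's output and the data."""
    return sum((y - evaluate(expr, x)) ** 2 for x, y in zip(xs, ys))


def search(xs, ys, depth=2):
    """Pick the lowest-error tree, preferring smaller trees on ties."""
    return min(enumerate_trees(depth), key=lambda e: (fitness(e, xs, ys), size(e)))


xs = [i / 10 for i in range(11)]
ys = [x * (1.0 - x) for x in xs]  # hidden target the search must recover
best = search(xs, ys)
print(best, fitness(best, xs, ys))
```

Enumeration is only feasible for tiny search spaces: the number of trees grows roughly as the square of the previous depth's count, which is why practical systems fall back on evolutionary or neural-guided search.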

Reservoir Computing (RC) is a recurrent neural network technique particularly effective for short-term time series forecasting, especially within chaotic systems where long-term prediction is inherently limited. Unlike traditional recurrent networks trained with backpropagation, RC maintains a fixed, randomly connected recurrent layer – the “reservoir” – and only trains a simple linear readout. This fixed reservoir projects the input signal into a high-dimensional state space, allowing the linear readout to efficiently map reservoir states to desired outputs. The computational efficiency of training only the readout layer, coupled with the reservoir’s ability to capture temporal dependencies, makes RC a potentially valuable preprocessing or complementary technique for data-driven equation discovery, providing initial state estimates or assisting in validating discovered equations in chaotic regimes where standard methods struggle.
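A minimal echo-state-style sketch of this idea follows, in plain Python. Everything specific here is an assumption for illustration: the reservoir size, the row-sum scaling (a simple sufficient condition for fading memory, used instead of the more common spectral-radius scaling), the ridge penalty, the washout length, and the logistic-map training series.

```python
import math
import random


def make_reservoir(n, rng, row_sum=0.9):
    """Random recurrent weights; each row is rescaled so sum(|w|) = row_sum < 1."""
    w = [[rng.uniform(-1.0, 1.0) for _ in range(n)] for _ in range(n)]
    return [[row_sum * v / sum(abs(u) for u in row) for v in row] for row in w]


def run_reservoir(w, w_in, inputs):
    """Drive the fixed reservoir; return one [1, state...] feature row per input."""
    state = [0.0] * len(w)
    rows = []
    for u in inputs:
        state = [
            math.tanh(sum(wij * sj for wij, sj in zip(wi, state)) + win * u)
            for wi, win in zip(w, w_in)
        ]
        rows.append([1.0] + state)
    return rows


def solve_linear(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def train_readout(rows, targets, ridge=1e-4):
    """Ridge regression: the only trained part of the model."""
    k = len(rows[0])
    ata = [[sum(r[i] * r[j] for r in rows) + (ridge if i == j else 0.0)
            for j in range(k)] for i in range(k)]
    aty = [sum(r[i] * y for r, y in zip(rows, targets)) for i in range(k)]
    return solve_linear(ata, aty)


rng = random.Random(0)
n = 20
series = [0.2]
for _ in range(400):
    series.append(3.9 * series[-1] * (1.0 - series[-1]))

w = make_reservoir(n, rng)
w_in = [rng.uniform(-1.0, 1.0) for _ in range(n)]
washout = 50  # discard states still influenced by the zero initial state
rows = run_reservoir(w, w_in, series[:-1])[washout:]
targets = series[1:][washout:]
readout = train_readout(rows, targets)
preds = [sum(c * v for c, v in zip(readout, row)) for row in rows]
mse = sum((p - y) ** 2 for p, y in zip(preds, targets)) / len(targets)
print(mse)
```

Note that `w` and `w_in` are never updated: training reduces to a single linear solve for `readout`, which is the source of the efficiency described above.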

Beyond Prediction: Towards Robust and Interpretable Models

Traditional forecasting often relies on complex machine learning models – “black boxes” – that excel at prediction but offer little insight into why a system behaves as it does. A shift towards discovering the underlying governing equations offers a fundamentally different approach. Instead of simply mapping inputs to outputs, this method aims to uncover the fundamental laws that dictate a chaotic system’s evolution. By explicitly defining these relationships – perhaps as a system of differential equations – forecasts become more interpretable and, potentially, more robust. Because the model is an explicit equation rather than an opaque statistical mapping, it can be inspected and checked against known physical principles, and it may generalize better to unseen conditions and adapt to changes in the system’s dynamics. Forecasts derived from discovered equations remain subject to the predictability horizon that chaos imposes, but within that horizon they can be less susceptible to the errors that plague purely correlational methods, improving reliability and trust.

The capacity to uncover underlying dynamical laws extends far beyond theoretical advancement, promising tangible improvements across diverse critical systems. More accurate climate models, for instance, could better predict extreme weather events and long-term shifts, informing effective mitigation strategies. Similarly, a law-based approach to epidemic forecasting transcends simple curve-fitting, potentially revealing transmission mechanisms and allowing for targeted interventions. Beyond these areas, optimized resource management – encompassing everything from water distribution to energy grid stabilization – becomes achievable through predictive models grounded in fundamental principles, offering a pathway towards sustainable and resilient infrastructure. This shift from empirical prediction to mechanistic understanding represents a substantial leap in forecasting capability, enabling proactive responses and informed decision-making in a complex and rapidly changing world.

Beyond simply predicting future states, robust forecasting necessitates a clear understanding of prediction uncertainty. Conformal Prediction offers a powerful, distribution-free method to achieve this, generating prediction intervals that cover the true value with at least a specified probability. The guarantee requires no assumption about the shape of the underlying distribution, but it does assume the data are exchangeable; for time series, where that assumption generally fails, the coverage holds only approximately unless an adapted variant is used. This capability is particularly valuable in high-stakes scenarios, where risk assessment is paramount; for instance, in financial modeling or public health forecasting, knowing not just the likely outcome, but also the range of plausible outcomes, is critical for informed decision-making. By quantifying this uncertainty, Conformal Prediction turns point forecasts into interval statements with a stated coverage level, enabling more reliable planning, mitigating the consequences of unforeseen events, and fostering trust in predictive systems.
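The simplest variant, split conformal prediction, can be sketched as follows. The sketch assumes a held-out calibration set of forecast residuals that are exchangeable with future ones, which is a strong assumption for time series; the function names are hypothetical.

```python
import math


def conformal_quantile(residuals, alpha):
    """Finite-sample-corrected (1 - alpha) quantile of absolute residuals."""
    scores = sorted(abs(r) for r in residuals)
    n = len(scores)
    rank = math.ceil((n + 1) * (1.0 - alpha))  # 1-based rank into scores
    if rank > n:
        return math.inf  # too few calibration points for this coverage level
    return scores[rank - 1]


def prediction_interval(point_forecast, q):
    """Symmetric interval around a point forecast."""
    return (point_forecast - q, point_forecast + q)


calibration = [0.1, -0.2, 0.3, -0.4, 0.5, -0.6, 0.7, -0.8, 0.9, -1.0]
q = conformal_quantile(calibration, alpha=0.2)
print(q)                            # 0.9
print(prediction_interval(2.0, q))  # (1.1, 2.9)
```

With ten calibration residuals and a target coverage of 80%, the corrected rank is ceil(11 × 0.8) = 9, so the interval half-width is the ninth-smallest absolute residual. The point forecast itself can come from any model, including a discovered equation.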

Despite inherent limitations in predicting behavior over extended periods, deep recurrent networks offer a practical foundation for evaluating the efficacy of methods focused on discovering underlying governing equations. These networks excel at capturing short-term dynamics within complex systems, establishing a readily quantifiable baseline against which the performance of equation-based models can be directly compared. By measuring how well a discovered equation improves upon the recurrent network’s short-term predictive capabilities, researchers gain valuable insight into whether the equation truly reflects the system’s fundamental principles, or simply represents a statistical correlation. This comparative approach is crucial for validating the robustness and generalizability of equation discovery techniques, ensuring they move beyond simply mimicking observed data and towards capturing genuine mechanistic understanding.

Modeling chaotic time series, as detailed in the research, inherently demands that established predictive methods be challenged. It’s a process of dissecting complexity to reveal underlying principles – a pursuit beautifully captured by Edsger W. Dijkstra: “In order to gain understanding, one must strip away the superfluous.” The work showcases how symbolic machine learning attempts precisely this – stripping away the ‘black box’ of traditional forecasting to arrive at interpretable, algebraic equations. By focusing on expression-tree methods and neural-symbolic models, the research seeks not merely accurate predictions but a deeper comprehension of the dynamical systems themselves, acknowledging that true understanding arises from deconstruction and elegant simplification.

Beyond Prediction: Deconstructing the Dynamics

The pursuit of accurate forecasting, while valuable, often treats the underlying system as a black box. This work suggests a productive disruption: turning the box transparent, even if the view isn’t entirely comfortable. Achieving interpretable models for chaotic time series isn’t merely about predicting the next data point; it’s about reverse-engineering the rules governing the system itself. The benchmarks presented demonstrate a capacity to describe chaos, but description is only the first step towards control or, perhaps more accurately, informed interaction.

Limitations remain, naturally. Current symbolic regression techniques still grapple with the curse of dimensionality and the inherent noise in real-world data. The true challenge isn’t simply finding an equation that fits, but determining which of many possible equations best reflects the generative process. Future work should focus on developing methods that actively test discovered equations, not just validate them against existing data. One could envision a system that proposes hypotheses about the underlying dynamics and then designs experiments to disprove them, iteratively refining its understanding.

Ultimately, this field isn’t about building better predictors; it’s about building better tools for scientific inquiry. The ability to extract algebraic equations from time series data represents a shift in perspective, from observing behavior to dissecting mechanisms. The next frontier lies in automating that dissection, turning the art of equation discovery into a rigorous, repeatable process.

Original article: https://arxiv.org/pdf/2603.07261.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-03-10 11:23