Author: Denis Avetisyan

As cyberattacks become increasingly sophisticated, machine learning-based intrusion detection systems are facing a new wave of threats from adversarial examples.

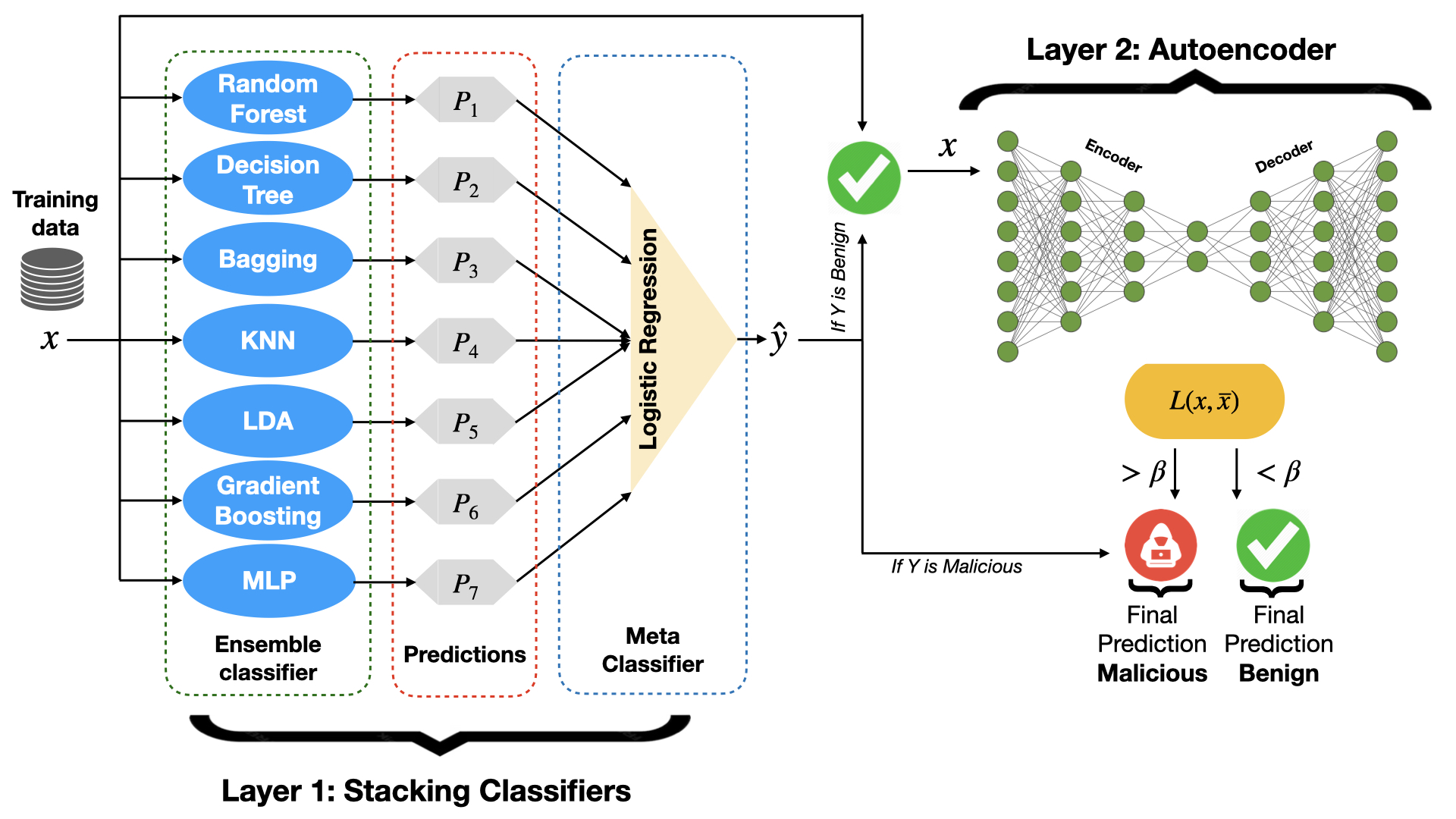

A multi-layer ensemble approach combining stacking classifiers, autoencoders, and adversarial training enhances the robustness of intrusion detection systems against fast gradient sign method and generative adversarial network attacks.

While machine learning has become integral to network security, its susceptibility to adversarial examples poses a critical threat to intrusion detection systems. This vulnerability is addressed in ‘Enhancing Network Intrusion Detection Systems: A Multi-Layer Ensemble Approach to Mitigate Adversarial Attacks’, which proposes a novel defense mechanism combining stacking classifiers and autoencoders, fortified by adversarial training. Experimental results on the UNSW-NB15 and NSL-KDD datasets demonstrate significant improvements in resilience against maliciously crafted network traffic. Could this multi-layered ensemble approach represent a substantial step towards more robust and reliable network security in an increasingly hostile digital landscape?

The Evolving Threat Landscape of Network Security

Network Intrusion Detection Systems (NIDS) form a critical defensive layer for modern digital infrastructure, constantly monitoring network traffic for malicious activity. These systems, however, are facing escalating vulnerabilities as attack methodologies become increasingly sophisticated. Originally designed to identify known threats through pre-defined signatures, NIDS are now frequently circumvented by zero-day exploits and polymorphic malware – attacks that alter their characteristics to evade detection. The sheer volume of network data, coupled with the speed at which threats evolve, overwhelms traditional signature-based approaches, necessitating more dynamic and intelligent security measures. Consequently, while NIDS remain essential, their effectiveness is waning, demanding a constant reassessment of security protocols and a shift towards proactive threat hunting strategies to maintain robust network defenses.

Signature-based intrusion detection identifies threats by matching traffic against pre-defined patterns of malicious activity, which is why it falters against attacks it has never seen. The field is therefore shifting towards machine learning-based NIDS, which learn a model of normal network behavior and flag anomalies that may indicate a threat. These systems look for subtle deviations from established baselines, offering a defense against previously unseen attack vectors. The promise lies in their ability to generalize beyond known signatures and to adapt as cyber threats change.

Machine learning-based network intrusion detection systems, while promising enhanced security, present a paradoxical vulnerability to what are known as adversarial attacks. These attacks don’t attempt to breach system defenses directly, but instead manipulate the input data in subtle, often imperceptible ways. By crafting malicious network traffic with carefully engineered perturbations, an attacker can effectively “fool” the machine learning model into misclassifying the threat – labeling a harmful intrusion as benign, or vice versa. This isn’t a flaw in the algorithms themselves, but a consequence of their reliance on pattern recognition; the altered data remains technically valid, yet deviates just enough to bypass the model’s defenses. Consequently, a sophisticated attacker can evade detection while maintaining the functionality of the malicious traffic, creating a significant security loophole that traditional signature-based systems, ironically, might have flagged.

Ensemble Learning: A Synthesis of Defensive Strategies

Stacking, a meta-learning technique, aims to improve Network Intrusion Detection System (NIDS) performance by combining the predictions of multiple base classifiers. Rather than relying on a single model, stacking trains a meta-classifier, typically a Logistic Regression model, to learn how to weight the predictions of diverse base learners. These base learners, such as Random Forest, K-Nearest Neighbors, Decision Trees, and Logistic Regression, are trained on the same dataset, and their outputs become the input features for the meta-classifier. This approach reduces the risk of overfitting to specific data patterns and can increase resilience against adversarial attacks, since a perturbation has to mislead several different models at once. A stacked model of this kind is expected to match or exceed the accuracy of its individual classifiers, although the size of the gain depends on how diverse the base learners are.

In this design, the base classifiers are Random Forest, K-Nearest Neighbors, Decision Tree, and Logistic Regression. Each is trained on the dataset, and their individual outputs become the input features for a meta-learner, typically a simpler model such as Logistic Regression. The meta-learner is trained to combine the base predictions, effectively learning which classifiers perform best under different conditions. The diversity among the base classifiers, achieved through differing algorithms and potentially varied feature subsets, reduces the risk of systematic errors and contributes to better generalization and prediction accuracy than a single classifier provides.
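The stacking arrangement described above can be sketched with scikit-learn's `StackingClassifier`. This is a minimal illustration, not the paper's implementation: the synthetic data from `make_classification` stands in for NSL-KDD or UNSW-NB15 features, and all hyperparameters (tree depth, number of neighbors, five-fold cross-validation) are assumptions chosen for brevity.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier, StackingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier

# Synthetic binary data standing in for preprocessed traffic features (assumption).
X, y = make_classification(n_samples=600, n_features=20, n_informative=8,
                           random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)

# The four base learners named in the article; hyperparameters are illustrative.
base_learners = [
    ("rf", RandomForestClassifier(n_estimators=50, random_state=0)),
    ("knn", KNeighborsClassifier(n_neighbors=5)),
    ("dt", DecisionTreeClassifier(max_depth=8, random_state=0)),
    ("lr", LogisticRegression(max_iter=1000)),
]

# Logistic Regression meta-learner fed with the base learners' class probabilities.
stack = StackingClassifier(
    estimators=base_learners,
    final_estimator=LogisticRegression(max_iter=1000),
    stack_method="predict_proba",
    cv=5,
)
stack.fit(X_train, y_train)
test_accuracy = stack.score(X_test, y_test)
print(f"stacked accuracy on held-out data: {test_accuracy:.3f}")
```

One design detail worth noting: with `cv=5`, scikit-learn trains the meta-learner on out-of-fold predictions of the base learners, so the meta-learner does not see predictions made on samples the base models were fitted on.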

The effectiveness of ensemble learning in network intrusion detection systems (NIDS) is directly correlated to the heterogeneity of its component classifiers; by integrating algorithms with differing strengths and weaknesses – such as Random Forest’s handling of high-dimensional data, K-Nearest Neighbors’ sensitivity to local patterns, Decision Tree’s interpretability, and Logistic Regression’s efficiency – the system mitigates the risk of consistent errors stemming from any single model. This diversification ensures that misclassifications by one classifier are less likely to be replicated across the ensemble, resulting in a more stable and reliable detection rate, particularly against novel or obfuscated attack vectors. The combined output therefore provides a more robust defense than any individual classifier could achieve in isolation.

Simulating Attacks: Probing Systemic Weaknesses

Adversarial attack techniques are used to systematically assess the resilience of the Network Intrusion Detection System (NIDS). These techniques generate malicious network traffic with subtle, intentionally crafted perturbations designed to evade detection by the machine learning models within the NIDS. The Fast Gradient Sign Method (FGSM) is one such technique. It computes the gradient of the loss function with respect to the input features and then shifts every feature by a small, fixed step in the direction of that gradient's sign, which is the direction that increases the loss. The resulting adversarial examples are likely to be misclassified by the NIDS, revealing weaknesses in its detection capabilities. This process allows potential exploits to be identified proactively and informs strategies for strengthening the NIDS against real-world attacks.
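FGSM is usually written as `x_adv = x + eps * sign(grad_x J(theta, x, y))`. The sketch below applies it to a logistic-regression detector, where the gradient of the binary cross-entropy loss with respect to the input has the closed form `(p - y) * w`. The weights, the sample, the step size `eps`, and the assumption that features are min-max scaled to `[0, 1]` are all hypothetical and chosen only to make the arithmetic easy to follow.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def fgsm(x, y, w, b, eps, lo=0.0, hi=1.0):
    """FGSM against a logistic-regression model p = sigmoid(x @ w + b).

    x: (n, d) inputs, y: (n,) labels in {0, 1}, eps: step size.
    For binary cross-entropy, dLoss/dx = (p - y) * w.
    The result is clipped to [lo, hi], assuming min-max scaled features.
    """
    p = sigmoid(x @ w + b)
    grad_x = (p - y)[:, None] * w[None, :]
    return np.clip(x + eps * np.sign(grad_x), lo, hi)

# Hypothetical detector and one "attack" sample (label 1 = malicious).
w = np.array([1.0, -2.0])
b = 0.0
x = np.array([[1.0, 0.0]])
y = np.array([1.0])

x_adv = fgsm(x, y, w, b, eps=0.5)
p_clean = sigmoid(x @ w + b)[0]    # above 0.5: flagged as malicious
p_adv = sigmoid(x_adv @ w + b)[0]  # below 0.5: now scored as benign
print(x_adv, p_clean, p_adv)
```

In a real NIDS setting, not every feature can be changed freely without breaking the traffic it describes, so a practical attack would typically restrict the perturbation to features the attacker controls; this sketch ignores that constraint.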

Adversarial attack methods function by introducing minimal, intentionally crafted perturbations to input data intended for machine learning models. These perturbations, often imperceptible to human observation, are calculated to maximize the probability of misclassification by the target model. The resulting perturbed inputs, while semantically similar to legitimate data, exploit vulnerabilities in the model’s decision boundaries. By analyzing the model’s performance on these subtly altered inputs, security researchers can identify weaknesses and areas for improvement in the model’s robustness and generalization capabilities, ultimately leading to a more resilient intrusion detection system.

Evaluation of the proposed two-layer Network Intrusion Detection System (NIDS) utilized the NSL-KDD and UNSW-NB15 datasets to assess performance under both standard and adversarial conditions. Results indicate a detection rate of approximately 92% on NSL-KDD and 99% on UNSW-NB15 when evaluating unmodified network traffic. Under attack conditions, specifically those generated using Generative Adversarial Networks (GANs), the system achieved F1-Scores of 84.23% on NSL-KDD and 91.24% on UNSW-NB15, demonstrating continued functionality and resilience despite adversarial input.

Proactive Defense: Harnessing the Power of Generative Models

Generative Adversarial Networks (GANs) offer a way to strengthen network intrusion detection systems by expanding the training data. These networks operate through a competitive process: a generator creates synthetic examples of network traffic, including malicious samples designed to evade detection, while a discriminator attempts to distinguish real data from generated data. Training the intrusion detection system on these generated samples, a form of adversarial training, pushes it to learn more robust features and to generalize better to previously unseen attacks. By supplementing traditional training datasets with GAN-generated adversarial examples, the system becomes more resilient to subtle variations in attack patterns and, potentially, to zero-day exploits, improving its ability to identify and mitigate threats. The technique moves beyond relying solely on known attack signatures, fostering a more adaptable security posture.
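The generator and discriminator loop can be illustrated with a deliberately tiny GAN written in NumPy. Everything here is a toy assumption: the "real traffic" is a 2-D Gaussian, the generator is a single linear map, and the discriminator is a logistic regression, so the sketch shows the alternating updates rather than a model capable of producing realistic traffic. The generator uses the common non-saturating loss.

```python
import numpy as np

rng = np.random.default_rng(0)

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def sample_real(n):
    # Hypothetical stand-in for real traffic features.
    return rng.normal(loc=[2.0, -1.0], scale=0.5, size=(n, 2))

latent_dim, data_dim = 2, 2
Wg = rng.normal(scale=0.1, size=(latent_dim, data_dim))  # generator weights
bg = np.zeros(data_dim)                                  # generator bias
wd = rng.normal(scale=0.1, size=data_dim)                # discriminator weights
cd = 0.0                                                 # discriminator bias

def generate(n):
    z = rng.normal(size=(n, latent_dim))
    return z @ Wg + bg

lr, batch = 0.05, 64
for step in range(500):
    # Discriminator step: minimize -log D(real) - log(1 - D(fake)).
    x_real, x_fake = sample_real(batch), generate(batch)
    d_real = sigmoid(x_real @ wd + cd)
    d_fake = sigmoid(x_fake @ wd + cd)
    grad_wd = ((d_real - 1)[:, None] * x_real).mean(axis=0) \
        + (d_fake[:, None] * x_fake).mean(axis=0)
    grad_cd = (d_real - 1).mean() + d_fake.mean()
    wd -= lr * grad_wd
    cd -= lr * grad_cd

    # Generator step: minimize -log D(G(z)) (non-saturating loss).
    z = rng.normal(size=(batch, latent_dim))
    x_fake = z @ Wg + bg
    d_fake = sigmoid(x_fake @ wd + cd)
    grad_x = (d_fake - 1)[:, None] * wd[None, :]  # dLoss/dx_fake per sample
    Wg -= lr * (z.T @ grad_x) / batch
    bg -= lr * grad_x.mean(axis=0)

# Synthetic samples that could be appended to a detector's training set.
synthetic = generate(5)
print(synthetic)
```

A linear discriminator can only react to differences in the mean of the two distributions, and simple GANs like this one tend to oscillate rather than converge, so the output should be read as a demonstration of the training loop only.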

Network Intrusion Detection Systems (NIDS) often struggle to generalize when confronted with novel attacks, a limitation that Generative Adversarial Networks (GANs) can help address. GANs support NIDS training by generating synthetic yet realistic attack samples that expand the diversity of the training dataset. The expanded dataset does more than increase the quantity of training data; it improves quality by exposing the NIDS to a broader spectrum of potential threats, including subtle variations and previously unseen attack vectors. Consequently, the NIDS can learn to discern malicious activity with greater accuracy, reducing false positives and, more importantly, improving its ability to identify zero-day exploits, meaning attacks that have not been previously cataloged or defended against. This proactive approach fosters a more resilient security posture, allowing the NIDS to adapt and maintain high performance as the threat landscape evolves.

Network intrusion detection systems can move beyond static defenses by incorporating generative adversarial networks within a voting classifier framework, creating an adaptive security posture. This approach leverages the GAN’s ability to continuously generate novel attack patterns, which are then presented to the voting classifier – an ensemble of diverse detection models. As the GAN evolves its attack simulations, the voting classifier dynamically adjusts its weighting of individual models, prioritizing those best equipped to handle the new threats. This feedback loop enables the system to learn and refine its defenses in real-time, effectively ‘chasing’ emerging attack vectors and bolstering resilience against zero-day exploits. The result is a security system that doesn’t simply react to threats, but proactively anticipates and neutralizes them, enhancing long-term protection against an ever-changing landscape of cyberattacks.
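One way to picture the reweighting loop is a soft-voting ensemble whose weights are recomputed from each model's accuracy on the most recent batch of samples. The article does not specify the weighting rule, so the accuracy-proportional scheme below is an assumption, as are the helper names `reweight` and `weighted_vote`; held-out samples with added noise stand in for freshly generated attack variants.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier

X, y = make_classification(n_samples=600, n_features=20, n_informative=8,
                           random_state=0)
X_train, X_new, y_train, y_new = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

# Stand-in for newly generated attack variants: held-out samples plus noise.
rng = np.random.default_rng(0)
X_new = X_new + rng.normal(scale=0.5, size=X_new.shape)

models = [
    LogisticRegression(max_iter=1000),
    DecisionTreeClassifier(max_depth=8, random_state=0),
    KNeighborsClassifier(n_neighbors=5),
]
for model in models:
    model.fit(X_train, y_train)

def reweight(models, X_batch, y_batch):
    """Hypothetical rule: weight each model by its accuracy on the batch."""
    acc = np.array([m.score(X_batch, y_batch) for m in models])
    return acc / acc.sum()

def weighted_vote(models, weights, X_batch):
    """Soft voting: weighted average of class probabilities, then argmax."""
    probas = np.stack([m.predict_proba(X_batch) for m in models])
    avg = np.average(probas, axis=0, weights=weights)
    return models[0].classes_[avg.argmax(axis=1)]

weights = reweight(models, X_new, y_new)
y_pred = weighted_vote(models, weights, X_new)
print("weights:", np.round(weights, 3))
```

In a deployed loop, weights computed on one batch would be applied to the next batch rather than to the same samples, since scoring on the batch used for reweighting gives an optimistic picture.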

Anomaly Detection: Establishing a Baseline of Normalcy

Autoencoders represent a significant advancement in network anomaly detection through their ability to model and understand typical network behavior. These neural networks are trained on data representing normal network traffic, learning to compress this data into a lower-dimensional ‘latent space’ and then reconstruct it. This process forces the autoencoder to identify the most crucial features of normal traffic; when presented with anomalous data – something deviating from the learned patterns – the reconstruction error dramatically increases. This error serves as a strong indicator of potential threats, allowing for real-time flagging of unusual activity. Essentially, the system learns what is ‘normal’ and identifies anything that doesn’t fit as potentially malicious, offering a proactive approach to cybersecurity beyond simple signature-based detection.

Autoencoders function as vigilant monitors within network traffic, establishing a baseline of ‘normal’ activity through a learned, compressed data representation. When network behavior deviates from this established norm – a sudden spike in traffic, an unusual data packet structure, or an unexpected communication pattern – the autoencoder signals a potential anomaly. This flagging process happens in real-time, enabling immediate investigation and mitigation of potentially malicious activities. Rather than relying on pre-defined signatures of known threats, this deviation-based approach allows the system to identify novel attacks and zero-day exploits that would otherwise bypass traditional security measures, providing a crucial layer of proactive defense.
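Reconstruction-error detection can be sketched by training a small network to reproduce its own input. The example uses scikit-learn's `MLPRegressor` as a stand-in autoencoder, fitted with the input as its own target; the synthetic "normal" traffic, the three-unit bottleneck, and the 95th-percentile threshold are assumptions made for illustration and are not taken from the paper.

```python
import numpy as np
from sklearn.neural_network import MLPRegressor

rng = np.random.default_rng(0)

# Hypothetical data: normal traffic lies near a 3-D subspace of 10 features,
# while anomalous traffic is spread across all 10 dimensions.
mixing = rng.normal(size=(3, 10))
X_normal = rng.normal(size=(500, 3)) @ mixing + 0.05 * rng.normal(size=(500, 10))
X_attack = rng.normal(scale=3.0, size=(50, 10))

# Standardize with statistics from normal traffic only.
mean, std = X_normal.mean(axis=0), X_normal.std(axis=0)

def scale(X):
    return (X - mean) / std

# A 10 -> 3 -> 10 network trained to reconstruct its input.
autoencoder = MLPRegressor(hidden_layer_sizes=(3,), activation="tanh",
                           max_iter=2000, random_state=0)
autoencoder.fit(scale(X_normal), scale(X_normal))

def reconstruction_error(X):
    Xs = scale(X)
    return np.mean((autoencoder.predict(Xs) - Xs) ** 2, axis=1)

# Flag anything whose error exceeds the 95th percentile seen on normal traffic.
threshold = np.percentile(reconstruction_error(X_normal), 95)
flagged = reconstruction_error(X_attack) > threshold
print(f"threshold={threshold:.4f}, anomalies flagged={flagged.mean():.0%}")
```

The threshold controls the trade-off directly: a 95th-percentile cut-off accepts roughly a 5% false-alarm rate on normal training traffic in exchange for higher sensitivity to deviations.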

A layered security architecture, integrating autoencoders with ensemble and generative defenses, offers substantial improvements in network intrusion detection capabilities. Traditional methods, such as Decision Tree classifiers, demonstrate significant performance degradation – a 13.92% drop in F1-Score on the NSL-KDD dataset and 11.58% on UNSW-NB15 – when subjected to adversarial attacks from Generative Adversarial Networks (GANs). However, this research demonstrates that a two-layer model effectively mitigates these losses, achieving high levels of both precision (88.42% NSL-KDD, 92.15% UNSW-NB15) and recall (86.29% NSL-KDD, 90.97% UNSW-NB15). This suggests that combining anomaly detection with robust defensive strategies is crucial for building resilient cybersecurity systems capable of safeguarding networks against increasingly sophisticated threats.

The pursuit of resilient intrusion detection systems, as detailed in the study, demands a foundation built upon demonstrable correctness. The paper rightly focuses on defending against adversarial attacks, acknowledging the fragility of machine learning models when confronted with intentionally crafted inputs. This echoes a sentiment attributed to Bertrand Russell: “The point of logic is to avoid thinking.” While seemingly paradoxical, the remark highlights the necessity of defined reasoning; in this context, a precisely defined defense, the multi-layer ensemble, is crucial. Without a rigorous, mathematically sound approach to security, any system, no matter how empirically successful, remains fundamentally vulnerable, merely reacting to threats instead of logically preempting them. The ensemble approach, utilizing autoencoders and adversarial training, strives for this logical foundation.

What Lies Ahead?

The presented work, while demonstrating a measurable improvement in resilience against crafted perturbations, merely addresses a symptom. The fundamental fragility stems from the reliance on correlative, rather than causative, features within the intrusion detection paradigm. A system predicated on statistical association will always be susceptible to manipulation, however sophisticated the ensemble. The asymptotic limit of adversarial attack mitigation, using these methods, is not perfect security, but an escalating arms race with diminishing returns.

Future investigation must move beyond feature engineering and embrace formal methods. The construction of intrusion detection systems as provably secure state machines, capable of verifying network behavior against a defined specification, offers a path toward true robustness. This necessitates a shift in focus from machine learning’s inductive approach to deductive reasoning, a conceptually more demanding, but ultimately more satisfying, endeavor. The current reliance on empirical validation, while pragmatic, is mathematically unsatisfying.

Furthermore, the implicit assumption of a stationary adversary requires re-evaluation. An adaptive attacker, capable of learning and evolving its strategies, will inevitably circumvent defenses designed against fixed threat models. Research should prioritize game-theoretic approaches, where the detection system and attacker engage in a continuous optimization process, forcing a Nash equilibrium that maximizes security. Only through such a formalization can a genuinely robust and lasting defense be achieved.

Original article: https://arxiv.org/pdf/2603.10413.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-03-13 05:08