Author: Denis Avetisyan

A new framework combines explainable AI with strategic data handling to significantly improve the accuracy and transparency of cybersecurity threat detection.

Researchers demonstrate state-of-the-art performance on the CIC-IDS2017 dataset by integrating SHAP interpretability, stratified sampling, and robust feature selection techniques.

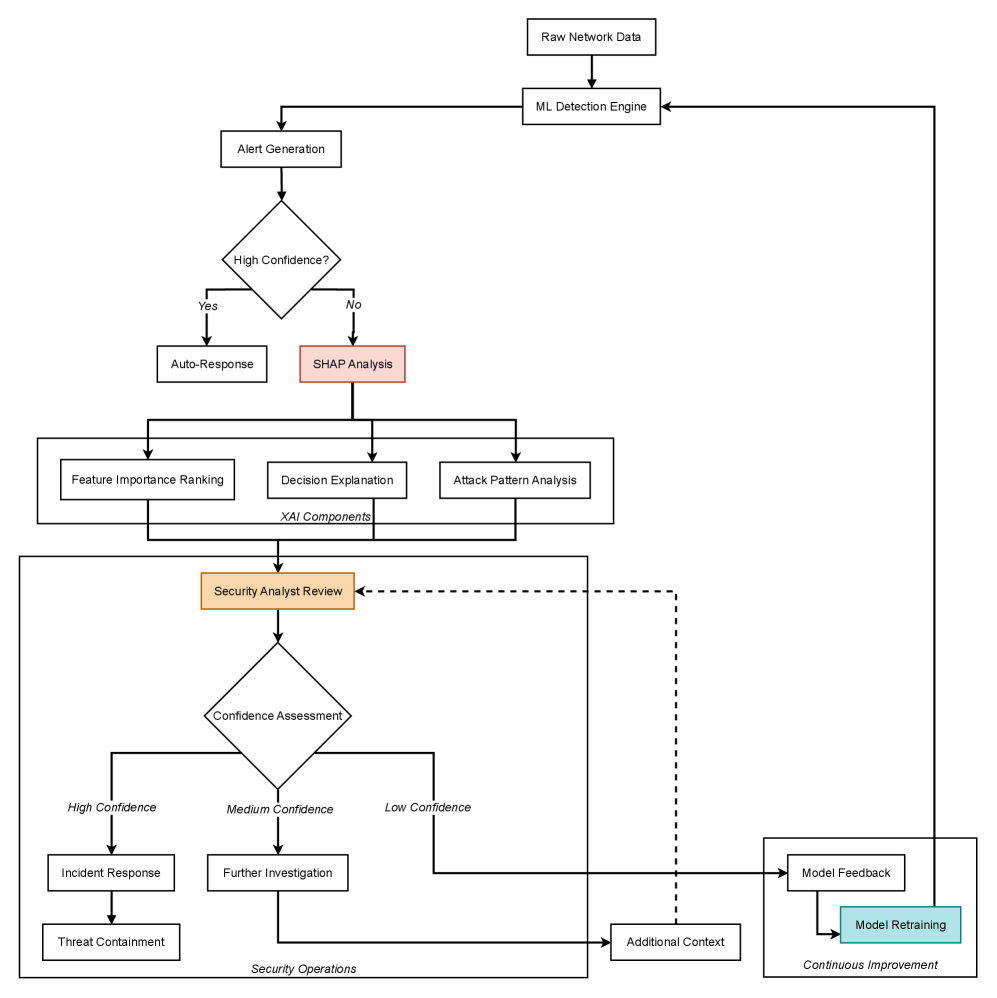

Despite increasing reliance on machine learning for cybersecurity, a lack of transparency and computational efficiency often hinders practical deployment. This gap is addressed in ‘Detecting Cybersecurity Threats by Integrating Explainable AI with SHAP Interpretability and Strategic Data Sampling’, which introduces a novel framework for building trustworthy threat detection systems. By combining strategic data sampling, automated data leakage prevention, and model-agnostic explanations via SHAP analysis, the approach maintains high detection efficacy while reducing computational burden and fostering analyst understanding. Can this integrated methodology provide a scalable path toward fully explainable and robust AI-driven security operations centers?

The Inherent Limitations of Signature-Based Defenses

Conventional intrusion detection systems, built upon pre-defined attack signatures and known malicious patterns, are increasingly challenged by the rapid evolution of cyber threats. These systems struggle significantly when confronted with zero-day exploits – attacks that leverage previously unknown vulnerabilities before a patch or signature is available. The inherent limitation lies in their reactive nature; they can only identify threats that have already been cataloged. Consequently, sophisticated attackers frequently bypass these defenses by crafting novel attacks, polymorphic malware, or employing evasion techniques that disguise malicious activity as legitimate network traffic. This creates a critical gap in security, demanding a shift towards proactive, behavior-based detection methods capable of identifying anomalous activity, regardless of whether a known signature exists. The increasing complexity of modern network environments further exacerbates this issue, providing attackers with more opportunities to exploit vulnerabilities and remain undetected.

Modern networks generate an astonishing amount of data, a deluge that increasingly challenges traditional cybersecurity systems. These systems often rely on signature-based detection, where known malicious patterns are identified to flag threats. However, the sheer volume of legitimate and malicious traffic overwhelms these approaches, causing them to generate a high number of false positives – incorrectly identifying harmless activity as malicious. This constant stream of alerts desensitizes security teams, burying genuine threats within a mountain of noise and significantly hindering their ability to respond effectively to real attacks. Consequently, organizations are compelled to invest heavily in alert triage and analysis, diverting resources from proactive threat hunting and preventative measures.

Conventional cybersecurity often centers on identifying known malicious patterns – static features like specific IP addresses or file hashes. However, this approach increasingly falters as adversaries skillfully adapt their techniques to evade detection. Modern attacks are characterized by polymorphism – constantly shifting code, encryption, and network behaviors – rendering fixed signatures ineffective. The dynamic nature of network traffic, influenced by legitimate user activity and rapidly changing application protocols, further obscures malicious intent. Consequently, systems reliant on static analysis struggle to differentiate between normal fluctuations and genuine threats, creating vulnerabilities that sophisticated attackers exploit by mimicking legitimate traffic patterns and continuously innovating their methods.

Leveraging Ensemble Learning for Robust Threat Detection

Ensemble learning techniques enhance detection accuracy by strategically combining the predictions of multiple individual machine learning models. This approach leverages the diversity of algorithms – such as decision trees, support vector machines, and neural networks – to compensate for the limitations inherent in any single model. By aggregating the outputs – through methods like bagging, boosting, and stacking – ensemble methods reduce both bias and variance, leading to more robust and generalized performance. The core principle is that the collective intelligence of multiple models often surpasses that of a single, complex model, particularly when those models exhibit differing strengths and weaknesses in identifying malicious activity.
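As a rough illustration of aggregating several models, the sketch below uses hard majority voting, the simplest form of aggregation (it is not bagging, boosting, or stacking itself, and it is not the paper's implementation). The three rule functions, their thresholds, and the flow field names are hypothetical stand-ins for trained classifiers.

```python
from collections import Counter

def majority_vote(predictions):
    """Return the most common label; ties go to the earliest-listed model."""
    counts = Counter(predictions)
    top = max(counts.values())
    return next(label for label in predictions if counts[label] == top)

# Hypothetical base "models": simple rules standing in for trained classifiers.
def rule_packet_rate(flow):
    return "attack" if flow["packets_per_s"] > 1000 else "benign"

def rule_syn_ratio(flow):
    return "attack" if flow["syn_ratio"] > 0.8 else "benign"

def rule_duration(flow):
    return "attack" if flow["duration_s"] < 0.01 else "benign"

def ensemble_predict(models, flow):
    """Ask every model for a label and combine the answers by majority vote."""
    return majority_vote([model(flow) for model in models])

MODELS = [rule_packet_rate, rule_syn_ratio, rule_duration]
sample_flow = {"packets_per_s": 5000, "syn_ratio": 0.95, "duration_s": 2.0}
print(ensemble_predict(MODELS, sample_flow))  # attack (two of three rules agree)
```

Here the duration rule votes "benign" but is outvoted, which is the sense in which one model's blind spot can be covered by the others.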

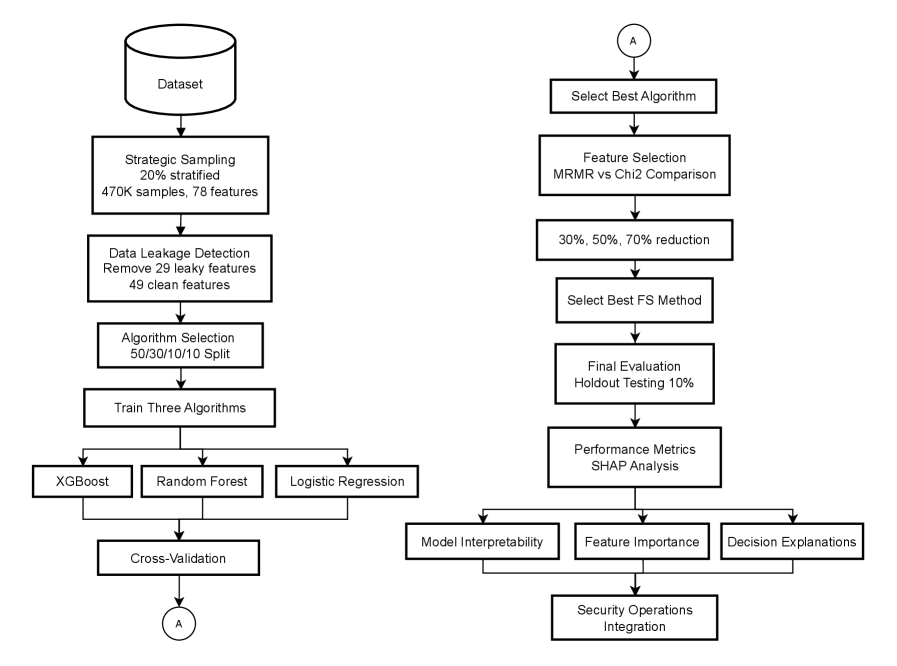

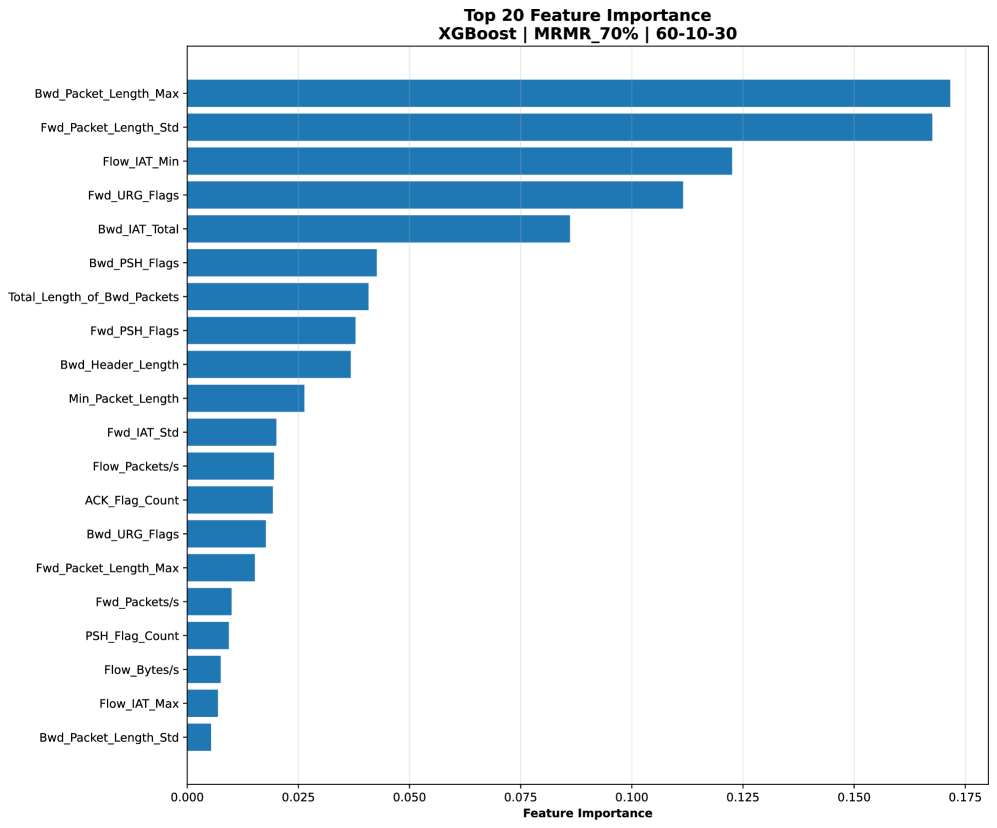

Feature selection is a critical step in building effective machine learning models for threat detection, as it identifies the most pertinent indicators of malicious activity while discarding irrelevant or redundant data. Techniques such as Chi2 and Maximum Relevance Minimum Redundancy (MRMR) are employed to assess feature importance and reduce dimensionality. Implementation of the MRMR method in this system resulted in a 30.6% reduction in the total number of features utilized, demonstrably improving model performance by decreasing computational load and minimizing the impact of noise inherent in less relevant data points. This streamlined feature set allows for faster training, reduced overfitting, and improved generalization to unseen threats.
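The greedy idea behind relevance-versus-redundancy selection can be sketched as follows. This is a simplified illustration, not the paper's procedure: it assumes absolute Pearson correlation as a proxy for both relevance and redundancy (mutual information is the more common choice), and the feature names and toy values are hypothetical.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)

def mrmr_select(features, target, k):
    """Greedily pick k features maximizing relevance minus mean redundancy."""
    relevance = {name: abs(pearson(col, target)) for name, col in features.items()}
    selected, remaining = [], sorted(features)
    while remaining and len(selected) < k:
        def score(name):
            if not selected:
                return relevance[name]
            redundancy = sum(
                abs(pearson(features[name], features[s])) for s in selected
            ) / len(selected)
            return relevance[name] - redundancy
        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return selected

# Toy data: the "_dup" column is a near copy of the first feature.
target = [0, 0, 0, 0, 1, 1, 1, 1]
features = {
    "fwd_packet_len": [0, 0, 0, 1, 1, 1, 1, 1],
    "fwd_packet_len_dup": [0, 0, 0, 1, 1, 1, 1, 0],
    "syn_flag_count": [0, 1, 0, 0, 1, 1, 0, 1],
}
print(mrmr_select(features, target, k=2))  # ['fwd_packet_len', 'syn_flag_count']
```

The near-duplicate column is individually as relevant as `syn_flag_count`, but it is skipped because it adds little beyond the feature already chosen.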

Model interpretability in machine learning-based security systems presents a significant challenge despite high predictive accuracy. While models can effectively identify malicious activity, a lack of transparency regarding the reasoning behind those classifications hinders trust and limits practical application. Understanding the specific features or patterns driving a prediction is essential for security analysts to validate alerts, refine security policies, and respond effectively to threats. Without interpretability, it becomes difficult to distinguish between true positives and false positives, potentially leading to alert fatigue or, conversely, missed attacks. This necessitates research into explainable AI (XAI) techniques to provide insights into model decision-making processes and facilitate human-in-the-loop security operations.

Illuminating Model Decisions with SHAP-Driven Insights

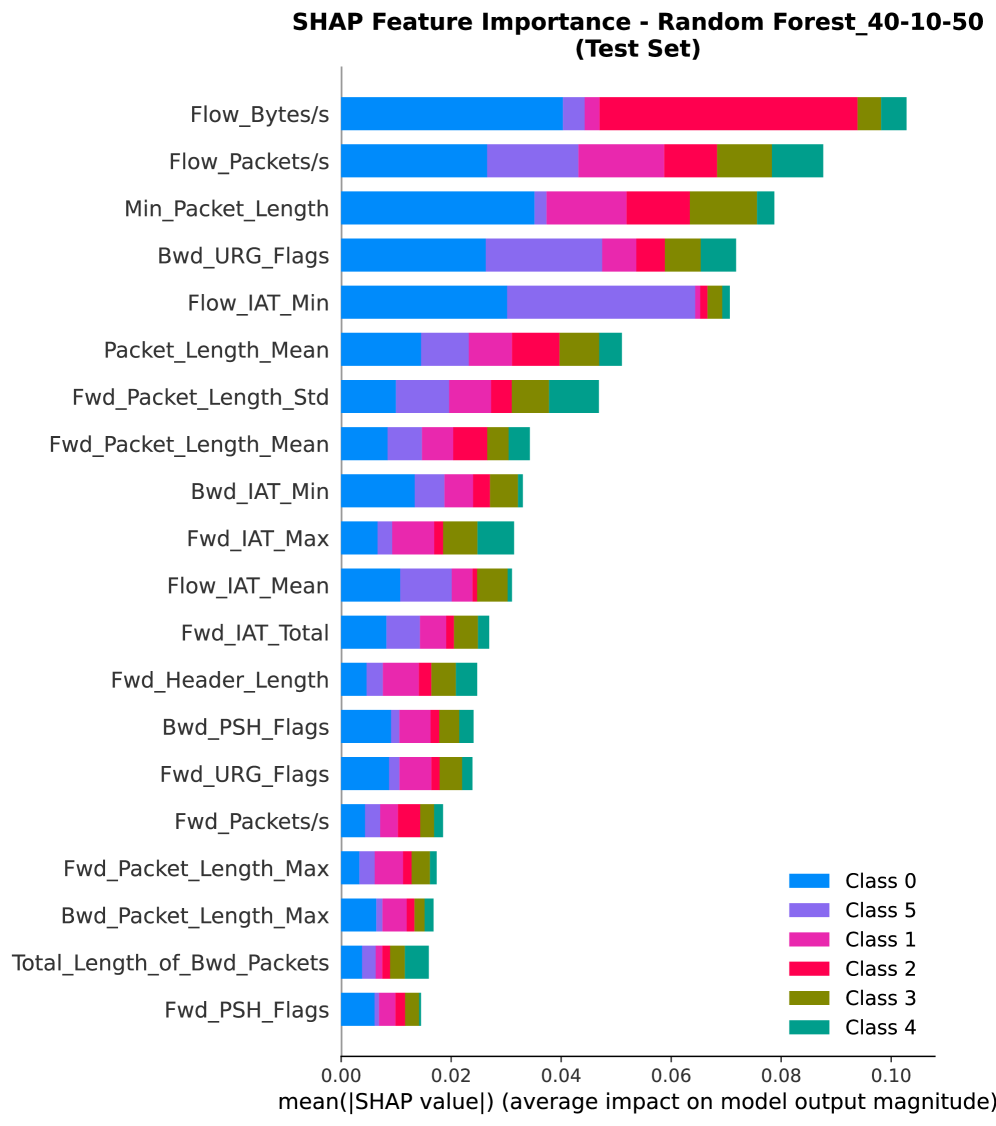

The XI2S-IDS system utilizes SHapley Additive exPlanations (SHAP) values to enhance the interpretability of its intrusion detection predictions. SHAP values assign each feature an importance weight for a particular prediction, indicating its contribution to the model’s output. This allows security analysts to move beyond simple binary classifications (malicious or benign) and understand why a specific network activity was flagged as suspicious. By examining the SHAP values associated with each feature – such as packet length, protocol type, or port number – analysts can validate the model’s reasoning, identify potential false positives, and gain deeper insights into the characteristics of observed attacks. This transparency is critical for building trust in the system and facilitating effective incident response.

The application of SHapley Additive exPlanations (SHAP) values to Random Forest, Logistic Regression, and XGBoost models facilitates the identification of key features influencing classification outcomes. SHAP values quantify each feature’s contribution to a specific prediction, allowing security analysts to determine which features are most impactful in both correctly identifying intrusions (positive classifications) and accurately classifying legitimate traffic (negative classifications). This granular feature importance analysis moves beyond overall model accuracy, providing insight into the reasoning behind each prediction and enabling more informed security decision-making and model refinement.
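To make the per-feature attribution concrete, the sketch below computes exact Shapley values for a single prediction by enumerating feature coalitions. It does not use the `shap` library and is not the paper's code; the scoring function, feature names, and baseline values are hypothetical, and "absent" features are simply replaced by a baseline value, which is one of several possible conventions.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, instance, baseline):
    """Exact Shapley values for one prediction.

    Features outside a coalition take their baseline value.
    Cost grows exponentially with the number of features.
    """
    names = list(instance)
    n = len(names)

    def value(coalition):
        x = {f: (instance[f] if f in coalition else baseline[f]) for f in names}
        return predict(x)

    phi = {}
    for f in names:
        others = [g for g in names if g != f]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                coalition = set(subset)
                total += weight * (value(coalition | {f}) - value(coalition))
        phi[f] = total
    return phi

# Hypothetical suspicion score with an interaction between port and protocol.
def toy_score(x):
    score = 0.001 * x["packet_length"]
    if x["dst_port"] == 4444:
        score += 0.4
        if x["protocol"] == "tcp":
            score += 0.2
    return score

instance = {"packet_length": 1200, "dst_port": 4444, "protocol": "tcp"}
baseline = {"packet_length": 200, "dst_port": 443, "protocol": "udp"}
phi = shapley_values(toy_score, instance, baseline)
for name, contribution in phi.items():
    print(f"{name}: {contribution:+.2f}")
```

The contributions sum to the difference between the score for the flagged flow and the score for the baseline flow, and the interaction term is split evenly between `dst_port` and `protocol`.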

Model validation within the XI2S-IDS system utilizes both Multi-Configuration Validation and Temporal Validation techniques to assess generalization performance and adaptability to shifting attack landscapes. Multi-Configuration Validation involves training and testing the model across diverse network configurations to ensure consistent performance regardless of environment. Temporal Validation evaluates the model’s ability to maintain accuracy when tested on data collected at different time periods, addressing the issue of concept drift. Evaluation on the CIC-IDS2017 dataset yielded 99.92% accuracy and a 99.77% macro-averaged F1 score (F1-Macro), indicating robust performance and effective generalization capabilities.
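Accuracy and macro-averaged F1 can diverge sharply on imbalanced traffic, which is why both are reported. The sketch below shows how the two metrics are computed; the labels and predictions are toy values chosen for illustration, not results from the paper.

```python
def accuracy(y_true, y_pred):
    """Fraction of samples whose predicted label matches the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def f1_macro(y_true, y_pred):
    """Unweighted mean of per-class F1, so rare classes count as much as common ones."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for label in labels:
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)

# Toy example: 8 benign flows, 2 attack flows, one attack missed.
y_true = ["benign"] * 8 + ["ddos"] * 2
y_pred = ["benign"] * 8 + ["ddos", "benign"]
print(f"accuracy = {accuracy(y_true, y_pred):.3f}")
print(f"F1-macro = {f1_macro(y_true, y_pred):.3f}")
```

A single missed attack barely moves accuracy but pulls the macro F1 down noticeably, because the rare class is weighted equally with the majority class.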

Mitigating Data Challenges and Achieving Adaptive Learning

Effective intrusion detection relies heavily on models that generalize well to unseen data, and data leakage prevention is paramount to achieving this. Without careful attention to preventing leakage, models can inadvertently learn patterns specific to the training set (spurious correlations) rather than the true underlying characteristics of network attacks. This results in deceptively high performance metrics during training and validation, creating a false sense of security. Techniques like rigorous data partitioning, feature engineering that avoids future information, and careful cross-validation are essential to ensure the model learns to identify attacks based on genuine indicators, not accidental artifacts of the training data itself. By mitigating data leakage, the system builds a more reliable and robust defense against evolving threats in real-world network environments.
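One common leakage pattern is computing preprocessing statistics on the full dataset before splitting it. The sketch below shows the safer ordering: partition first, then fit the scaler on training rows only. It is an illustration under assumed names (`timestamp`, `features`) and does not reproduce the paper's automated leakage checks.

```python
def chronological_split(records, train_fraction=0.8):
    """Split by time so that no test record precedes a training record."""
    ordered = sorted(records, key=lambda r: r["timestamp"])
    cut = int(len(ordered) * train_fraction)
    return ordered[:cut], ordered[cut:]

def fit_standardizer(rows):
    """Compute per-column mean and standard deviation from the given rows only."""
    columns = list(zip(*rows))
    means = [sum(col) / len(col) for col in columns]
    stds = []
    for col, mean in zip(columns, means):
        std = (sum((v - mean) ** 2 for v in col) / len(col)) ** 0.5
        stds.append(std if std > 0 else 1.0)  # avoid division by zero
    return means, stds

def standardize(rows, means, stds):
    """Apply previously fitted statistics; never refit on evaluation data."""
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]

# Hypothetical records: one feature vector per timestamped flow.
records = [{"timestamp": t, "features": [float(t), 10.0 * t]} for t in range(10)]
train, test = chronological_split(records)

# Statistics come from the training partition only.
means, stds = fit_standardizer([r["features"] for r in train])
train_x = standardize([r["features"] for r in train], means, stds)
test_x = standardize([r["features"] for r in test], means, stds)
print(means)  # [3.5, 35.0]
```

Because the test rows never influence `means` or `stds`, the evaluation reflects what the model would see on genuinely new traffic.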

A significant challenge in intrusion detection lies in the inherent class imbalance, where normal network traffic vastly outweighs malicious activity. To counteract this, the HAEnID system leverages strategic sampling techniques alongside the Synthetic Minority Oversampling Technique (SMOTE). Strategic sampling carefully selects instances from the majority class, reducing its dominance, while SMOTE artificially generates new examples of the minority class (the attacks) by interpolating between existing ones. This combined approach doesn’t simply increase the number of attack samples, but creates more representative data, enabling the detection algorithms to learn the characteristics of these rarer events more effectively and ultimately improving the identification rate of minority class attacks that might otherwise be overlooked.
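The interpolation step at the heart of SMOTE can be sketched in a few lines. This is a simplified, standard-library illustration rather than the `imbalanced-learn` implementation or the system's own code; the function names, the choice of `k`, and the toy attack samples are assumptions.

```python
import random

def squared_distance(a, b):
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def smote_like(minority, n_new, k=2, seed=0):
    """Create n_new synthetic points between minority samples and their neighbours.

    Requires at least two minority samples.
    """
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # k nearest minority neighbours of the chosen base point
        neighbours = sorted(
            (p for p in minority if p is not base),
            key=lambda p: squared_distance(base, p),
        )[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # position along the segment base -> neighbour
        synthetic.append([b + gap * (n - b) for b, n in zip(base, neighbour)])
    return synthetic

# Hypothetical attack samples in a two-feature space.
attacks = [[1.0, 1.0], [1.2, 0.9], [0.9, 1.3], [1.1, 1.1]]
new_points = smote_like(attacks, n_new=5)
print(len(new_points))  # 5
```

Each synthetic point lies on a line segment between two real attack samples, so the minority region is filled in rather than merely duplicated.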

The HAEnID system presents a novel approach to intrusion detection, effectively merging the strengths of multiple ensemble techniques with the Synthetic Minority Oversampling Technique (SMOTE). This combination not only enhances the system’s ability to identify rare and critical network attacks, but also significantly optimizes computational efficiency. Rigorous testing revealed a substantial 36.7% reduction in training time – decreasing from 33.90 seconds to just 21.47 seconds. This performance gain is directly attributable to strategic feature reduction and the implementation of optimized algorithms within the ensemble, allowing for a more adaptive and robust system capable of rapidly processing and responding to evolving cybersecurity threats.
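The reported reduction follows directly from the two timings quoted above, as this short check shows (the helper name is illustrative):

```python
def relative_reduction(before, after):
    """Fractional decrease from `before` to `after`."""
    return (before - after) / before

# Training times in seconds, as quoted in the text.
reduction = relative_reduction(33.90, 21.47)
print(f"{reduction:.1%}")  # 36.7%
```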

Towards a Future of Proactive and Adaptive Cybersecurity

Maintaining the efficacy of cybersecurity models requires constant vigilance against a phenomenon known as concept drift – the subtle, yet critical, shift in data characteristics over time. Attackers continually refine their techniques, and network environments are rarely static; therefore, a model trained on historical data can rapidly become obsolete. To combat this, systems must incorporate temporal validation, a process of continually assessing model performance on recent data and retraining when accuracy declines. This isn’t simply about updating the model; it’s about establishing a feedback loop that acknowledges the evolving threat landscape and adapts accordingly. Failing to account for concept drift leaves security systems vulnerable, as previously effective defenses become blind to new and emerging attacks – a critical consideration in the ever-changing realm of digital security.
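The feedback loop described here can be sketched as a rolling accuracy monitor that flags when retraining is due. The class name, window size, and accuracy threshold are hypothetical choices for illustration; a production system would also need delayed ground-truth labels and a real retraining pipeline.

```python
from collections import deque

class DriftMonitor:
    """Track accuracy over the most recent labelled predictions."""

    def __init__(self, window=100, min_accuracy=0.95):
        self.outcomes = deque(maxlen=window)  # True where prediction was correct
        self.min_accuracy = min_accuracy

    def record(self, predicted, actual):
        self.outcomes.append(predicted == actual)

    @property
    def accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self):
        """Signal only once the window is full, to avoid reacting to a few samples."""
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.accuracy < self.min_accuracy

monitor = DriftMonitor(window=4, min_accuracy=0.75)
for predicted, actual in [("benign", "benign")] * 4:
    monitor.record(predicted, actual)
stable = monitor.needs_retraining()   # False: 4 of 4 recent predictions correct

# Two recent attacks misclassified as benign push older outcomes out of the window.
for predicted, actual in [("benign", "attack")] * 2:
    monitor.record(predicted, actual)
drifted = monitor.needs_retraining()  # True: 2 of 4 correct, below 0.75
print(stable, drifted)
```

Using a fixed-size window means old successes age out, so the monitor reflects current traffic rather than the model's historical record.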

The increasing reliance on machine learning for cybersecurity necessitates a parallel advancement in Explainable AI (XAI) methodologies. Currently, many security systems operate as “black boxes,” offering predictions without revealing the reasoning behind them; this opacity hinders effective threat analysis and incident response. Ongoing research focuses on developing XAI techniques that can illuminate the decision-making processes of these algorithms, allowing security professionals to not only detect anomalies but also understand why a particular event triggered an alert. This enhanced transparency fosters trust in automated systems, enabling more informed human oversight and facilitating the identification of potential biases or vulnerabilities within the machine learning models themselves. Ultimately, XAI promises to move cybersecurity beyond simple detection towards a more nuanced and proactive understanding of evolving threat landscapes.

The convergence of adaptive learning and explainable AI promises a paradigm shift in cybersecurity, moving beyond reactive defenses to genuinely proactive threat mitigation. This approach allows systems to not only learn from evolving attack patterns but also to articulate the reasoning behind security decisions, fostering trust and enabling human experts to refine strategies. Recent advancements have yielded a system capable of processing 324,000 predictions per second, demonstrating the feasibility of real-time deployment in high-throughput network environments. This speed, combined with the transparency offered by explainable AI, facilitates rapid identification and neutralization of emerging threats before they can inflict significant damage, ultimately strengthening an organization’s overall security posture and resilience.

The pursuit of robust cybersecurity, as detailed in this framework, demands a level of mathematical rigor often absent in practical implementations. This study champions a provable approach to intrusion detection, prioritizing demonstrable correctness over mere empirical performance. As David Hilbert famously stated, “In every well-defined mathematical domain, there is a method to decide whether any given proposition is true or false.” The strategic data sampling and feature selection techniques employed here aren’t simply about improving accuracy; they represent a deliberate attempt to construct a system whose behavior is fundamentally understandable and, therefore, trustworthy – a system where the ‘truth’ of a detection can be established with certainty, echoing Hilbert’s vision of a logically sound foundation for all knowledge.

Beyond the Horizon

The pursuit of intrusion detection, as demonstrated by this work, inevitably encounters the limitations inherent in relying solely on observed correlations. While strategic data sampling and explainable AI offer a refined approach to feature selection – and a temporary reprieve from the curse of dimensionality – the fundamental challenge remains: true anomalies, by definition, lack sufficient precedent for reliable identification. The elegance of a solution is not measured by its accuracy on a static dataset, but by the consistency with which it maintains predictive power when confronted with genuinely novel threats.

Future efforts should therefore move beyond simply explaining model decisions, and focus on establishing provable boundaries for those explanations. A model that correctly identifies a threat, but cannot articulate why that particular configuration violates established principles of network behavior, offers little lasting value. The field requires a shift from empirical performance metrics to formal verification of algorithmic robustness – a demonstration that the system’s logic is sound, not merely coincidentally effective.

The promise of explainable AI, ultimately, lies not in post-hoc rationalization, but in the design of intrinsically interpretable algorithms. To achieve this, researchers must embrace the mathematical foundations of cybersecurity, and seek solutions that are defined by logical necessity, rather than statistical convenience. Only then can one hope to move beyond the endless cycle of reactive patching and towards a truly proactive defense.

Original article: https://arxiv.org/pdf/2602.19087.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-24 20:49