Author: Denis Avetisyan

A new machine learning framework leverages combined heart signals to accurately identify atrial fibrillation, a common and dangerous heart condition.

This review details a system using photoplethysmography and electrocardiogram data, demonstrating that ensemble learning with Bagged Decision Trees achieves optimal performance in atrial fibrillation detection.

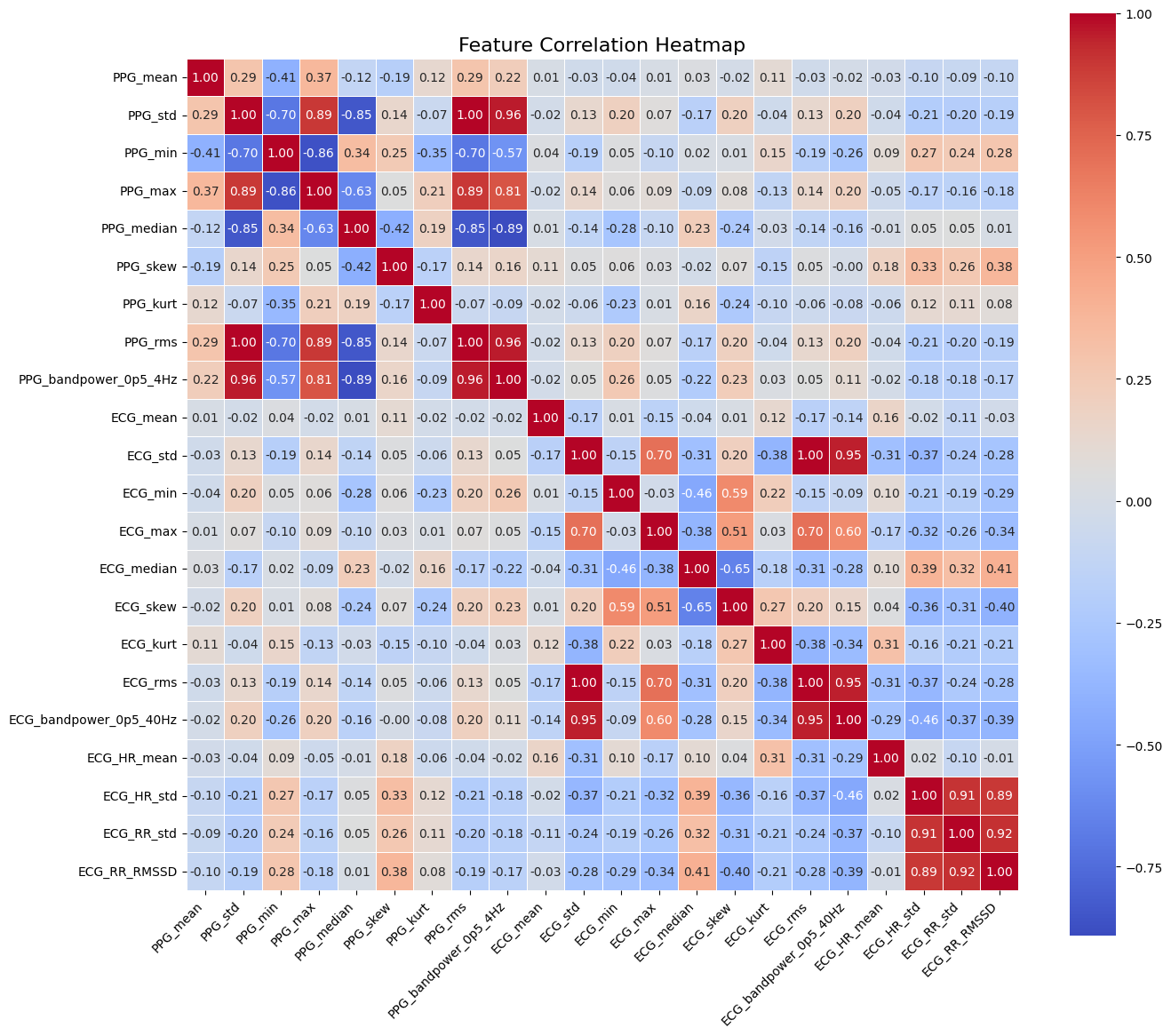

Early detection of atrial fibrillation (AF) remains a clinical challenge despite its strong association with increased stroke risk. This paper, ‘Atrial Fibrillation Detection Using Machine Learning’, presents a framework leveraging combined photoplethysmography (PPG) and electrocardiogram (ECG) signals to address this need. The study extracts 22 features from continuous recordings of 35 subjects and evaluates bagged decision trees, support vector machines, and k-nearest neighbors classifiers, reporting high AF detection accuracy that reaches 98.7% with subspace KNN. Could this approach facilitate more widespread, non-invasive AF screening and ultimately improve patient outcomes?

The Silent Threat: Understanding Atrial Fibrillation

Atrial fibrillation, a frequently occurring heart rhythm disorder, dramatically elevates the risk of two serious cardiovascular events: stroke and heart failure. This arrhythmia causes the upper chambers of the heart to beat irregularly and often rapidly, leading to inefficient blood flow and the potential formation of clots. These clots can then travel to the brain, causing a stroke, or strain the heart over time, contributing to heart failure. The prevalence of atrial fibrillation is increasing globally, largely due to an aging population and the rising incidence of associated conditions like hypertension and diabetes, making it a significant public health concern demanding improved detection and management strategies.

Current methods for detecting atrial fibrillation, such as Holter monitors and in-office electrocardiograms (ECGs), present significant limitations when considering population-wide screening. Holter monitors, while capable of continuous recording, require patients to wear bulky equipment for extended periods, often disrupting daily life and leading to discomfort – impacting adherence and data quality. Traditional ECGs, conversely, capture only a brief snapshot of heart rhythm, potentially missing intermittent episodes of AF. This necessitates repeated testing or prolonged monitoring, creating logistical hurdles and substantial healthcare costs. Consequently, a considerable number of individuals with undiagnosed AF remain unaware of their risk, hindering timely intervention and increasing the likelihood of stroke or heart failure. The need for more convenient, accessible, and scalable diagnostic tools is therefore paramount to effectively address this growing public health concern.

Automated Detection: A Machine Learning Approach

Machine learning techniques are increasingly investigated for the automated detection of atrial fibrillation (AF) utilizing electrocardiogram (ECG) and photoplethysmogram (PPG) signals. Traditional AF detection relies on manual interpretation of these signals, which is time-consuming and requires specialized expertise. Machine learning algorithms offer the potential for real-time, high-throughput analysis, improving diagnostic efficiency and potentially enabling earlier intervention. The application of these algorithms aims to identify characteristic patterns within the ECG and PPG data indicative of AF, such as irregular heart rhythms or fibrillatory waves, without explicit programming for each specific waveform feature.
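As a rough illustration of what "irregular heart rhythm" can look like as a numeric feature, the sketch below computes two common variability measures from beat-to-beat intervals. The function name `rr_irregularity_features` and the sample intervals are hypothetical; they are not taken from the paper, and the study's 22 features are not listed in this summary.

```python
import math
from statistics import mean, pstdev

def rr_irregularity_features(rr_intervals_s):
    """Return simple irregularity features from beat-to-beat intervals in seconds."""
    if len(rr_intervals_s) < 3:
        raise ValueError("need at least three intervals")
    # Differences between consecutive intervals capture beat-to-beat jumps.
    diffs = [b - a for a, b in zip(rr_intervals_s, rr_intervals_s[1:])]
    mean_rr = mean(rr_intervals_s)
    return {
        "mean_rr": mean_rr,
        # Coefficient of variation: spread of the intervals relative to their mean.
        "cv": pstdev(rr_intervals_s) / mean_rr,
        # RMSSD: root mean square of successive differences.
        "rmssd": math.sqrt(mean([d * d for d in diffs])),
    }

# Made-up interval sequences, for illustration only (not study data).
regular = [0.80, 0.81, 0.79, 0.80, 0.82, 0.80]
irregular = [0.62, 1.05, 0.48, 0.91, 0.70, 1.20]

regular_features = rr_irregularity_features(regular)
irregular_features = rr_irregularity_features(irregular)
```

A classifier would receive a vector of such values per signal window; the irregular sequence yields larger `cv` and `rmssd` than the regular one.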

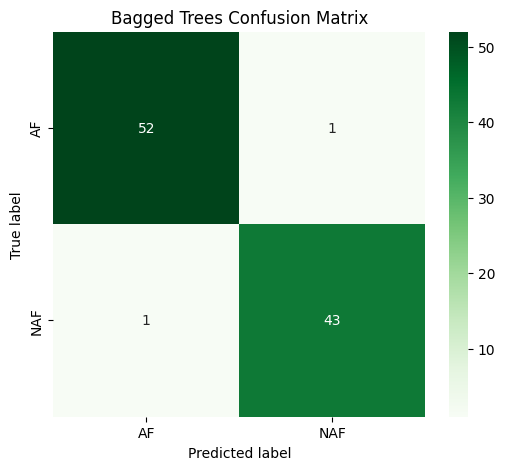

Current research investigates the application of multiple machine learning algorithms for automated atrial fibrillation (AF) detection. Specifically, Bagged Decision Trees, Subspace k-Nearest Neighbors (KNN), and Cubic Support Vector Machines (SVM) are under evaluation. Initial results demonstrate that Bagged Decision Trees exhibit high performance, achieving an accuracy of 97.94% when applied to photoplethysmography (PPG) signals for AF detection. These algorithms are being tested to determine their efficacy in providing an automated and accurate diagnostic tool.
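The idea behind bagging can be sketched in a few lines: train many simple models on bootstrap resamples of the training data and let them vote. The sketch below is a simplification under stated assumptions: it uses one-split decision stumps rather than full decision trees, the function names are hypothetical, and the one-feature toy data is invented. It is not the configuration used in the study.

```python
import random
from collections import Counter

def fit_stump(X, y):
    """Find the single-feature threshold split with the fewest training errors."""
    best = None  # (errors, feature, threshold, left_label, right_label)
    for f in range(len(X[0])):
        for t in sorted({row[f] for row in X}):
            left = [lab for row, lab in zip(X, y) if row[f] <= t]
            right = [lab for row, lab in zip(X, y) if row[f] > t]
            l_lab = Counter(left).most_common(1)[0][0]
            r_lab = Counter(right).most_common(1)[0][0] if right else l_lab
            errors = sum(lab != l_lab for lab in left) + sum(lab != r_lab for lab in right)
            if best is None or errors < best[0]:
                best = (errors, f, t, l_lab, r_lab)
    return best[1:]

def predict_stump(stump, row):
    f, t, l_lab, r_lab = stump
    return l_lab if row[f] <= t else r_lab

def fit_bagged(X, y, n_estimators=25, seed=0):
    """Train each stump on a bootstrap sample (drawn with replacement)."""
    rng = random.Random(seed)
    n = len(X)
    models = []
    for _ in range(n_estimators):
        idx = [rng.randrange(n) for _ in range(n)]
        models.append(fit_stump([X[i] for i in idx], [y[i] for i in idx]))
    return models

def predict_bagged(models, row):
    """Combine the individual predictions by majority vote."""
    votes = Counter(predict_stump(m, row) for m in models)
    return votes.most_common(1)[0][0]

# Invented one-feature toy data: low values labeled 0, high values labeled 1.
X = [[0.02], [0.03], [0.04], [0.05], [0.20], [0.25], [0.30], [0.35]]
y = [0, 0, 0, 0, 1, 1, 1, 1]
models = fit_bagged(X, y)
```

Because each model sees a slightly different resample, individual errors tend to average out in the vote, which is the usual argument for why bagging stabilizes high-variance learners such as decision trees.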

Subspace k-Nearest Neighbors (KNN) demonstrated an accuracy of 94.79% in atrial fibrillation (AF) detection. Subspace KNN is an ensemble method: each KNN learner is trained on a random subset of the features, and the learners' predictions are combined by voting, so every individual learner works in a lower-dimensional space. The signal type and evaluation set behind this figure are not specified here, but 94.79% is a strong result for a non-parametric approach to automated AF detection.
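Subspace KNN is usually understood as a random-subspace ensemble: several KNN learners, each restricted to a random subset of the features, vote on the label. The sketch below illustrates that idea under stated assumptions. The function names, the ensemble size, the subspace size, and the three-feature toy data are all hypothetical and do not reflect the study's settings.

```python
import math
import random
from collections import Counter

def knn_predict(train_X, train_y, row, features, k=3):
    """Majority vote among the k nearest training rows, using only `features`."""
    dists = sorted(
        (math.dist([r[f] for f in features], [row[f] for f in features]), lab)
        for r, lab in zip(train_X, train_y)
    )
    return Counter(lab for _, lab in dists[:k]).most_common(1)[0][0]

def fit_subspace_knn(n_features, n_learners=15, subspace_size=2, seed=0):
    """KNN has no training step, so 'fitting' only picks a feature subset per learner."""
    rng = random.Random(seed)
    return [sorted(rng.sample(range(n_features), subspace_size)) for _ in range(n_learners)]

def predict_subspace_knn(subspaces, train_X, train_y, row, k=3):
    """Each learner votes using its own feature subset; the majority wins."""
    votes = Counter(knn_predict(train_X, train_y, row, feats, k) for feats in subspaces)
    return votes.most_common(1)[0][0]

# Invented toy data with three features per sample.
train_X = [
    [0.0, 0.1, 0.0], [0.1, 0.0, 0.1], [0.1, 0.1, 0.0],
    [0.9, 1.0, 1.0], [1.0, 0.9, 0.9], [1.0, 1.0, 0.8],
]
train_y = [0, 0, 0, 1, 1, 1]
subspaces = fit_subspace_knn(n_features=3)
```

With 22 features, as in the study, each learner would see only a fraction of them, which keeps distance computations cheap and decorrelates the learners.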

Quantifying Performance: Metrics for Reliable Diagnosis

Model performance assessment relies on several key metrics to quantify predictive capability. Accuracy represents the overall proportion of correct predictions, calculated as (TP + TN) / (TP + TN + FP + FN), where TP, TN, FP, and FN denote true positives, true negatives, false positives, and false negatives, respectively. Sensitivity, also known as recall, measures the ability of the model to correctly identify positive instances: TP / (TP + FN). Conversely, specificity quantifies the model’s ability to correctly identify negative instances: TN / (TN + FP). These metrics provide complementary insights into a classifier’s performance, particularly regarding its ability to avoid both false positives and false negatives, and are crucial for comparing different models and tuning their parameters.
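The three formulas translate directly into code. The sketch below uses a hypothetical helper, `classification_metrics`, and made-up confusion-matrix counts chosen only to make the arithmetic easy to follow; they are not results from the study.

```python
def classification_metrics(tp, tn, fp, fn):
    """Compute accuracy, sensitivity, and specificity from confusion-matrix counts."""
    total = tp + tn + fp + fn
    return {
        "accuracy": (tp + tn) / total,   # (TP + TN) / (TP + TN + FP + FN)
        "sensitivity": tp / (tp + fn),   # TP / (TP + FN)
        "specificity": tn / (tn + fp),   # TN / (TN + FP)
    }

# Made-up counts: 100 AF windows and 100 non-AF windows.
metrics = classification_metrics(tp=90, tn=85, fp=15, fn=10)
# accuracy = 175 / 200, sensitivity = 90 / 100, specificity = 85 / 100
```

In this example the classifier misses 10 of 100 AF windows (sensitivity 0.90) and raises 15 false alarms among 100 non-AF windows (specificity 0.85), which shows why a single accuracy figure can hide the balance between the two error types.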

10-fold cross-validation is a statistical method used to assess the performance of machine learning algorithms by partitioning the dataset into ten mutually exclusive subsets, or ‘folds’. The model is trained on nine of these folds and evaluated on the remaining fold; this process is repeated ten times, with each fold serving as the validation set once. The performance metric, such as accuracy, is then averaged across all ten iterations, which gives a more reliable estimate of how well the model generalizes to unseen data than a single train-test split. Cross-validation does not prevent overfitting by itself, but it makes an overfit model easier to spot and uses every sample for both training and validation, which matters when the dataset is small.
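The procedure can be sketched without any library support. The helpers below, `k_fold_indices` and `cross_validate`, are hypothetical names for illustration, and the shuffle-then-stride partition is just one simple way to build the folds; the study's exact splitting scheme is not described in this summary.

```python
import random
from collections import Counter

def k_fold_indices(n_samples, k=10, seed=0):
    """Yield (train_indices, validation_indices) for each of the k folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Taking every k-th index gives k disjoint folds of near-equal size.
    folds = [indices[i::k] for i in range(k)]
    for i, val in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, val

def cross_validate(X, y, fit, predict, k=10, seed=0):
    """Average validation accuracy over k folds; requires len(X) >= k."""
    scores = []
    for train, val in k_fold_indices(len(X), k, seed):
        model = fit([X[i] for i in train], [y[i] for i in train])
        correct = sum(predict(model, X[i]) == y[i] for i in val)
        scores.append(correct / len(val))
    return sum(scores) / len(scores)

# Trivial baseline used only to exercise the loop: always predict the majority class.
def fit_majority(X, y):
    return Counter(y).most_common(1)[0][0]

def predict_majority(model, row):
    return model

splits = list(k_fold_indices(50, k=10))
```

When many windows come from the same subject, placing that subject's windows in both the training and validation folds can inflate the score, so grouping folds by subject is a common precaution.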

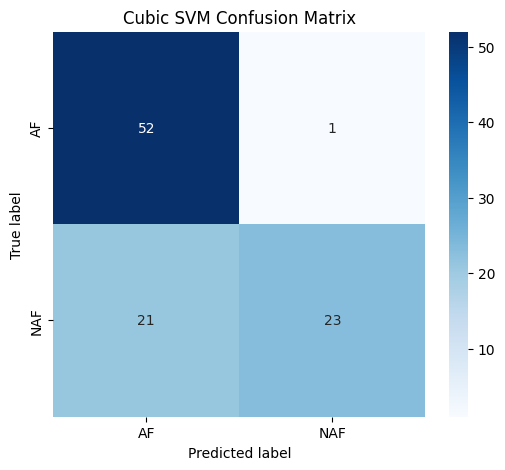

Evaluations conducted in this study indicate that Bagged Decision Trees achieved high performance metrics: 97.94% accuracy, indicating the overall correctness of predictions; 98.11% sensitivity, representing the ability to correctly identify positive cases; and 97.73% specificity, denoting the ability to correctly identify negative cases. In contrast, the Cubic Support Vector Machine (SVM) demonstrated comparatively lower specificity within this specific dataset, suggesting a higher rate of false positives when utilizing this algorithm for classification.

The study distills a complex physiological challenge, accurate atrial fibrillation detection, into a refined signal processing and machine learning framework. It prioritizes essential data from both ECG and PPG, effectively stripping away extraneous noise to reveal core patterns. This echoes Marvin Minsky’s sentiment: “The more we understand about how things work, the more we can simplify them.” The success of Bagged Decision Trees isn’t about adding complexity to the model, but rather about intelligently combining simpler components, a testament to the power of focused design. The emphasis on feature extraction and ensemble learning exemplifies a commitment to clarity, achieving robust performance through careful reduction and refinement of the input data.

Where to Next?

The demonstrated confluence of electrocardiogram and photoplethysmography signals, yielding improved atrial fibrillation detection, does not represent an arrival. It clarifies a departure point. The persistent challenge lies not in achieving incrementally higher accuracy, a pursuit of diminishing returns, but in defining ‘accuracy’ itself. What constitutes a clinically meaningful detection? A system capable of 99% accuracy remains cumbersome if it generates false positives that overwhelm medical resources. The focus must shift from signal processing refinement to contextual intelligence.

Further investigation should not prioritize more complex ensemble methods. Bagged Decision Trees performed adequately; the pursuit of marginal gains through neural networks risks obscuring fundamental limitations. Instead, the field would benefit from a reductionist approach: can atrial fibrillation be reliably detected from less data? Minimal viable signals, extracted with maximal simplicity, represent a more potent avenue for practical application. The question isn’t ‘how much can we add?’ but ‘what can we remove?’

Ultimately, true progress will be measured not by the sophistication of the algorithms, but by their absence. A system that renders itself obsolete by enabling preventative measures, addressing underlying causes rather than merely detecting symptoms, would be the ultimate testament to the power of reduction. The goal is not to perfect detection, but to eliminate the need for it.

Original article: https://arxiv.org/pdf/2602.18036.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-24 05:42