Author: Denis Avetisyan

Researchers have developed a novel graph neural network that uses causal reasoning to improve accuracy and reliability in identifying key features within complex graph structures.

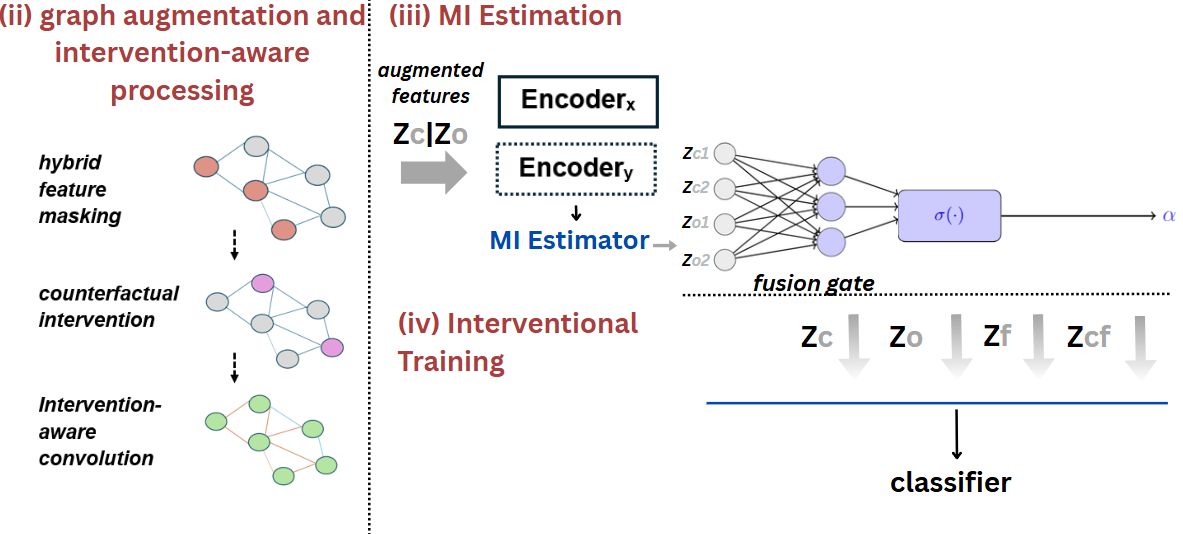

This work introduces CCAGNN, a method that leverages counterfactual interventions and mutual information to disentangle causal and non-causal features for robust graph classification and causal inference.

While graph neural networks excel at learning from relational data, their reliance on correlations renders them vulnerable to spurious patterns and distributional shifts. This limitation motivates the work ‘Optimizing Graph Causal Classification Models: Estimating Causal Effects and Addressing Confounders’, which introduces CCAGNN, a novel framework that integrates causal reasoning and counterfactual interventions into graph learning. By disentangling causal from non-causal features, CCAGNN demonstrably improves both the accuracy and robustness of graph classification across diverse datasets. Could this approach pave the way for more reliable and interpretable graph machine learning in real-world applications requiring robust causal inference?

The Allure of Spurious Correlation

Graph Neural Networks (GNNs) have rapidly become a dominant approach for analyzing data represented as graphs – from social networks and molecular structures to knowledge graphs and recommendation systems. However, despite their success, GNNs are demonstrably susceptible to drawing incorrect conclusions due to spurious correlations and confounding variables present within these complex datasets. Essentially, the network may identify patterns that appear meaningful but are, in reality, coincidental or driven by hidden factors unrelated to the actual phenomenon under investigation. This limitation arises because standard GNN architectures often prioritize capturing relational patterns without explicitly modeling the underlying causal relationships, leading to predictions that lack robustness and generalizability when faced with distributional shifts or novel scenarios. Consequently, researchers are increasingly focused on developing methods to mitigate these issues and enhance the reliability of GNN-based insights.

The predictive power of graph neural networks diminishes when faced with intricate relational data due to a susceptibility to misleading patterns. Traditional GNNs often prioritize correlation over causation, leading to inaccurate conclusions when spurious connections overshadow genuine influences within the graph. This is particularly problematic in scenarios involving confounding variables – hidden factors that affect both the node features and the connections between them – as the network may mistakenly attribute causality where it doesn’t exist. Consequently, insights derived from these models can be unreliable, hindering effective decision-making in domains such as social network analysis, drug discovery, and knowledge graph reasoning, where understanding the true underlying relationships is crucial for achieving meaningful results.

Current Graph Neural Networks (GNNs) often operate under a fundamental limitation: the assumption of equal importance for all connections within a graph. This approach disregards the crucial distinction between correlation and causation, potentially leading to inaccurate conclusions. By treating every edge as equally influential, these models fail to discern which relationships are genuinely driving the observed patterns and which are merely coincidental or the result of confounding factors. Consequently, predictions can be skewed by spurious correlations, hindering the GNN’s ability to generalize to unseen data or provide reliable insights into the underlying mechanisms governing the graph’s structure. A more nuanced approach, one that explicitly models or infers causal relationships, is therefore essential to overcome this limitation and unlock the full potential of GNNs for complex graph analysis.

Disentangling Reality from Appearance

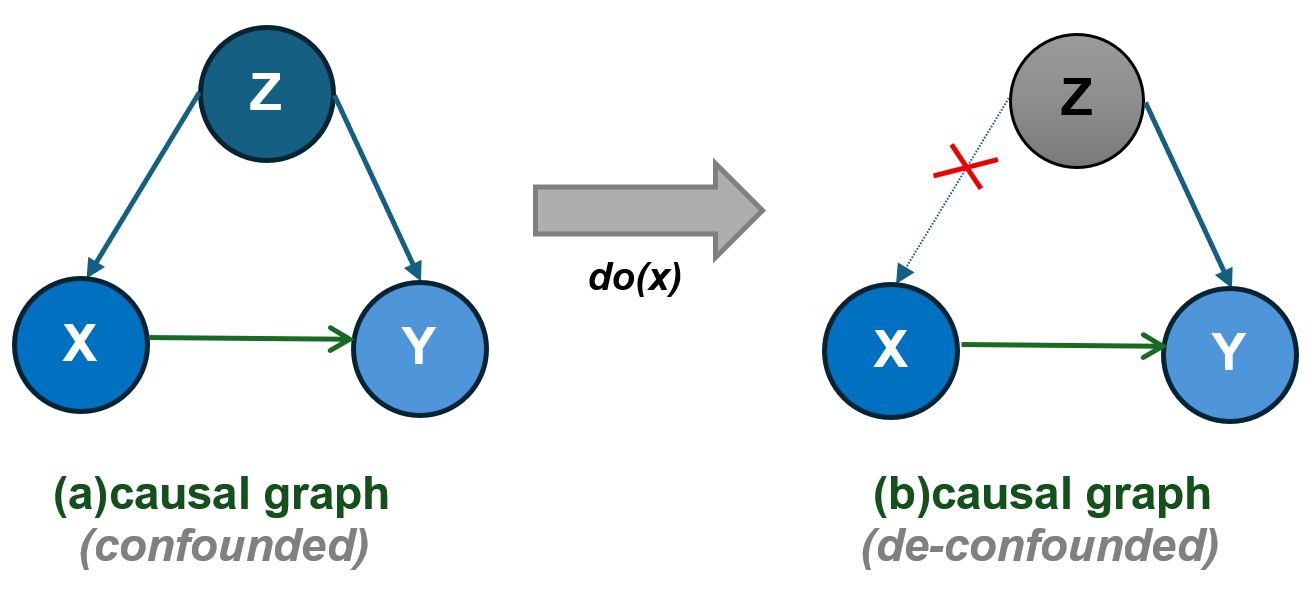

Integrating causal reasoning into Graph Neural Networks (GNNs) requires addressing the problem of confounding variables. Confounders are factors, often unobserved, that influence both node features and the target variable, creating spurious correlations that can mislead the GNN. Without accounting for them, the model may incorrectly infer a direct relationship between features and the target, leading to inaccurate predictions and flawed interpretations. Mitigation typically proceeds in two steps: first identify potential confounders through domain knowledge or statistical methods, then either adjust for those variables in the model or use causal discovery algorithms to estimate the underlying relationships between variables, thereby reducing bias and improving the reliability of the GNN’s inferences.
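To make the idea of adjusting for a confounder concrete, here is a minimal sketch that is not taken from the paper. It assumes the confounder `z` is observed and binary, and it uses a hand-built toy dataset in which the treatment `x` has no effect on the outcome `y` at all. The function names and the data are illustrative assumptions.

```python
def mean(values):
    return sum(values) / len(values)

def naive_effect(rows):
    """Difference in mean outcome between x == 1 and x == 0, ignoring z."""
    treated = [y for x, y, z in rows if x == 1]
    control = [y for x, y, z in rows if x == 0]
    return mean(treated) - mean(control)

def adjusted_effect(rows):
    """Stratify on z, then average the per-stratum effects weighted by P(z)."""
    total = len(rows)
    effect = 0.0
    for z in sorted({row[2] for row in rows}):
        stratum = [row for row in rows if row[2] == z]
        effect += len(stratum) / total * naive_effect(stratum)
    return effect

# Toy data (assumed): rows are (x, y, z). The outcome y depends only on z,
# but z also makes x == 1 more likely, so x and y look related.
rows = (
    [(0, 0.0, 0)] * 80 + [(1, 0.0, 0)] * 20
    + [(0, 10.0, 1)] * 20 + [(1, 10.0, 1)] * 80
)

naive = naive_effect(rows)        # inflated by the confounder
adjusted = adjusted_effect(rows)  # effect within each level of z
print(naive, adjusted)
```

The naive comparison reports a large effect even though none exists, while the stratified estimate does not. When the confounder is latent, as in the setting the article describes, this direct stratification is unavailable, which is why learned representations are used instead.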

Feature disentanglement addresses the challenge of isolating causal signals within complex datasets by systematically separating meaningful feature variations from noise and spurious correlations. This process aims to identify the minimal set of factors that genuinely influence the target variable, effectively removing confounding information. Techniques employed often involve dimensionality reduction, information theory-based metrics like mutual information, and representation learning methods designed to enforce statistical independence between irrelevant feature components. Successful disentanglement improves the reliability of downstream analyses, including causal inference and predictive modeling, by ensuring that learned relationships reflect true dependencies rather than coincidental associations present in the observational data.

Attention-Guided Feature Disentanglement utilizes Graph Attention Networks (GATs) to dynamically weigh the importance of each feature when inferring causal relationships. GATs achieve this by learning attention coefficients that quantify the influence of each node’s features on its neighbors, effectively prioritizing features relevant to the causal effect being estimated. This contrasts with methods that treat all features equally or rely on predetermined importance scores. The attention mechanism allows the model to focus on features that contribute most significantly to explaining variations in the target variable, while down-weighting irrelevant or noisy features, thereby improving the accuracy and robustness of causal inference in graph-structured data. The resulting attention weights can also provide insights into which features are most important for the observed relationships.

CCAGNN: A System Designed for Clarity

The Causal Confounder-Aware Graph Neural Network (CCAGNN) addresses the challenge of unobserved confounders through Latent Confounder-Aware Feature Fusion. This process integrates features by explicitly modeling the potential influence of hidden common causes. Specifically, CCAGNN learns to represent and subtract the shared information attributable to these latent confounders from the node features before aggregation. This subtraction aims to isolate the genuine relationships between nodes, reducing spurious correlations induced by unobserved variables and improving the accuracy of downstream tasks reliant on accurate causal inference. The fusion mechanism operates on the premise that features affected by the same unobserved confounder will exhibit statistical dependencies that can be identified and removed through this learned feature transformation.
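As a rough illustration of "subtracting shared information before aggregation", the sketch below removes from each node's feature vector its projection onto a single confounder direction and then averages the cleaned vectors. This is an assumption made for illustration only: the article does not specify how CCAGNN represents latent confounders, and in a real model the direction would be learned rather than fixed by hand. All names here are hypothetical.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def remove_confounder_component(h, c):
    """Subtract from h its projection onto the confounder direction c."""
    scale = dot(h, c) / dot(c, c)
    return [hi - scale * ci for hi, ci in zip(h, c)]

def fuse(features, c):
    """Deconfound each node's features, then average them (toy aggregation)."""
    cleaned = [remove_confounder_component(h, c) for h in features]
    n = len(cleaned)
    return [sum(column) / n for column in zip(*cleaned)]

# Hypothetical confounder direction and two node feature vectors.
confounder = [1.0, 1.0, 0.0]
node_features = [[2.0, 0.0, 1.0], [0.0, 4.0, 3.0]]

fused = fuse(node_features, confounder)
print(fused)
```

After the subtraction, the fused vector has no component along the assumed confounder direction, so whatever the two nodes shared along that direction no longer contributes to the aggregate.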

CCAGNN employs Conditional Mutual Information (CMI) to assess the statistical dependence between node features, given the presence of other features, thereby quantifying relationships relevant to disentangling causal effects from spurious correlations. Specifically, CMI, expressed as I(X;Y|Z), measures the information that variable X provides about variable Y, conditional on variable Z. In CCAGNN, this calculation is applied to node feature vectors, where Z represents potential confounders. Higher CMI values indicate stronger relationships, guiding the model to prioritize feature disentanglement along dimensions where conditional dependencies are most pronounced, ultimately improving the model’s robustness to unobserved confounding factors.
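For discrete variables, I(X;Y|Z) can be estimated directly from counts using the identity I(X;Y|Z) = Σ p(x,y,z) log[ p(z) p(x,y,z) / (p(x,z) p(y,z)) ]. The sketch below is a plug-in estimator on toy samples, not the estimator used in CCAGNN, which the article does not describe; continuous node features would need a different estimator.

```python
import math
from collections import Counter

def conditional_mutual_information(samples):
    """Plug-in estimate of I(X;Y|Z) in bits from a list of (x, y, z) tuples."""
    n = len(samples)
    c_xyz = Counter(samples)
    c_xz = Counter((x, z) for x, _, z in samples)
    c_yz = Counter((y, z) for _, y, z in samples)
    c_z = Counter(z for _, _, z in samples)
    cmi = 0.0
    for (x, y, z), count in c_xyz.items():
        # p(z) p(x,y,z) / (p(x,z) p(y,z)) written in terms of raw counts
        ratio = count * c_z[z] / (c_xz[(x, z)] * c_yz[(y, z)])
        cmi += (count / n) * math.log2(ratio)
    return cmi

# X and Y are copies of each other and Z is constant:
# the dependence between X and Y is not explained by Z.
direct = [(0, 0, 0), (1, 1, 0)] * 50

# X and Y are both copies of Z: once Z is known, X tells us nothing new about Y.
confounded = [(0, 0, 0), (1, 1, 1)] * 50

cmi_direct = conditional_mutual_information(direct)
cmi_confounded = conditional_mutual_information(confounded)
print(cmi_direct, cmi_confounded)
```

The second case is the one that matters for confounding: X and Y are perfectly correlated, yet the conditional mutual information vanishes because Z accounts for all of the dependence.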

CCAGNN incorporates Graph Attention Networks version 2 (GATv2) to dynamically assess the significance of neighboring nodes within the graph structure. GATv2 employs attention mechanisms to learn weighted coefficients for each neighbor, effectively assigning higher importance to nodes more relevant to the target node’s causal dependencies. This adaptive weighting scheme contrasts with traditional Graph Neural Networks that utilize fixed or uniformly applied weights. The attention coefficients are calculated based on the features of both the target and neighboring nodes, allowing the model to discern subtle relationships and prioritize information from the most influential nodes during message passing. This improves the model’s capacity to accurately represent causal relationships even in the presence of noise or incomplete data, leading to enhanced performance in downstream tasks requiring causal inference.
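The GATv2 scoring rule can be sketched in a few lines: each neighbor j of a target node i receives a score a · LeakyReLU(W [h_i || h_j]), and the scores are normalized with a softmax over the neighborhood. The sketch below uses plain Python lists and hand-picked values for `W` and `a`, which are assumptions for illustration; in a trained model both are learned, and this is not CCAGNN's actual code.

```python
import math

def leaky_relu(vector, slope=0.2):
    return [v if v > 0 else slope * v for v in vector]

def matvec(matrix, vector):
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]

def gatv2_attention(h_target, h_neighbors, W, a):
    """Softmax-normalized GATv2 attention weights over a node's neighbors."""
    scores = []
    for h_j in h_neighbors:
        # Concatenate target and neighbor features, transform, then score.
        hidden = leaky_relu(matvec(W, h_target + h_j))
        scores.append(sum(ai * zi for ai, zi in zip(a, hidden)))
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical parameters: W maps the 4-dim concatenation to 2 dims.
W = [[1.0, 0.0, 1.0, 0.0],
     [0.0, 1.0, 0.0, 1.0]]
a = [1.0, -1.0]

h_target = [1.0, 0.0]
h_neighbors = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]

weights = gatv2_attention(h_target, h_neighbors, W, a)
print(weights)
```

With these values, the two neighbors whose features match the target receive equal and larger weights than the dissimilar one, which is the adaptive weighting behavior the paragraph above describes.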

A Signal Beyond the Benchmarks

Rigorous testing of the CCAGNN model across a diverse suite of benchmark datasets – encompassing citation networks like Cora, Citeseer, and PubMed, as well as large-scale product co-purchase graphs from Amazon and the social streaming platform Twitch – consistently reveals its performance advantages. These evaluations weren’t limited to a single type of graph structure or data characteristic; instead, CCAGNN demonstrated robust and reliable results irrespective of the dataset’s scale or inherent complexity. The model’s ability to maintain high accuracy across such varied domains suggests a fundamental strength in its approach to graph analysis, and highlights its potential for broad applicability beyond the specific datasets used in the study. This consistent outperformance establishes CCAGNN as a highly effective and versatile tool for numerous graph-based machine learning tasks.

Evaluations reveal that the CCAGNN model establishes new benchmarks in graph analysis, achieving state-of-the-art accuracy across several prominent datasets. Specifically, the model attained 91.14% accuracy on the Cora dataset, a significant improvement over existing graph neural networks. Further testing demonstrated strong performance on the Citeseer and PubMed datasets, with recorded accuracies of 82.84% and 89.97% respectively. These results collectively highlight CCAGNN’s capacity to effectively discern complex relationships within graph structures, consistently surpassing the performance of established methods in diverse academic citation networks.

The consistently strong performance of CCAGNN across diverse datasets highlights a critical advancement in graph analysis: the integration of causal reasoning. Traditional graph neural networks often identify correlations within data, but struggle with scenarios requiring an understanding of underlying cause-and-effect relationships. By explicitly modeling causal connections, CCAGNN achieves not only improved accuracy, reaching state-of-the-art results on benchmarks like Cora, Citeseer, and PubMed, but also enhanced reliability. This suggests that incorporating causal inference isn’t merely a refinement of existing techniques, but a fundamental shift toward more robust and generalizable graph-based models, with potential applications extending far beyond citation networks to areas like recommendation systems, social network analysis, and knowledge discovery.

The pursuit of CCAGNN, as detailed in this work, echoes a familiar pattern. The model doesn’t simply classify graphs; it attempts to model the underlying causal structure, a delicate ecosystem of influence. This mirrors the inherent instability of any complex system – a belief in perfect architectural solutions is, invariably, a denial of entropy. Arthur C. Clarke observed, “Any sufficiently advanced technology is indistinguishable from magic.” CCAGNN, with its counterfactual interventions and disentanglement of causal features, aims to move beyond mere correlation, approaching a deeper understanding of the ‘magic’ at play within graph data, knowing full well that even the most robust model will eventually succumb to the inevitable decay of its predictive power.

What Lies Ahead?

The pursuit of causal classification within graph neural networks, as exemplified by this work, is less a construction project and more an exercise in carefully applied delay. CCAGNN, with its focus on disentangling features through intervention, does not solve the problem of spurious correlation; it merely shifts the point of failure. The architecture postpones chaos, offering a temporary reprieve before the inevitable emergence of unforeseen confounders and distributional shifts. It is a necessary postponement, certainly, but one that should not be mistaken for resolution.

Future iterations will undoubtedly focus on expanding the scope of interventions – moving beyond node-level manipulations to consider more complex, system-wide perturbations. However, the fundamental challenge remains: the world is not static. Any model of causality is, by definition, a snapshot – a fleeting approximation of a dynamic system. There are no best practices, only survivors – those architectures capable of gracefully degrading in the face of inevitable model drift.

The true frontier lies not in perfecting the estimation of causal effects, but in embracing the inherent uncertainty. Order is just cache between two outages. The next generation of these models will need to incorporate mechanisms for continuous self-assessment, adaptive intervention strategies, and, crucially, the ability to recognize – and gracefully accommodate – their own limitations. The goal isn’t a perfect map, but a resilient explorer.

Original article: https://arxiv.org/pdf/2602.17941.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-23 18:17