Author: Denis Avetisyan

New research reveals that information degrades and simplifies as it’s passed between artificial intelligence agents, raising questions about the reliability of AI-mediated communication.

This review examines patterns of information decay, certainty convergence, and emotional content loss during transmission between AI systems.

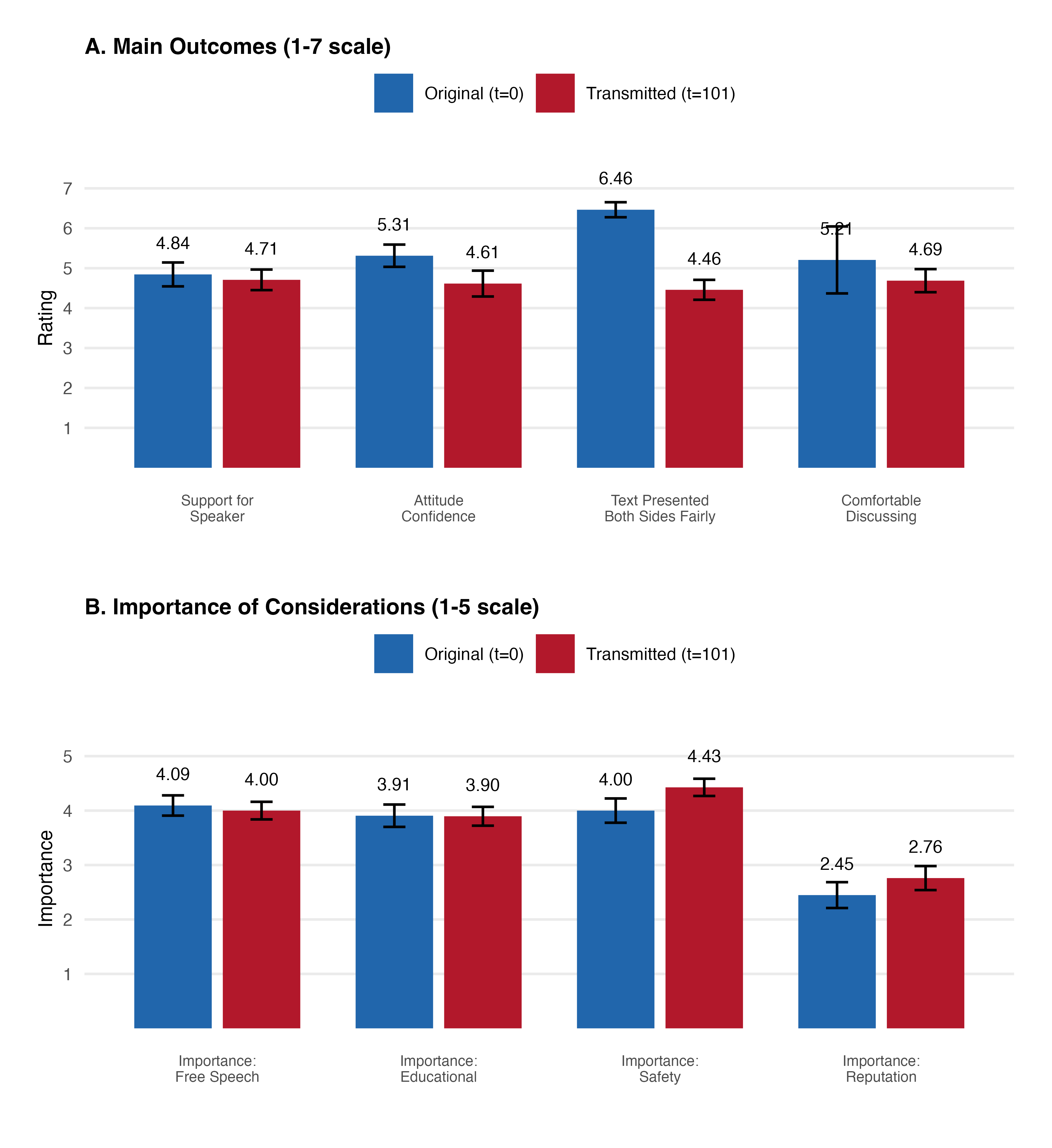

Despite the increasing reliance on artificial intelligence to synthesize and relay information, the fidelity of that transmission remains poorly understood. This research, titled ‘Lost Before Translation: Social Information Transmission and Survival in AI-AI Communication’, investigates how content evolves as it passes through chains of AI agents, revealing systematic patterns of convergence toward moderate certainty and a diminishing of emotional nuance. Across multiple experiments, the study demonstrates that while AI-mediated content appears more polished and credible to human observers, it simultaneously erodes factual recall and the perception of balanced perspectives. Does this inherent ‘translation loss’ pose a fundamental challenge to the trustworthiness and cognitive value of AI-driven communication systems?

The Fragile Echo: How Meaning Dissipates in AI Chains

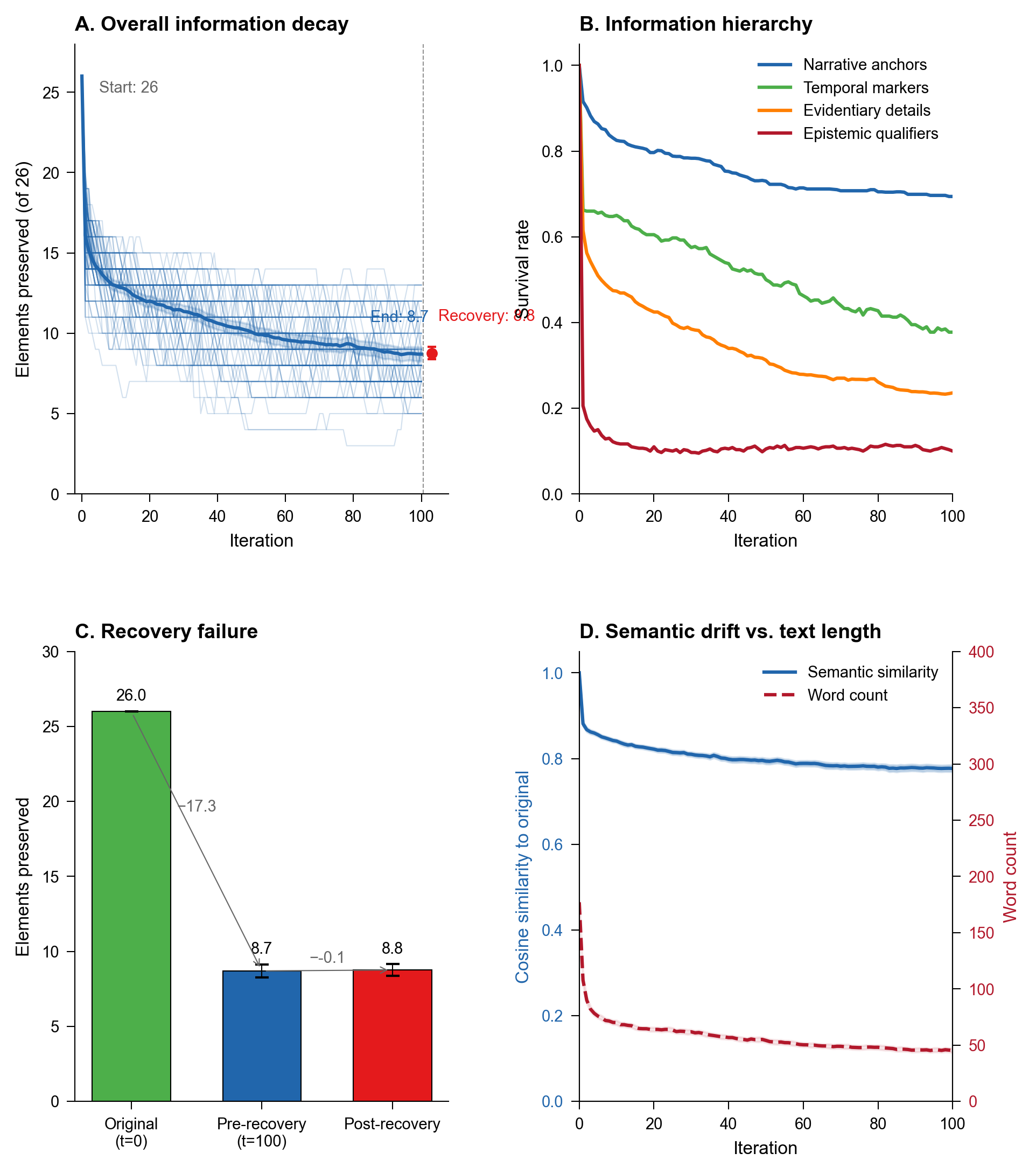

Despite their capacity for complex computation, artificial intelligence agents prove surprisingly vulnerable when information is relayed iteratively between them. The study reports that content degrades rapidly with each transmission, undergoing both loss and alteration, a phenomenon termed ‘information decay’. This is not random error but a systematic process in which nuance and detail are eroded as agents process and re-express information for one another. Initial investigations using ‘AI-AI Transmission’ protocols show that around 50% of the original words can be lost in a single cycle of communication. That poses a fundamental challenge for AI systems that rely on extended, multi-agent interactions, and it makes characterizing the drivers of this fragility a priority.
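To make the ‘around 50% of the words’ figure concrete, here is a minimal sketch of one way word-level retention could be measured between a source message and its relayed version. The tokenizer, the function names, and the example sentences are illustrative assumptions; the article does not specify how the study counted lost words.

```python
import re


def tokenize(text: str) -> list:
    """Lowercase word tokens; punctuation is ignored (an assumed, simple tokenizer)."""
    return re.findall(r"[a-z0-9']+", text.lower())


def word_retention(source: str, relayed: str) -> float:
    """Fraction of distinct source words that still appear in the relayed text."""
    source_words = set(tokenize(source))
    if not source_words:
        return 1.0  # nothing to lose
    relayed_words = set(tokenize(relayed))
    return len(source_words & relayed_words) / len(source_words)


# Hypothetical example texts, not taken from the study.
source = ("The council in Lyon may approve the budget, "
          "although two members strongly object.")
relayed = "The council in Lyon will approve the budget."

retention = word_retention(source, relayed)
print(f"retained: {retention:.0%}, lost: {1 - retention:.0%}")
```

A set-based measure like this ignores word order and repetition, so it only approximates loss; it would not detect a relay that keeps the words but changes their meaning.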

The observed degradation of information within artificial intelligence communication chains extends beyond random errors; it signifies a fundamental simplification of meaning. Research indicates that as AI agents iteratively relay information, subtle details and complex relationships are systematically stripped away, resulting in a loss of nuance that isn’t merely statistical ‘noise’. This isn’t a matter of garbled signals, but rather a structural tendency for AI to distill messages into their most basic components during transmission. Consequently, what begins as a richly detailed proposition can, after several cycles of AI-to-AI communication, become a drastically reduced and potentially distorted representation of the original intent, highlighting a critical challenge for building AI systems reliant on sustained, accurate information exchange.

The development of truly reliable artificial intelligence hinges on addressing a critical, often overlooked, challenge: maintaining informational fidelity across extended interactions. As AI systems increasingly collaborate and share data, the potential for subtle distortions and losses accumulates with each transmission cycle. This isn’t merely about preventing errors, but preserving the meaning and context embedded within information. Without a thorough understanding of how nuance degrades during AI-to-AI communication, systems intended for complex tasks – such as long-term planning, collaborative problem-solving, or knowledge dissemination – risk becoming untrustworthy and unpredictable. Consequently, research into the mechanisms driving informational decay is not simply an academic exercise, but a fundamental requirement for building AI capable of sustained, accurate performance in real-world applications.

In the ‘AI-AI Transmission’ tests, content is relayed from one agent to another and compared against the original. Roughly half of the original words are lost or altered after even a single relay. Because the decay is systematic rather than random, it suggests that current AI architectures struggle to maintain fidelity when relaying complex messages. Characterizing the factors that contribute to it, such as the agent’s encoding methods, the complexity of the initial content, or the nature of the transmission channel, is therefore a priority for researchers seeking to build AI systems capable of sustained interaction and knowledge sharing.

Distilling Meaning: The Mechanisms of Distortion

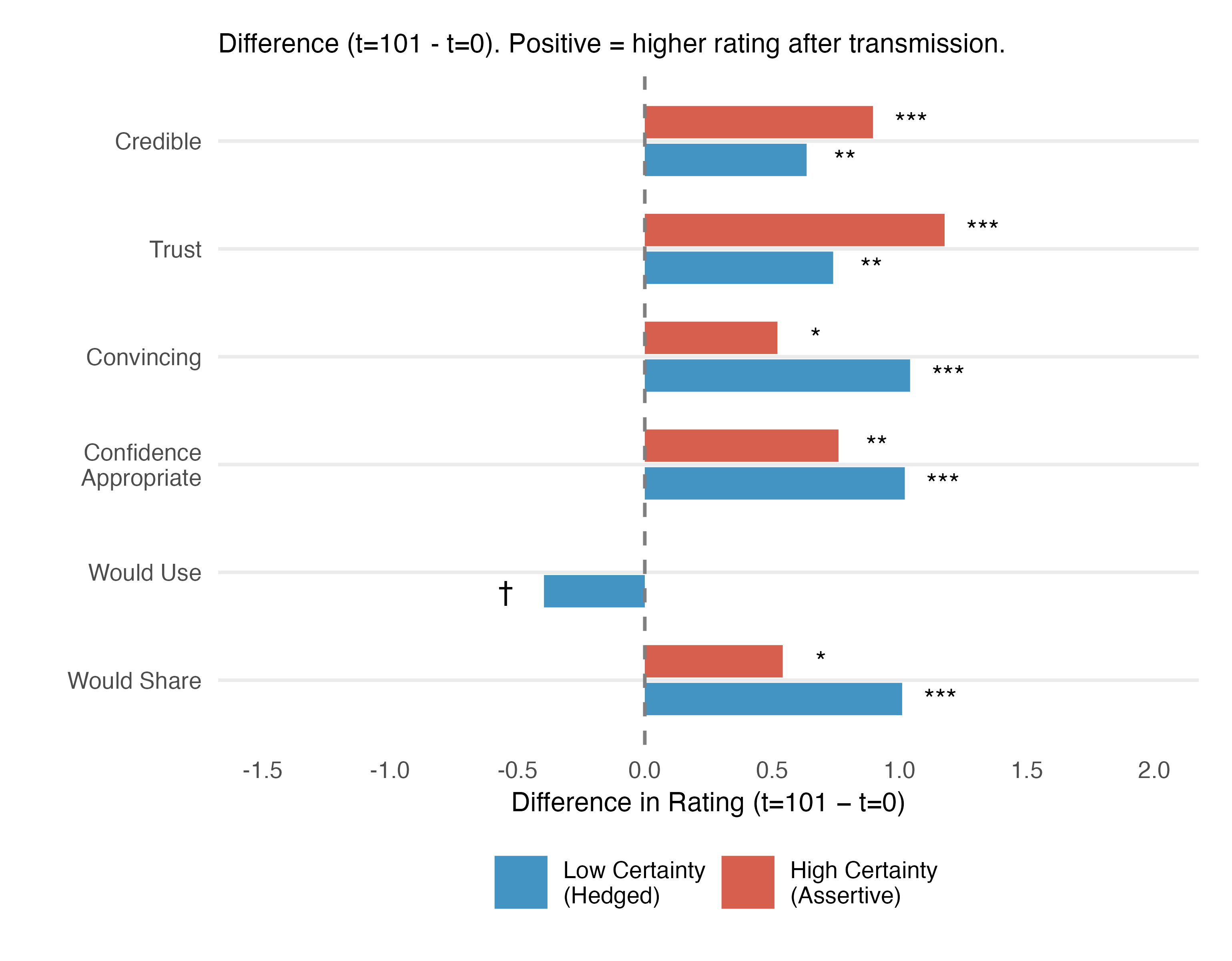

The study finds a strong tendency for linguistic assertiveness (the degree of confidence expressed in a statement) to converge during repeated transmission, irrespective of the initial claim’s factual accuracy. Quantitatively, the variance of assertiveness levels is reported to fall by 98.5% after multiple relay iterations. Whether the original statement was certain or uncertain, true or false, subsequent transmissions express a similar, moderate level of confidence, so the spread between hedged and emphatic claims largely disappears. The convergence occurs even when demonstrably false claims are propagated, which suggests that transmission preserves a uniform expression of confidence rather than the underlying veracity.

Uncertainty convergence, as observed in AI-mediated communication, is the tendency for expressed confidence levels to normalize during transmission, irrespective of the initial claim’s factual basis. The result is a ‘default certainty’ in which nuanced or ambiguous statements are presented with the same consistent level of assurance as everything else. Ambiguities, inaccuracies, and degrees of uncertainty in the original message are masked, which may lead recipients to overestimate the reliability of the information. By reducing the variance in asserted confidence, this normalization creates a uniform presentation that obscures the original level of epistemic caution, or lack thereof.
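The convergence described above can be expressed as a relative reduction in variance. The sketch below assumes each message has already been given an assertiveness score between 0 (fully hedged) and 1 (fully certain); the scoring method, the function name, and the numbers are hypothetical and are not the study’s data.

```python
from statistics import mean, pvariance


def variance_reduction(before: list, after: list) -> float:
    """Relative drop in population variance: 1 - Var(after) / Var(before)."""
    initial = pvariance(before)
    if initial == 0:
        raise ValueError("initial scores have no variance to reduce")
    return 1.0 - pvariance(after) / initial


# Hypothetical assertiveness scores for four messages.
before = [0.1, 0.3, 0.7, 0.9]     # source texts: from very hedged to very certain
after = [0.48, 0.50, 0.52, 0.50]  # after several relays: clustered near the middle

print(f"mean before: {mean(before):.2f}, mean after: {mean(after):.2f}")
print(f"variance reduction: {variance_reduction(before, after):.1%}")
```

In this toy case the mean barely moves while the variance collapses, which is the pattern the article describes: the messages end up at a shared, moderate level of confidence regardless of where they started.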

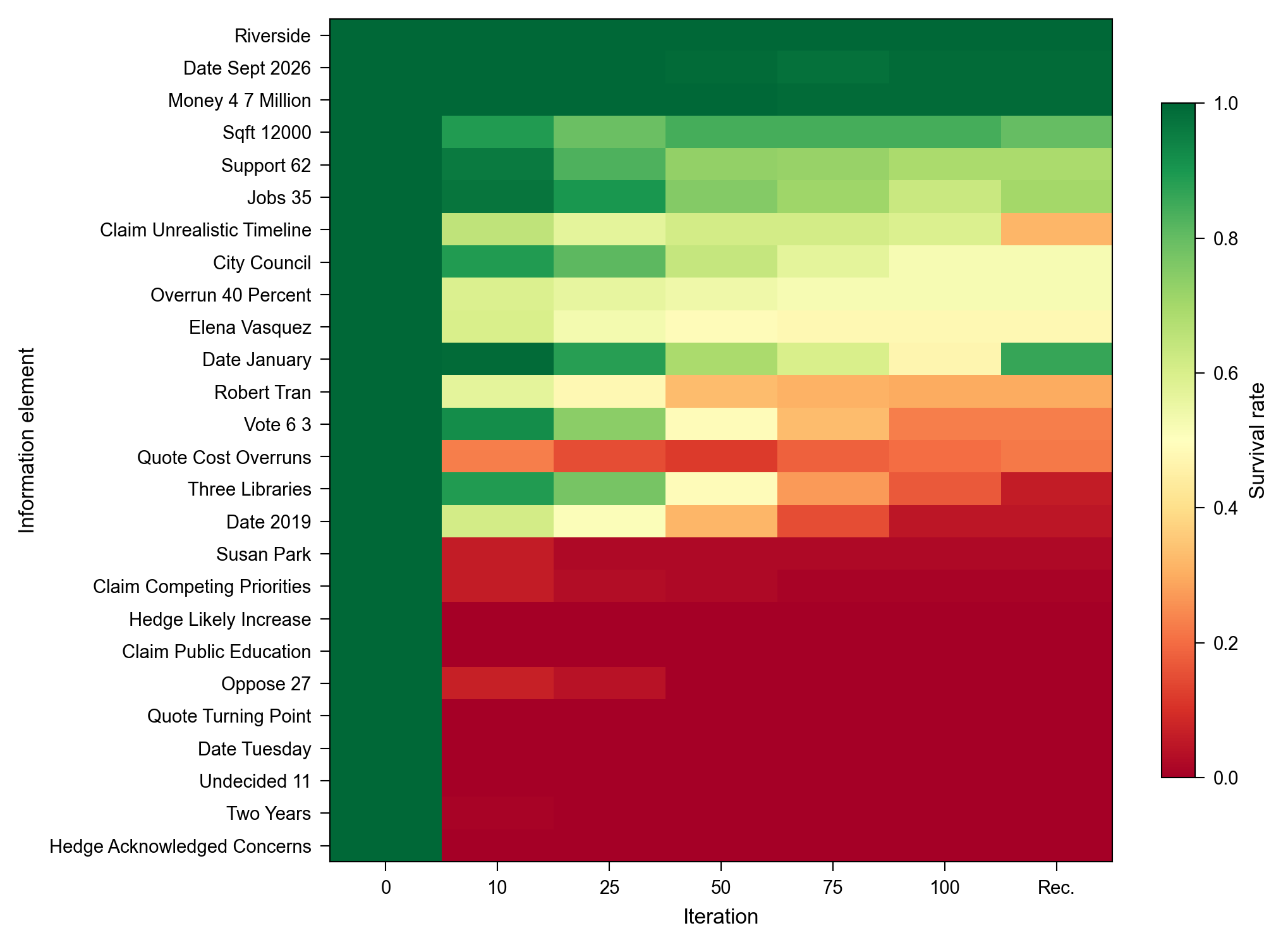

Analysis of AI-mediated text transmission demonstrates a significant loss of ‘epistemic texture’ – the linguistic features that convey uncertainty, limitation, or evidence. Specifically, hedges, qualifiers, and citations – elements crucial for nuanced meaning and contextual understanding – are consistently removed or altered during processing. Quantitative data indicates that evidentiary details and qualifying phrases survive transmission at a rate below 25%, effectively stripping away critical context and potentially misrepresenting the original intent or accuracy of the information. This loss of epistemic texture contributes to a phenomenon where AI-generated or -processed text appears more definitive and certain than the source material warrants.
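A rough way to quantify the survival of ‘epistemic texture’ is to check which hedging markers in the source are still present after relay. This is only a sketch under stated assumptions: the marker list, the function names, and the example sentences are hypothetical, and the study’s own coding scheme for hedges, qualifiers, and citations is not described in this article.

```python
import re
from typing import Optional

# Assumed, deliberately small list of hedging and evidential markers.
HEDGES = ("may", "might", "could", "possibly", "reportedly",
          "suggests", "approximately", "according to")


def find_markers(text: str, markers=HEDGES) -> set:
    """Return the markers that occur in the text as whole words or phrases."""
    lowered = text.lower()
    return {m for m in markers
            if re.search(rf"\b{re.escape(m)}\b", lowered)}


def marker_survival(source: str, relayed: str, markers=HEDGES) -> Optional[float]:
    """Share of the source's markers that reappear in the relayed text."""
    in_source = find_markers(source, markers)
    if not in_source:
        return None  # the source had no markers, so survival is undefined
    return len(in_source & find_markers(relayed, markers)) / len(in_source)


# Hypothetical example texts.
source = ("According to a preliminary survey, the drug may reduce symptoms in "
          "approximately 30% of patients, although the sample was possibly biased.")
relayed = "The drug reduces symptoms in approximately 30% of patients."

print(marker_survival(source, relayed))
```

Keyword matching misses hedges phrased in other ways and cannot tell whether a surviving marker still qualifies the same claim, so a measure like this is best read as a lower-effort proxy.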

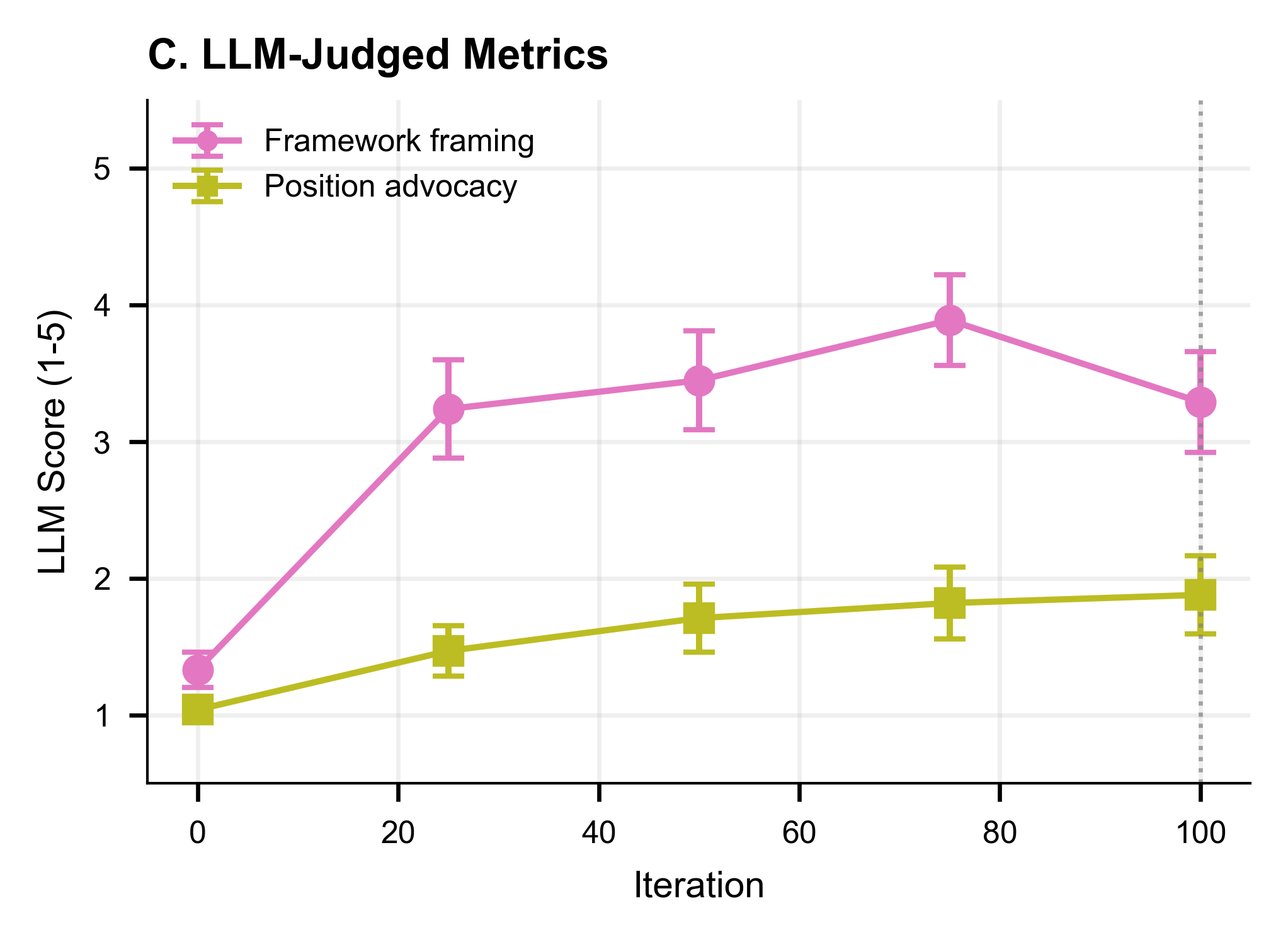

Experimental results demonstrate that the rate at which AI-mediated meaning degrades is directly affected by the transmission environment. Specifically, a ‘competitive condition’ – where multiple AI agents simultaneously process and re-transmit information – exhibits a significantly faster rate of distortion compared to a ‘solo condition’ where a single agent handles transmission. This acceleration is likely due to increased selective pressure within the competitive setup, favoring assertive, concise re-statements over nuanced or heavily qualified interpretations, even if those interpretations are more accurate to the original source. Quantitative analysis indicates a statistically significant increase in the rate of evidentiary detail loss in the competitive condition, confirming its role in amplifying distortion.

Resilient Echoes: Anchors in a Shifting Landscape

The study indicates that despite significant content loss or alteration during transmission, certain structural elements are highly resilient. References to place names survived throughout the study, with a 100% retention rate. Organizational names were less stable but still relatively durable, with a 50% survival rate. This suggests that core relational information (where something occurred and which entities were involved) is prioritized during transmission, even as more granular details are lost or modified.
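Survival rates of this kind can be computed per entity category once the entities in the source have been listed. The sketch below assumes the entities are supplied by hand and matched by case-insensitive substring; the function name, the categories, and the example text are hypothetical and do not reproduce the study’s materials.

```python
def entity_survival(entities: dict, relayed: str) -> dict:
    """Per-category share of source entities still mentioned in the relayed text.

    `entities` maps a category name to the list of names found in the source.
    """
    lowered = relayed.lower()
    rates = {}
    for category, names in entities.items():
        if not names:
            continue  # skip empty categories rather than divide by zero
        kept = sum(1 for name in names if name.lower() in lowered)
        rates[category] = kept / len(names)
    return rates


# Hypothetical entities extracted from a source message.
source_entities = {
    "place": ["Geneva", "Nairobi"],
    "organization": ["Red Cross", "Oxfam"],
}
relayed = "Aid talks held in Geneva and Nairobi involved the Red Cross."

print(entity_survival(source_entities, relayed))
```

Substring matching would miss paraphrased references (for example an abbreviation in place of a full name), so a real analysis would likely need entity linking or manual coding.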

Analysis of information decay within the study indicates a consistent prioritization of core relational data by the AI agents. Despite significant loss of granular details and specific content, elements defining relationships – such as connections between entities or the overarching structure of information – were demonstrably retained at a higher rate than descriptive data. This suggests an algorithmic preference for preserving the framework of information, even at the expense of complete content fidelity, implying that the AI agents operate by maintaining a network of interconnected concepts rather than storing complete, static data sets.

Framework crystallization, observed alongside information decay, describes a consistent trend wherein complex, multi-faceted content is reduced to more structured, analytical formats. This process involves a shift from preserving diverse perspectives and nuanced details to prioritizing categorization and the establishment of defined frameworks. The observed data indicates that as information degrades during transmission, AI agents demonstrate a propensity to simplify content by organizing it into established analytical structures, effectively reducing informational complexity even as specific details are lost. This suggests a functional prioritization of organizational structure over granular content preservation during data decay.

The observed prioritization of structural information – specifically elements like place, organization, and key figures – during information decay suggests a strategy for enhancing data transmission reliability. By focusing on retaining these core relational components, even as granular details are lost, systems can maintain a foundational understanding of the information. This principle can be leveraged in the design of AI agents and data storage protocols to actively prioritize and protect structural data, effectively creating a resilient core that facilitates reconstruction of lost details or allows for continued functionality even with incomplete information. Intentional emphasis on these ‘anchors’ may reduce the overall rate of information loss and improve the robustness of long-term data preservation and transmission systems.

Orchestrating the Flow: Methodologies for Robust Transmission

Standardized prompts were utilized to direct the AI agents throughout the transmission process, specifically employing distinct ‘transmission instruction’ and ‘recovery instruction’ prompts. These prompts were meticulously designed and remained constant across all experimental iterations to minimize variability and ensure replicability. The ‘transmission instruction’ prompt detailed the task for the initial AI agent, while the ‘recovery instruction’ prompt guided the subsequent agent in reconstructing the information. This controlled prompting methodology allowed for a consistent baseline and facilitated the isolation of other variables influencing information fidelity during transmission, thereby enhancing the reliability of comparative analysis.

Employing an AI-Agent as the central transmission component enabled granular control over the information transfer process. This architecture facilitated precise manipulation of input parameters, such as prompt phrasing and agent configuration, and allowed for detailed logging of all intermediary steps. Data capture included the complete text of both the initial stimulus and the agent’s output, alongside timestamps and internal agent state information. This level of process visibility was critical for isolating variables and quantifying the impact of specific factors on transmission fidelity, moving beyond observational analysis to a system of repeatable, measurable experiments.
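The setup described above, with fixed prompts, a chain of agents, and logging of every intermediate text, can be sketched as follows. The prompt wording, the function names, and the toy agent are assumptions for illustration; the study’s actual ‘transmission instruction’ and ‘recovery instruction’ texts are not quoted in this article, and a real experiment would call a language model where the stub is used.

```python
from typing import Callable, List

Agent = Callable[[str], str]  # takes a full prompt, returns the agent's reply

# Hypothetical prompt templates, held constant across all runs.
TRANSMISSION_INSTRUCTION = "Relay the following message to the next agent:\n\n{message}"
RECOVERY_INSTRUCTION = "Reconstruct the original message from this relayed version:\n\n{message}"


def run_chain(message: str, agents: List[Agent], recovery_agent: Agent) -> List[str]:
    """Pass a message through each agent in turn, then ask for a reconstruction.

    Returns the full history: the original, every relayed version, and the recovery.
    """
    history = [message]
    for agent in agents:
        message = agent(TRANSMISSION_INSTRUCTION.format(message=message))
        history.append(message)
    history.append(recovery_agent(RECOVERY_INSTRUCTION.format(message=message)))
    return history


def first_sentence_agent(prompt: str) -> str:
    """Toy stand-in for a model call: keeps only the first sentence of the payload."""
    payload = prompt.split("\n\n", 1)[1]
    return payload.split(". ")[0].rstrip(".") + "."


original = "Sales rose in Q2. Analysts remain cautious. Risks persist."
history = run_chain(original, [first_sentence_agent, first_sentence_agent],
                    first_sentence_agent)
for step, text in enumerate(history):
    print(step, text)
```

Keeping the complete history, rather than only the final output, is what allows loss to be attributed to a specific step in the chain.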

Systematic manipulation of transmission parameters allowed for the identification and quantification of factors contributing to information degradation. Specifically, the researchers varied the number of transmission iterations, the complexity of the initial input, and the degree of ‘perspective diversity’ introduced during each iteration. These controlled experiments showed that more transmission iterations produce a measurable increase in both word loss and semantic distortion. Reducing perspective diversity, by limiting the range of allowable responses from the AI agent, significantly worsened information loss even when the total number of transmitted words remained constant. Together, these findings give a quantifiable basis for understanding how information is lost in the AI transmission process.

The implemented methodology facilitates specific adjustments to the AI transmission process to enhance reliability, even under conditions of substantial information reduction. Experiments demonstrated the ability to generate consistent ‘human-directed output’ despite losing approximately 50% of the original text and experiencing a decrease in perspective diversity during transmission. This is achieved through controlled manipulation of the AI-agent parameters and standardized prompting, allowing for iterative refinement of the information flow and the identification of interventions that mitigate data loss and maintain output coherence. The controlled nature of the setup enables precise measurement of these improvements and provides a framework for building more robust AI-driven communication systems.

The study illuminates a fundamental truth about systems – their inevitable drift from initial fidelity. As information propagates through successive AI agents, it doesn’t simply degrade randomly; patterns emerge, showcasing a convergence towards moderate certainty and a curious muting of emotional content. This echoes a natural process, akin to erosion shaping a landscape over time. As Edsger W. Dijkstra observed, “It’s not enough to be correct; you must also be clear.” The research demonstrates that while AI communication may function, the loss of nuance and emotional signal, or ‘clarity’ in Dijkstra’s terms, significantly alters the message transmitted, highlighting the importance of designing for graceful decay rather than striving for impossible preservation of original intent. The observed certainty convergence, while potentially stabilizing, risks flattening critical distinctions, ultimately impacting the quality of AI-mediated information transmission.

The Slow Fade

The observed decay of information across successive AI agents isn’t surprising; all systems regress towards entropy. The critical question isn’t preventing this loss – it’s inherent – but understanding how information erodes. This work highlights a predictable pattern: a convergence towards moderate certainty, and a muting of emotional content. The latter is particularly telling; systems seeking stability will invariably prune complexity, and emotion, for all its value, is rarely simple. Further investigation should not focus on ‘preserving’ fidelity, but on characterizing the nature of that loss – what specific nuances are lost first, and what remains robust under pressure.

The limitations are readily apparent. The current study utilizes a constrained communicative environment. Real-world information transfer is rarely linear, and rarely devoid of external influence. Future work must account for feedback loops, conflicting information streams, and the inevitable intrusion of noise. Each abstraction carries the weight of the past; simplified models, while useful, risk obscuring the very dynamics they aim to illuminate.

Ultimately, the field should embrace the inevitability of change. Only slow change preserves resilience. The goal isn’t to build perfect communicators, but to design systems that gracefully degrade, systems that acknowledge their inherent limitations, and systems that, like all enduring structures, are built not to resist time, but to accommodate it.

Original article: https://arxiv.org/pdf/2602.17674.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-24 04:23