Author: Denis Avetisyan

A new framework integrates blockchain consensus with federated learning to create a robust and trustworthy decentralized AI system.

Resilient Federated Chain leverages Byzantine fault tolerance and robust aggregation to mitigate adversarial threats in federated learning environments.

While Federated Learning offers a promising path toward privacy-preserving, decentralized AI, its susceptibility to adversarial attacks remains a critical vulnerability. This paper introduces ‘Resilient Federated Chain: Transforming Blockchain Consensus into an Active Defense Layer for Federated Learning’, a framework that turns blockchain consensus into an active defense layer for model training. It does so by repurposing the redundancy within a Proof of Federated Learning architecture and incorporating a flexible evaluation function. According to the authors, Resilient Federated Chain (RFC) improves resilience against several attack strategies. Could this approach unlock a new era of truly trustworthy and secure decentralized learning environments?

Unveiling the Cracks: Federated Learning and the Allure of Interference

Federated learning represents a fundamental shift in machine learning practices, moving away from the traditional requirement of centralized datasets. Instead of bringing data to a model, this innovative approach brings the model to the data, enabling collaborative training across numerous decentralized devices – such as smartphones or hospitals – without the direct exchange of sensitive information. This paradigm is particularly crucial in scenarios where data privacy is paramount, or data is naturally distributed and difficult to consolidate due to logistical or regulatory constraints. By performing computations locally and only sharing model updates – rather than the raw data itself – federated learning aims to unlock the potential of vast, previously inaccessible datasets, fostering advancements in fields ranging from healthcare and finance to personalized technology, all while respecting user privacy and data sovereignty.
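To make the "share updates, not data" idea concrete, here is a minimal sketch of the averaging step used by Federated Averaging (FedAvg), the baseline the article compares against later. The function name `fed_avg`, the toy vectors, and the sample counts are illustrative assumptions, not code from the paper.

```python
from typing import List, Sequence


def fed_avg(updates: Sequence[Sequence[float]],
            num_samples: Sequence[int]) -> List[float]:
    """Average client parameter vectors, weighted by local sample count."""
    if not updates or len(updates) != len(num_samples):
        raise ValueError("need exactly one sample count per update")
    total = sum(num_samples)
    dim = len(updates[0])
    return [
        sum(u[j] * n for u, n in zip(updates, num_samples)) / total
        for j in range(dim)
    ]


# Hypothetical round: three clients send parameter vectors, never raw data.
client_updates = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
client_sizes = [10, 10, 20]
global_update = fed_avg(client_updates, client_sizes)
```

The server only ever sees `client_updates`. The training examples that produced them stay on the devices.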

The very architecture that defines federated learning – its distribution across numerous independent devices – simultaneously creates new avenues for malicious interference. This decentralized nature renders the system particularly vulnerable to Byzantine attacks, where compromised participants intentionally submit faulty updates to corrupt the global model. Equally concerning are backdoor attacks, where adversaries subtly manipulate local training data to embed hidden triggers within the resulting model, allowing them to control or extract information from it later. Unlike centralized systems where security can be enforced at a single point, the distributed nature of federated learning necessitates defenses at every participating node, dramatically increasing the complexity of ensuring model integrity and reliability. These attacks aren’t merely theoretical concerns; researchers have demonstrated successful implementations, highlighting the urgent need for robust security protocols specifically designed for this emerging paradigm.
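A small numeric sketch shows why a single Byzantine participant is enough to damage plain averaging. The values below are made up for illustration, and `mean_update` is a hypothetical helper rather than part of any framework.

```python
def mean_update(updates):
    """Unweighted coordinate-wise mean of a list of update vectors."""
    n = len(updates)
    return [sum(column) / n for column in zip(*updates)]


# Four honest clients agree roughly on the direction of the update.
honest = [[1.0, 1.0], [1.5, 0.5], [0.5, 1.5], [1.0, 1.0]]
# One compromised client submits an arbitrarily scaled, opposite update.
malicious = [-100.0, -100.0]

clean = mean_update(honest)
poisoned = mean_update(honest + [malicious])
```

With these numbers the honest mean is close to `[1.0, 1.0]`, while one attacker out of five pushes the aggregate to roughly `[-19.2, -19.2]`. The mean has no bound on how far a single outlier can move it.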

The established defenses for machine learning systems, largely built around securing centralized datasets and models, prove inadequate when applied to federated learning environments. This inadequacy stems from the distributed nature of the training process; traditional security perimeters dissolve as model updates, rather than raw data, traverse networks. Consequently, malicious actors can compromise the global model not through direct data breaches, but by injecting poisoned updates from compromised client devices. Addressing this vulnerability necessitates a shift towards novel security paradigms, including robust aggregation algorithms designed to detect and mitigate the impact of adversarial contributions, differential privacy techniques applied to model updates, and secure multi-party computation to ensure the integrity of the federated training process. These advanced approaches are crucial for establishing trust and reliability in federated learning systems, paving the way for secure and privacy-preserving collaborative intelligence.

The Art of Filtering: Robust Aggregation in a Hostile Landscape

Robust aggregation techniques, including Krum, Bulyan, and Geometric Median, limit the impact of adversarial clients in federated learning by replacing the plain average of model updates with a rule that is insensitive to outliers. Krum scores each update by the sum of squared distances to its nearest neighbors and selects the single update with the lowest score, so an update far from the honest cluster is never chosen. Bulyan applies a Krum-style selection repeatedly to build a candidate set, then aggregates that set with a coordinate-wise trimmed mean. Geometric Median computes the point that minimizes the sum of distances to all received updates, which bounds the pull of extreme values. These methods rest on the assumption that malicious clients submit updates substantially different from those of the honest majority, so suppressing outliers keeps the global model resistant to corruption.
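As an illustration of the selection idea, the following is a minimal pure-Python sketch of the standard Krum rule: each update is scored by the sum of squared distances to its `n - f - 2` nearest neighbors, and the lowest-scoring update wins. The parameter `f` (the assumed upper bound on malicious clients) and the toy data are assumptions for the example. This is not the RFC implementation.

```python
def sq_dist(a, b):
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def krum(updates, f):
    """Return the update with the lowest Krum score, tolerating up to f outliers."""
    n = len(updates)
    if n < 2 * f + 3:
        raise ValueError("Krum requires n >= 2f + 3")
    k = n - f - 2  # number of nearest neighbours that contribute to each score
    scores = []
    for i, u in enumerate(updates):
        dists = sorted(sq_dist(u, v) for j, v in enumerate(updates) if j != i)
        scores.append(sum(dists[:k]))
    best = min(range(n), key=scores.__getitem__)
    return list(updates[best])


updates = [[1.0, 1.0], [1.5, 0.5], [0.5, 1.5], [1.0, 1.0], [-100.0, -100.0]]
selected = krum(updates, f=1)
```

Here the poisoned vector has an enormous score because every neighbor is far away, so it cannot be selected. The cost is that the information in the other honest updates is discarded, which is one reason combined rules such as Bulyan exist.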

In statistical terms, these methods assume that updates reflecting genuine model improvements cluster around a central value, while adversarial or corrupted updates deviate significantly from it. Krum and Bulyan measure the distance between each update and its nearest neighbors and keep only the candidates that sit closest to that cluster. Geometric Median instead computes a central point that minimizes the aggregate distance to all updates, which down-weights outliers rather than excluding them outright. Either way, the influence of potentially harmful contributions is limited, protecting the global model from manipulation or degradation.
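The geometric median has no closed form, so it is usually approximated iteratively. Below is a minimal sketch using Weiszfeld's algorithm, a common choice for this problem. The iteration count, the tolerance `eps`, and the toy data are assumptions, and the paper may compute the median differently.

```python
import math


def geometric_median(points, iters=100, eps=1e-9):
    """Approximate the geometric median with Weiszfeld's iteration."""
    dim = len(points[0])
    # Start from the coordinate-wise mean.
    est = [sum(p[j] for p in points) / len(points) for j in range(dim)]
    for _ in range(iters):
        # Each point is weighted by the inverse of its distance to the estimate;
        # the distance is clamped to eps to avoid division by zero.
        weights = [1.0 / max(math.dist(est, p), eps) for p in points]
        total = sum(weights)
        new = [
            sum(w * p[j] for w, p in zip(weights, points)) / total
            for j in range(dim)
        ]
        if math.dist(new, est) < eps:
            return new
        est = new
    return est


updates = [[1.0, 1.0], [1.5, 0.5], [0.5, 1.5], [1.0, 1.0], [-100.0, -100.0]]
gm = geometric_median(updates)
```

On this toy input the result stays near `[1.0, 1.0]`: the distant update receives a tiny weight instead of dragging the aggregate toward it, as it would with a plain mean.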

Although robust aggregation techniques like Krum, Bulyan, and Geometric Median demonstrate effectiveness in mitigating the impact of malicious updates from a subset of clients, their performance can degrade when facing sophisticated attack strategies or non-independent and identically distributed (Non-IID) data. Complex attacks may involve coordinated malicious behavior exceeding the simple outlier rejection capabilities of these methods. Furthermore, Non-IID data, where clients possess significantly different data distributions, introduces inherent variance in model updates; this variance can be mistaken for malicious activity, leading to the incorrect exclusion of legitimate updates and hindering model convergence. Consequently, research often focuses on combining these techniques with other defenses or adapting them to account for data heterogeneity to achieve improved resilience in real-world federated learning deployments.

Forging Resilience: The Resilient Federated Chain Architecture

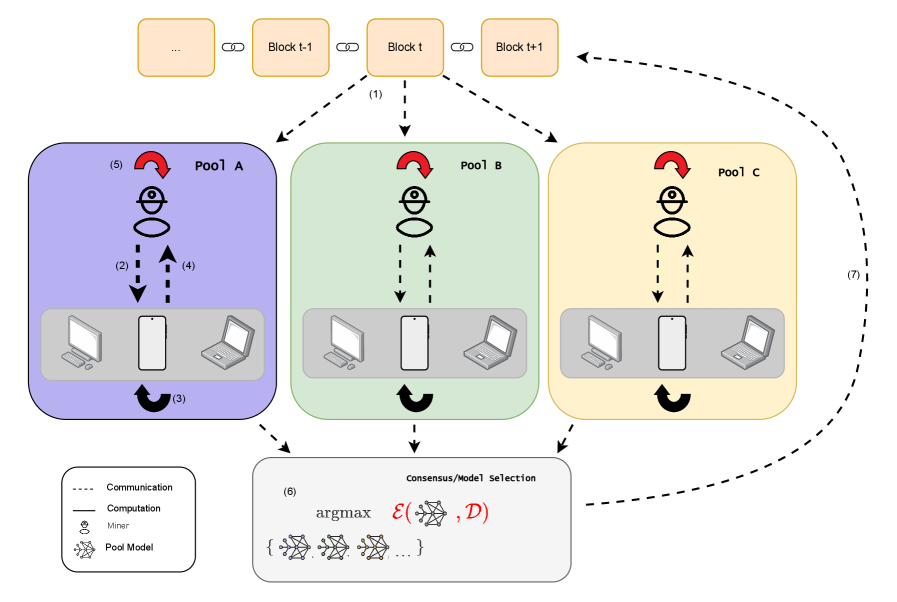

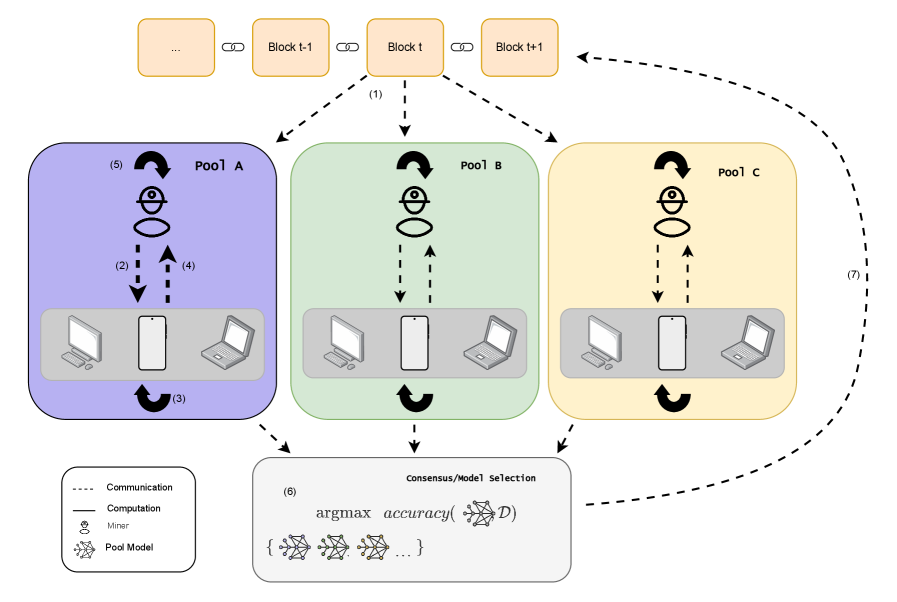

The Resilient Federated Chain (RFC) framework integrates robust aggregation techniques with the inherent security features of blockchain technology. Specifically, RFC utilizes a permissioned blockchain to record and validate contributions from participating federated learning pools. This blockchain implementation ensures data immutability and provides a transparent audit trail of model updates, preventing malicious actors from altering or suppressing legitimate contributions. By anchoring the aggregation process to a blockchain, RFC establishes a verifiable record of consensus, enhancing trust and accountability within the federated learning system. This combination of robust aggregation and blockchain-based security provides a more resilient and reliable foundation for collaborative model training compared to traditional centralized or non-blockchain federated learning approaches.
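The immutability and audit-trail properties can be illustrated with a toy hash-chained ledger. This is a generic sketch of how chained hashes expose tampering, not the RFC ledger format. The field names (`round`, `pool`, `update_digest`) and helper functions are hypothetical.

```python
import hashlib
import json


def block_hash(index, prev_hash, payload):
    """Hash a block's contents together with the previous block's hash."""
    body = json.dumps(
        {"index": index, "prev": prev_hash, "payload": payload}, sort_keys=True
    )
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_block(chain, payload):
    """Append a payload, linking it to the current chain tip."""
    prev_hash = chain[-1]["hash"] if chain else "0" * 64
    index = len(chain)
    chain.append({
        "index": index,
        "prev": prev_hash,
        "payload": payload,
        "hash": block_hash(index, prev_hash, payload),
    })


def verify_chain(chain):
    """Recompute every hash and link; any edit to an earlier block is detected."""
    prev_hash = "0" * 64
    for i, block in enumerate(chain):
        if block["index"] != i or block["prev"] != prev_hash:
            return False
        if block["hash"] != block_hash(i, prev_hash, block["payload"]):
            return False
        prev_hash = block["hash"]
    return True


ledger = []
append_block(ledger, {"round": 1, "pool": "pool-a", "update_digest": "ab12"})
append_block(ledger, {"round": 2, "pool": "pool-b", "update_digest": "cd34"})
valid_before = verify_chain(ledger)

ledger[0]["payload"]["update_digest"] = "ffff"  # simulate tampering
valid_after = verify_chain(ledger)
```

After the simulated edit, verification fails because the stored hash of the first block no longer matches its contents. This is the property that lets participants audit recorded model updates.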

The Resilient Federated Chain (RFC) framework addresses the single point of failure present in traditional centralized Federated Learning systems by implementing a Proof of Federated Learning (PoFL) consensus mechanism. In conventional setups, a central server is responsible for aggregating model updates, creating a vulnerability; compromise of this server halts the entire process. PoFL distributes consensus responsibility across multiple participating pools, requiring a supermajority to validate and commit aggregated updates to a shared blockchain. This decentralized validation process ensures system operation continues even if a subset of pools experiences compromise or failure, enhancing overall robustness and availability. The blockchain component provides an immutable record of model updates and consensus decisions, further strengthening the system’s resilience against manipulation and ensuring data integrity.
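The commit rule can be sketched as a simple vote tally. The article does not state the exact supermajority threshold, so the two-thirds value below is an assumption borrowed from common Byzantine fault tolerant protocols. The pool names and the function `supermajority_reached` are likewise hypothetical.

```python
from fractions import Fraction


def supermajority_reached(votes, threshold=Fraction(2, 3)):
    """Return True when strictly more than `threshold` of pools approve.

    `votes` maps a pool identifier to True (approve) or False (reject).
    The default threshold is an assumed value, not taken from the paper.
    """
    if not votes:
        return False
    approvals = sum(1 for approved in votes.values() if approved)
    return Fraction(approvals, len(votes)) > threshold


round_votes = {"pool-a": True, "pool-b": True, "pool-c": True,
               "pool-d": True, "pool-e": False}
commit = supermajority_reached(round_votes)

split_votes = {"pool-a": True, "pool-b": True, "pool-c": True,
               "pool-d": False, "pool-e": False}
rejected = not supermajority_reached(split_votes)
```

Using exact fractions avoids floating-point edge cases at the threshold. With five pools, four approvals commit the update while three do not, so one faulty or offline pool cannot block or force a commit on its own.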

The Resilient Federated Chain (RFC) architecture improves the robustness of Federated Learning systems under adversarial attack. The reported tests indicate that RFC maintains greater than 50% accuracy even with a significant number of compromised participant pools. In contrast, standard Federated Averaging (FedAvg) and plain Proof of Federated Learning experience substantial performance degradation under the same adversarial conditions: their accuracy declines rapidly as the proportion of malicious participants increases, whereas RFC’s blockchain-based aggregation and consensus mechanism mitigates the impact of compromised contributions, preserving a higher degree of model accuracy and reliability.

Resilient Federated Chain (RFC) also mitigates backdoor attacks in federated learning scenarios. Evaluations indicate that RFC achieves low loss rates when subjected to adversarial tasks designed to introduce backdoors into the global model. In contrast, the standard Federated Averaging (FedAvg) algorithm fails to defend against these attacks, resulting in substantially higher loss on the adversarial task and compromised model integrity. This improved resilience stems from RFC’s blockchain-based aggregation process, which verifies and validates model updates, filtering out malicious contributions intended to create backdoors.

Resilient Federated Chain (RFC) variants address the overfitting issues commonly observed when employing Krum and GeoMed aggregation rules in federated learning. Traditional implementations of these robust aggregation methods can exhibit instability and slow convergence due to susceptibility to model variance. RFC introduces mechanisms that regularize the aggregation process, reducing the impact of outlier models and promoting more stable updates. Comparative analysis demonstrates that RFC-integrated Krum and GeoMed achieve improved convergence rates and reduced generalization error compared to their non-RFC counterparts, leading to more reliable and accurate global models.

Beyond Security: Towards a Decentralized Future of Intelligence

The Resilient Federated Chain presents a novel architecture for artificial intelligence, moving beyond centralized models to foster collaborative learning while safeguarding sensitive data. This framework utilizes a distributed ledger, akin to a blockchain, to record model updates and contributions from various participants, ensuring transparency and immutability. Crucially, raw data remains localized, with only encrypted model parameters being shared, thereby preserving data privacy and minimizing the risk of breaches. The chain’s resilience stems from its ability to withstand malicious attacks or participant failures; consensus mechanisms validate contributions, and redundant data storage ensures system integrity. This approach not only enhances security but also empowers a broader range of stakeholders to participate in AI development, fostering innovation and democratizing access to advanced technologies.

The potential impact of a resilient, decentralized AI framework extends across numerous critical sectors. In healthcare, it promises to facilitate collaborative medical research and diagnostics while preserving patient data confidentiality – a crucial element for building trust and accelerating discoveries. Financial institutions could leverage this technology to detect fraudulent activities and assess risk more effectively, all without centralizing sensitive customer information. Perhaps most profoundly, autonomous systems – from self-driving vehicles to robotic surgery – stand to benefit from enhanced security and reliability, ensuring operational integrity even in the face of adversarial attacks or system failures, ultimately fostering greater public acceptance and widespread adoption of these transformative technologies.

Ongoing development of the Resilient Federated Chain prioritizes practical implementation through enhancements in several key areas. Researchers are actively investigating methods to improve the framework’s scalability, enabling it to accommodate increasingly large datasets and a greater number of participating nodes without performance degradation. Simultaneously, efforts are dedicated to minimizing the system’s energy footprint, crucial for deployment in resource-constrained environments and promoting sustainable AI practices. Perhaps most critically, the framework’s adaptability to novel attack vectors is under constant scrutiny; this involves developing proactive defense mechanisms and incorporating techniques from adversarial machine learning to ensure long-term security and resilience against evolving threats. These combined advancements aim to transition the Resilient Federated Chain from a promising theoretical model to a robust, efficient, and secure platform for decentralized artificial intelligence.

The pursuit of robust decentralized systems, as demonstrated by Resilient Federated Chain, inherently involves a degree of controlled demolition. This framework doesn’t merely accept the potential for Byzantine faults and adversarial attacks; it actively incorporates them into its defensive strategy. As Brian Kernighan observed, “Debugging is twice as hard as writing the code in the first place. Therefore, if you write the code as cleverly as possible, you are, by definition, not smart enough to debug it.” RFC takes a similar view of security: rather than relying on a design assumed to be flawless, it deliberately stress-tests its consensus mechanisms and aggregation techniques against simulated threats. The system’s resilience isn’t a byproduct of flawless design, but a consequence of proactively seeking and neutralizing points of failure, demonstrating an iterative approach to trustworthy AI.

Beyond the Chain: Future Directions

The presented Resilient Federated Chain, while a pragmatic fusion of established techniques, inevitably raises more questions than it answers. The immediate utility lies in hardening decentralized learning against known attacks – a reactive posture. However, true resilience isn’t about withstanding blows, but anticipating them. Byzantine fault tolerance tolerates arbitrary behavior only from a bounded fraction of participants; adversaries who exceed that bound, or who coordinate and adapt to the defense, could expose vulnerabilities. Future work should explore game-theoretic models of adversarial behavior, testing the limits of RFC’s defensive capabilities against strategic and unpredictable opponents.

Furthermore, the computational overhead of integrating blockchain consensus remains a significant constraint. While current implementations address immediate security concerns, scaling RFC to encompass genuinely large-scale, resource-constrained devices demands a radical rethinking of consensus mechanisms. Perhaps the solution doesn’t lie in more computation, but in intelligently distributing the burden, leveraging differential privacy and homomorphic encryption to minimize data exposure while maximizing efficiency.

Ultimately, the value of this approach isn’t simply building a more secure system, but understanding the fundamental limits of trust in decentralized environments. The aim should not be to create an impenetrable fortress, but a transparent one – a system where vulnerabilities are known, and their exploitation is detectable, allowing for rapid adaptation and a continuous cycle of refinement. Only by embracing this inherent fragility can genuinely robust and trustworthy AI be achieved.

Original article: https://arxiv.org/pdf/2602.21841.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-26 23:29