Echoes of Bias: How AI Safety Fades with Time and Language

New research reveals that the safety of large language models isn’t static, but diminishes as they encounter nuanced cultural contexts and older data.

New research introduces a method for optimizing language model behavior by carefully managing the inherent trade-off between harmlessness and helpfulness.

![FlowNP demonstrably surpasses TNP in modeling discontinuous functions, accurately capturing sharp transitions within random step functions. This capability is attributable to its ability to represent multimodal distributions, unlike TNP’s reliance on Gaussian autoregressive prediction, which inherently smooths such features, as evidenced by its performance at [latex] x=0 [/latex].](https://arxiv.org/html/2512.23853v1/figures/step3.png)

Researchers have developed a novel neural process model that leverages flow matching to generate and evaluate complex functions with improved efficiency and accuracy.

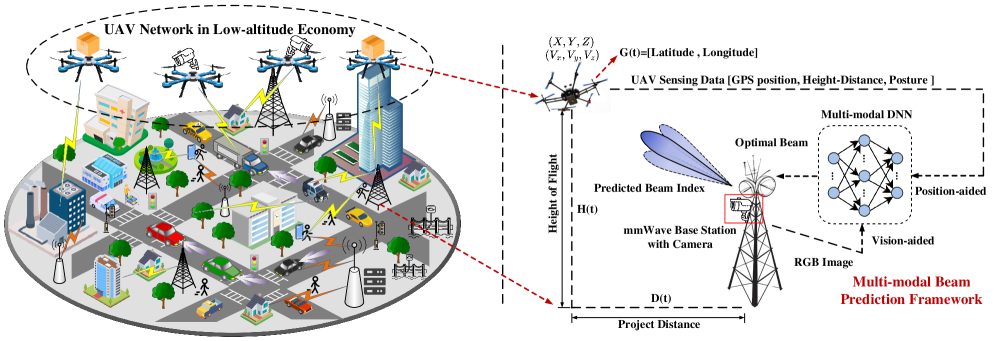

A new deep learning framework leverages multi-sensor data to significantly improve communication reliability for unmanned aerial vehicles in complex urban environments.

![The framework dissects signal generation into dual pathways: a Content Encoder extracting the core pathological factor [latex]Z_c[/latex] under physiological constraints, and a Style Encoder isolating non-causal background noise [latex]Z_s[/latex]. A Decoder then recombines them to ensure sufficient and statistically orthogonal reconstruction of the original signal.](https://arxiv.org/html/2512.24564v1/cpr_framework_optimized.png)

Researchers have developed a novel framework that leverages causal reasoning and physiological knowledge to build ECG analysis models resilient to real-world variations and malicious attacks.

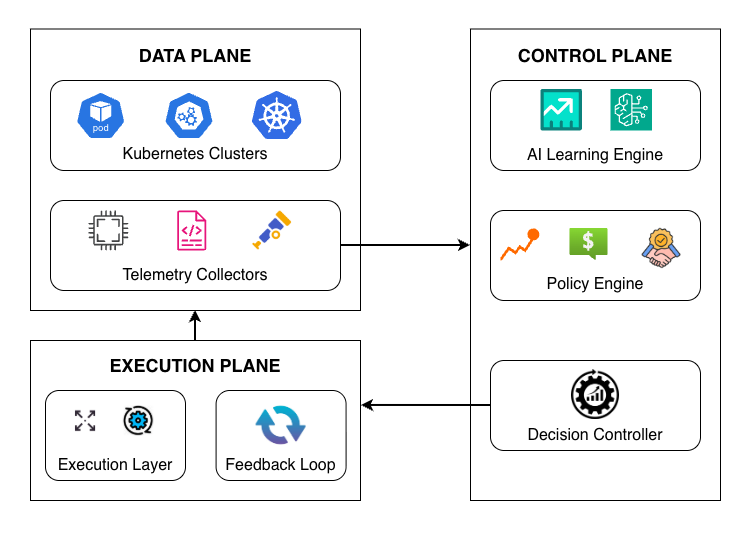

A new framework leverages artificial intelligence to dynamically optimize resource allocation across distributed cloud environments, boosting performance and reducing waste.

![The study dissects an ensemble learning model by removing individual components. The decrease from the original accuracy and F1-score ([latex]Acc\_Org[/latex], [latex]F1\_Org[/latex]) to the modified values ([latex]Acc[/latex], [latex]F1[/latex]) reveals the contribution of each element to overall performance, quantifying the system’s reliance on redundancy and specialized functions.](https://arxiv.org/html/2512.24772v1/ch4.png)

Researchers have developed a novel framework to improve the accuracy of depression detection in multiple languages, particularly languages with limited data.

A new framework automatically translates high-level threat descriptions into platform-specific queries for diverse security information and event management systems.

A diagnostic approach to understanding and correcting error dynamics (bias, noise, and alignment) promises more robust and interpretable machine learning systems.

![The study demonstrates how increasingly refined stochastic interpolation, using SINNOs with parameters [latex]n=5, 10, 20, 50[/latex], can approximate the Ornstein-Uhlenbeck process, revealing that even simple numerical methods can converge towards the true stochastic path with sufficient refinement.](https://arxiv.org/html/2512.24106v1/SINNOsapprox.png)

Researchers have developed a new approach to accurately simulate and predict stochastic processes using a novel type of neural network operator.