Author: Denis Avetisyan

Artificial intelligence is transforming network defense, but its performance isn’t guaranteed in the face of evolving threats and real-world data challenges.

A comprehensive review reveals the vulnerabilities of AI-powered intrusion detection and side-channel analysis to data distribution shifts and adversarial attacks, emphasizing the need for robust and adaptive machine learning models.

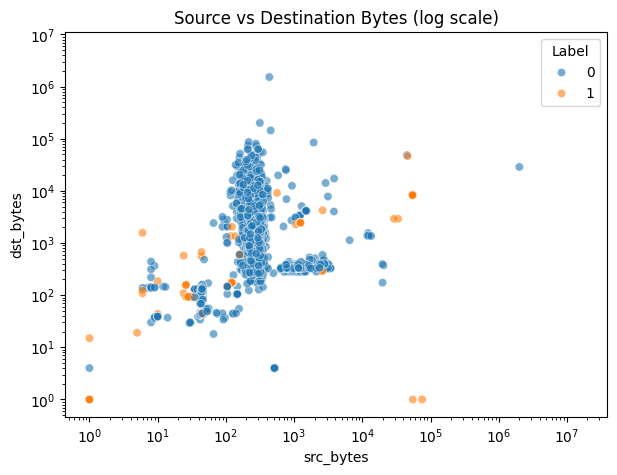

Despite advances in cybersecurity, reliably defending against evolving threats remains a persistent challenge. The paper ‘Understanding AI Methods for Intrusion Detection and Cryptographic Leakage’ addresses this by investigating the application of machine learning to both network intrusion detection and the identification of cryptographic implementation vulnerabilities. The results show that while AI models can achieve high accuracy in stable environments and even reveal patterns indicative of side-channel leakage, performance degrades significantly when confronted with shifting data distributions or adversarial manipulations. How, then, can we develop more robust and adaptive AI systems that remain effective against increasingly sophisticated attacks?

The Evolving Threat Landscape: A System in Decay

Digital infrastructure now contends with a relentless and escalating wave of cyberattacks that has moved beyond simple intrusions to highly complex, multi-stage operations. These attacks are increasing not only in frequency but also in sophistication, leveraging artificial intelligence, machine learning, and advanced evasion techniques to bypass traditional security measures. Contemporary threats often target critical systems such as energy grids, financial institutions, and healthcare providers, with the potential for widespread disruption and significant economic damage. Attackers also increasingly exploit supply chain vulnerabilities, abusing trusted relationships to gain access to target networks, and employ ransomware tactics that demand substantial payouts to restore data and functionality. This constant barrage necessitates a proactive, adaptive, and layered security approach to mitigate risk in the modern digital landscape.

Conventional cybersecurity systems, largely reliant on signature-based detection and static rule sets, are increasingly challenged by the speed and complexity of modern attacks. These established methods struggle to identify novel threats, particularly those leveraging polymorphic malware – malicious code that constantly alters its signature to evade detection. Attack vectors are also diversifying, moving beyond simple viruses to encompass sophisticated techniques like fileless malware, supply chain attacks, and exploits targeting zero-day vulnerabilities. This creates a significant gap in security, as defenses built on recognizing known threats are rendered less effective against rapidly evolving and previously unseen malicious activity. Consequently, organizations are facing a growing need for proactive, adaptive security solutions that can anticipate and neutralize threats before they cause damage.

The exponential growth of data traversing networks presents a significant hurdle in contemporary cybersecurity. Modern networks generate immense volumes of traffic, encompassing legitimate user activity, application data, and increasingly, malicious intrusions. This sheer scale overwhelms traditional security systems reliant on manual analysis or signature-based detection, creating a critical time lag between an attack’s inception and its identification. Security Information and Event Management (SIEM) systems, while designed to aggregate and analyze data, often struggle with ‘alert fatigue’ – generating a flood of warnings, many of which are false positives. Consequently, genuine threats can remain hidden within the noise, allowing attackers ample opportunity to compromise systems and exfiltrate data before defenders can react effectively. Advanced analytics, including machine learning, are being deployed to address this challenge, aiming to automatically prioritize alerts and identify anomalous patterns indicative of malicious activity, but the ongoing arms race between attackers and defenders necessitates continuous innovation in data processing and threat detection techniques.

Adaptive Defenses: The Rise of Machine Intelligence

Artificial Intelligence (AI) and Machine Learning (ML) are increasingly utilized to automate tasks previously requiring significant human analyst intervention in threat analysis and detection. ML algorithms process large datasets of network traffic, system logs, and file characteristics to identify indicators of compromise (IOCs) and anomalous behaviors. This automation reduces the time to detect threats and alleviates the burden on security teams. Specifically, ML enables the creation of systems capable of continuous monitoring and real-time analysis, improving the scalability and responsiveness of security operations. The technology moves beyond signature-based detection, adapting to novel and evolving threats without explicit programming for each new attack vector.

Machine learning (ML) algorithms improve upon traditional security systems by identifying malicious activity through data analysis rather than predefined rules. Rule-based systems rely on signatures of known threats and struggle with zero-day exploits or polymorphic malware. Conversely, ML algorithms are trained on datasets of both benign and malicious activity, allowing them to establish baselines of normal behavior and detect deviations indicative of threats. This approach enables the identification of previously unknown malware and adapts to evolving attack techniques. Furthermore, ML’s automated analysis significantly reduces the time required to detect and respond to incidents compared to manual review processes, increasing overall security efficacy and reducing analyst fatigue.
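To make the idea of a learned baseline concrete, here is a minimal sketch that fits per-feature statistics on benign traffic and flags large deviations. It is a deliberately simple stand-in for the ML models discussed here, not the method used in the paper; the feature names, sample values, and the `THRESHOLD` cut-off are all assumptions chosen for illustration.

```python
import statistics

def fit_baseline(benign_rows):
    """Learn the per-feature mean and standard deviation of benign traffic."""
    columns = list(zip(*benign_rows))
    means = [statistics.fmean(col) for col in columns]
    # Fall back to 1.0 when a feature never varies, so we never divide by zero.
    stdevs = [statistics.pstdev(col) or 1.0 for col in columns]
    return means, stdevs

def anomaly_score(row, baseline):
    """Largest absolute z-score across all features of one observation."""
    means, stdevs = baseline
    return max(abs(x - m) / s for x, m, s in zip(row, means, stdevs))

# Hypothetical features: (packets per second, mean payload size in bytes).
benign = [(100, 510), (110, 495), (95, 505), (105, 490), (90, 500)]
baseline = fit_baseline(benign)

THRESHOLD = 3.0  # assumed cut-off: more than three standard deviations is suspicious

def is_anomalous(row):
    return anomaly_score(row, baseline) > THRESHOLD

print(is_anomalous((102, 498)))  # close to the baseline
print(is_anomalous((900, 500)))  # sudden burst in packet rate
```

Nothing in this sketch names a specific attack: the second observation is flagged only because it deviates from what was learned as normal, which is the property that distinguishes this approach from signature matching.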

Deep Learning utilizes artificial neural networks with multiple layers – often termed “deep” networks – to analyze data and identify complex, non-linear relationships indicative of anomalous activity. These networks are trained on large datasets, enabling them to learn hierarchical representations of features; lower layers identify basic patterns, while higher layers combine these into more abstract concepts. This allows Deep Learning models to detect subtle anomalies that might be missed by traditional signature-based or even simpler Machine Learning algorithms, particularly in scenarios involving zero-day exploits or polymorphic malware where malicious code constantly changes its appearance. The effectiveness of Deep Learning is directly correlated to the size and quality of the training data and the architecture of the neural network itself, with convolutional and recurrent neural networks being commonly employed for security applications.

AI and machine learning techniques are integrated into several key defensive security systems. Malware classification leverages ML algorithms to analyze file characteristics and categorize threats based on learned patterns, automating the process of identifying malicious software. Threat analysis benefits from these technologies through automated data correlation and the identification of emerging attack campaigns. Critically, intrusion detection systems (IDS) utilize ML to establish baselines of normal network activity and flag deviations indicative of unauthorized access or malicious behavior, significantly improving detection rates and reducing false positives compared to signature-based approaches.

The Fragility of Intelligence: Adversarial Landscapes

Adversarial attacks threaten machine learning-based security systems with inputs intentionally crafted to circumvent detection. These attacks, which include techniques like feature manipulation, subtly alter input data such as network packets while preserving the underlying malicious intent. The manipulation exploits weaknesses in what the model has learned, causing misclassification or allowing the attack to go undetected. Feature manipulation specifically targets the most informative attributes used by the model, so a small change can have a disproportionate impact on detection rates. The reported results indicate that manipulating even a small number of top features can substantially reduce the effectiveness of AI-based intrusion detection, leading to a significant increase in missed attacks.
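The mechanics of feature manipulation can be sketched with a toy linear detector. Everything below is hypothetical: the weights, the threshold, the benign profile, and the attack sample are invented for illustration, and the detector is far simpler than the models evaluated in the paper.

```python
def detector_score(features, weights):
    """Toy linear detector: a weighted sum of feature values."""
    return sum(w * x for w, x in zip(weights, features))

def manipulate_top_k(sample, weights, benign_profile, k):
    """Overwrite the k most heavily weighted features with benign-looking values."""
    ranked = sorted(range(len(weights)), key=lambda i: abs(weights[i]), reverse=True)
    evasive = list(sample)
    for i in ranked[:k]:
        evasive[i] = benign_profile[i]
    return evasive

WEIGHTS = [2.0, 1.5, 0.2, 0.1]         # assumed feature importances
THRESHOLD = 2.0                        # assumed decision threshold
BENIGN_PROFILE = [0.1, 0.1, 0.1, 0.1]  # assumed typical benign values
attack = [0.9, 0.8, 0.7, 0.6]          # hypothetical attack sample

original = detector_score(attack, WEIGHTS)
evasive = manipulate_top_k(attack, WEIGHTS, BENIGN_PROFILE, k=1)
after = detector_score(evasive, WEIGHTS)

print(original > THRESHOLD)  # detected before manipulation
print(after > THRESHOLD)     # no longer detected after changing one feature
```

In this toy case, changing only the single most important feature is enough to push the score under the threshold. A real attacker is more constrained, since only features that can change without breaking the attack itself are available for manipulation.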

Data distribution shift represents a critical challenge for machine learning-based intrusion detection systems due to the evolving nature of network attacks. The statistical properties of attack traffic are rarely static; attackers continually adapt their techniques to evade detection, resulting in a divergence between the data used during model training and the data encountered during deployment. This shift can manifest as changes in feature values, the emergence of novel attack patterns, or alterations in the frequency of existing attacks. Consequently, models trained on historical data may exhibit significantly reduced performance when confronted with these altered data distributions, leading to increased false negatives and a diminished ability to accurately identify and respond to emerging threats. Observed performance on shifted testing data indicates approximately 70% detection accuracy, highlighting the substantial degradation caused by this phenomenon.
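A small numeric sketch shows why a boundary learned from historical data can fail once attack traffic moves. The single feature, all sample values, and the midpoint rule are assumptions made for illustration; they are not taken from the paper's experiments.

```python
import statistics

def fit_threshold(benign_values, attack_values):
    """Place the decision boundary midway between the two class means."""
    return (statistics.fmean(benign_values) + statistics.fmean(attack_values)) / 2

def detection_rate(attack_values, threshold):
    """Fraction of attack samples that score above the threshold."""
    return sum(v > threshold for v in attack_values) / len(attack_values)

# Hypothetical one-dimensional feature, e.g. connections per second.
train_benign = [10, 12, 11, 9, 13]
train_attack = [30, 28, 32, 31, 29]
threshold = fit_threshold(train_benign, train_attack)

same_distribution_attack = [29, 31, 30, 33, 27]  # resembles the training data
shifted_attack = [22, 19, 18, 24, 17]            # attackers now operate at a lower rate

print(detection_rate(same_distribution_attack, threshold))
print(detection_rate(shifted_attack, threshold))
```

The detector itself is unchanged between the two evaluations. Only the data moved, and the threshold that separated the classes cleanly during training now sits in the middle of the new attack traffic.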

The NSL-KDD and CIC-IDS datasets are commonly utilized for evaluating and enhancing the robustness of machine learning-based intrusion detection systems. These datasets are designed to mimic authentic network traffic, incorporating a diverse range of attack types and normal activity, allowing for realistic performance assessments. The NSL-KDD dataset addresses limitations found in the original KDD Cup 99 dataset, offering a reduced size and removal of redundant records. CIC-IDS, conversely, provides more contemporary and comprehensive traffic captures, including modern attack vectors and application protocols. Utilizing these datasets enables researchers and developers to benchmark model performance, identify vulnerabilities to evasion techniques, and iteratively improve the resilience of their security solutions against real-world threats.

Adversarial learning is a training methodology designed to improve the robustness of machine learning models against evasion attacks. This technique involves augmenting the training dataset with intentionally crafted adversarial examples – inputs specifically designed to cause the model to make incorrect predictions. By exposing the model to these challenging inputs during training, the model learns to identify and correctly classify them, effectively increasing its resilience to malicious inputs attempting to bypass security measures. This process encourages the model to focus on more robust features and avoid over-reliance on easily manipulated aspects of the input data, ultimately enhancing its ability to generalize to unseen, potentially adversarial, data.
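The augmentation step can be sketched with a nearest-centroid classifier, chosen here only because it fits in a few lines. The data points, the `perturb` evasion model, and the step size are hypothetical, and the paper's models and attack generation are not reproduced.

```python
import math
import statistics

def centroid(rows):
    """Mean point of a set of feature vectors."""
    return tuple(statistics.fmean(col) for col in zip(*rows))

def predict(row, benign_c, attack_c):
    """Assign the label of the nearer class centroid."""
    return "attack" if math.dist(row, attack_c) < math.dist(row, benign_c) else "benign"

def perturb(row, step):
    """Hypothetical evasion: the attacker can lower feature 0 but not feature 1."""
    return (row[0] - step, row[1])

benign = [(1.0, 1.0), (2.0, 1.0), (1.0, 2.0), (2.0, 2.0)]
attack = [(8.0, 8.0), (9.0, 8.0), (8.0, 9.0), (9.0, 9.0)]

benign_c = centroid(benign)
attack_c = centroid(attack)

# Adversarial training: add perturbed attack samples, still labeled as attacks.
adversarial = [perturb(row, step=6.0) for row in attack]
robust_attack_c = centroid(attack + adversarial)

evasive_sample = (2.0, 7.0)  # attack traffic with feature 0 disguised
print(predict(evasive_sample, benign_c, attack_c))         # standard model
print(predict(evasive_sample, benign_c, robust_attack_c))  # adversarially trained model
```

After augmentation the attack centroid moves toward the region the attacker can reach, so the model leans less on the easily manipulated feature and more on the one that stayed put.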

Evaluations of AI-based intrusion detection systems indicate a high baseline performance, with an area under the precision-recall curve (PR-AUC) of 0.9983 in controlled laboratory settings. However, this performance is demonstrably susceptible to adversarial attack. Manipulating the top 5 features in attack data reduced PR-AUC to 0.6148, and manipulating the top 10 lowered it further to 0.5790. Manipulating the top 20 features yielded 0.6307, slightly above the 5-feature result, so the degradation is not monotonic in the number of manipulated features. In all three cases the drop from the baseline is substantial, which highlights how vulnerable current AI-based security systems are to even limited feature manipulation within adversarial inputs.
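For readers unfamiliar with the metric, the sketch below computes average precision, one common step-wise estimate of PR-AUC. The source does not say which estimator the paper used, so treat this as an assumption. The labels and scores are invented and do not reproduce the reported figures.

```python
def average_precision(labels, scores):
    """Step-wise area under the precision-recall curve.

    labels: 1 for attack, 0 for benign. scores: higher means more suspicious.
    Tied scores are not treated specially in this sketch.
    """
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    total_positives = sum(labels)
    hits = 0
    precision_sum = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            precision_sum += hits / rank  # precision at this recall step
    return precision_sum / total_positives

labels = [1, 1, 0, 1, 0, 0]
clean_scores = [0.9, 0.8, 0.7, 0.6, 0.3, 0.1]         # attacks mostly ranked first
manipulated_scores = [0.9, 0.2, 0.7, 0.15, 0.3, 0.1]  # two attacks now score low

print(round(average_precision(labels, clean_scores), 4))
print(round(average_precision(labels, manipulated_scores), 4))
```

The metric depends only on how attacks are ranked relative to benign traffic. Once manipulated attacks sink below benign samples in the ranking, precision at each recall step falls and the area shrinks with it.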

When tested on data exhibiting distribution shift, the AI-based intrusion detection system reached only about 70% detection accuracy, a significant degradation when faced with previously unseen attack patterns. Feature manipulation attacks also resulted in over 41,000 missed attacks during testing. Together these results point to a substantial vulnerability: techniques that subtly alter input features allow malicious traffic to bypass detection and potentially compromise system security.

The Hidden Signals: Exploiting Physical Realities

Side-Channel Analysis represents a significant threat to cryptographic systems by moving beyond purely mathematical vulnerabilities and focusing on their physical implementations. Rather than attacking the algorithms themselves, these techniques exploit the inherent physical characteristics produced during computation – notably, variations in power consumption, electromagnetic radiation, and even timing differences. These emanations, though seemingly insignificant, correlate with the data being processed, effectively ‘leaking’ sensitive information like encryption keys. The premise relies on the fact that different operations within a cryptographic process require varying amounts of energy or time, creating measurable patterns that can be analyzed to deduce secret values. Consequently, even mathematically robust encryption algorithms become susceptible to compromise when considered alongside their physical footprint, demanding a holistic approach to security that addresses both software and hardware vulnerabilities.

Power analysis, a prominent side-channel attack, hinges on the principle that a device’s power consumption isn’t constant during computation, but fluctuates based on the data being processed. These subtle variations, often measured with high-resolution oscilloscopes, can reveal information about the secret key used in cryptographic operations. A core concept within this analysis is Hamming Weight – the number of ‘1’ bits in a binary value. Because operations on bits with different values require varying amounts of energy, the total Hamming Weight of intermediate values during encryption or decryption directly influences power consumption. By statistically analyzing numerous power traces collected during cryptographic operations, attackers can correlate these fluctuations with the Hamming Weight and, ultimately, deduce the secret key. This highlights that even a mathematically secure algorithm can be compromised through its physical implementation, making power analysis a significant threat to cryptographic systems.
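The correlation step can be illustrated with a small simulation. This sketch assumes a simplified leakage model in which measured power equals the Hamming Weight of plaintext XOR key plus Gaussian noise. The key byte, noise level, and trace count are invented, and practical attacks usually target a nonlinear intermediate such as an S-box output rather than a bare XOR.

```python
import random

def hamming_weight(value):
    """Number of 1 bits in a non-negative integer."""
    return bin(value).count("1")

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

def simulate_traces(key_byte, n_traces, noise_sigma, seed=0):
    """Assumed leakage model: power = HW(plaintext XOR key) + Gaussian noise."""
    rng = random.Random(seed)
    plaintexts = [rng.randrange(256) for _ in range(n_traces)]
    power = [hamming_weight(p ^ key_byte) + rng.gauss(0.0, noise_sigma)
             for p in plaintexts]
    return plaintexts, power

def recover_key_byte(plaintexts, power):
    """Return the key guess whose predicted Hamming Weights best match the power."""
    def score(guess):
        predicted = [hamming_weight(p ^ guess) for p in plaintexts]
        return pearson(predicted, power)
    return max(range(256), key=score)

SECRET = 0x3C  # hypothetical key byte, known only to the simulated device
plaintexts, power = simulate_traces(SECRET, n_traces=1000, noise_sigma=1.0)
print(hex(recover_key_byte(plaintexts, power)))
```

The attacker in this sketch never reads the key directly. Each of the 256 guesses predicts a different sequence of Hamming Weights, and only the correct guess predicts a sequence that tracks the noisy measurements closely.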

Despite the robust mathematical foundations of modern encryption standards, including AES Encryption, ECC Encryption, and Diffie-Hellman Key Exchange, implementations are susceptible to compromise through side-channel attacks. These attacks don’t target flaws in the algorithms themselves, but rather exploit the physical characteristics of the systems executing them. Variations in power consumption, electromagnetic radiation, or even processing time, all correlated to the data being processed, can leak sensitive information to an attacker. This means a carefully crafted side-channel analysis, monitoring these physical emanations, can reveal secret keys or plaintext, effectively breaking the encryption without needing to solve the underlying mathematical problem. Consequently, even mathematically secure algorithms require diligent hardware and software countermeasures to prevent these practical, implementation-level vulnerabilities.
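Timing leakage and one software countermeasure can be shown in a few lines. The sketch below contrasts an early-exit comparison, instrumented to report how many bytes it examined, with Python's `hmac.compare_digest`, which is designed to avoid content-dependent timing. The secret and the guesses are hypothetical, and the byte count is only a stand-in for measured execution time.

```python
import hmac

def leaky_equal(a: bytes, b: bytes):
    """Early-exit comparison; returns (result, number of bytes examined).

    The number of bytes examined stands in for running time: it grows with
    the length of the matching prefix, which is what a timing attack observes.
    """
    if len(a) != len(b):
        return False, 0
    for examined, (x, y) in enumerate(zip(a, b), start=1):
        if x != y:
            return False, examined
    return True, len(a)

def constant_time_equal(a: bytes, b: bytes) -> bool:
    """Comparison whose duration does not depend on where the inputs differ."""
    return hmac.compare_digest(a, b)

secret = b"k3y-0123"  # hypothetical secret value

print(leaky_equal(secret, b"x3y-0123"))  # wrong first byte: stops immediately
print(leaky_equal(secret, b"k3y-01xx"))  # longer correct prefix: runs longer
print(constant_time_equal(secret, b"k3y-01xx"))
```

Both functions return the same verdict, so the mathematics of the comparison is not what leaks. The difference lies entirely in how much work the implementation does along the way.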

The development of robust countermeasures against side-channel attacks relies heavily on access to comprehensive datasets, and the SCA Dataset serves as a pivotal resource in this field. Such curated collections of physical leakage traces, which capture variations in power consumption, electromagnetic radiation, or timing, provide the training and testing grounds for artificial intelligence (AI) and machine learning (ML) algorithms. Researchers use them to develop AI-powered defenses intended to distinguish legitimate cryptographic operations from attacks and to keep sensitive information masked. Without such datasets, evaluating the efficacy of proposed defenses becomes significantly harder, hindering progress in securing cryptographic implementations against increasingly sophisticated physical attacks. The availability of standardized datasets like the SCA Dataset fosters collaboration and accelerates the development of practical and effective security solutions.

Analysis of side-channel attacks demonstrates a significant vulnerability in cryptographic systems: attackers can achieve approximately 50% classification accuracy on leakage traces. This finding underscores that even mathematically secure algorithms, such as AES and ECC, can be compromised through the observation of physical characteristics during operation. Classifying with this degree of accuracy, despite the presence of noise and countermeasures, indicates that sensitive data leaks partially during computation. This leakage, detectable through measurements of power consumption or electromagnetic radiation, gives an attacker enough information to progressively refine guesses and ultimately recover secret keys, demonstrating a critical need for robust side-channel countermeasures in practical implementations.

The pursuit of increasingly sophisticated cybersecurity measures, as detailed in this research, inherently acknowledges the transient nature of stability. Models, even those leveraging the power of machine learning for intrusion detection and side-channel analysis, are not impervious to the relentless march of data distribution shifts and adversarial attacks. As Grace Hopper famously stated, “It’s easier to ask forgiveness than it is to get permission.” This sentiment resonates with the iterative process of building adaptive security systems; a proactive approach to identifying and mitigating vulnerabilities, even if it means accepting temporary imperfections, proves more effective than rigid adherence to outdated models. The study emphasizes that a system’s uptime is not a guarantee of perpetual security, but a temporary reprieve within a constantly evolving threat landscape.

The Horizon Recedes

The presented work confirms a predictable truth: every advantage accrues entropy. Artificial intelligence, applied to the defenses of network security and cryptographic integrity, is not an exception. The observed vulnerabilities to data distribution shift and adversarial manipulation are not failings of the methods themselves, but rather symptoms of a fundamental constraint. Systems designed to detect anomaly must, by definition, be susceptible to novel anomalies – the very act of learning creates a fixed point against which future change is measured. Every delay in addressing this is simply the price of understanding.

Future work will undoubtedly focus on adaptive learning, continual training, and the pursuit of ‘robustness’. However, the term itself deserves scrutiny. True robustness is stasis, and stasis is death. The more pertinent question is not how to prevent change, but how to gracefully accommodate it. The field should prioritize methods that readily acknowledge their own limitations and actively seek to refine their models in response to observed discrepancies, rather than striving for an unattainable perfection.

Architecture without history is fragile and ephemeral. The next generation of AI-driven security systems must not simply detect threats, but learn from them, building a cumulative understanding of the evolving landscape of attack. This requires a shift in focus – from pattern recognition to anomaly characterization, from detection to comprehension. The goal isn’t to build a wall, but to cultivate a garden – one that thrives on diversity, adaptation, and the inevitable intrusion of the unexpected.

Original article: https://arxiv.org/pdf/2603.25826.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-03-30 06:30