Author: Denis Avetisyan

Researchers are demonstrating how to dramatically reduce the size of deep learning models, enabling accurate avian species identification on low-power edge devices.

This study investigates the impact of target class characteristics on the compressibility of neural networks deployed for energy-autonomous biodiversity monitoring using mel-spectrograms.

Effective biodiversity monitoring is increasingly challenged by the limitations of resource-intensive traditional methods. The paper, ‘Investigating Target Class Influence on Neural Network Compressibility for Energy-Autonomous Avian Monitoring’, addresses this by exploring the feasibility of deploying compressed deep learning models on low-power edge devices for automated avian species identification. Its results demonstrate that significant neural network compression is achievable with minimal performance loss, enabling long-term, energy-autonomous acoustic monitoring. Can this approach unlock scalable, real-time insights into avian populations and contribute to more effective conservation efforts?

Decoding the Avian Chorus: Why Traditional Monitoring Falls Silent

The health of avian populations serves as a critical indicator of broader ecosystem wellbeing, making their consistent monitoring paramount for effective conservation strategies. Birds fulfill vital roles – from pollination and seed dispersal to insect control and scavenging – directly influencing habitat health and stability. Declines in bird populations can signal cascading effects throughout an ecosystem, potentially indicating environmental stressors like habitat loss, pollution, or climate change. Therefore, accurate and ongoing assessments of bird abundance, distribution, and reproductive success are not simply about protecting individual species; they represent a proactive approach to safeguarding entire ecological networks and ensuring long-term environmental resilience. Consequently, investment in robust monitoring programs is foundational to informed conservation decision-making and the preservation of biodiversity.

Point counts, a cornerstone of avian biodiversity monitoring, demand significant investment of time and human resources, as observers must meticulously identify and record birds within a defined radius at specific locations. This process isn’t merely about presence or absence; accurate species identification requires substantial training and experience, limiting the pool of capable researchers and increasing project costs. Furthermore, the inherent limitations of covering vast or challenging terrains with on-foot surveys pose a major scalability issue; while effective for localized studies, expanding point count data collection to regional or continental levels proves logistically difficult and financially prohibitive, hindering comprehensive assessments of long-term population trends and ecological health.

The practical difficulties inherent in traditional bird monitoring methods acutely impact the ability to assess avian populations in challenging terrains. Remote and inaccessible areas – encompassing vast forests, high-altitude ecosystems, and isolated islands – present logistical hurdles that significantly limit the frequency and scope of data collection. Consequently, crucial information regarding species distribution, abundance, and long-term trends can remain uncaptured, hindering timely conservation interventions. This data scarcity not only compromises the accuracy of regional and global biodiversity assessments but also introduces substantial uncertainty into predictive models used to anticipate the impacts of habitat loss, climate change, and other environmental stressors on vulnerable bird species.

Resurrecting the Signal: Machine Learning as an Acoustic Key

Deep neural networks are increasingly utilized in bioacoustics due to their capacity to automatically learn complex patterns from raw audio data. Traditional methods of analyzing bird songs and calls relied on manual feature engineering, a process that is both time-consuming and subject to human bias. Deep learning models, conversely, can ingest spectrograms or other audio representations and learn relevant features directly from the data, improving accuracy and reducing the need for expert intervention. These networks excel at tasks such as species identification, call detection, and behavioral classification, even in noisy environments or with limited training data. Furthermore, the ability of deep learning models to generalize to new datasets and unseen conditions makes them valuable for long-term ecological monitoring and conservation efforts.
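
To make the "spectrogram as input" idea concrete, here is a minimal NumPy sketch of how a mel-spectrogram can be computed from a waveform. It is an illustration only, not the paper's preprocessing pipeline: the sample rate, FFT size, hop length, number of mel bands, and the HTK-style mel formula are all assumptions chosen for the example.

```python
import numpy as np

def hz_to_mel(f):
    # HTK-style mel scale (an assumed convention, not taken from the paper)
    return 2595.0 * np.log10(1.0 + np.asarray(f, dtype=float) / 700.0)

def mel_to_hz(m):
    return 700.0 * (10.0 ** (np.asarray(m, dtype=float) / 2595.0) - 1.0)

def mel_filterbank(n_mels, n_fft, sr):
    """Triangular filters evenly spaced on the mel scale, shape (n_mels, n_fft // 2 + 1)."""
    fft_freqs = np.linspace(0.0, sr / 2.0, n_fft // 2 + 1)
    hz_points = mel_to_hz(np.linspace(hz_to_mel(0.0), hz_to_mel(sr / 2.0), n_mels + 2))
    fb = np.zeros((n_mels, fft_freqs.size))
    for i in range(n_mels):
        lo, center, hi = hz_points[i:i + 3]
        rising = (fft_freqs - lo) / (center - lo)
        falling = (hi - fft_freqs) / (hi - center)
        fb[i] = np.clip(np.minimum(rising, falling), 0.0, None)
    return fb

def mel_spectrogram(signal, sr, n_fft=512, hop=256, n_mels=40):
    """Log-power mel-spectrogram in dB, shape (n_mels, n_frames)."""
    n_frames = 1 + (len(signal) - n_fft) // hop
    # Build overlapping frames with fancy indexing, then apply a Hann window
    idx = np.arange(n_fft)[None, :] + hop * np.arange(n_frames)[:, None]
    frames = signal[idx] * np.hanning(n_fft)
    power = np.abs(np.fft.rfft(frames, axis=1)) ** 2
    mel = power @ mel_filterbank(n_mels, n_fft, sr).T
    return 10.0 * np.log10(mel + 1e-10).T

# Hypothetical input: one second of a pure 2 kHz tone sampled at 16 kHz
sr = 16000
t = np.arange(sr) / sr
tone = np.sin(2.0 * np.pi * 2000.0 * t)
S = mel_spectrogram(tone, sr)
print(S.shape)
```

The resulting 2-D array can be treated like a single-channel image, which is why image-classification architectures are a natural fit for this kind of audio task.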

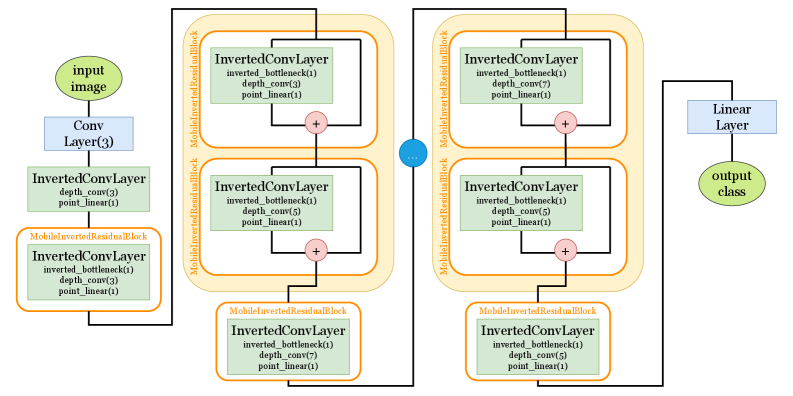

The MCUNet framework facilitates the deployment of machine learning models for bioacoustic analysis on devices with limited computational resources, such as microcontrollers and embedded systems. This is achieved through a software architecture optimized for low-power consumption and minimal memory footprint, allowing for real-time, on-device processing of audio data – termed “edge-based monitoring”. By performing analysis locally, MCUNet reduces the need for data transmission to a central server, decreasing latency, bandwidth requirements, and reliance on network connectivity, particularly beneficial for remote or inaccessible field deployments. The framework supports model formats commonly used in deep learning and provides tools for efficient model loading and inference on constrained hardware.

MCUNet addresses the challenges of deploying machine learning models for bioacoustic analysis in remote field settings by employing model compression techniques. Traditional deployments often require substantial computational resources and power, making them impractical for battery-operated or off-grid locations. MCUNet utilizes methods like quantization, pruning, and knowledge distillation to reduce model size and computational complexity without significant performance degradation. Quantization reduces the precision of model weights and activations, while pruning removes redundant connections. Knowledge distillation transfers learning from a larger, more accurate model to a smaller, compressed model. These optimizations enable the execution of complex bioacoustic models on resource-constrained microcontrollers, facilitating real-time, edge-based data processing and reducing the need for data transmission.
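
The two simplest techniques named above, pruning and quantization, can be sketched in a few lines of NumPy. This is a generic illustration under stated assumptions (unstructured magnitude pruning, symmetric per-tensor int8 quantization, a random weight matrix as stand-in data); it is not the compression procedure used in the paper or in the MCUNet tooling.

```python
import numpy as np

def magnitude_prune(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    flat = np.abs(weights).ravel()
    k = int(sparsity * flat.size)
    if k == 0:
        return weights.copy()
    # k-th smallest magnitude becomes the pruning threshold
    threshold = np.partition(flat, k - 1)[k - 1]
    pruned = weights.copy()
    pruned[np.abs(pruned) <= threshold] = 0.0
    return pruned

def quantize_int8(weights):
    """Symmetric per-tensor quantization: returns int8 values and a float scale."""
    max_abs = float(np.max(np.abs(weights)))
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = np.clip(np.round(weights / scale), -127, 127).astype(np.int8)
    return q, scale

def dequantize(q, scale):
    """Map int8 values back to approximate float32 weights."""
    return q.astype(np.float32) * scale

# Hypothetical layer weights, used only to exercise the functions
rng = np.random.default_rng(0)
w = rng.normal(size=(64, 32)).astype(np.float32)

w_pruned = magnitude_prune(w, sparsity=0.5)
q, scale = quantize_int8(w_pruned)
w_restored = dequantize(q, scale)

print("fraction of zero weights:", float(np.mean(w_pruned == 0)))
print("max quantization error:", float(np.max(np.abs(w_restored - w_pruned))))
```

Storing weights as int8 instead of float32 cuts their footprint to a quarter, and the zeros introduced by pruning can be exploited by sparse storage or skipped computation. In practice both steps are usually followed by fine-tuning to recover accuracy.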

The Anatomy of Intelligence: Data and Model Refinement

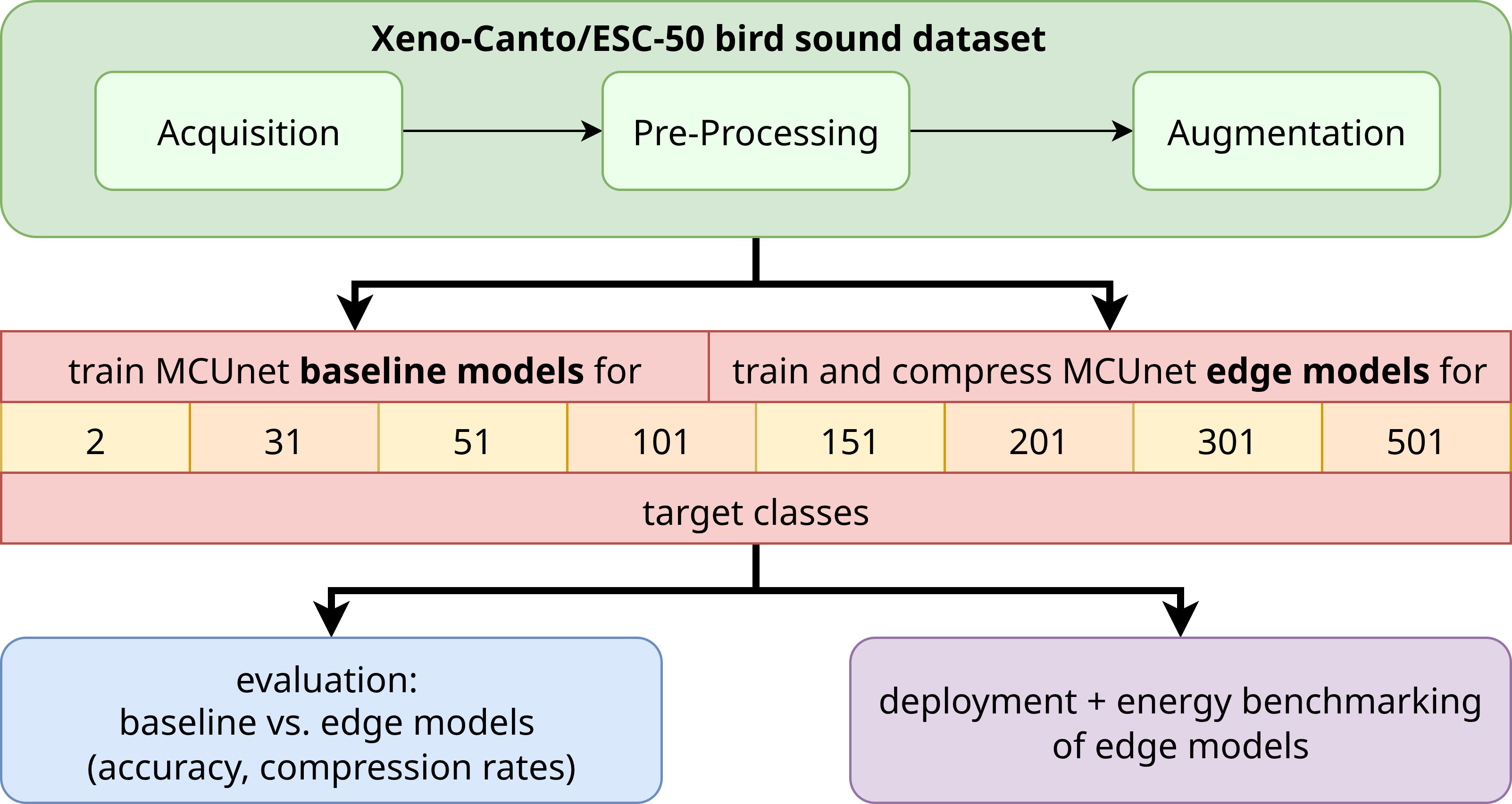

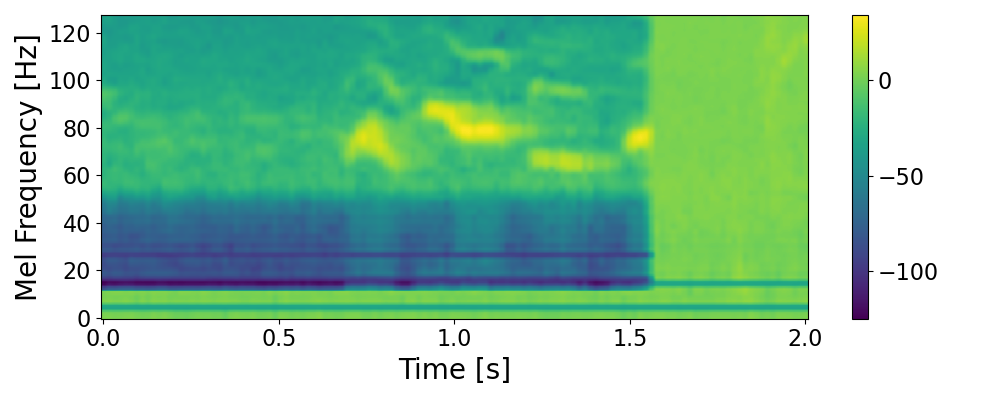

The MCUNet-in4 model’s training regimen leveraged both general-purpose image data and species-specific audio recordings. ImageNet, a large-scale visual database, provided a foundation for feature extraction, while the Xeno-Canto archive, a resource specializing in wildlife sound recordings, supplied the necessary bioacoustic data. This combination allowed the model to learn both broad visual patterns and the nuanced characteristics of avian vocalizations, effectively bridging the gap between computer vision and acoustic analysis. The Xeno-Canto data included recordings from a diverse range of species and geographic locations, contributing to the model’s generalization capability.

Data augmentation was implemented to enhance the generalization capability of the MCUNet-in4 model. Specifically, the technique of SpecAugment was applied to Mel-Spectrograms, which involves masking regions of the spectrogram in both the time and frequency domains. This process introduces variations in the training data that simulate real-world conditions, such as noise and signal degradation. By exposing the model to these artificially modified inputs, the model learns to become more resilient to variations in audio quality and improves its ability to correctly classify sounds it hasn’t encountered directly during training, thereby increasing overall model robustness.
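
The masking step can be sketched as follows. This is a minimal illustration assuming one frequency mask and one time mask per example, with zero as the fill value; the mask widths and the function name `spec_augment` are hypothetical, and the paper's actual augmentation settings are not given here.

```python
import numpy as np

def spec_augment(mel, max_freq_width=8, max_time_width=16, fill_value=0.0, rng=None):
    """Return a copy of `mel` (n_mels x n_frames) with one frequency band
    and one time span overwritten by `fill_value`."""
    rng = np.random.default_rng() if rng is None else rng
    out = mel.copy()
    n_mels, n_frames = out.shape

    # Frequency mask: a random band of up to max_freq_width mel bins
    f = min(int(rng.integers(0, max_freq_width + 1)), n_mels)
    f0 = int(rng.integers(0, n_mels - f + 1))
    out[f0:f0 + f, :] = fill_value

    # Time mask: a random span of up to max_time_width frames
    t = min(int(rng.integers(0, max_time_width + 1)), n_frames)
    t0 = int(rng.integers(0, n_frames - t + 1))
    out[:, t0:t0 + t] = fill_value
    return out

# Hypothetical spectrogram of ones, so masked cells are easy to spot
mel = np.ones((64, 100))
augmented = spec_augment(mel, rng=np.random.default_rng(0))
print("masked cells:", int(np.sum(augmented == 0)))
```

Because a fresh mask is drawn every time the function is called, the same recording yields a different training example in each epoch, which discourages the model from relying on any single frequency band or moment in time.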

The MCUNet-in4 model’s training regimen included the ESC-50 dataset, a collection of environmental sounds, specifically to enhance its ability to discriminate between avian vocalizations and non-avian sounds. This incorporation addressed a critical need for real-world applicability, as environmental recordings often contain a variety of auditory events beyond target bird species. By exposing the model to a broader acoustic landscape during training, the inclusion of ESC-50 data improved the model’s precision in identifying avian sounds amidst background noise and other interfering signals, leading to increased accuracy in practical deployment scenarios.

Validation accuracy for the MCUNet-in4 models consistently ranged from approximately 82% to 88% across experiments utilizing varying numbers of target classes. This performance level indicates the model’s ability to generalize effectively to unseen data, even as the task complexity increases with a greater diversity of avian vocalizations. The maintained accuracy across differing class counts suggests the model architecture and training methodology are robust and capable of scaling to more complex bioacoustic identification scenarios without significant performance degradation.

Extending the Network: Edge Deployment and the Promise of Autonomy

The convergence of the MCUNet framework and the TinyEngine inference engine unlocks the potential for sophisticated machine learning applications on resource-constrained devices. This pairing facilitates the deployment of complex models onto low-power microcontrollers, specifically the ARM Cortex-M4 and M7 platforms, traditionally limited by their computational capacity and energy budgets. MCUNet streamlines model architecture, reducing complexity without significant accuracy loss, while TinyEngine optimizes inference execution for these microcontrollers. The result is a system capable of performing real-time data analysis at the edge, directly on the device, without relying on cloud connectivity or substantial power consumption, paving the way for applications in remote sensing, wearable technology, and the Internet of Things.

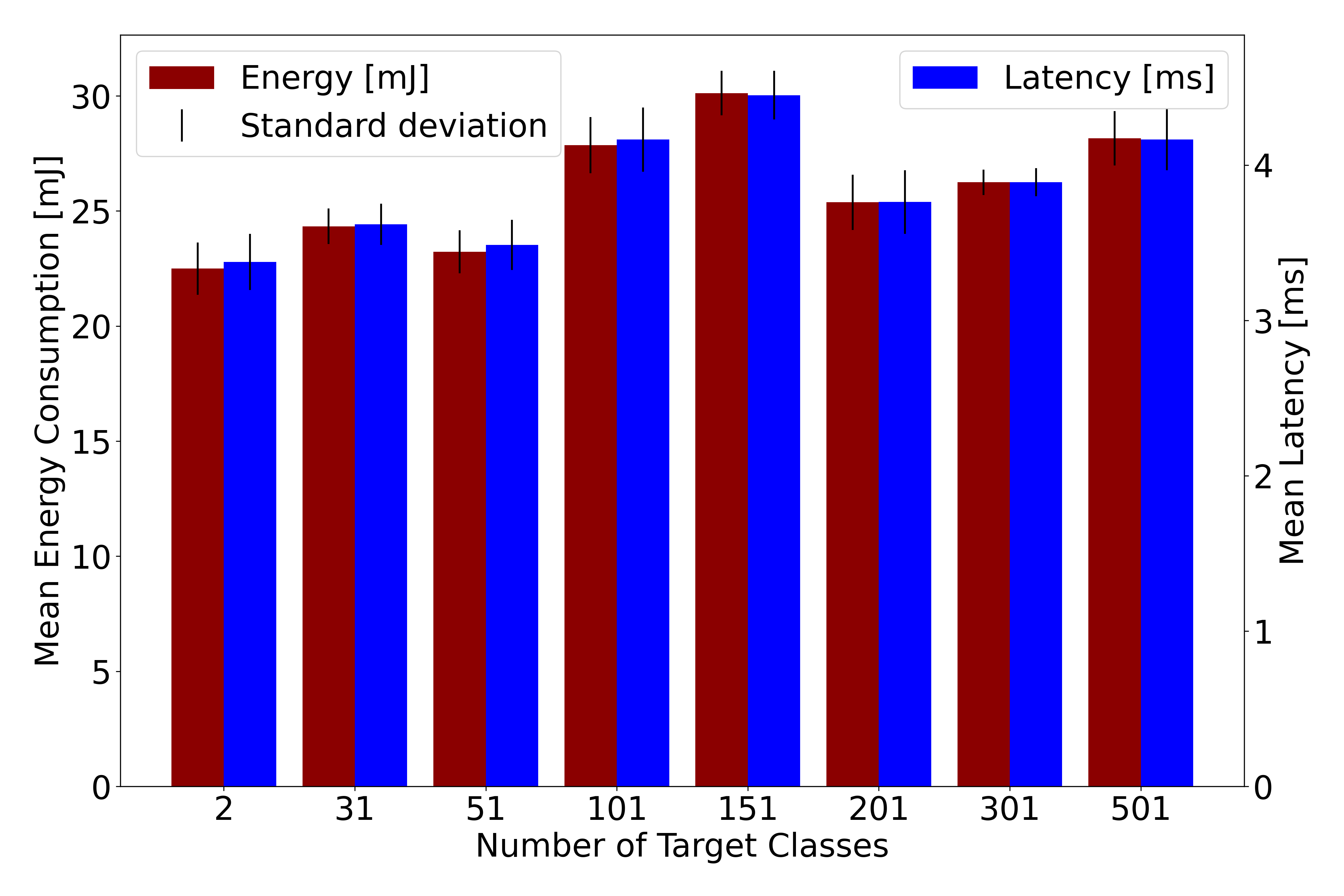

Rigorous benchmarking across diverse hardware platforms, including the Raspberry Pi 4, substantiates the model’s capabilities in both performance and energy conservation. These evaluations reveal a compelling trade-off between computational demands and power consumption, demonstrating the feasibility of deploying sophisticated machine learning models on resource-constrained devices. The Raspberry Pi 4 serves as a crucial testbed, allowing researchers to quantify inference speeds and energy usage under realistic operating conditions, providing a valuable baseline for comparison against other embedded systems. Results from this platform highlight the model’s potential to deliver intelligent functionality with minimal environmental impact, paving the way for sustainable and scalable edge computing solutions.

The pursuit of truly pervasive machine learning necessitates devices capable of operating independently of traditional power infrastructure. Recent advancements demonstrate the feasibility of energy-autonomous edge deployment through the integration of photovoltaic energy harvesting. By coupling a machine learning model with a small-area solar panel, systems can achieve long-term, self-sufficient operation even in remote or resource-constrained environments. This approach minimizes the logistical challenges and costs associated with battery replacement or access to grid power, opening doors for applications like persistent environmental monitoring, precision agriculture in off-grid locations, and extended sensor networks where continuous data collection is paramount. The ability to power these devices solely from renewable sources not only enhances their practicality but also contributes to a more sustainable and environmentally friendly approach to edge computing.

A significant advantage of deploying machine learning models on low-power microcontrollers, such as the ARM Cortex-M7, lies in their dramatically reduced energy demands, and consequently, the scale of renewable energy sources needed for sustained operation. Research indicates that powering the Cortex-M7 for fully autonomous function requires a solar panel with an area of just 0.07 square meters – roughly the size of a standard sheet of paper. This contrasts sharply with the Raspberry Pi 4, a more powerful but energy-intensive platform, which necessitates a solar panel area of 1.58 square meters to achieve the same level of energy independence. This substantial difference highlights the potential for deploying sophisticated machine learning applications in remote or off-grid locations using minimal infrastructure and a greatly reduced environmental footprint, making long-term, sustainable operation far more attainable.

Battery requirements tell the same story. Running a compressed model under MCUNet with TinyEngine's optimized inference, the ARM Cortex-M7 microcontroller requires a mere 6.6 mAh of battery capacity to operate continuously for 48 hours. The Raspberry Pi 4, by contrast, demands 155.5 mAh for the same duration. This difference highlights the potential for drastically reduced power consumption and extended operational lifespans in edge computing applications, making deployment in remote or energy-constrained environments not only feasible, but remarkably sustainable.
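
The size of the gap is easier to appreciate as a ratio. The short calculation below uses only the solar panel areas and 48-hour battery capacities quoted above; the dictionary and function names are illustrative.

```python
# Figures as quoted in the article
PANEL_AREA_M2 = {"Cortex-M7": 0.07, "Raspberry Pi 4": 1.58}
BATTERY_MAH_48H = {"Cortex-M7": 6.6, "Raspberry Pi 4": 155.5}

def ratio(values, baseline="Cortex-M7", other="Raspberry Pi 4"):
    """How many times larger the `other` platform's requirement is."""
    return values[other] / values[baseline]

panel_ratio = ratio(PANEL_AREA_M2)
battery_ratio = ratio(BATTERY_MAH_48H)

print(f"Solar panel area: {panel_ratio:.1f}x larger for the Raspberry Pi 4")
print(f"Battery capacity: {battery_ratio:.1f}x larger for the Raspberry Pi 4")
```

Both ratios come out at roughly 23 to 1, so the panel and battery figures are consistent with each other.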

The deployed model demonstrates remarkable efficiency on the ARM Cortex-M7 microcontroller, achieving an inference latency of 237 milliseconds with 31 distinct classes. This rapid processing speed is coupled with exceptionally low energy consumption, requiring only 83 millijoules per inference step. These figures highlight the potential for real-time applications in resource-constrained environments, where minimizing both processing time and power draw is paramount. Such performance characteristics enable complex machine learning tasks to be executed directly on edge devices, fostering greater autonomy and reducing reliance on cloud connectivity.
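
Two derived quantities follow directly from the latency and energy figures above: the average power drawn while an inference is running, and the maximum back-to-back inference rate. The sketch below also estimates a daily energy budget for a duty cycle of one inference every 10 seconds; that duty cycle is a hypothetical value chosen for illustration, not one reported in the paper.

```python
LATENCY_S = 0.237    # inference latency quoted above (237 ms)
ENERGY_J = 0.083     # energy per inference quoted above (83 mJ)

# Average power during an inference: energy divided by time
avg_power_w = ENERGY_J / LATENCY_S

# Upper bound on throughput if inferences run back to back
max_rate_hz = 1.0 / LATENCY_S

def daily_inference_energy_j(period_s):
    """Energy spent on inference per day when one inference runs every `period_s` seconds.
    Ignores sleep current, audio capture, and other overheads."""
    inferences_per_day = 24 * 3600 / period_s
    return inferences_per_day * ENERGY_J

# Hypothetical duty cycle: one inference every 10 seconds
daily_j = daily_inference_energy_j(period_s=10.0)
daily_wh = daily_j / 3600.0

print(f"Average power during inference: {avg_power_w * 1000:.0f} mW")
print(f"Maximum inference rate: {max_rate_hz:.2f} per second")
print(f"Daily inference energy at one inference per 10 s: {daily_j:.1f} J ({daily_wh:.3f} Wh)")
```

At about 350 mW during inference and just over four inferences per second at most, the energy budget of a real deployment is largely set by how often the device wakes up to classify audio.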

Significant reductions in model size are achieved through advanced compression techniques, reaching an average rate of 82–88% with minimal loss of accuracy. This compression is critical for deploying complex machine learning models on resource-constrained devices, like microcontrollers, where memory and processing power are limited. By drastically decreasing the model’s footprint, these techniques enable efficient storage and faster inference times, paving the way for broader implementation of edge computing solutions. The ability to maintain high accuracy while substantially reducing size represents a key advancement, allowing for sophisticated data analysis directly on the device and minimizing reliance on energy-intensive cloud connectivity.

The study meticulously probes the limits of model compression, a process akin to controlled demolition. It seeks to dismantle complexity without sacrificing functionality, a delicate balance achieved through pruning and quantization. This resonates with Edsger W. Dijkstra’s assertion: “It’s not enough to just do something; you must also understand why it works.” The research doesn’t merely compress models for avian monitoring; it dissects how different target classes influence compressibility, revealing underlying principles. By understanding these constraints, the team reverse-engineers the system to maximize efficiency, ensuring long-term deployment on energy-autonomous edge devices. This deliberate breakdown of a complex system, to then rebuild it leaner and more effective, embodies the spirit of intellectual deconstruction.

Where Do the Wires Lead?

The demonstrated feasibility of deploying compressed neural networks for avian monitoring isn’t a destination, but a rather predictable consequence of forcing a complex system to conform to physical limitations. The real question isn’t whether it can be done (any sufficiently motivated engineer will eventually make it so) but rather what is lost in the reduction. The paper acknowledges the trade-offs; accuracy, predictably, suffers. But the more interesting failure modes remain largely unexamined. What subtle vocalizations are now invisible? What biases are baked into the compression itself, favoring certain species or call types over others?

Future work will inevitably focus on algorithmic refinement – better compression, more efficient architectures. Yet, a more fruitful avenue might lie in embracing the limitations. Instead of striving for perfect identification, could these systems be repurposed to detect change? Anomaly detection, rather than species cataloging, shifts the burden of proof. The network doesn’t need to know what a bird is, only that something sounds… different. Such an approach would bypass the need for exhaustive training data and potentially reveal ecological shifts currently masked by the noise of biodiversity.

Ultimately, this isn’t about building better bird detectors. It’s about reverse-engineering the very notion of “understanding” in a world constrained by energy and computation. The true test will lie not in achieving high accuracy, but in acknowledging, and even exploiting, the inherent imperfections of any system attempting to model the messy reality of a forest.

Original article: https://arxiv.org/pdf/2602.17751.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-24 01:05