Author: Denis Avetisyan

A new approach empowers conversational AI to identify and correct errors during complex, multi-turn interactions.

This paper introduces ReIn, a test-time intervention method leveraging reasoning to enhance the robustness of LLM-based agents and improve error recovery in conversational AI.

Despite advances in tool-augmented large language model (LLM) agents, robust handling of unanticipated user errors remains a critical challenge in conversational AI. This work introduces ‘ReIn: Conversational Error Recovery with Reasoning Inception’, a novel test-time intervention method designed to enhance agent resilience by proactively injecting reasoning steps to guide error recovery during multi-turn dialogues. Through an external inception module, ReIn identifies conversational failures, specifically ambiguous or unsupported requests, and generates corrective plans without requiring model fine-tuning or prompt modification. Our results demonstrate substantial improvements in task success across diverse agent models, suggesting that strategically defining recovery tools with ReIn offers a safe and effective pathway towards more robust and adaptable conversational agents. But how can we further extend these principles to address a wider range of conversational breakdowns?

The Fragility of Automation

Despite recent advancements in artificial intelligence, task agents frequently encounter difficulties when presented with user requests that deviate from their training data or anticipated scenarios. These agents, designed to automate specific functions, often operate within narrowly defined parameters; a slight ambiguity or unforeseen input can lead to errors or complete task failure. This fragility isn’t a matter of lacking intelligence, but rather a limitation in their ability to generalize and adapt to novel situations, hindering their reliability in real-world applications where user input is inherently unpredictable. Consequently, even sophisticated agents can stumble on seemingly simple requests that fall outside the scope of their pre-programmed expectations, impacting user experience and eroding confidence in their capabilities.

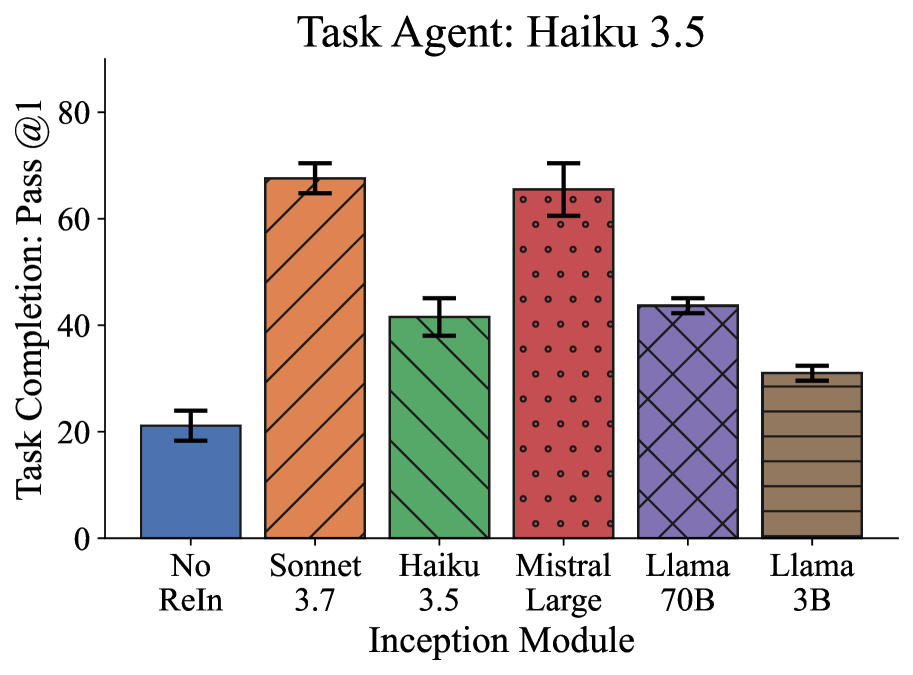

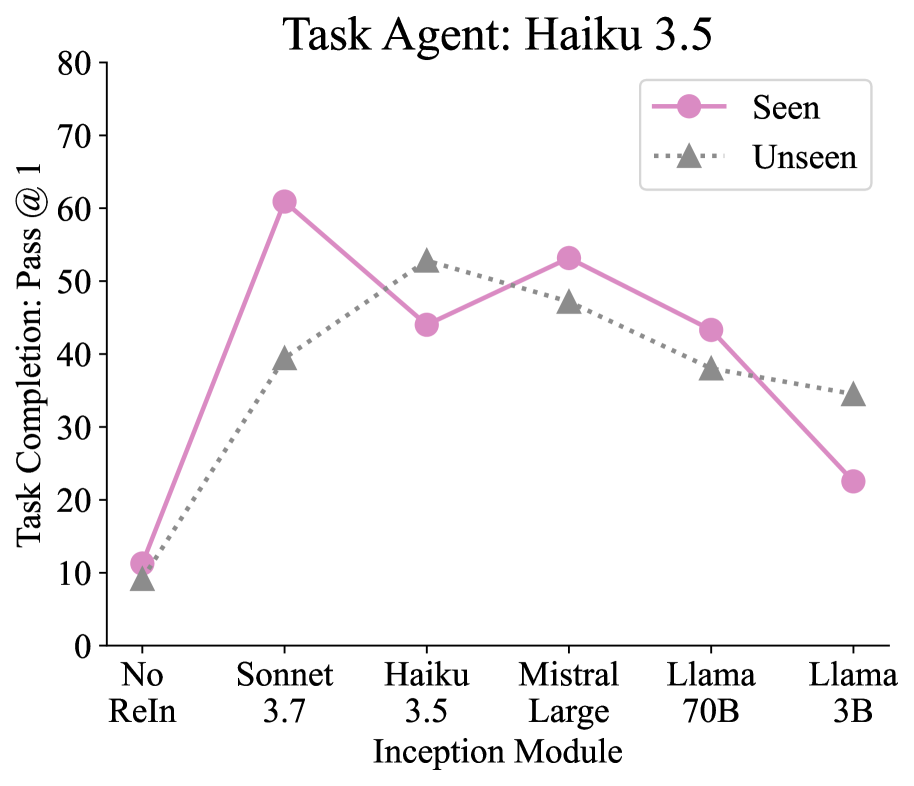

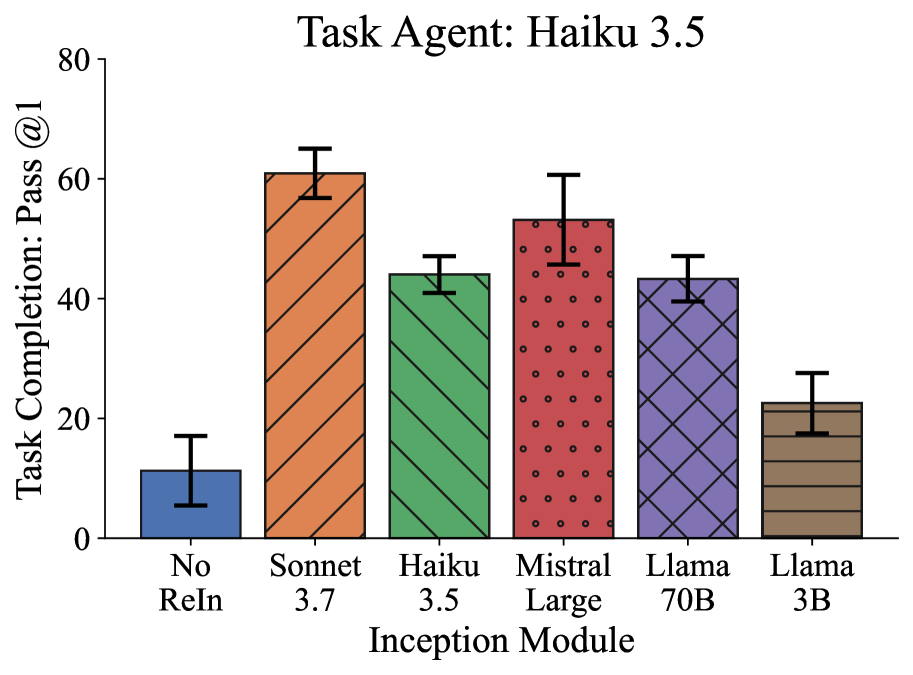

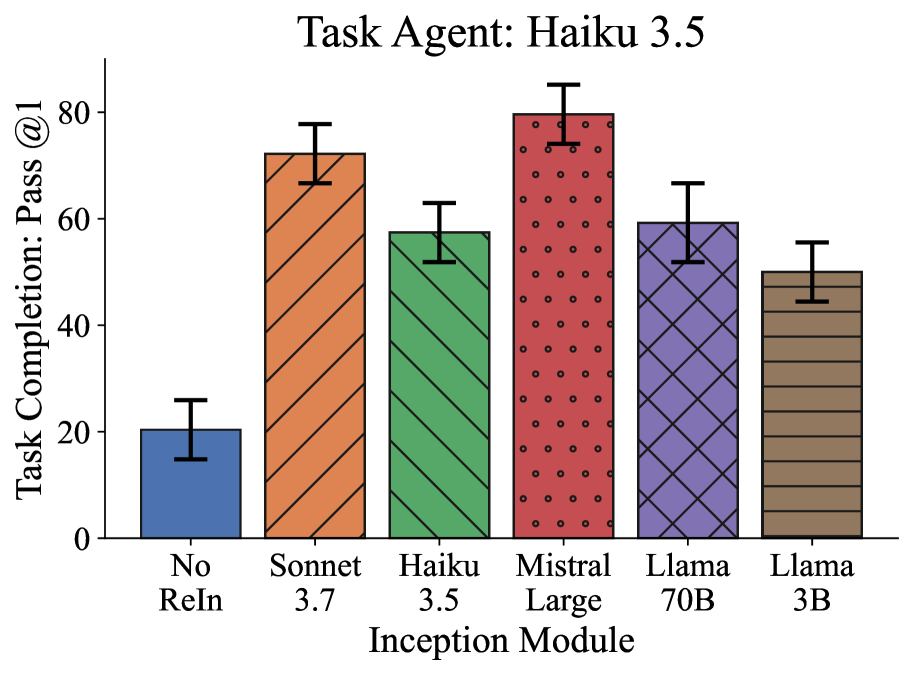

The fragility of current task agents arises from a consistent inability to recognize and address errors during operation, which diminishes both user experience and confidence in the system. When confronted with unexpected inputs or complex requests, these agents frequently fail the task outright. Quantitative assessments make the problem concrete: current methodologies achieve a Pass@1 rate (the probability of successfully completing a task on the first attempt) of only 18.5% to 25.9%. This low success rate highlights the need for more resilient error detection and recovery mechanisms to ensure reliable performance and foster greater user trust.
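To make the Pass@1 figure concrete, here is a minimal sketch of how such a rate could be computed from per-task outcomes. The function name `pass_at_1` and the outcome list are illustrative assumptions; the outcomes are made up and are not data from the paper.

```python
from typing import Iterable


def pass_at_1(first_attempt_results: Iterable[bool]) -> float:
    """Return the fraction of tasks whose first attempt succeeded."""
    results = list(first_attempt_results)
    if not results:
        raise ValueError("need at least one task result")
    return sum(1 for ok in results if ok) / len(results)


# Made-up outcomes for 27 tasks (5 first-attempt successes); not data from the paper.
outcomes = [True] * 5 + [False] * 22
print(f"Pass@1 = {pass_at_1(outcomes):.1%}")  # prints "Pass@1 = 18.5%"
```

With only one attempt per task, the estimate reduces to a plain success fraction, which is why small test sets produce coarse steps in the reported percentage.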

Proactive Intervention: Anticipating the Inevitable

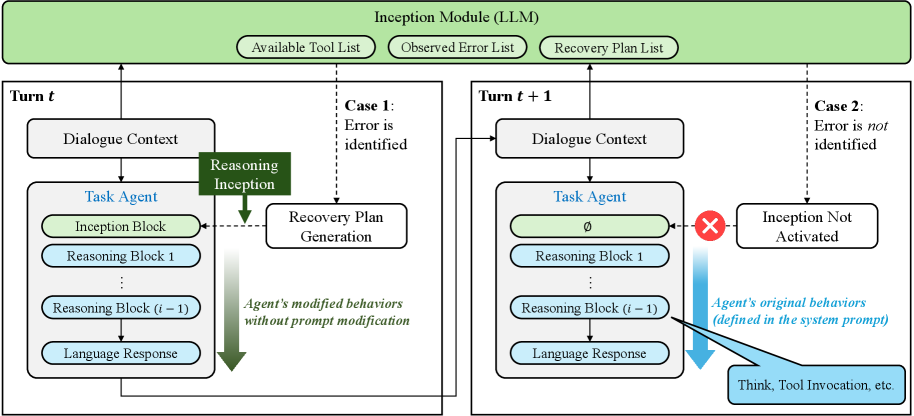

The InceptionModule functions as a pre-processing component integrated into the request pipeline to proactively identify potential issues with user inputs. This module operates prior to request fulfillment, analyzing incoming data to determine if it contains errors that would otherwise lead to system failures or incorrect outputs. By intercepting these issues early, the InceptionModule aims to prevent the propagation of flawed requests through subsequent processing stages, increasing system robustness and reliability. Its core function is error identification, facilitating intervention before a request impacts core functionalities.

The ErrorDetection module employs a suite of techniques to preemptively identify problematic user requests, specifically focusing on two core scenarios: UnsupportedAction and AmbiguousRequest. UnsupportedAction errors occur when a user attempts to invoke a function or command not currently implemented or available within the system. AmbiguousRequest errors arise when the user input is syntactically valid but lacks sufficient information for the system to uniquely determine the intended action; this requires clarification before processing. The module analyzes the request structure and semantic content to classify incoming requests into one of these error categories, triggering the RecoveryPlan if either condition is met. This proactive error identification is distinct from traditional error handling that responds to failures after execution has begun.
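The two error categories can be illustrated with a small rule-based sketch. This is a stand-in under stated assumptions, not the method from the paper: the article does not specify how detection is implemented, and the tool schema `SUPPORTED_ACTIONS`, the `Request` type, and the slot names below are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, Optional


class ErrorType(Enum):
    UNSUPPORTED_ACTION = "UnsupportedAction"
    AMBIGUOUS_REQUEST = "AmbiguousRequest"


@dataclass
class Request:
    action: str
    slots: Dict[str, str] = field(default_factory=dict)


# Hypothetical tool schema: each supported action and the slots it requires.
SUPPORTED_ACTIONS = {
    "book_flight": {"origin", "destination", "date"},
    "cancel_booking": {"booking_id"},
}


def detect_error(request: Request) -> Optional[ErrorType]:
    """Classify a request before execution; None means no error was found."""
    required = SUPPORTED_ACTIONS.get(request.action)
    if required is None:
        # The action maps to no available tool.
        return ErrorType.UNSUPPORTED_ACTION
    filled = {name for name, value in request.slots.items() if value}
    if required - filled:
        # Well-formed request, but too little information to act on uniquely.
        return ErrorType.AMBIGUOUS_REQUEST
    return None


print(detect_error(Request("upgrade_seat")))
print(detect_error(Request("book_flight", {"origin": "SFO"})))
```

A non-`None` result is the point at which a recovery plan would be triggered, before any tool call is attempted.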

Upon detection of user request errors, specifically ‘UnsupportedAction’ or ‘AmbiguousRequest’, the system initiates a ‘RecoveryPlan’ designed to guide the agent towards a successful resolution instead of terminating the interaction. Evaluation of this recovery mechanism shows inter-rater agreement at the boundary between fair and moderate. Pairwise Cohen’s Kappa scores ranged from 0.36 to 0.42, while Fleiss’ Kappa, which assesses agreement among multiple raters, yielded a score of 0.38. On the commonly used Landis and Koch scale (0.21 to 0.40 fair, 0.41 to 0.60 moderate), these values indicate usable, though far from perfect, consistency among the raters who assessed the ‘RecoveryPlan’.
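For readers unfamiliar with the statistic, the following sketch computes Cohen’s Kappa for two raters from first principles. The labels are invented for illustration and are not the paper’s annotations. Fleiss’ Kappa generalizes the same chance-correction idea to more than two raters and is not implemented here.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable]) -> float:
    """Chance-corrected agreement between two raters over the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items given the same label by both raters.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Expected agreement if both raters labeled independently at their own rates.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)


# Invented labels for four items; not taken from the paper.
a = ["ok", "ok", "bad", "bad"]
b = ["ok", "bad", "bad", "bad"]
print(cohens_kappa(a, b))  # prints 0.5
```

Here the raters agree on 3 of 4 items (0.75), chance agreement is 0.5, and the resulting Kappa is (0.75 - 0.5) / (1 - 0.5) = 0.5.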

Reasoning as a Corrective Layer

The ReIn-Mechanism functions as a runtime intervention, augmenting the TaskAgent with supplemental reasoning processes during the evaluation phase. This injection of reasoning capability is not a modification of the core TaskAgent model itself, but rather an external system that provides additional contextual analysis and guides response generation. By operating at test time, the ReIn-Mechanism serves as a protective layer, mitigating potential errors stemming from unforeseen inputs or ambiguous prompts without requiring retraining or alteration of the original model’s parameters. This approach enables the TaskAgent to dynamically leverage reasoning support only when needed, enhancing robustness and reliability during operation.

The ReIn-Mechanism functions by leveraging error analysis data generated by the InceptionModule. Specifically, the InceptionModule identifies potential errors or inconsistencies in the TaskAgent’s current response trajectory. This analysis isn’t simply error detection, but a focused assessment of why an error might occur. The ReIn-Mechanism then uses this granular error information to dynamically guide the TaskAgent’s response generation, prompting it to reconsider its approach or to prioritize alternative reasoning paths. This iterative process, driven by the InceptionModule’s output, aims to correct errors before they manifest in the final response, improving overall reliability and coherence.
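The control flow described above can be sketched as a wrapper around an unmodified agent. Everything here is an assumption made for illustration: `run_turn`, `toy_inception`, and `toy_agent` are hypothetical names, the trigger phrase is invented, and a real inception module and agent would be LLM calls rather than string checks.

```python
from typing import Callable, Dict, List, Optional

Message = Dict[str, str]


def run_turn(
    history: List[Message],
    inception: Callable[[List[Message]], Optional[str]],
    agent: Callable[[List[Message]], str],
) -> str:
    """Ask the external module for a recovery plan and inject it if present."""
    plan = inception(history)
    if plan is None:
        return agent(history)  # no error detected: the agent runs untouched
    # Build a new context so the caller's history is never mutated.
    context = history + [{"role": "reasoning", "content": plan}]
    return agent(context)


def toy_inception(history: List[Message]) -> Optional[str]:
    """Hypothetical detector: flags one unsupported request by keyword."""
    last_user = next(
        (m["content"] for m in reversed(history) if m["role"] == "user"), ""
    )
    if "upgrade my seat" in last_user.lower():
        return "No tool supports this request. Explain the limit and offer an alternative."
    return None


def toy_agent(context: List[Message]) -> str:
    """Hypothetical agent: follows an injected reasoning block when one is last."""
    if context[-1]["role"] == "reasoning":
        return "RECOVER: " + context[-1]["content"]
    return "PROCEED"


print(run_turn([{"role": "user", "content": "Please upgrade my seat"}], toy_inception, toy_agent))
print(run_turn([{"role": "user", "content": "Book a flight"}], toy_inception, toy_agent))
```

The design point the sketch tries to capture is that the agent’s parameters and system prompt stay untouched; only the context for the current turn gains an extra reasoning block, and only when the external module finds a problem.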

Evaluations suggest that the reasoning injection mechanism helps the agent handle unanticipated input and keeps the conversational flow more consistent. However, McNemar’s test yielded a p-value of approximately 0.68, far above the conventional 0.05 threshold. The observed differences in performance were therefore not statistically significant across the tested runs, and the improvement should be read as qualitative. Further investigation with larger datasets or modified parameters may be required to establish statistically significant gains.
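McNemar’s test compares two paired sets of binary outcomes and uses only the discordant pairs, that is, the cases where one condition succeeded and the other failed. Below is a minimal sketch of the exact (binomial) form of the test. The counts are hypothetical and are not the paper’s; the article does not say which variant of the test was used or which pairs were compared.

```python
from math import comb


def mcnemar_exact(b: int, c: int) -> float:
    """Two-sided exact McNemar p-value from the two discordant-pair counts."""
    n = b + c
    if n == 0:
        return 1.0  # no discordant pairs: no evidence of a difference
    k = min(b, c)
    # Under the null hypothesis each discordant pair is a fair coin flip.
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


# Hypothetical counts: 3 pairs where only condition A succeeded, 5 where only B did.
print(f"{mcnemar_exact(3, 5):.3f}")  # prints 0.727
```

With so few discordant pairs, even a 3-versus-5 split is entirely compatible with chance, which illustrates why small evaluation sets tend to yield large p-values.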

Simulating the Unpredictable: A Crucible for Resilience

To thoroughly evaluate system robustness, a comprehensive UserSimulation framework is employed, creating a diverse range of realistic user interactions. This isn’t simply automated testing; the simulation models nuanced behaviors, encompassing typical use cases as well as edge cases and potential error scenarios. By subjecting the system to these varied conditions – from straightforward requests to complex, multi-step interactions – researchers can pinpoint performance bottlenecks and identify areas where the system falters. The generated interactions aren’t random; instead, they’re carefully designed to mimic the breadth and depth of real-world user activity, providing a far more accurate assessment of the system’s reliability and user experience than traditional testing methods would allow. This proactive approach to evaluation is crucial for ensuring the system functions seamlessly and effectively across a wide spectrum of user needs and behaviors.

A robust feedback mechanism is central to the system’s iterative refinement. During UserSimulation, every interaction and system response is meticulously logged, creating a detailed dataset that reveals performance bottlenecks and areas of user friction. This captured data isn’t simply archived; it actively informs the adjustment of the RecoveryPlan. Statistical analysis identifies recurring failure points, while qualitative data illuminates the nuances of user behavior under stress. By continuously cycling simulation results back into the refinement process, the system learns from its ‘mistakes’ and progressively enhances its ability to anticipate and resolve issues, ultimately leading to a more resilient and user-friendly experience. The mechanism functions as a closed-loop system, ensuring that each iteration builds upon the insights of the last, driving continuous improvement in the RecoveryPlan’s efficacy.

The foundation of dependable system simulations rests heavily on meticulous data annotation. This process involves carefully labeling and categorizing vast datasets to accurately represent the nuances of real-world user interactions. Through ‘DataAnnotation’, raw data transforms into structured information, enabling the creation of realistic simulation scenarios. The quality of these annotations directly impacts the fidelity of the simulations; imprecise labeling can lead to skewed results and inaccurate performance assessments. Consequently, a robust ‘DataAnnotation’ pipeline is not merely a preparatory step, but an integral component in ensuring the system’s reliability and effectiveness when confronted with diverse and unpredictable user behavior.

Beyond Narrow Domains: The Promise of Adaptability

The TaskAgent distinguishes itself through a core design principle of adaptability, achieved via a flexible ‘ServiceDomain’ architecture. This domain isn’t a fixed component, but rather a customizable framework enabling the agent to function effectively across a wide spectrum of applications. By decoupling the core agent logic from the specific services it utilizes, developers can readily tailor the agent’s capabilities – integrating new tools, data sources, or functionalities – without requiring substantial modifications to the agent itself. This modularity not only simplifies the development process but also facilitates rapid prototyping and deployment in diverse contexts, from customer service and data analysis to complex problem-solving and creative tasks. The ServiceDomain, therefore, represents a crucial element in realizing the potential of truly versatile conversational AI.

The TaskAgent’s capacity for complex operations is significantly broadened through the integration of ‘ExternalTool’ access. This feature allows the agent to move beyond its internally stored knowledge and actively utilize external resources – APIs, databases, and specialized services – to complete tasks. Rather than being limited to pre-programmed responses, the agent can dynamically query these tools, retrieve real-time information, and perform actions such as calculations, data analysis, or even controlling external devices. This not only enhances the agent’s problem-solving capabilities but also enables it to address a wider range of user requests and adapt to evolving circumstances, effectively transforming it from a conversational entity into an active executor of tasks.
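One way to picture the decoupling of agent logic from domain tools is a small registry that the agent queries at runtime. This is a sketch under assumptions: the `ServiceDomain` class below, its methods, and the `lookup_booking` tool are hypothetical and are not an API described in the article.

```python
from typing import Callable, Dict


class ServiceDomain:
    """A named collection of tools that can be swapped without touching agent code."""

    def __init__(self, name: str) -> None:
        self.name = name
        self._tools: Dict[str, Callable[..., object]] = {}

    def register(self, tool_name: str, fn: Callable[..., object]) -> None:
        self._tools[tool_name] = fn

    def supports(self, tool_name: str) -> bool:
        return tool_name in self._tools

    def call(self, tool_name: str, **kwargs: object) -> object:
        if tool_name not in self._tools:
            raise KeyError(f"{tool_name!r} is not available in domain {self.name!r}")
        return self._tools[tool_name](**kwargs)


# Hypothetical travel domain with a single stubbed external tool.
travel = ServiceDomain("travel")
travel.register("lookup_booking", lambda booking_id: {"id": booking_id, "status": "confirmed"})

print(travel.call("lookup_booking", booking_id="A1"))
print(travel.supports("upgrade_seat"))  # prints False
```

A `supports` check of this kind is also a natural hook for the unsupported-action case: the agent can learn that a tool is missing before it attempts the call.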

Researchers are now directing efforts towards streamlining the adaptation process of this framework, moving beyond manual configuration towards automated refinement of the TaskAgent’s capabilities. This involves developing algorithms that can dynamically assess the demands of novel conversational scenarios and automatically adjust the agent’s ServiceDomain and ExternalTool integrations accordingly. Exploration is also underway to apply this adaptable framework to increasingly complex AI interactions, such as nuanced negotiation, collaborative problem-solving, and sophisticated tutoring systems – areas where a rigid, pre-defined agent architecture often falls short. The ultimate goal is to create a conversational AI capable of seamlessly transitioning between diverse tasks and demonstrating genuine adaptability in real-world applications.

The pursuit of robust conversational agents, as demonstrated by ReIn, feels less like engineering and more like tending a garden. One cultivates the capacity for error recovery, injecting reasoning blocks as one might prune a struggling branch, hoping to guide growth. It’s a temporary fix, of course. As Alan Kay is widely credited with saying, “The best way to predict the future is to invent it.” ReIn doesn’t prevent failure in multi-turn dialogues; it merely reshapes the landscape of potential failures, preparing for contingencies. The system adapts, but dependencies (the inherent limitations of language models and the unpredictable nature of human conversation) remain. Architecture isn’t structure; it’s a compromise frozen in time, a snapshot of anticipated chaos.

What’s Next?

The pursuit of robustness, as exemplified by this work, often feels like building a dam against a tide already committed to rising. ReIn proposes a localized intervention – a reasoning block to mend conversational fractures – but every such repair inevitably shifts the locus of future failure. The system will not simply accept correction; it will discover new ways to stumble. Each dependency introduced is a promise made to the past, a belief that this particular architecture will not be undone by emergent dialogue patterns.

The emphasis on test-time intervention is, perhaps, a tacit acknowledgment that perfect pre-training is an asymptote. The true challenge lies not in anticipating every error, but in cultivating systems that diagnose and correct themselves. Control, in this context, is an illusion that demands Service Level Agreements. The more interesting question isn’t ‘how do we prevent errors?’ but ‘how do we build agents that gracefully negotiate their inevitability?’

Future work will likely see a proliferation of such ‘reasoning blocks’, each a temporary bulwark against the chaos of natural language. Yet, the deeper current flows toward self-improving agents, systems that learn not just what to say, but how to recover when speech falters. The cycle continues: build, break, rebuild – a perpetual dance with complexity.

Original article: https://arxiv.org/pdf/2602.17022.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-23 06:09