Author: Denis Avetisyan

A new framework leverages the power of artificial intelligence and human expertise to assess the reliability of corporate sustainability ratings.

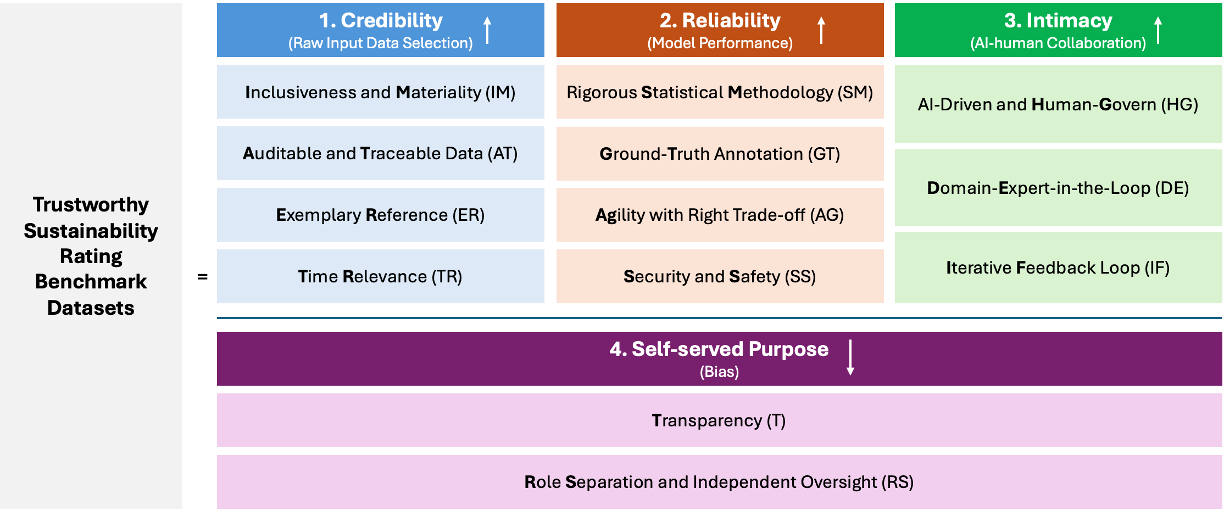

This paper introduces STRIDE, a human-AI collaborative approach for constructing benchmark datasets to improve data transparency and reduce discrepancies in ESG metrics.

Despite growing reliance on sustainability ratings for investment and corporate governance, significant discrepancies across agencies undermine their credibility and practical utility. This paper, ‘Toward Trustworthy Evaluation of Sustainability Rating Methodologies: A Human-AI Collaborative Framework for Benchmark Dataset Construction’, addresses this challenge by introducing a novel human-AI framework, comprising STRIDE and SR-Delta, designed to generate reliable benchmark datasets for evaluating sustainability assessments. The proposed approach leverages large language models to establish principled criteria and facilitate discrepancy analysis, ultimately fostering more transparent and comparable ESG metrics. Could this collaborative methodology unlock a new era of trustworthy sustainability evaluations and accelerate progress toward urgent global agendas?

The Shifting Sands of Sustainability Metrics

Despite escalating investor interest in Environmental, Social, and Governance (ESG) factors, sustainability ratings remain inconsistent and unreliable, which impedes the incorporation of these considerations into investment strategies. Analyses reveal substantial discrepancies in how rating agencies assess the same companies, leading to confusion and diluted impact. The core issue is the absence of standardized metrics and universally accepted definitions of sustainability, which forces evaluators to rely on varied interpretations of corporate disclosures. As a result, a company rated highly by one agency may be flagged as a significant risk by another. Investors then struggle to distinguish genuine progress from superficial reporting, and capital flows less efficiently toward truly sustainable ventures.

The variability in sustainability ratings originates in how companies report environmental, social, and governance data and in how those reports are then analyzed. Corporate disclosures often lack standardized metrics and rely instead on qualitative descriptions or selectively reported figures, which creates ambiguity for raters. Sustainability rating agencies must therefore make subjective interpretations when applying their methodologies, filling gaps and reconciling disparate reporting styles. Consequently, similar corporate actions can receive markedly different assessments depending on the weight and emphasis each agency’s framework gives to various factors, which diminishes the reliability and comparability of these evaluations.
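
To make the weighting effect concrete, here is a minimal sketch, assuming two hypothetical agencies that score the same pillar data with different pillar weights, plus a hypothetical 6.0 cutoff for a "leader" label. All numbers are invented for illustration and do not come from any real rating methodology.

```python
# Invented pillar scores (0-10) for a single company; both agencies see the same data.
PILLAR_SCORES = {"E": 8.0, "S": 4.0, "G": 5.0}

# Hypothetical weighting schemes; each set of weights sums to 1.
AGENCY_WEIGHTS = {
    "Agency A": {"E": 0.6, "S": 0.2, "G": 0.2},
    "Agency B": {"E": 0.2, "S": 0.5, "G": 0.3},
}

LEADER_CUTOFF = 6.0  # hypothetical threshold for a "leader" label


def composite(scores: dict, weights: dict) -> float:
    """Weighted average of pillar scores under one agency's weights."""
    return sum(scores[pillar] * w for pillar, w in weights.items())


def label(score: float) -> str:
    """Map a composite score to a coarse, hypothetical rating band."""
    return "leader" if score >= LEADER_CUTOFF else "laggard"


for agency, weights in AGENCY_WEIGHTS.items():
    s = composite(PILLAR_SCORES, weights)
    print(f"{agency}: {s:.2f} ({label(s)})")
```

Agency A arrives at about 6.60 and labels the company a leader, while Agency B arrives at about 5.10 and does not, even though both started from identical inputs.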

A critical impediment to the effective use of sustainability ratings is their lack of transparency, which erodes investor confidence and diminishes their practical value. When the methodologies underpinning these assessments remain opaque, stakeholders cannot tell why a company received a particular rating, which hinders meaningful comparison and due diligence. This ambiguity fuels skepticism about the validity of ESG scores, potentially leading to misallocation of capital and undermining efforts to drive positive environmental and social impact. The ratings are therefore of limited use for decision-making: investors struggle to reconcile conflicting assessments and to judge the true sustainability performance of prospective investments. Restoring faith in the integrity of ESG evaluations will require greater standardization and disclosure of rating methodologies.

Current sustainability assessments grapple with a fundamental challenge: inconsistent data quality. Evaluations of Environmental, Social, and Governance (ESG) performance often rely on self-reported corporate disclosures, which vary significantly in depth, standardization, and verification processes. This creates a landscape where comparable data is scarce, forcing rating agencies to navigate subjective interpretations and estimations. The absence of universally accepted metrics and rigorous auditing further complicates efforts to achieve objective evaluations; differing methodologies can yield vastly different results for the same company, undermining investor confidence and hindering the effective allocation of capital towards truly sustainable practices. Ultimately, the pursuit of consistent sustainability assessments requires a concerted effort to enhance data transparency, standardize reporting frameworks, and establish independent verification mechanisms.

Constructing a Robust Foundation: Introducing STRIDE

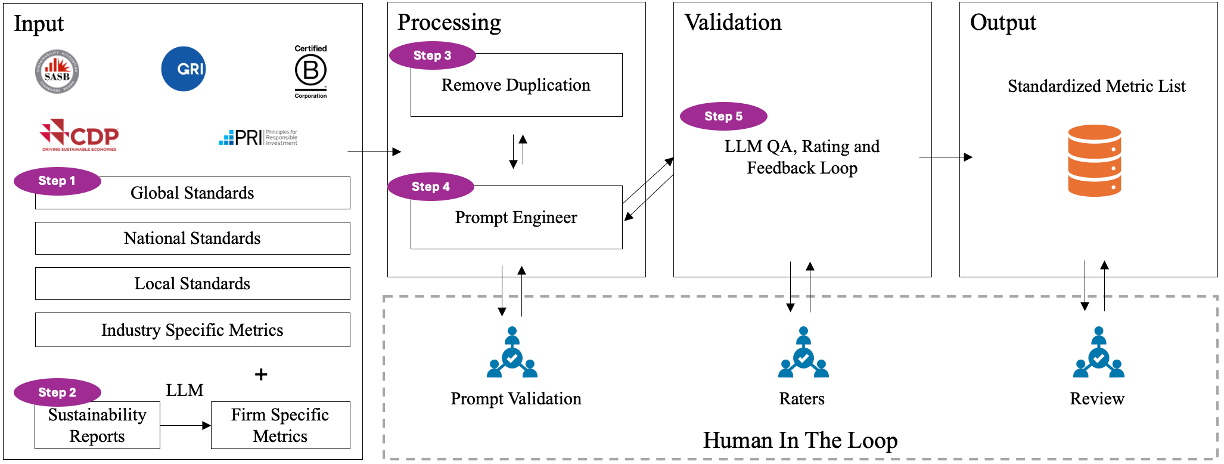

The STRIDE Framework addresses limitations in sustainability rating assessment by constructing dedicated Benchmark Datasets. These datasets are not pre-existing collections, but are systematically generated to facilitate comparative analysis of different sustainability ratings. This process involves identifying key performance indicators relevant to sustainability, sourcing data related to those indicators for a defined set of entities, and then applying that data to each rating scheme being evaluated. The resulting benchmark allows for quantifiable comparison of rating outcomes, revealing areas of convergence, divergence, and potential bias within the sustainability assessment landscape. This systematic approach ensures evaluations are grounded in consistent data, improving the reliability and interpretability of comparative results.
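
The benchmark workflow described above (fix a set of entities, source their indicator data, apply every rating scheme to that same data, then compare outcomes) can be sketched as follows. The entities, KPI names, the two scoring schemes, and the 0.2 divergence threshold are hypothetical stand-ins, not STRIDE's actual indicators or the rating methodologies it evaluates.

```python
# Hypothetical KPI values (0-1 scales) for a fixed set of entities; illustrative only.
BENCHMARK = {
    "Company X": {"emissions_intensity": 0.2, "board_independence": 0.8},
    "Company Y": {"emissions_intensity": 0.7, "board_independence": 0.9},
    "Company Z": {"emissions_intensity": 0.5, "board_independence": 0.3},
}


# Two hypothetical rating schemes mapping the same KPIs to a 0-1 score.
def scheme_env_heavy(kpis: dict) -> float:
    return 0.8 * (1 - kpis["emissions_intensity"]) + 0.2 * kpis["board_independence"]


def scheme_gov_heavy(kpis: dict) -> float:
    return 0.3 * (1 - kpis["emissions_intensity"]) + 0.7 * kpis["board_independence"]


SCHEMES = {"env_heavy": scheme_env_heavy, "gov_heavy": scheme_gov_heavy}


def score_matrix(data: dict, schemes: dict) -> dict:
    """Apply every scheme to every entity's KPIs: {entity: {scheme: score}}."""
    return {e: {name: fn(k) for name, fn in schemes.items()} for e, k in data.items()}


def divergence(matrix: dict, threshold: float = 0.2) -> dict:
    """Return entities whose scores spread across schemes by more than `threshold`."""
    flagged = {}
    for entity, scores in matrix.items():
        spread = max(scores.values()) - min(scores.values())
        if spread > threshold:
            flagged[entity] = round(spread, 3)
    return flagged


print(divergence(score_matrix(BENCHMARK, SCHEMES)))
```

Here the two schemes converge on Company X and stay close on Company Z, but diverge by 0.3 on Company Y, which is the kind of entity a benchmark would single out for closer comparison.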

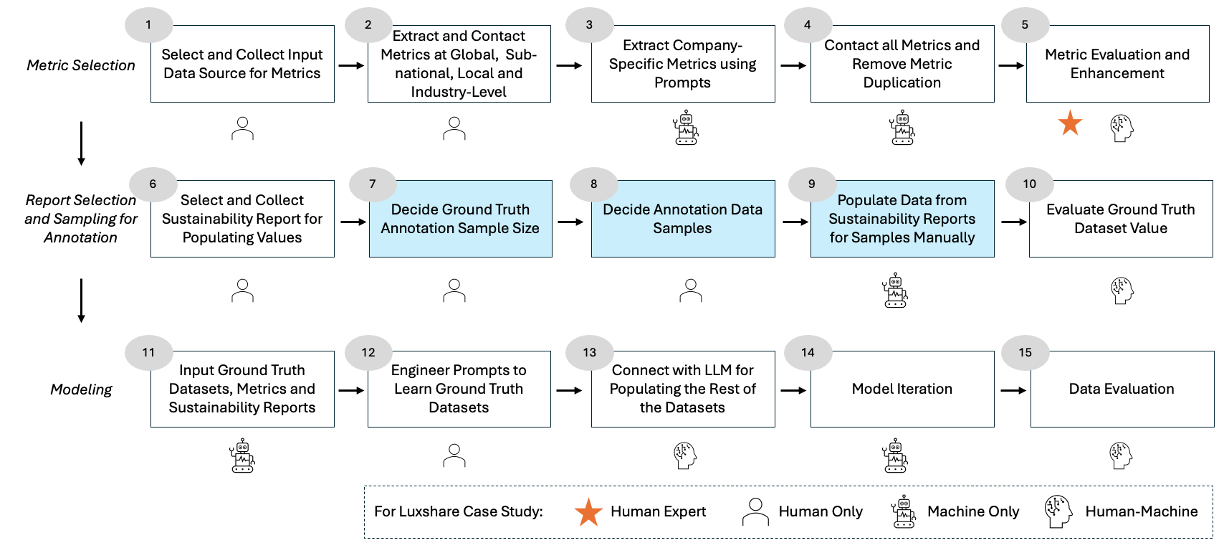

STRIDE uses Large Language Models (LLMs) to automate data extraction and sustainability rating scoring, improving both efficiency and scalability. Specifically, LLMs parse unstructured data from diverse sources, including corporate reports, news articles, and regulatory filings, identifying relevant metrics and assigning values according to predefined criteria. This automated scoring reduces manual effort and allows a large number of entities to be evaluated quickly. The LLM-driven approach also applies scoring methodologies consistently and can be updated as sustainability standards evolve, keeping the framework current as datasets and demand for sustainability assessments grow.
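
A minimal sketch of the extract-then-score step, assuming the model is reachable through any text-in, text-out function and is asked to reply in JSON. The prompt wording, the metric names, and the thresholds in `CRITERIA` are hypothetical. `fake_llm` only stands in for a real model call so the example runs offline; no particular LLM API is implied.

```python
import json
from typing import Callable

# Hypothetical scoring criteria: metric -> (threshold, points awarded if met).
CRITERIA = {
    "renewable_energy_share": (0.5, 2),  # at least 50% renewable energy
    "lost_time_injury_rate": (1.0, 1),   # at most 1.0 (lower is better)
}
LOWER_IS_BETTER = {"lost_time_injury_rate"}

PROMPT = (
    "Extract the following metrics from the text as JSON with numeric values, "
    "or null if not disclosed: {metrics}.\n\nText:\n{text}"
)


def extract_metrics(text: str, call_llm: Callable[[str], str]) -> dict:
    """Ask a model (any text-in/text-out callable) for metrics and parse its JSON reply."""
    reply = call_llm(PROMPT.format(metrics=", ".join(CRITERIA), text=text))
    data = json.loads(reply)
    # Keep only the requested metrics; anything absent is treated as undisclosed.
    return {m: data.get(m) for m in CRITERIA}


def score(metrics: dict) -> int:
    """Award points for each disclosed metric that meets its criterion."""
    total = 0
    for name, (threshold, points) in CRITERIA.items():
        value = metrics.get(name)
        if value is None:
            continue  # undisclosed metrics earn nothing
        met = value <= threshold if name in LOWER_IS_BETTER else value >= threshold
        if met:
            total += points
    return total


def fake_llm(prompt: str) -> str:
    """Offline stand-in for a model call; returns a fixed, invented reply."""
    return '{"renewable_energy_share": 0.62, "lost_time_injury_rate": null}'


metrics = extract_metrics("Sample sustainability report text.", fake_llm)
print(metrics, score(metrics))
```

Keeping the model call behind a plain callable means the same scoring code can be tested offline and pointed at whichever model a team actually uses.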

Credibility Assessment within the STRIDE framework utilizes a multi-faceted approach to validate data sources prior to inclusion in sustainability rating evaluations. This process incorporates source-based features, including organizational transparency, reporting methodology disclosures, and independent verification status. Furthermore, STRIDE evaluates content-based indicators such as factual consistency, bias detection, and evidence support. Data sources are assigned a credibility score based on weighted combinations of these features, with lower-scoring sources either excluded or subjected to increased scrutiny during the subsequent analysis stages. This rigorous assessment aims to mitigate the impact of unreliable or biased information on overall sustainability ratings and ensure the robustness of comparative benchmarks.
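
The weighted combination and the exclude-or-scrutinize split can be sketched as below. The feature names mirror the source- and content-based features listed above, but the weights, the 0-1 feature scales, and the two cut-offs are assumptions chosen for illustration; the paper's actual weighting is not reproduced here.

```python
# Hypothetical weights over features scored on a 0-1 scale; they sum to 1.
WEIGHTS = {
    "transparency": 0.20,            # source: organizational transparency
    "methodology_disclosed": 0.15,   # source: reporting methodology disclosure
    "independently_verified": 0.15,  # source: independent verification status
    "factual_consistency": 0.20,     # content: factual consistency
    "bias_free": 0.10,               # content: 1 minus detected bias
    "evidence_support": 0.20,        # content: evidence support
}
EXCLUDE_BELOW = 0.4     # hypothetical cut-off for dropping a source
SCRUTINIZE_BELOW = 0.7  # hypothetical cut-off for extra review


def credibility(features: dict) -> float:
    """Weighted sum of features; a missing feature conservatively counts as 0."""
    return sum(w * features.get(name, 0.0) for name, w in WEIGHTS.items())


def triage(features: dict) -> str:
    """Decide how a source is handled downstream based on its credibility score."""
    s = credibility(features)
    if s < EXCLUDE_BELOW:
        return "exclude"
    if s < SCRUTINIZE_BELOW:
        return "scrutinize"
    return "accept"


audited_report = dict.fromkeys(WEIGHTS, 1.0) | {"bias_free": 0.8}
news_article = {"transparency": 0.7, "methodology_disclosed": 0.3,
                "factual_consistency": 0.8, "bias_free": 0.6, "evidence_support": 0.5}
anonymous_blog = {"transparency": 0.1, "factual_consistency": 0.5,
                  "bias_free": 0.4, "evidence_support": 0.2}

for name, src in [("audited", audited_report), ("news", news_article), ("blog", anonymous_blog)]:
    print(name, round(credibility(src), 3), triage(src))
```

With these invented inputs the audited report is accepted (0.98), the news article is routed to extra scrutiny (0.505), and the anonymous blog is excluded (0.2).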

STRIDE takes a collaborative human-AI approach to sustainability rating assessment. Large Language Models automate the initial data extraction and scoring to generate preliminary ratings, which human experts then review and validate, contributing nuanced judgment and contextual understanding. The process combines the scalability and efficiency of AI with the critical thinking and domain expertise that accurate evaluations require, with the aim of producing more reliable sustainability ratings than purely automated or purely manual systems.
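
One way to picture the review loop is a record that keeps the automated score and any expert override side by side, so every final rating can be traced to whoever set it. This is a hypothetical data structure for illustration, not STRIDE's implementation; `toy_expert` stands in for a human reviewer and its rule is invented.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Assessment:
    company: str
    ai_score: float                      # preliminary score from the automated stage
    human_score: Optional[float] = None  # set only when an expert overrides
    note: str = ""                       # the expert's reason for an override

    @property
    def final_score(self) -> float:
        # The expert's judgment takes precedence when present.
        return self.human_score if self.human_score is not None else self.ai_score


def review(assessments: list, expert_fn) -> list:
    """Send each preliminary score to an expert; expert_fn returns (override or None, note)."""
    for a in assessments:
        override, note = expert_fn(a)
        if override is not None:
            a.human_score, a.note = override, note
    return assessments


def override_rate(assessments: list) -> float:
    """Share of assessments where the expert changed the automated score."""
    if not assessments:
        return 0.0
    return sum(a.human_score is not None for a in assessments) / len(assessments)


def toy_expert(a: Assessment):
    """Hypothetical reviewer: overrides one company based on invented context."""
    if a.company == "Company B":
        return 6.0, "supplier audit contradicts the reported figures"
    return None, ""


items = review([Assessment("Company A", 7.0), Assessment("Company B", 8.5)], toy_expert)
print([(a.company, a.final_score) for a in items], override_rate(items))
```

Tracking the override rate over time gives a rough signal of how often the automated stage needs correcting.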

Unearthing Discrepancies: SR-Delta in Action

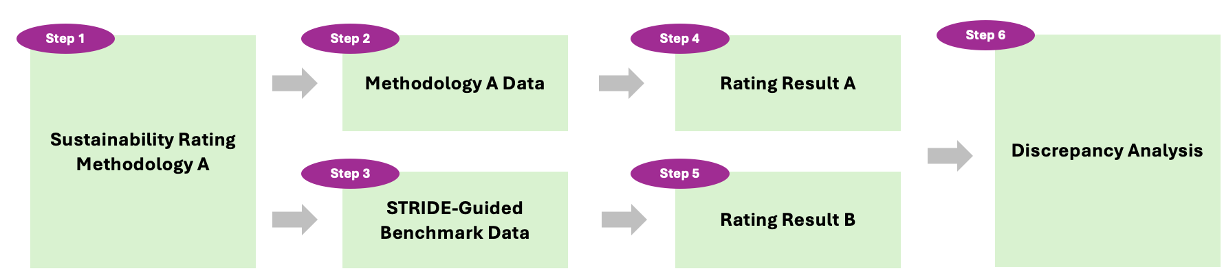

SR-Delta functions as a discrepancy analysis procedure embedded within the STRIDE framework, specifically engineered to identify inconsistencies and potential biases present in established Sustainability Ratings. This methodology systematically compares data sources and rating criteria, flagging instances where reported information deviates from supporting evidence or where subjective interpretations may influence the assigned rating. The procedure is not intended to replace existing rating systems, but rather to provide an independent verification process, highlighting areas requiring further investigation and promoting greater transparency in ESG assessments. Discrepancies identified through SR-Delta can relate to data gaps, conflicting information, or inconsistencies in the application of rating methodologies across different companies or sectors.
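
The first two discrepancy types named above, data gaps and conflicting information, can be sketched as a per-metric comparison between the values a rating relied on and independently sourced evidence. The 10% relative tolerance, the metric names, and the numbers are hypothetical; this illustrates the idea rather than SR-Delta's actual procedure.

```python
from typing import Optional


def classify(reported: Optional[float], evidence: Optional[float],
             rel_tol: float = 0.1) -> str:
    """Label one metric as a data gap, a conflict, or consistent (hypothetical rule)."""
    if reported is None or evidence is None:
        return "data_gap"
    denom = max(abs(reported), abs(evidence))
    if denom == 0:
        return "consistent"  # both values are zero
    if abs(reported - evidence) / denom > rel_tol:
        return "conflict"
    return "consistent"


def sr_delta_sketch(rating_inputs: dict, source_evidence: dict,
                    rel_tol: float = 0.1) -> dict:
    """Compare every metric used by a rating against independently sourced evidence."""
    metrics = rating_inputs.keys() | source_evidence.keys()
    return {m: classify(rating_inputs.get(m), source_evidence.get(m), rel_tol)
            for m in sorted(metrics)}


# Invented values for illustration only.
RATING_INPUTS = {"scope1_emissions_t": 85_000.0, "board_independence_pct": 40.0,
                 "water_withdrawal_ml": 120.0}
SOURCE_EVIDENCE = {"scope1_emissions_t": 62_000.0, "board_independence_pct": 41.0}

print(sr_delta_sketch(RATING_INPUTS, SOURCE_EVIDENCE))
```

A relative rather than absolute tolerance keeps the rule usable across metrics measured on very different scales.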

Application of the SR-Delta methodology to MSCI ESG Ratings identified multiple instances of disclosure ambiguity and data quality concerns contributing to rating discrepancies. Specifically, inconsistencies were found regarding the reporting of key performance indicators (KPIs) across different company disclosures, with variations in definitions and calculation methodologies impacting comparability. Further analysis revealed instances where reported data lacked sufficient supporting evidence or relied on self-reported metrics without independent verification. These issues were observed across multiple sectors and company sizes, indicating a systemic challenge in ensuring consistent and reliable ESG data reporting within the MSCI framework.

Disclosure ambiguity undermines the reliability of ESG performance assessments because inconsistent or unclear reported data introduces uncertainty into sustainability ratings. The ambiguity stems from variations in reporting standards, differing interpretations of ESG metrics, and information that is too sparse for accurate evaluation. Addressing it requires reporting frameworks that prioritize standardized definitions, require comprehensive disclosure, and apply ESG criteria consistently across all assessed entities. Minimizing disclosure ambiguity is crucial for fostering investor confidence and enabling decisions based on transparent, verifiable ESG data.
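
One small, concrete piece of a standardized definition is a canonical unit. The sketch below assumes a hypothetical agreed unit of tonnes CO2e: it converts disclosures that use a known unit and sets aside those that do not, rather than guessing. Company names, values, and the unit table are invented.

```python
# Hypothetical canonical unit: tonnes of CO2-equivalent.
TO_TONNES = {"t": 1.0, "kt": 1_000.0, "Mt": 1_000_000.0}


def normalize(value: float, unit: str):
    """Convert a disclosure to tonnes, or return None if its unit is not in the agreed table."""
    factor = TO_TONNES.get(unit)
    return None if factor is None else value * factor


def partition(disclosures):
    """Split (company, value, unit) records into comparable values and ambiguous ones."""
    comparable, ambiguous = {}, []
    for company, value, unit in disclosures:
        tonnes = normalize(value, unit)
        if tonnes is None:
            ambiguous.append(company)
        else:
            comparable[company] = tonnes
    return comparable, ambiguous


DISCLOSURES = [
    ("Company A", 12.5, "kt"),
    ("Company B", 9_800.0, "t"),
    ("Company C", 3.0, "thousand units"),  # unit outside the agreed definition
]
comparable, ambiguous = partition(DISCLOSURES)
print(comparable)  # {'Company A': 12500.0, 'Company B': 9800.0}
print(ambiguous)   # ['Company C']
```

Flagging, rather than silently converting, the ambiguous record keeps the uncertainty visible to whoever consumes the rating.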

A case study applying the STRIDE framework and the SR-Delta methodology to Luxshare Precision Industry Co. produced a human-machine trust score of 0.56. The score quantifies the agreement between human expert assessments and the automated analysis provided by SR-Delta. The result suggests that automated discrepancy analysis can contribute to more consistent ESG evaluation, and it supports integrating such analysis into sustainability rating processes alongside, rather than in place of, expert review.
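
How the 0.56 trust score is computed is not described here. As a point of reference only, a standard way to measure agreement between a human and an automated rater is Cohen's kappa, which discounts the agreement expected by chance. The sketch below implements kappa as a swapped-in technique, not as STRIDE's scoring method, and the "flag"/"ok" labels are invented.

```python
from collections import Counter


def cohens_kappa(a: list, b: list) -> float:
    """Cohen's kappa for two equal-length sequences of categorical labels."""
    if len(a) != len(b) or not a:
        raise ValueError("need two non-empty sequences of equal length")
    n = len(a)
    observed = sum(x == y for x, y in zip(a, b)) / n
    ca, cb = Counter(a), Counter(b)
    # Chance agreement: probability both raters pick the same label independently.
    expected = sum(ca[k] * cb[k] for k in ca.keys() | cb.keys()) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)


# Invented labels: did each rater flag a disclosure as problematic?
human = ["flag", "ok", "ok", "flag", "ok", "ok", "flag", "ok"]
machine = ["flag", "ok", "flag", "flag", "ok", "ok", "ok", "ok"]
print(round(cohens_kappa(human, machine), 3))  # 0.467
```

Raw agreement here is 75%, but because both raters mostly say "ok", much of that would occur by chance, which is why kappa comes out lower.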

A Deep Dive: Luxshare Precision Industry Under the STRIDE Lens

The application of the STRIDE framework to Luxshare Precision Industry serves as a compelling case study in evaluating Environmental, Social, and Governance (ESG) performance beyond conventional ratings. This analysis demonstrates how a structured, data-centric approach can move past reliance on potentially subjective assessments, offering a more nuanced understanding of a company’s sustainability profile. By systematically examining source data and identifying inconsistencies, STRIDE reveals the underlying factors influencing ESG scores, providing stakeholders with actionable insights into a company’s true commitment to responsible practices. The framework’s practical application to Luxshare highlights its potential to enhance transparency and accountability within the ESG landscape, ultimately fostering more informed investment decisions and driving positive change.

The application of SR-Delta to Luxshare Precision Industry’s ESG data uncovered notable inconsistencies within the information used to calculate its MSCI Ratings. Detailed analysis revealed discrepancies between publicly reported figures and those incorporated into the rating agency’s assessment, specifically regarding waste management practices and employee training programs. These variances weren’t indicative of intentional misreporting, but rather stemmed from data sourcing ambiguities and differing interpretations of reporting standards. SR-Delta’s methodology pinpointed these inconsistencies, highlighting the potential for inaccuracies in relying solely on aggregated ESG scores and demonstrating the value of granular, source-level data verification for a more reliable sustainability evaluation.

A rigorous, data-driven approach to evaluating sustainability performance offers a critical advantage over traditional methods often reliant on subjective assessments and limited data points. This is particularly evident when analyzing complex global supply chains, where inconsistencies and inaccuracies can significantly skew overall ESG ratings. By employing frameworks like SR-Delta, an organization can move beyond broad generalizations and pinpoint specific discrepancies within reported data, fostering greater transparency and accountability. Such an approach not only enhances the objectivity of evaluations, but also allows for a more nuanced understanding of a company’s true sustainability profile, ultimately leading to more informed investment decisions and a more accurate reflection of genuine environmental and social impact.

The application of the STRIDE framework to Luxshare Precision Industry produced a detailed ESG performance profile, with a Credibility Score of 0.85 and a Reliability Score of 0.83, indicating strong data validity and consistency in reported metrics. However, the Intimacy Score of 0.32 suggests a limited connection between reported sustainability initiatives and the company’s core business operations, a potential area for improvement. The Self-Served Purpose Score reached 1.00, which this analysis reads as a clear commitment to the sustainability goals the company itself articulates. Taken together, these scores show the framework’s capacity for a multifaceted evaluation that goes beyond a single rating to highlight both strengths and weaknesses in a company’s ESG performance.
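
The four component names parallel the trust equation popularized by Maister, Green and Galford in The Trusted Advisor, where trustworthiness = (credibility + reliability + intimacy) / self-orientation. Treating the Self-Served Purpose Score as the self-orientation term, and assuming STRIDE aggregates its components this way, are both assumptions made only for this sketch.

```python
def trust_equation(credibility: float, reliability: float,
                   intimacy: float, self_orientation: float) -> float:
    """Textbook trust equation (C + R + I) / S; assumed, not confirmed, for STRIDE."""
    if self_orientation <= 0:
        raise ValueError("self_orientation must be positive")
    return (credibility + reliability + intimacy) / self_orientation


# Luxshare component scores as reported; using Self-Served Purpose as the
# self-orientation term is an assumption.
luxshare_trust = trust_equation(0.85, 0.83, 0.32, 1.00)
print(round(luxshare_trust, 2))  # 2.0
```

In this textbook form the first three components add up in the numerator and self-orientation divides them. Because it yields 2.00 rather than the 0.56 trust score reported for the case study, either the paper's aggregation differs from this form or the 0.56 figure draws on other inputs.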

Charting a Course Towards Transparent and Reliable ESG Assessments

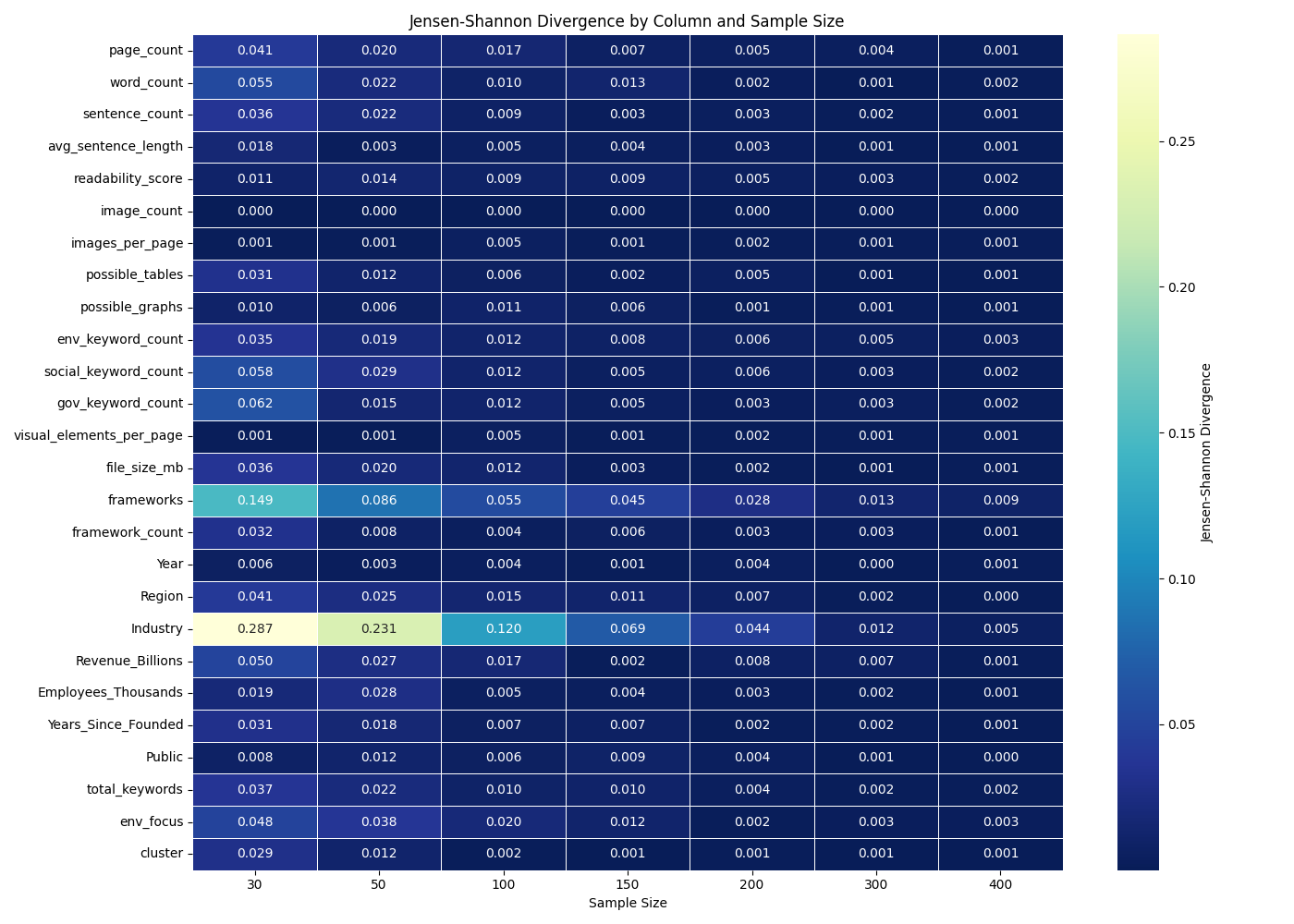

A fundamental challenge in evaluating corporate sustainability lies in the current lack of standardized methodologies and consistently high-quality data. The proliferation of varying ESG reporting frameworks and metrics creates ambiguity, hindering accurate comparisons between companies and obscuring genuine progress. Efforts to consolidate these frameworks are underway, but simultaneously improving the underlying data is paramount; this includes verifying data sources, increasing granularity, and employing robust auditing processes. By establishing common definitions, measurement techniques, and data validation procedures, a more resilient and dependable system for assessing sustainability performance can be constructed, ultimately enabling stakeholders to confidently gauge impact and make informed decisions regarding environmental, social, and governance factors.

The confluence of artificial intelligence and human judgment represents a pivotal advancement in environmental, social, and governance (ESG) assessment. Systems like STRIDE demonstrate the potential of AI to process vast datasets and identify complex patterns indicative of sustainability performance, far exceeding the capacity of manual analysis. However, algorithms require contextualization and validation; therefore, integrating human expertise is not merely supplementary, but essential. This collaborative approach allows for nuanced interpretation of data, identification of potential biases within algorithms, and ensures that assessments reflect real-world complexities often missed by purely quantitative methods. Consequently, this synergy unlocks deeper, more actionable insights, enabling continuous improvement in ESG methodologies and fostering a more dynamic and reliable evaluation process.

The efficacy of Environmental, Social, and Governance (ESG) assessments hinges critically on demonstrable transparency throughout reporting and rating processes. Currently, a lack of clarity regarding methodologies, data sources, and weighting criteria often obscures the rationale behind ESG scores, breeding skepticism amongst investors and stakeholders. Enhanced transparency demands standardized reporting frameworks, publicly accessible data, and detailed explanations of rating methodologies. This openness not only fosters trust in the assessments themselves, but also drives accountability for the companies being evaluated; when data and methodologies are open to scrutiny, organizations are incentivized to genuinely improve their sustainability performance, rather than engage in superficial ‘greenwashing’. Ultimately, a move towards transparent ESG assessments transforms these evaluations from subjective opinions into objective, reliable indicators of a company’s commitment to sustainable practices.

A future where environmental, social, and governance (ESG) assessments are both transparent and reliable promises a fundamental shift in investment strategies and global impact. Such a system moves beyond superficial metrics, providing investors with the granular, trustworthy data needed to accurately gauge a company’s true sustainability performance and long-term risk profile. This enhanced clarity isn’t merely about avoiding “greenwashing”; it facilitates capital allocation towards genuinely impactful ventures, incentivizing businesses to prioritize responsible practices. Consequently, reliable ESG assessments have the potential to unlock substantial positive change, directing financial flows towards solutions for pressing global challenges and fostering a more sustainable and equitable world for generations to come.

The pursuit of trustworthy evaluation, as detailed in this framework, echoes a fundamental principle of system longevity. The STRIDE methodology, with its emphasis on human-AI collaboration for benchmark dataset construction, isn’t simply about achieving accurate ESG metrics; it’s about building a resilient system capable of adapting to evolving data landscapes. As Robert Tarjan once observed, “Program structure is more important than cleverness.” This sentiment applies directly to the construction of sustainability ratings: a well-structured, transparent dataset built with collaborative intelligence will endure far longer and provide more reliable insights than one relying on isolated, ingenious solutions. The framework recognizes that technical debt (in this case, opaque data or inconsistent methodologies) accumulates over time, ultimately threatening the system’s long-term viability.

What’s Next?

The pursuit of trustworthy sustainability ratings, as outlined by this work, inevitably encounters the fundamental limitations inherent in all evaluative systems. Any improvement in methodology ages faster than expected; the very act of refining metrics introduces new avenues for decay, for divergence between intended signal and observed outcome. The STRIDE framework, while offering a potent means of data curation and discrepancy reduction, does not eliminate the core problem: the arrow of time erodes the validity of even the most meticulously constructed benchmarks.

Future work must address not merely the how of rating, but the duration of trust. The emphasis should shift from striving for perfect, static assessments – a futile endeavor – to understanding the rate of metric obsolescence. A critical line of inquiry involves modeling the lifespan of ‘sustainability’ itself, acknowledging that definitions and priorities inevitably shift.

Rollback, the journey back along the arrow of time to reconstruct past assessments with current understanding, will become increasingly important. This necessitates robust data lineage and versioning – not simply to correct errors, but to quantify the cost of delayed refinement. Ultimately, the field must embrace the transient nature of evaluation, recognizing that the goal isn’t to achieve trustworthy ratings, but to manage their inevitable decline.
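
The data lineage and versioning called for above can be sketched as an append-only history per company. Every assessment is stored with its date, methodology version, and input snapshot, so a past rating can be retrieved as it stood on a given day and re-scored with the current method to measure drift. The class names, methodology labels, and the scoring lambda are hypothetical.

```python
from bisect import bisect_right
from dataclasses import dataclass, field
from datetime import date


@dataclass(frozen=True)
class Version:
    recorded_on: date
    methodology: str  # hypothetical methodology label, e.g. "v1"
    score: float
    inputs: tuple     # frozen snapshot of the data the score was computed from


@dataclass
class AssessmentHistory:
    """Append-only history for one company; past versions are never overwritten."""
    versions: list = field(default_factory=list)

    def record(self, v: Version) -> None:
        if self.versions and v.recorded_on < self.versions[-1].recorded_on:
            raise ValueError("versions must be appended in date order")
        self.versions.append(v)

    def as_of(self, when: date):
        """Return the assessment that was current on `when`, or None if none existed."""
        dates = [v.recorded_on for v in self.versions]
        i = bisect_right(dates, when)
        return self.versions[i - 1] if i else None


def rescore_drift(history: AssessmentHistory, when: date, current_method):
    """Re-run today's methodology on the inputs stored at `when`; return new minus old."""
    past = history.as_of(when)
    if past is None:
        return None
    return current_method(past.inputs) - past.score


h = AssessmentHistory()
h.record(Version(date(2023, 6, 1), "v1", 6.0, (0.6, 0.4)))
h.record(Version(date(2024, 6, 1), "v2", 5.5, (0.7, 0.4)))
current = lambda inputs: 10 * (0.5 * inputs[0] + 0.5 * inputs[1])  # hypothetical method
print(h.as_of(date(2023, 12, 31)).methodology, rescore_drift(h, date(2023, 12, 31), current))
```

Storing input snapshots, not just scores, is what makes the re-scoring possible: the drift value is one simple way to express the cost of a refinement arriving late.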

Original article: https://arxiv.org/pdf/2602.17106.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-23 02:39