Author: Denis Avetisyan

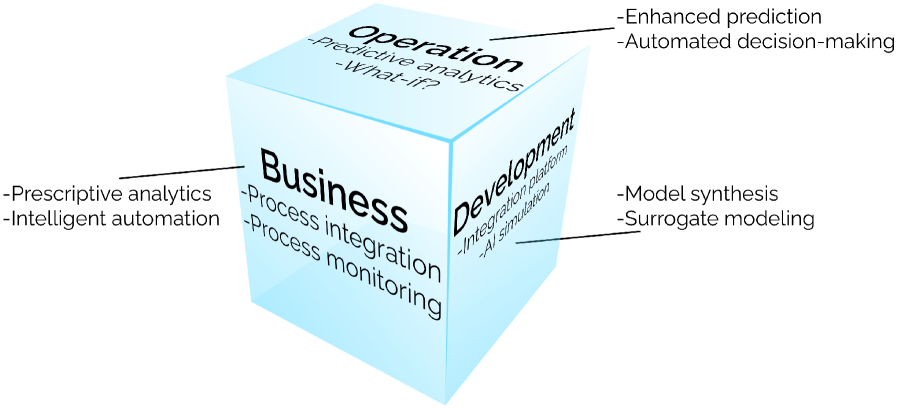

The convergence of artificial intelligence, modeling & simulation, and digital twins is unlocking unprecedented capabilities for system understanding and predictive optimization.

This review explores the application of artificial intelligence techniques to enhance modeling and simulation within the context of digital twins, focusing on data integration, predictive maintenance, and system dynamics.

Despite increasing computational power, accurately representing complex physical systems remains a significant challenge for predictive modeling. This paper, ‘Artificial Intelligence for Modeling & Simulation in Digital Twins’, explores the convergence of artificial intelligence (AI) and modeling & simulation (M&S) within the framework of digital twins to address this limitation. By detailing the synergistic relationship between these technologies, we demonstrate how integrated systems can enhance system understanding, prediction, and optimization across diverse applications. What future advancements will unlock even greater potential for intelligent, data-driven insights through these combined approaches?

The Inevitable Mirror: From Physical Prototypes to Virtual Prophecy

Historically, engineering projects have been intrinsically linked to the creation of physical prototypes – tangible, often expensive, representations of a design. This reliance necessitates a cycle of build, test, and refine, frequently uncovering issues late in the development process and triggering costly revisions. Furthermore, maintenance strategies have largely been reactive, addressing failures only after they occur, leading to downtime and unexpected expenses. This traditional approach not only extends project timelines and inflates budgets, but also limits opportunities for optimization and innovation; improvements are often discovered through accidental findings rather than proactive analysis. The inherent inefficiencies of this method stand in stark contrast to emerging technologies that prioritize virtual modeling and predictive capabilities.

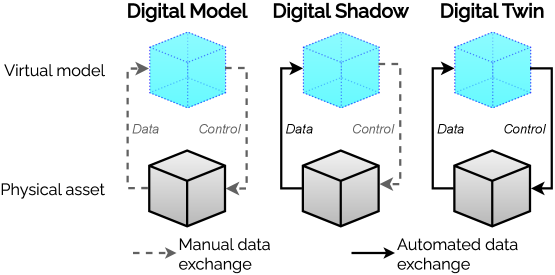

Digital Twins represent a fundamental shift in how systems are designed, maintained, and optimized, moving beyond traditional reactive approaches to embrace proactive strategies. These virtual replicas of physical assets aren’t simply static models; they dynamically mirror the condition and performance of their real-world counterparts through a continuous flow of real-time data. This capability facilitates predictive maintenance, allowing potential issues to be identified and addressed before failures occur, significantly reducing downtime and costs. Consequently, the technology is fueling substantial growth within the broader digital transformation market, currently experiencing a remarkable 60% Compound Annual Growth Rate (CAGR), as industries increasingly recognize the value of optimizing performance and extending asset lifecycles through virtual simulation and analysis.

At the heart of digital twin technology lies the creation of a remarkably detailed virtual counterpart to a physical asset – be it a jet engine, a wind turbine, or even an entire city. This isn’t merely a 3D model; it’s a dynamic, living representation that continuously updates to mirror the real-world object’s condition and performance. Through the constant influx of data from sensors and other monitoring systems, the digital twin evolves, accurately reflecting changes in temperature, stress, wear, and other critical parameters. This high-fidelity mirroring enables engineers and operators to observe the asset’s behavior in real-time, analyze potential issues before they arise, and ultimately optimize its lifespan and efficiency. The accuracy of this virtual reflection is paramount, as it forms the foundation for predictive maintenance, performance optimization, and informed decision-making throughout the asset’s lifecycle.

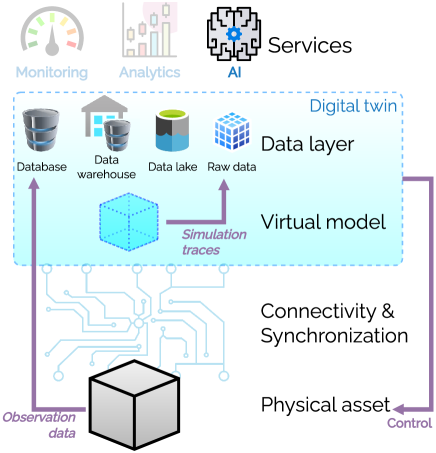

The creation of a functional digital twin hinges on the confluence of real-time data streams and sophisticated simulation capabilities. Sensors embedded within a physical asset continuously transmit data – encompassing temperature, pressure, vibration, and operational status – to its virtual counterpart. This influx of information isn’t merely recorded; it dynamically updates the digital twin’s state, creating a near-instantaneous reflection of the physical world. However, raw data alone is insufficient. Advanced simulation techniques, including finite element analysis, computational fluid dynamics, and machine learning algorithms, process this data to predict future behavior, identify potential failures, and optimize performance. This synergistic interplay between live data and predictive modeling allows the digital twin to move beyond simple mirroring, becoming a powerful tool for proactive decision-making and enhanced operational efficiency.

The Virtual Core: Constructing the Data Ecosystem

The foundation of an effective Digital Twin is a robust data layer, responsible for the bidirectional flow of information between the physical twin and the virtual model. This layer must reliably acquire data from the physical asset via sensors, IoT devices, and other data sources, and then preprocess, validate, and store it. At the same time, the data layer ingests outputs from simulations – whether based on φ-function approximations or stochastic modeling – and integrates them with real-world data to refine the virtual representation. Data formats must be standardized and scalable to accommodate the volume, velocity, and variety of incoming information, and the architecture should support real-time or near-real-time updates to keep the physical and virtual instances synchronized.
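The acquire, validate, store, and compare steps described above can be sketched in a few lines. This is a minimal illustration, not the paper's architecture: the `Reading` and `DataLayer` names, the sensor ID, and the plausibility bounds are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Reading:
    sensor_id: str
    timestamp: float  # seconds since an arbitrary epoch
    value: float


@dataclass
class DataLayer:
    # Hypothetical plausibility bounds per sensor, used for validation.
    bounds: Dict[str, Tuple[float, float]]
    store: List[Reading] = field(default_factory=list)
    rejected: List[Reading] = field(default_factory=list)
    state: Dict[str, float] = field(default_factory=dict)

    def ingest(self, reading: Reading) -> bool:
        """Validate a reading, store it, and update the twin's latest state."""
        low, high = self.bounds.get(
            reading.sensor_id, (float("-inf"), float("inf"))
        )
        if not (low <= reading.value <= high):
            self.rejected.append(reading)  # keep rejects for later inspection
            return False
        self.store.append(reading)
        self.state[reading.sensor_id] = reading.value
        return True

    def residual(self, sensor_id: str, simulated_value: float) -> float:
        """Latest measurement minus a simulation output for the same quantity."""
        return self.state[sensor_id] - simulated_value


layer = DataLayer(bounds={"bearing_temp_c": (-40.0, 150.0)})
layer.ingest(Reading("bearing_temp_c", 0.0, 71.5))
layer.ingest(Reading("bearing_temp_c", 1.0, 900.0))  # out of range, rejected
print(layer.state, len(layer.rejected))
print(layer.residual("bearing_temp_c", simulated_value=70.0))
```

The residual between measurement and simulation is the kind of signal that could be used to recalibrate the virtual model. A production data layer would also need persistence, time alignment, and schema handling, all omitted here.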

Effective Digital Twin construction requires a variety of modeling and simulation techniques to represent the behavior of the physical asset accurately. Physics-based models use first principles to simulate continuous physical phenomena, while discrete-event approaches, such as Petri nets, model a system as a sequence of events occurring at specific points in time. Petri nets are particularly useful for analyzing systems with concurrent activities and resource contention. The choice of technique depends on the characteristics of the system being modeled, and some systems require a combination of approaches to capture the relevant dynamics and produce a nuanced, accurate virtual representation of the physical twin.
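To make the Petri net idea concrete, the following is a minimal sketch of a place/transition net in which two jobs contend for one machine. The class, the place names, and the scenario are illustrative assumptions and do not come from the paper.

```python
from typing import Dict, Tuple


class PetriNet:
    """Minimal place/transition net with integer token counts."""

    def __init__(self, marking: Dict[str, int]):
        self.marking = dict(marking)
        self.transitions: Dict[str, Tuple[Dict[str, int], Dict[str, int]]] = {}

    def add_transition(self, name: str, inputs: Dict[str, int],
                       outputs: Dict[str, int]) -> None:
        self.transitions[name] = (inputs, outputs)

    def enabled(self, name: str) -> bool:
        """A transition is enabled when every input place holds enough tokens."""
        inputs, _ = self.transitions[name]
        return all(self.marking.get(p, 0) >= n for p, n in inputs.items())

    def fire(self, name: str) -> None:
        """Consume input tokens and produce output tokens."""
        if not self.enabled(name):
            raise ValueError(f"transition {name!r} is not enabled")
        inputs, outputs = self.transitions[name]
        for place, n in inputs.items():
            self.marking[place] -= n
        for place, n in outputs.items():
            self.marking[place] = self.marking.get(place, 0) + n


# Two waiting jobs compete for a single machine (the shared resource).
net = PetriNet({"waiting": 2, "machine_free": 1, "processing": 0, "done": 0})
net.add_transition("start", {"waiting": 1, "machine_free": 1}, {"processing": 1})
net.add_transition("finish", {"processing": 1}, {"done": 1, "machine_free": 1})

net.fire("start")
blocked = not net.enabled("start")  # second job must wait for the machine
net.fire("finish")
net.fire("start")
net.fire("finish")
print(blocked, net.marking)
```

The `blocked` flag shows resource contention directly: while the machine token is consumed, the second `start` cannot fire.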

System Dynamics (SD) and Agent-Based Modeling (ABM) offer complementary approaches to simulating complex systems. SD uses feedback loops, stocks, and flows to model aggregate behavior; it focuses on relationships between system-level variables and suits high-level analysis of interconnected components. ABM, by contrast, simulates the actions and interactions of autonomous agents, so that macro-level patterns emerge from micro-level behaviors. The choice between SD, ABM, or a hybrid of the two depends on the system's characteristics: ABM is often preferred when heterogeneity among individual agents and an explicit representation of their interactions are crucial, while SD excels at representing continuous variables and delays within a system's structure. Both methods help expose non-linear behaviors and unintended consequences in complex adaptive systems.
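As a small illustration of the SD side only, the sketch below integrates a single stock with a constant inflow and an outflow proportional to the stock, which forms a balancing feedback loop. The parameter values and the use of explicit Euler integration are assumptions chosen for brevity.

```python
def simulate_stock(inflow: float, drain_rate: float, dt: float,
                   steps: int, initial: float = 0.0) -> list:
    """Integrate dS/dt = inflow - drain_rate * S with explicit Euler steps."""
    stock = initial
    history = [stock]
    for _ in range(steps):
        outflow = drain_rate * stock         # feedback: outflow depends on stock
        stock += dt * (inflow - outflow)     # net flow accumulates in the stock
        history.append(stock)
    return history


# Hypothetical values: 10 units/hour arriving, 50% of the stock leaving per hour.
history = simulate_stock(inflow=10.0, drain_rate=0.5, dt=0.1, steps=400)
equilibrium = 10.0 / 0.5  # analytic steady state: inflow / drain_rate
print(round(history[-1], 3), equilibrium)
```

The stock rises toward the level at which outflow balances inflow. An ABM treatment of the same system would instead track each unit or agent individually, at higher computational cost.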

Surrogate modeling, also known as meta-modeling, addresses computational limitations in Digital Twin simulations by creating simplified approximations of complex phenomena. These approximations, often based on machine learning techniques like regression or neural networks, are trained on data generated by high-fidelity simulations or physical experiments. The resulting surrogate model can then be evaluated much faster than the original model, enabling real-time or near-real-time predictions and optimizations. This approach is particularly useful when evaluating a large number of design iterations or scenarios, or when integrating the Digital Twin with real-time control systems where latency is critical. While sacrificing some accuracy compared to the full-fidelity model, surrogate modeling provides a computationally efficient alternative for specific aspects of the simulation where detailed accuracy is not paramount.
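The pattern of sampling an expensive model once and then answering queries cheaply can be sketched as follows. Two things here are assumptions: `high_fidelity_model` is a made-up stand-in for a costly simulation, and the surrogate is a simple piecewise-linear interpolation table rather than the regression or neural-network surrogates mentioned above.

```python
import bisect
import math


def high_fidelity_model(load: float) -> float:
    """Stand-in for an expensive simulation (hypothetical response curve)."""
    return math.sin(load) + 0.1 * load ** 2


def build_surrogate(model, lo: float, hi: float, n_samples: int):
    """Sample the model on a uniform grid and return a cheap interpolant."""
    xs = [lo + i * (hi - lo) / (n_samples - 1) for i in range(n_samples)]
    ys = [model(x) for x in xs]  # the only expensive evaluations

    def surrogate(x: float) -> float:
        if not lo <= x <= hi:
            raise ValueError("surrogate is only valid inside the sampled range")
        # Locate the grid interval containing x, clamped to a valid index.
        i = min(max(bisect.bisect_right(xs, x) - 1, 0), n_samples - 2)
        t = (x - xs[i]) / (xs[i + 1] - xs[i])
        return ys[i] + t * (ys[i + 1] - ys[i])

    return surrogate


surrogate = build_surrogate(high_fidelity_model, 0.0, 5.0, 51)

# Compare surrogate and full model on points that are not grid nodes.
probe_points = [i * 0.013 for i in range(385)]
worst_error = max(abs(surrogate(x) - high_fidelity_model(x)) for x in probe_points)
print(worst_error)
```

The accuracy trade-off is visible in `worst_error`: a denser grid reduces it but costs more high-fidelity runs up front. The surrogate also refuses to extrapolate, since approximations of this kind are only trustworthy inside the region they were trained on.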

The Oracle Within: Machine Learning and Predictive Control

Machine Learning, and specifically Reinforcement Learning (RL), facilitates automated optimization and control within Digital Twin environments by training agents to interact with the virtual representation of a physical asset. These RL agents learn through trial and error, receiving rewards for actions that improve performance and penalties for detrimental ones, ultimately identifying optimal control strategies. This approach moves beyond traditional rule-based systems, allowing the Digital Twin to adapt to changing conditions and complex scenarios without explicit programming. The trained agents can then be deployed to control or provide recommendations for the corresponding Physical Twin, leading to improvements in operational efficiency and performance. This capability is particularly valuable for complex systems where manual optimization is impractical or impossible.
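The trial-and-error loop described above can be illustrated with tabular Q-learning on a toy twin. Everything about the environment is an assumption made for this sketch: a machine with four discrete temperature levels, a "high" speed that earns more but heats it, a "low" speed that cools it, and a penalty for overheating.

```python
import random

N_LEVELS = 4  # temperature levels 0..3; going past level 3 means overheating
ACTIONS = ("low", "high")


def twin_step(temp: int, action: str):
    """Toy twin dynamics: return (next_temp, reward, done)."""
    if action == "low":
        return max(temp - 1, 0), 1.0, False   # cools down, modest output
    if temp + 1 >= N_LEVELS:
        return 0, -20.0, True                 # overheated: penalty, episode ends
    return temp + 1, 3.0, False               # heats up, higher output


def train(episodes=2000, steps=50, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning with an epsilon-greedy behavior policy."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_LEVELS)]
    for _ in range(episodes):
        temp = rng.randrange(N_LEVELS)        # random start improves coverage
        for _ in range(steps):
            if rng.random() < epsilon:
                a = rng.randrange(2)          # explore
            else:
                a = 0 if q[temp][0] >= q[temp][1] else 1  # exploit
            nxt, reward, done = twin_step(temp, ACTIONS[a])
            target = reward if done else reward + gamma * max(q[nxt])
            q[temp][a] += alpha * (target - q[temp][a])
            if done:
                break
            temp = nxt
    return q


q_table = train()
policy = [ACTIONS[0 if row[0] >= row[1] else 1] for row in q_table]
print(policy)
```

The agent is never told that overheating is bad; it infers this from the penalty and learns to back off at the hottest level. Real assets have continuous, noisy state, so a practical system would likely need function approximation and a far more faithful simulator than this four-state toy.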

Training machine learning agents within a Digital Twin environment allows operational and maintenance strategies for the corresponding physical asset (the physical twin) to be simulated and optimized. These agents can explore a wide range of scenarios and parameter settings without affecting real-world operations, identifying strategies that maximize performance, minimize downtime, and reduce costs. The virtual environment supports rapid iteration and evaluation, so control parameters and maintenance schedules can be selected before they are applied to the physical twin. The approach depends on the fidelity of the Digital Twin: strategies developed in the virtual space transfer to the real world only to the extent that the twin accurately represents the behavior of the physical asset.

Closed-loop control systems, facilitated by continuous data exchange between the Digital Twin and its physical twin, enable proactive intervention strategies. This synchronization allows the virtual environment to predict potential failures or inefficiencies in the physical asset, triggering adjustments or maintenance procedures before issues arise. Consequently, organizations using this technology have documented reductions in maintenance costs of 10 to 20 percent. These savings stem from a shift away from reactive, failure-based maintenance toward a predictive model that optimizes resource allocation and minimizes unscheduled downtime.
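One simple way to picture the predict-then-intervene loop is a trend extrapolation that triggers maintenance before a limit is reached. The sketch below is an assumption-laden illustration: the linear wear trend, the vibration figures, the threshold, and the function names are all hypothetical, and real predictive maintenance models are typically more sophisticated.

```python
def fit_line(ts, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(ts)
    mean_t = sum(ts) / n
    mean_y = sum(ys) / n
    sxx = sum((t - mean_t) ** 2 for t in ts)
    if sxx == 0:
        raise ValueError("need at least two distinct timestamps")
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(ts, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_t


def hours_until_threshold(ts, ys, threshold):
    """Extrapolate the trend; None means no upward trend toward the threshold."""
    slope, intercept = fit_line(ts, ys)
    if slope <= 0:
        return None
    crossing_time = (threshold - intercept) / slope
    return max(crossing_time - ts[-1], 0.0)


def decide(ts, ys, threshold, lead_time_hours):
    """Feedback step: schedule maintenance if the limit is within lead time."""
    remaining = hours_until_threshold(ts, ys, threshold)
    if remaining is not None and remaining <= lead_time_hours:
        return "schedule_maintenance"
    return "continue"


# Hypothetical vibration readings (mm/s) sampled every 10 operating hours.
hours = [0.0, 10.0, 20.0, 30.0, 40.0]
vibration = [1.0, 1.2, 1.4, 1.6, 1.8]

remaining = hours_until_threshold(hours, vibration, threshold=2.5)
print(remaining)
print(decide(hours, vibration, threshold=2.5, lead_time_hours=48.0))
print(decide(hours, vibration, threshold=2.5, lead_time_hours=24.0))
```

The `lead_time_hours` parameter stands for how far ahead a maintenance crew needs notice. In a closed loop, the decision would be sent back to the physical asset or its operators, and the next batch of sensor data would confirm or revise the prediction.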

Implementation of closed-loop control systems and predictive analytics, facilitated by Digital Twin technology, has demonstrated measurable economic benefits for early adopters. General Electric (GE) has publicly reported achieving up to a 20% reduction in unanticipated downtime through the application of these digital technologies. Furthermore, GE documented a 15% improvement in energy efficiency resulting from optimized operations informed by data analysis and predictive modeling within their Digital Twin infrastructure. These figures indicate a substantial return on investment through decreased operational costs and increased asset performance, driven by the ability to proactively address potential failures and optimize resource allocation.

The Looming Interoperability: Standards and the Future of Twins

The effective integration of Digital Twins across diverse applications hinges on the establishment of standardized frameworks, and initiatives like ISO 23247 and ISO/IEC 30173 are pivotal in achieving this goal. These standards don’t merely offer guidelines; they define a common language and structure for representing complex physical assets and their behaviors in the virtual world. By specifying consistent data formats, communication protocols, and validation procedures, these standards enable different Digital Twin implementations – developed by various teams or organizations – to seamlessly exchange information and collaborate. This interoperability is crucial for realizing the full potential of Digital Twins, allowing for holistic lifecycle management, predictive maintenance, and optimized performance across entire systems, rather than isolated components. Without such standardization, Digital Twins risk becoming siloed, limiting their value and hindering broader innovation.

The effective implementation of Digital Twin technology hinges on the ability of these virtual representations to communicate and collaborate, a capability significantly bolstered by emerging standards. These protocols don’t merely address data formats; they establish rigorous processes for model validation, ensuring accuracy and reliability across diverse applications. Consequently, industries ranging from manufacturing and aerospace to healthcare and urban planning can seamlessly integrate Digital Twins, enabling shared insights, predictive maintenance, and optimized performance. This interoperability isn’t simply about connecting systems, but about unlocking a new era of data-driven decision-making, where virtual models serve as a universal language for complex systems and processes.

The evolution of Digital Twin technology isn’t solely focused on creating precise, controllable replicas of physical entities; a parallel development centers on digital shadows. These virtual representations mirror the structure and data of a physical asset but deliberately lack the ability to exert control over their physical counterpart. This distinction unlocks powerful analytical possibilities, allowing for ‘what-if’ scenario testing and predictive maintenance analysis without the risk of affecting the real-world system. By focusing on observation and analysis alone, digital shadows offer a cost-effective and safe environment for exploring operational improvements, identifying potential failures, and optimizing performance, essentially providing a dedicated virtual laboratory for data-driven insights without the constraints of real-time control loops.
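The difference in data flow can be expressed as a small class sketch: a shadow only receives data, while a twin additionally holds a channel back to the asset. The class and method names are hypothetical and chosen purely for illustration.

```python
from typing import Callable, Dict


class DigitalShadow:
    """One-way mirror: receives data from the asset, never sends commands."""

    def __init__(self) -> None:
        self.state: Dict[str, float] = {}

    def sync(self, measurements: Dict[str, float]) -> None:
        """Update the mirrored state from incoming measurements."""
        self.state.update(measurements)

    def what_if(self, changes: Dict[str, float]) -> Dict[str, float]:
        """Return a hypothetical state on a copy; the mirror is untouched."""
        return {**self.state, **changes}


class DigitalTwin(DigitalShadow):
    """Two-way link: adds a command channel back to the physical asset."""

    def __init__(self, actuator: Callable[[str, float], None]) -> None:
        super().__init__()
        self._actuator = actuator  # hypothetical callback that drives the asset

    def command(self, name: str, value: float) -> None:
        self._actuator(name, value)


shadow = DigitalShadow()
shadow.sync({"rpm": 1500.0, "temp_c": 70.0})
scenario = shadow.what_if({"rpm": 1800.0})

sent = []  # stands in for the physical asset's command interface
twin = DigitalTwin(actuator=lambda name, value: sent.append((name, value)))
twin.sync({"rpm": 1500.0})
twin.command("rpm", 1400.0)
print(scenario, shadow.state, sent)
```

Because `DigitalShadow` has no `command` method at all, scenario analysis on it cannot reach the real system by construction.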

The convergence of isolated Digital Twins into a cohesive ‘Digital Thread’ represents a significant advancement in product and system lifecycle management. This interconnected network transcends traditional data silos, enabling a continuous flow of information from initial design and engineering through manufacturing, operation, and eventual decommissioning. Such a thread doesn’t merely aggregate data; it contextualizes it, allowing for predictive maintenance, optimized performance, and accelerated innovation. By linking virtual representations across the entire value chain, organizations can gain unprecedented insights into product behavior, identify potential issues proactively, and implement closed-loop improvements – ultimately fostering greater efficiency, resilience, and sustainability throughout a product’s existence.

The pursuit of truly intelligent digital twins requires acknowledging that these systems aren’t static constructions, but rather evolving landscapes. The article emphasizes the power of integrating AI with modeling and simulation to enhance predictive capabilities – a vital step towards proactive system management. This echoes a definition often attributed to John McCarthy: “Artificial intelligence is the science and engineering of making computers do things that require intelligence when done by humans.” It’s a fitting sentiment, as the success of digital twins doesn’t hinge on perfectly replicating reality, but on fostering a system capable of learning, adapting, and ‘forgiving’ inevitable discrepancies, much like a resilient garden tolerates imperfection.

What Lies Ahead?

The pursuit of artificial intelligence within digital twins reveals less a path toward complete system mastery and more a deepening entanglement with inherent unpredictability. The promise of predictive maintenance, of optimization through simulation, feels less like engineering and more like divination – a refined attempt to read the tea leaves of complex systems. Scalability is just the word used to justify complexity, a comforting illusion that increased computational power will somehow resolve the fundamental limitations of modeling reality.

The integration of machine learning introduces a seductive simplicity, trading explicit understanding for empirical correlation. But every optimized system will someday lose flexibility, becoming brittle in the face of novel conditions. The true challenge isn’t building more accurate models; it’s accepting that all models are, by definition, incomplete. Data integration, the bedrock of these endeavors, merely amplifies the signal and the noise, making it ever more difficult to discern meaningful patterns from random fluctuations.

The perfect architecture is a myth we tell ourselves to stay sane. Future work will inevitably focus on managing the entropy of these ecosystems, on building resilience into systems designed for prediction. The field will not arrive at a final solution, but at a perpetual negotiation with uncertainty – a continuous process of adaptation, refinement, and acceptance of the limitations inherent in representing a dynamic world with static models.

Original article: https://arxiv.org/pdf/2602.19390.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-24 22:34