Author: Denis Avetisyan

Deep learning is dramatically improving the accuracy of automated electrocardiogram arrhythmia classification, promising earlier and more reliable diagnoses.

A comprehensive evaluation reveals that weighted ensembles of convolutional and recurrent neural networks, incorporating attention mechanisms and generative data augmentation, achieve state-of-the-art performance.

Despite advancements in cardiovascular diagnostics, accurate and automated arrhythmia classification from electrocardiograms (ECG) remains a significant challenge. This is addressed in ‘Deep Neural Network Architectures for Electrocardiogram Classification: A Comprehensive Evaluation’, which rigorously compares several deep learning architectures (convolutional, recurrent, and residual networks) for ECG analysis, augmented with generative adversarial networks to address data scarcity. The research demonstrates that a dynamic ensemble of convolutional and long short-term memory networks, enhanced with attention mechanisms, achieves state-of-the-art performance in arrhythmia detection, yielding an F1-score of 0.958. Will these findings pave the way for more reliable and interpretable intelligent systems for real-time cardiac monitoring and diagnosis?

The Inevitable Complexity of Cardiac Signals

The precise detection of heart arrhythmias from electrocardiogram (ECG) signals stands as a cornerstone of effective cardiac care, directly influencing patient prognosis and survival rates. Irregular heart rhythms, if left undiagnosed or misidentified, can quickly escalate into life-threatening conditions like stroke, heart failure, or sudden cardiac arrest. Consequently, accurate arrhythmia classification enables clinicians to implement timely interventions – ranging from lifestyle adjustments and medication to more invasive procedures like cardioversion or the implantation of pacemakers. Beyond immediate treatment, precise diagnosis facilitates long-term patient monitoring, allowing for proactive management of chronic conditions and personalized care plans designed to optimize heart health and improve overall quality of life. The ability to differentiate between benign and dangerous arrhythmias, therefore, isn’t merely a diagnostic step, but a critical determinant in safeguarding cardiovascular well-being.

The diagnostic challenge with electrocardiogram (ECG) signals stems from their intrinsic complexity and the substantial variability observed between individuals – and even within the same person over time. Subtle nuances in waveform morphology, often indicative of arrhythmia, can be easily obscured by physiological noise, baseline wander, or muscle artifacts. Traditional analytical techniques, relying on manually defined features and thresholds, frequently fail to capture the full spectrum of these variations, leading to both false positives and missed detections. This limitation is particularly pronounced in cases of complex arrhythmias or when ECG signals are degraded by poor recording quality, necessitating more sophisticated approaches capable of adapting to the inherent irregularities of cardiac electrical activity and minimizing diagnostic errors.

The development of reliable arrhythmia classification models faces a significant hurdle: meticulously labeled electrocardiogram (ECG) data is scarce, a problem felt most acutely for infrequent cardiac events. While algorithms can achieve high accuracy on common arrhythmias, performance frequently declines on rarer conditions simply because the training datasets lack sufficient examples for effective learning. This data imbalance introduces bias, causing models to underperform in identifying these critical, yet less prevalent, arrhythmias, potentially delaying diagnosis and appropriate treatment. Researchers are actively exploring techniques like data augmentation, synthetic data generation, and transfer learning to mitigate this limitation, aiming to build models that generalize well even with limited examples of challenging arrhythmias and that improve diagnostic accuracy across the entire spectrum of cardiac irregularities.

Automated Feature Extraction: A Necessary Surrender to Complexity

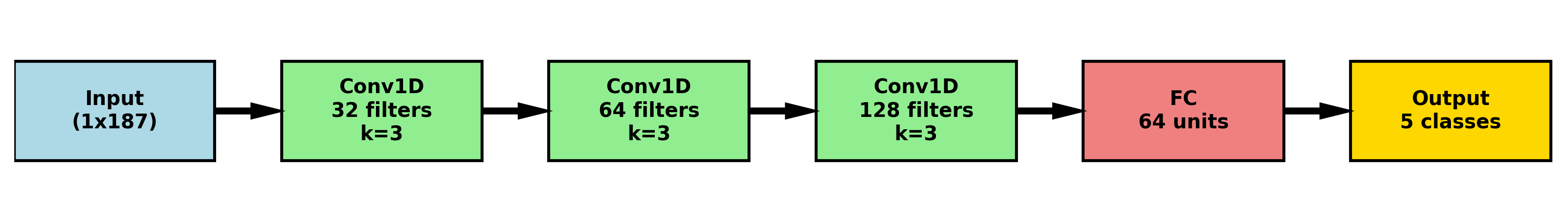

Convolutional Neural Networks (CNNs) are effective for electrocardiogram (ECG) analysis because they automate feature extraction directly from raw signal data. Unlike traditional methods requiring manual feature engineering – such as identifying QRS complexes, P waves, and T waves – CNNs use convolutional layers with learnable filters to detect patterns indicative of cardiac activity. These filters slide along the ECG signal, responding to localized waveform shapes and where they occur in time. Subsequent pooling layers reduce dimensionality and computational cost, while stacking multiple convolutional and pooling layers lets the network learn hierarchical representations of the signal. This automated process reduces the reliance on hand-crafted feature selection and can pick up subtle patterns that manual analysis may miss, which can improve the accuracy and efficiency of ECG-based diagnostics.
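To make the filter-and-pool idea concrete, here is a minimal NumPy sketch of a single convolution, ReLU, and max-pooling step on a toy signal. The signal, the hand-set kernel, and the pool size are illustrative assumptions, not values from the paper; in a real CNN the kernel weights are learned.

```python
import numpy as np

def conv1d_valid(signal, kernel):
    """Slide `kernel` along `signal` (cross-correlation, no padding)."""
    n, k = len(signal), len(kernel)
    return np.array([np.dot(signal[i:i + k], kernel) for i in range(n - k + 1)])

def relu(x):
    return np.maximum(x, 0.0)

def max_pool1d(x, size):
    """Non-overlapping max pooling; samples that do not fill a window are dropped."""
    n = (len(x) // size) * size
    return x[:n].reshape(-1, size).max(axis=1)

# Toy "ECG": flat baseline with one sharp spike standing in for a QRS complex.
signal = np.zeros(32)
signal[15:18] = [0.5, 1.0, 0.5]

# Hand-set filter shaped like the spike (an assumption for illustration only).
kernel = np.array([0.5, 1.0, 0.5])

feature_map = relu(conv1d_valid(signal, kernel))   # 30 positions
pooled = max_pool1d(feature_map, size=5)           # 6 pooled values

print(int(feature_map.argmax()))  # position where the filter matches best
print(pooled)
```

The filter response peaks where the spike sits, and pooling keeps that peak while shrinking the sequence, which is the behaviour the paragraph above describes.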

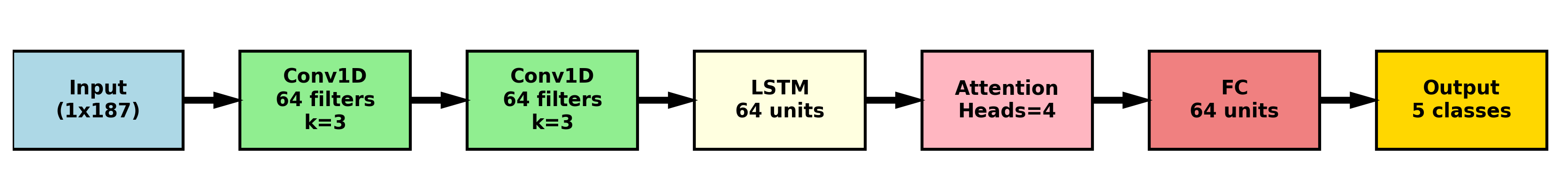

CNN-LSTM architectures combine the feature extraction capabilities of Convolutional Neural Networks (CNNs) with the sequential processing strengths of Long Short-Term Memory (LSTM) networks to effectively analyze electrocardiogram (ECG) data. CNN layers are initially employed to automatically learn relevant spatial features directly from the raw ECG signal, such as QRS complexes and P-waves. The output of these convolutional layers, representing learned feature maps, is then fed into LSTM layers. LSTMs are recurrent neural networks designed to process sequential data; in this context, they capture the temporal dependencies within the ECG signal, recognizing patterns and changes over time. This combined approach allows the model to identify both localized features and their evolution, improving the accuracy of ECG analysis and anomaly detection compared to models utilizing only CNNs or LSTMs.
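The data flow of such a hybrid can be sketched as a forward pass in plain NumPy: convolutional features are computed per time window, an LSTM runs over them, and the last hidden state is classified. All sizes and the random weights below are illustrative assumptions; this is not the paper's architecture and nothing here is trained.

```python
import numpy as np

rng = np.random.default_rng(0)

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def softmax(z):
    e = np.exp(z - z.max())
    return e / e.sum()

def conv1d_features(signal, kernels, stride):
    """Apply each kernel along the signal; returns (time_steps, n_kernels) after ReLU."""
    k = kernels.shape[1]
    starts = range(0, len(signal) - k + 1, stride)
    windows = np.stack([signal[s:s + k] for s in starts])   # (T, k)
    return np.maximum(windows @ kernels.T, 0.0)             # (T, n_kernels)

def lstm_forward(x_seq, W, U, b, hidden):
    """Run one LSTM layer over x_seq (T, d); returns all hidden states (T, hidden)."""
    h = np.zeros(hidden)
    c = np.zeros(hidden)
    states = []
    for x_t in x_seq:
        z = W @ x_t + U @ h + b          # stacked pre-activations for the four gates
        i, f, o, g = np.split(z, 4)
        i, f, o = sigmoid(i), sigmoid(f), sigmoid(o)
        g = np.tanh(g)
        c = f * c + i * g                # cell state carries context across time
        h = o * np.tanh(c)
        states.append(h)
    return np.stack(states)

# Hypothetical sizes chosen for illustration only.
n_kernels, kernel_len, stride, hidden, n_classes = 4, 8, 4, 6, 5

kernels = rng.normal(size=(n_kernels, kernel_len))
W = rng.normal(scale=0.1, size=(4 * hidden, n_kernels))
U = rng.normal(scale=0.1, size=(4 * hidden, hidden))
b = np.zeros(4 * hidden)
W_out = rng.normal(scale=0.1, size=(n_classes, hidden))

signal = rng.normal(size=128)                       # stand-in for one ECG segment
features = conv1d_features(signal, kernels, stride)
hidden_states = lstm_forward(features, W, U, b, hidden)
probs = softmax(W_out @ hidden_states[-1])          # class probabilities

print(features.shape, hidden_states.shape, probs.shape)
```

The convolution shortens the 128-sample segment to 31 feature vectors, so the LSTM processes a much shorter sequence than the raw signal, which is one practical reason for putting the CNN first.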

Attention mechanisms improve ECG analysis by weighting the importance of different time steps within the input sequence. Unlike traditional methods or even basic CNN-LSTM networks that treat all segments of the ECG signal equally, attention allows the model to dynamically focus on the specific portions most indicative of cardiac abnormalities – such as the QRS complex or P wave. This is achieved through the calculation of attention weights, typically using a softmax function applied to a learned alignment score between the hidden states of the LSTM and a context vector. Higher weights signify greater relevance, effectively amplifying the contribution of those segments to the final classification or prediction. Consequently, attention mechanisms enhance the model’s ability to discern subtle but critical features, leading to improved diagnostic accuracy and robustness against noise or artifacts in the ECG signal.
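The weighting step described above can be sketched as additive attention pooling over LSTM hidden states. The parameter names (`W_a`, `b_a`, `context`) and the sizes are assumptions made for this illustration; the article does not specify the exact scoring function the paper uses.

```python
import numpy as np

def softmax(z):
    e = np.exp(z - z.max())
    return e / e.sum()

def attention_pool(hidden_states, W_a, b_a, context):
    """Additive attention over time steps.

    hidden_states: (T, H); W_a: (A, H); b_a: (A,); context: (A,)
    Returns the weighted summary (H,) and the attention weights (T,).
    """
    scores = np.tanh(hidden_states @ W_a.T + b_a) @ context  # (T,) alignment scores
    weights = softmax(scores)                                # non-negative, sum to 1
    summary = weights @ hidden_states                        # weighted average of states
    return summary, weights

rng = np.random.default_rng(0)
T, H, A = 10, 4, 3                       # hypothetical sizes
hidden_states = rng.normal(size=(T, H))
W_a = rng.normal(size=(A, H))
b_a = np.zeros(A)
context = rng.normal(size=A)

summary, weights = attention_pool(hidden_states, W_a, b_a, context)
print(weights.round(3))
```

With a zero context vector every score is equal, so the weights become uniform and the summary reduces to a plain average of the hidden states; learning `context` is what lets the model move away from that uniform baseline.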

Mitigating Imbalance: Acknowledging the Rarity of Failure

Data augmentation utilizing Generative Adversarial Networks (GANs) addresses class imbalance by artificially increasing the representation of minority classes. GANs function through a generator network that creates synthetic samples and a discriminator network that evaluates their authenticity. This adversarial process refines the generator’s ability to produce realistic, yet novel, instances of the minority class, effectively expanding the training dataset without requiring additional real-world data collection. The synthesized samples are then incorporated into the training set, balancing class distributions and improving model generalization performance, particularly for under-represented categories.

Focal Loss addresses class imbalance by down-weighting the contribution of easily classified examples during training, allowing the model to concentrate on hard-to-classify instances. This is achieved through a dynamically scaled cross-entropy loss, where the scaling factor reduces the loss contribution from well-classified examples. The α parameter balances the importance of positive and negative examples, while the γ parameter modulates the rate at which easy examples are down-weighted; higher values of γ increase the focusing effect, further emphasizing difficult examples and improving performance on minority classes. This refined training process allows for more effective learning, particularly when dealing with datasets exhibiting significant class disparity.
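In its standard form, focal loss for a sample whose true class receives probability p_t is FL = -α_t (1 - p_t)^γ log(p_t). A minimal NumPy sketch follows, with example probabilities and uniform α chosen purely for illustration (the article does not report the values used in the paper).

```python
import numpy as np

def focal_loss(probs, labels, alpha, gamma=2.0, eps=1e-12):
    """Per-sample multi-class focal loss.

    probs: (N, C) predicted class probabilities
    labels: (N,) integer class indices
    alpha: (C,) per-class weights
    """
    p_t = probs[np.arange(len(labels)), labels]   # probability of the true class
    p_t = np.clip(p_t, eps, 1.0)                  # avoid log(0)
    return -alpha[labels] * (1.0 - p_t) ** gamma * np.log(p_t)

# One easy example (p_t = 0.95) and one hard example (p_t = 0.40).
probs = np.array([[0.95, 0.05],
                  [0.40, 0.60]])
labels = np.array([0, 0])
alpha = np.ones(2)                                # uniform weights (assumption)

ce = focal_loss(probs, labels, alpha, gamma=0.0)  # gamma = 0 is plain cross-entropy
fl = focal_loss(probs, labels, alpha, gamma=2.0)

print(ce)  # easy and hard losses under cross-entropy
print(fl)  # the easy example shrinks far more than the hard one
```

Setting γ = 0 recovers ordinary weighted cross-entropy, which makes the effect of the focusing term easy to check in isolation.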

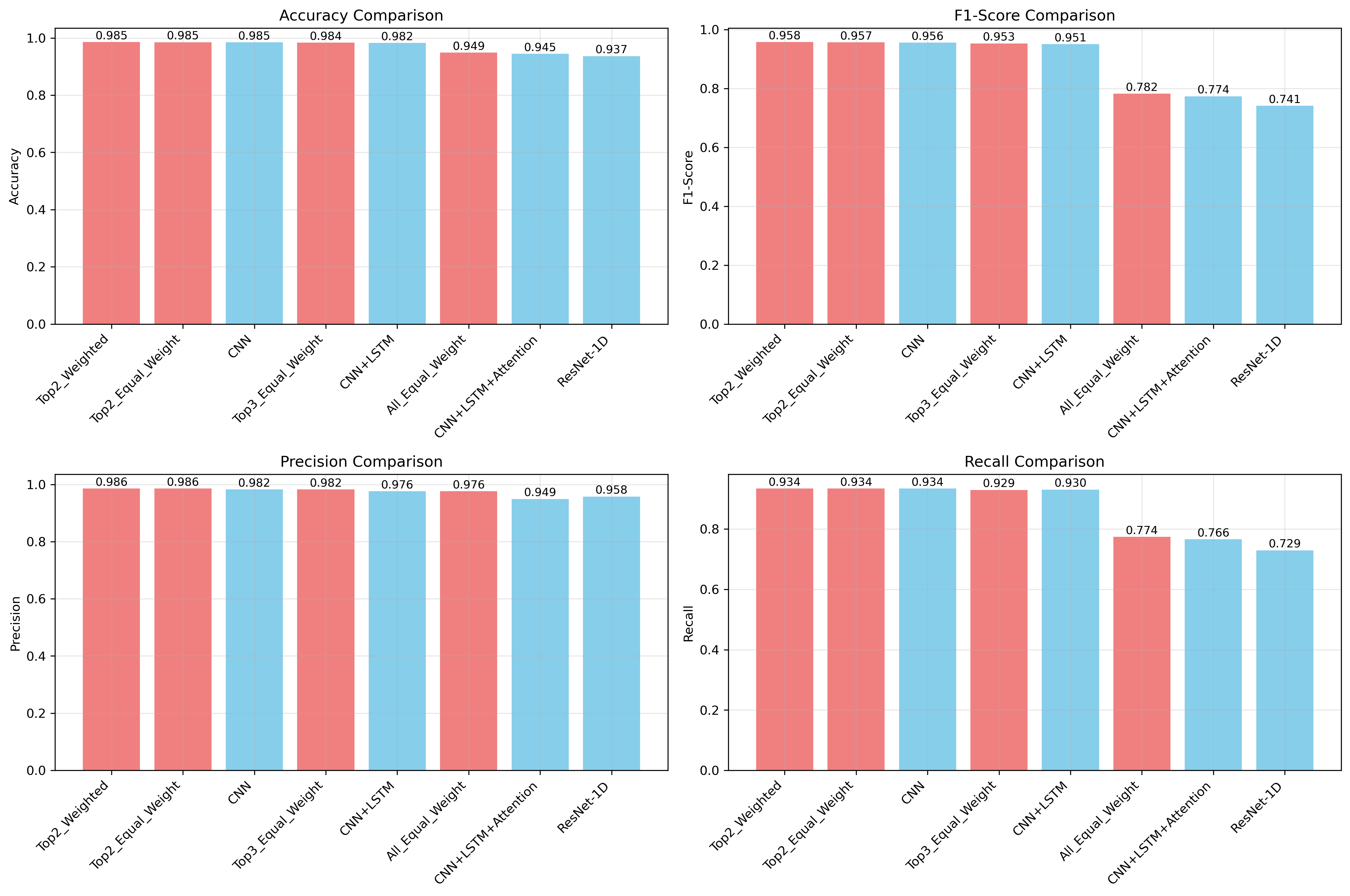

Ensemble learning was utilized to enhance both prediction accuracy and model robustness by combining the outputs of multiple individual models. This approach reduces variance and leverages the complementary strengths of different model configurations. Specifically, a Top2-Weighted ensemble – selecting the top two predictions from each model and weighting them accordingly – achieved a peak F1-score of 0.958, demonstrating improved performance over single models. This weighting scheme prioritizes high-confidence predictions, further contributing to the ensemble’s overall effectiveness.
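One possible reading of that description can be sketched as follows: for each model, keep only its two highest class probabilities, scale them by a per-model weight, and sum across models. This is an interpretation, not the paper's confirmed procedure; the function name, the example probabilities, and the model weights are all hypothetical.

```python
import numpy as np

def top2_weighted_vote(prob_list, model_weights):
    """Combine models using only each model's two most confident classes.

    prob_list: list of (N, C) probability arrays, one per model
    model_weights: one non-negative weight per model (e.g. a validation score)
    Returns predicted class indices (N,) and the combined scores (N, C).
    """
    total = np.zeros_like(prob_list[0], dtype=float)
    for probs, w in zip(prob_list, model_weights):
        kept = np.zeros_like(probs, dtype=float)
        top2 = np.argsort(probs, axis=1)[:, -2:]       # indices of the two largest
        rows = np.arange(probs.shape[0])[:, None]
        kept[rows, top2] = probs[rows, top2]           # zero out everything else
        total += w * kept
    return total.argmax(axis=1), total

# Two hypothetical models scoring one sample over three classes.
model_a = np.array([[0.5, 0.3, 0.2]])
model_b = np.array([[0.1, 0.5, 0.4]])
pred, scores = top2_weighted_vote([model_a, model_b], [0.90, 0.95])

print(pred)    # class chosen by the ensemble
print(scores)  # combined per-class scores
```

Here model A alone would pick class 0, but the combined score favours class 1 because both models rank it in their top two.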

Revealing the Logic: Visualizing the Inevitable Imperfection

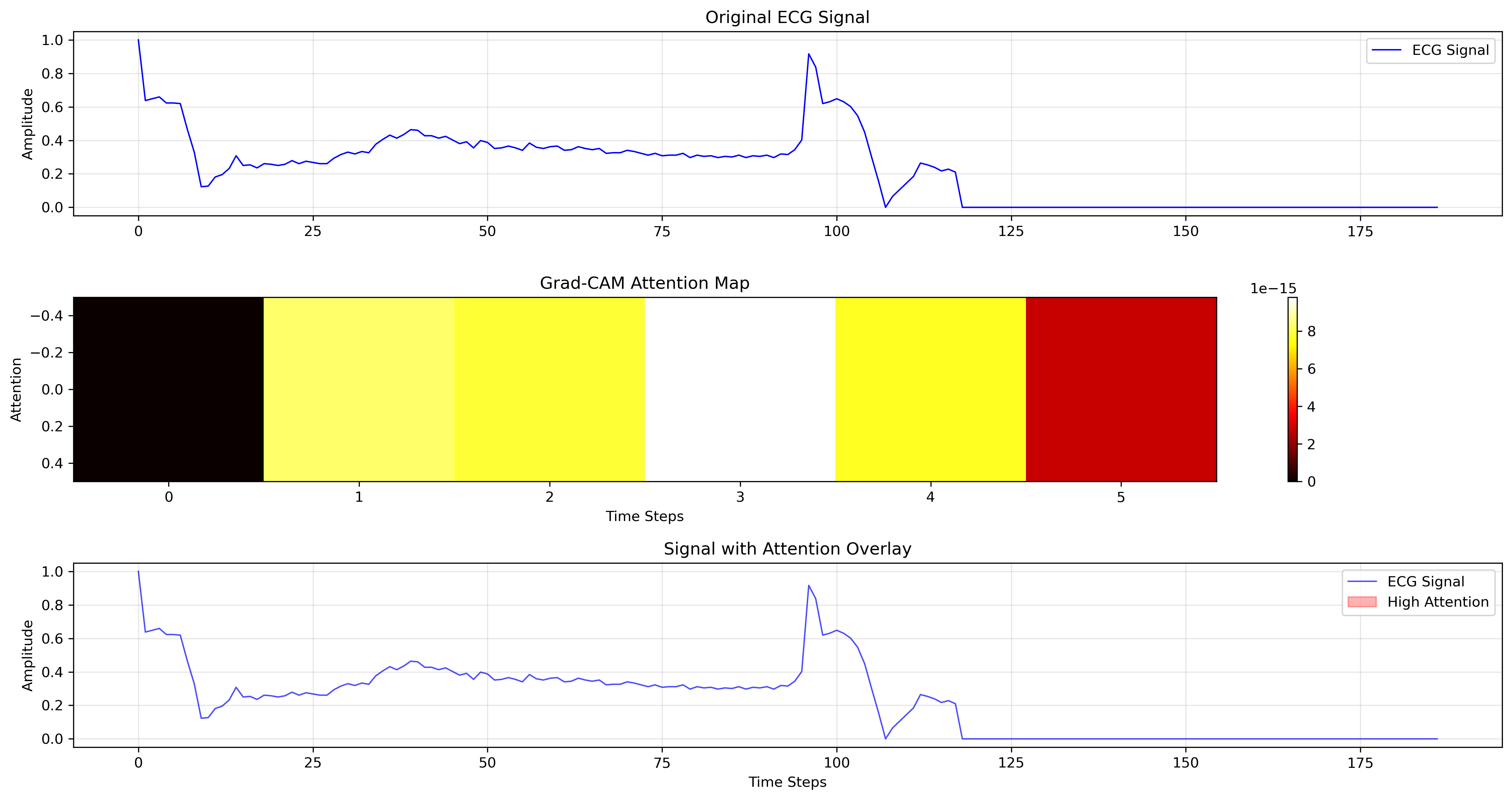

To move beyond simply classifying electrocardiogram (ECG) signals, the research uses Gradient-weighted Class Activation Mapping (Grad-CAM) as a visualization technique. Grad-CAM highlights which portions of the ECG waveform – such as the P wave, QRS complex, or T wave – are most influential in the CNN-LSTM model’s predictive process. By generating heatmaps overlaid on the ECG, it reveals the model’s focal points, offering a visual explanation of its decision-making. This isn’t merely about achieving high accuracy; it’s about understanding why the model arrives at a particular diagnosis, thereby fostering transparency and enabling clinicians to assess the biological plausibility of the AI’s interpretations. The resulting visualizations allow for a detailed examination of where the model is looking, potentially uncovering subtle patterns indicative of cardiac abnormalities that might otherwise be overlooked.
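The core Grad-CAM computation for a 1D signal is short enough to sketch in NumPy. The feature maps and gradients below are made-up arrays for illustration; in practice both would come from a trained network and an autodiff framework, and the helper names are assumptions.

```python
import numpy as np

def grad_cam_1d(feature_maps, gradients):
    """Grad-CAM over time for one class.

    feature_maps: (K, T) activations of the last conv layer
    gradients:    (K, T) d(class score) / d(feature_maps)
    Returns a (T,) heatmap scaled to [0, 1].
    """
    channel_weights = gradients.mean(axis=1)                  # average gradient per channel
    cam = np.maximum(channel_weights @ feature_maps, 0.0)     # ReLU of the weighted sum
    peak = cam.max()
    return cam / peak if peak > 0 else cam

def upsample_to_signal(cam, signal_len):
    """Linearly interpolate the heatmap to the original signal length."""
    src = np.linspace(0.0, 1.0, num=len(cam))
    dst = np.linspace(0.0, 1.0, num=signal_len)
    return np.interp(dst, src, cam)

# Hypothetical activations: channel 0 fires on a central bump, channel 1 elsewhere.
feature_maps = np.array([[0.0, 0.0, 1.0, 2.0, 1.0, 0.0],
                         [1.0, 1.0, 0.0, 0.0, 0.0, 1.0]])
gradients = np.array([[0.5] * 6,     # channel 0 supports the class
                      [-0.2] * 6])   # channel 1 counts against it

cam = grad_cam_1d(feature_maps, gradients)
heatmap = upsample_to_signal(cam, signal_len=12)

print(cam)
```

The ReLU discards regions that only argue against the class, so the heatmap concentrates on the central bump, which is the kind of overlay a clinician would compare against the P wave, QRS complex, or T wave.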

The ability to visualize which parts of an electrocardiogram (ECG) signal drive a predictive model’s decisions is proving crucial for building confidence among medical professionals. Beyond simply achieving high accuracy, this interpretability allows clinicians to assess whether the artificial intelligence is focusing on physiologically relevant features – the characteristic waves and intervals that reflect cardiac function. By highlighting these critical regions, the system moves beyond a ‘black box’ approach, enabling doctors to validate the model’s reasoning and integrate it more effectively into clinical workflows. This deeper understanding doesn’t just confirm the model’s performance; it has the potential to reveal novel insights into the complex interplay of electrical activity within the heart, ultimately advancing both diagnostic capabilities and the fundamental knowledge of cardiac physiology.

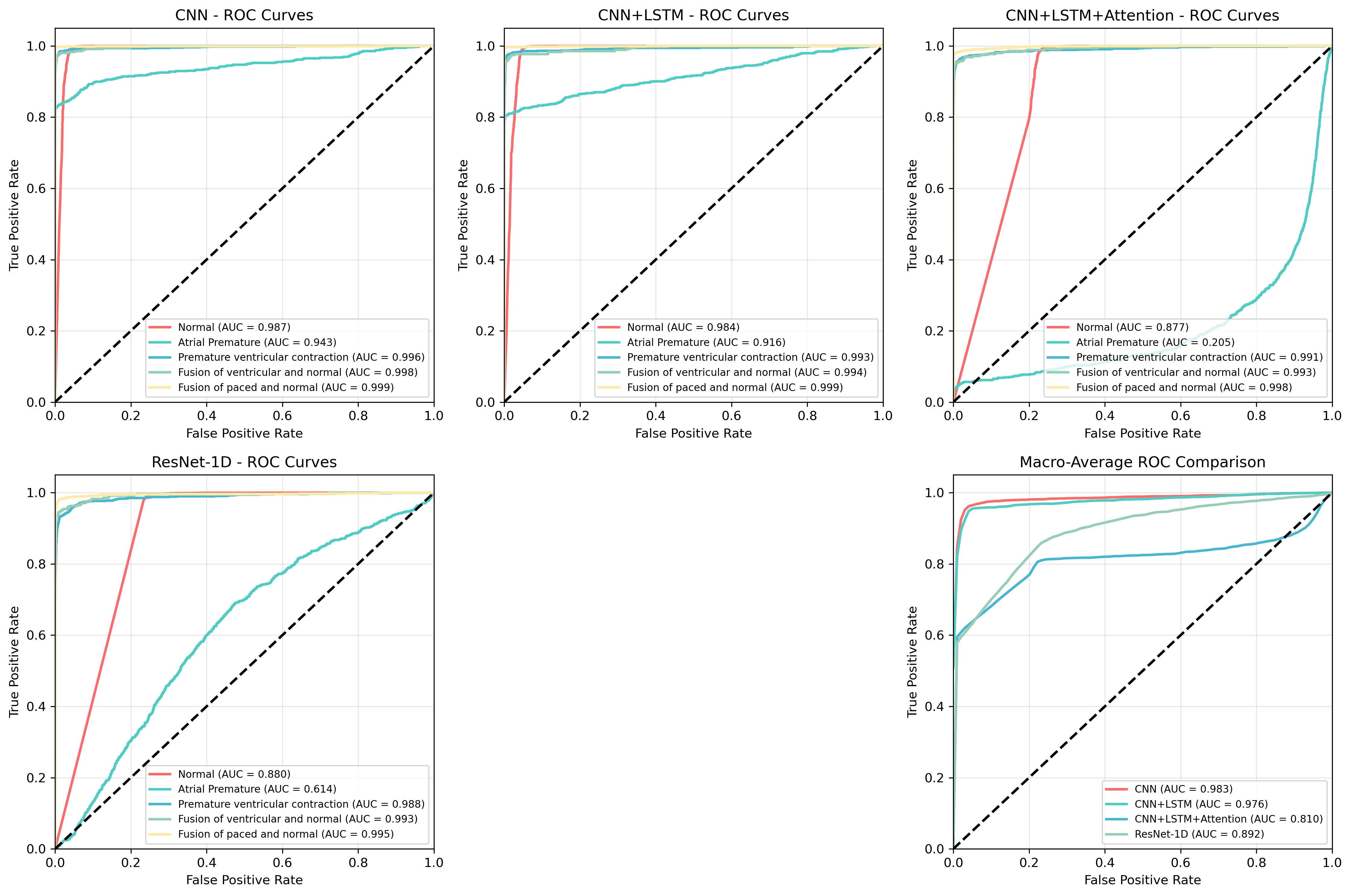

Testing of the arrhythmia detection system on the widely recognized MIT-BIH Arrhythmia Database shows strong diagnostic accuracy. The Top2-Weighted ensemble method performed best, achieving an F1-score of 0.958, indicative of a good balance between precision and recall. Specifically, the ensemble exhibited a high precision of 0.986, minimizing false positives, alongside a recall of 0.934, identifying a large proportion of actual arrhythmia events. The core CNN-LSTM model also performed well, attaining an overall accuracy of 0.982, which supports the efficacy of the proposed architecture in automated cardiac arrhythmia classification and suggests potential for clinical application.
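As a quick arithmetic check, F1 is the harmonic mean of precision and recall. The sketch below applies that formula to the two figures quoted above; how the paper aggregates across arrhythmia classes is not stated in this article, so treating them as a single precision/recall pair is an assumption.

```python
def f1_from_precision_recall(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)

f1 = f1_from_precision_recall(0.986, 0.934)
print(round(f1, 4))  # 0.9593
```

The result sits within about 0.001 of the reported 0.958. A gap of that size could plausibly come from rounding of the inputs or from averaging F1 per class rather than computing it from averaged precision and recall, though the article does not say which.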

The pursuit of automated arrhythmia classification, as detailed in this research, isn’t simply about constructing a more accurate model; it’s about cultivating a resilient system. The weighted ensemble of CNN and LSTM, fortified by attention mechanisms and GAN augmentation, reflects an understanding that certainty is an illusion. Monitoring, in this context, becomes the art of fearing consciously – anticipating the inevitable imperfections and building capacity for revelation. As a line often attributed to Aristotle puts it, “The ultimate value of life depends upon awareness and the power of contemplation rather than mere survival.” This sentiment echoes the core principle: true resilience begins where certainty ends, and the system’s capacity to adapt, learn, and reveal its limitations is paramount.

What Winds Will Carry It?

The pursuit of automated arrhythmia classification, as demonstrated by this work, yields ever-diminishing returns. Each incremental gain in accuracy feels less like a triumph and more like a postponement. The ensemble of convolutional and recurrent networks, fortified with attention and generative augmentation, is not a destination, but a temporary reprieve. It merely shifts the locus of failure, from misclassification to the brittleness of the learned representations when confronted with data that deviates even slightly from the training distribution.

The true challenge isn’t building a more accurate classifier, but cultivating a system capable of acknowledging its own ignorance. A network that doesn’t simply predict but observes the limits of its knowledge, and signals the need for human intervention. The generative models, intended to broaden the dataset, instead deepen the illusion of completeness. They offer more examples of what is known to be arrhythmia, but do little to prepare the system for the novel rhythms of unforeseen cardiac events.

One suspects that the most fruitful avenues of inquiry lie not in architectural refinements, but in a radical rethinking of the evaluation metrics. Accuracy, sensitivity, specificity: these are artifacts of a bygone era. The future belongs to systems that measure not what they know, but what they don’t know, and how gracefully they degrade when confronted with the inevitable uncertainties of life.

Original article: https://arxiv.org/pdf/2602.17701.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-23 22:49