How AI Might Save Us: Mapping Paths to Survival

A new analysis categorizes potential future scenarios where humanity successfully navigates the risks of increasingly powerful artificial intelligence.

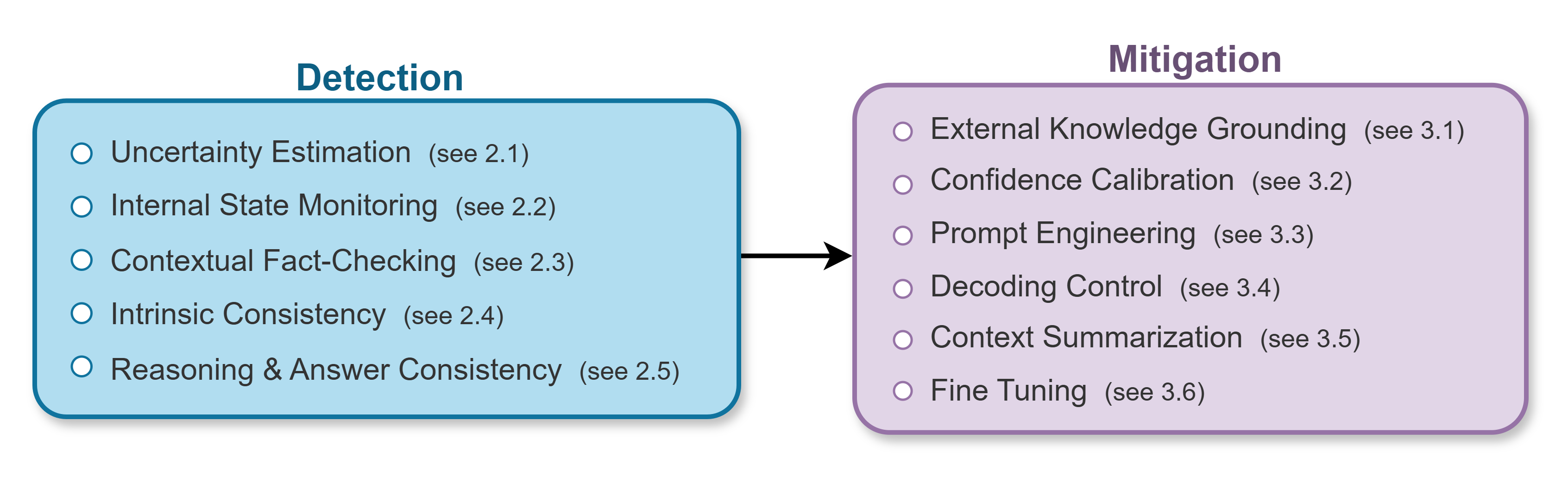

As large language models become increasingly integrated into critical applications, ensuring factual accuracy and mitigating the risk of fabricated information are paramount.

A new study demonstrates the potential of artificial intelligence to rapidly and reliably assess concrete compressive strength, offering a path toward automated quality control in large-scale construction projects.

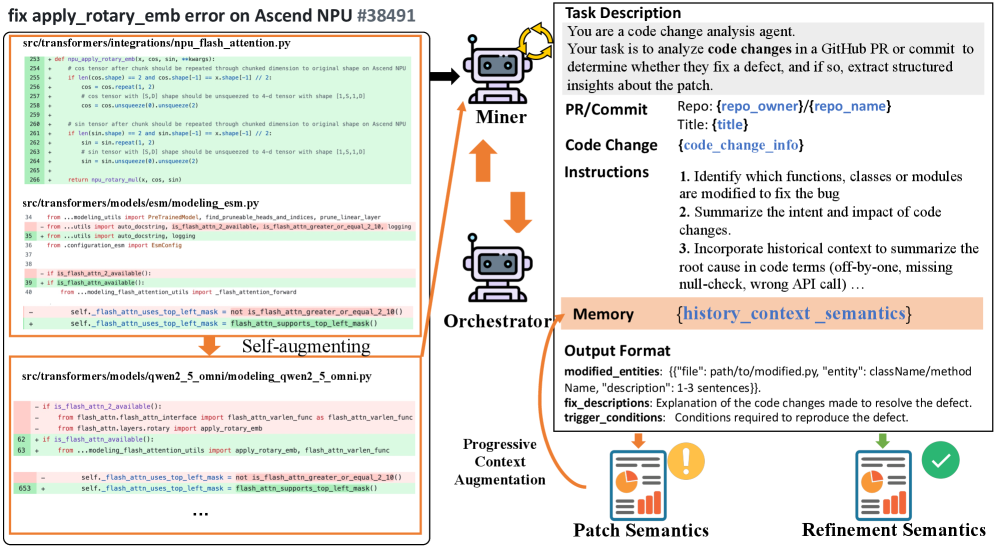

A new framework automatically assesses how changes to deep learning libraries impact the applications that depend on them, preventing silent errors and improving software stability.

Concept drift – unexpected changes in data patterns – can devastate supply chain forecasts, but a novel framework offers a robust solution for detecting and mitigating these disruptions.

![PRISM reconstructs a large language model’s probability prediction by aggregating Shapley values, which quantify each factor’s contribution. Factors positively correlated with the outcome are represented in green and those negatively correlated in red, with the size of each representation reflecting the absolute value of its contribution, effectively decomposing [latex] f(\cdot) [/latex] via the sigmoid function [latex] \sigma(\cdot) [/latex].](https://arxiv.org/html/2601.09151v1/x1.png)

Researchers have developed a novel framework that boosts the accuracy and interpretability of how large language models assess the likelihood of their predictions.

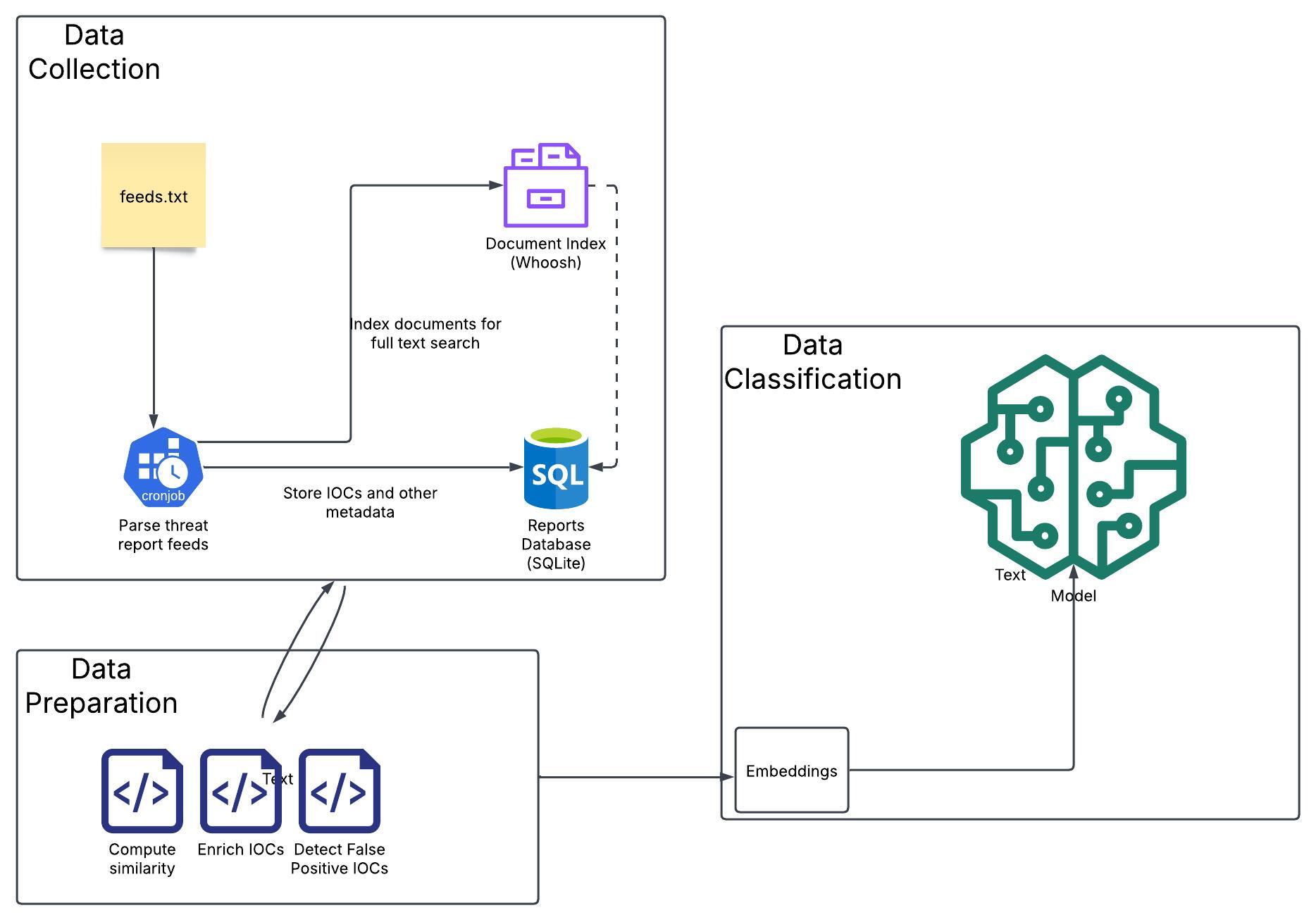

New research demonstrates how advanced AI can sift through online intelligence to identify potential cyberattacks before they fully materialize.

![Analysis of simulations mirroring the gravitational wave event GW231123, conducted with the NRSur waveform model and assessed through independent detector inference, reveals that divergences between posterior distributions of parameters (including total mass [latex]M[/latex], mass ratio [latex]q[/latex], luminosity distance [latex]D_L[/latex], effective inspiral spin [latex]\chi_{eff}[/latex], and precession spin [latex]\chi_p[/latex]) are consistent with expectations for Gaussian noise, with a small percentage of LIGO Livingston and LIGO Hanford pairs exhibiting larger divergences than those observed in GW231123 itself.](https://arxiv.org/html/2601.09678v1/figures_BF/JS_H_L_chip.png)

New analysis confirms the surprisingly high masses and spins observed in the gravitational wave event GW231123 are not artifacts of noise or waveform modeling.

A novel multimodal foundation model is poised to unify diverse data sources, promising enhanced situational awareness and proactive safety management in civil aviation.

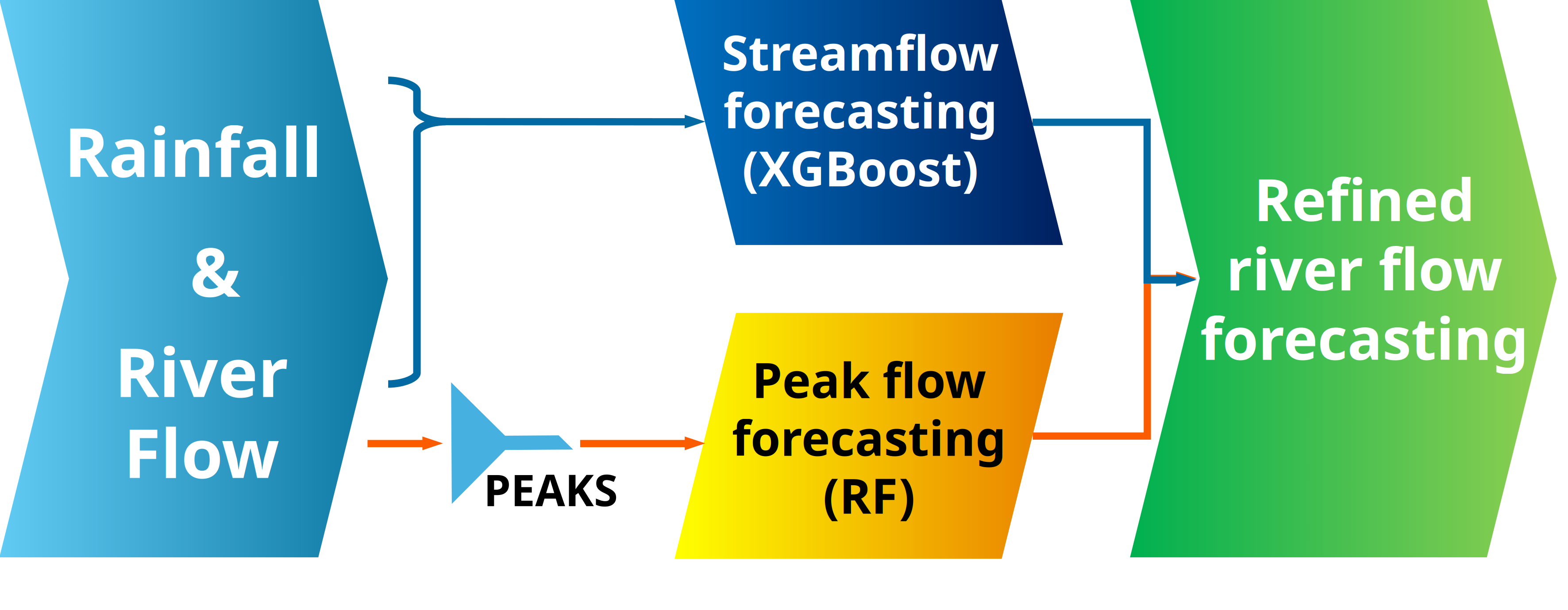

Researchers have developed a streamlined machine learning framework that significantly improves the accuracy of short-term river flow and peak flood predictions.