Decoding Plasma Chaos: A Neural Network Approach


Researchers have developed a convolutional operator network capable of modeling and predicting the complex dynamics of plasma turbulence, opening new avenues for understanding and controlling this pervasive phenomenon.

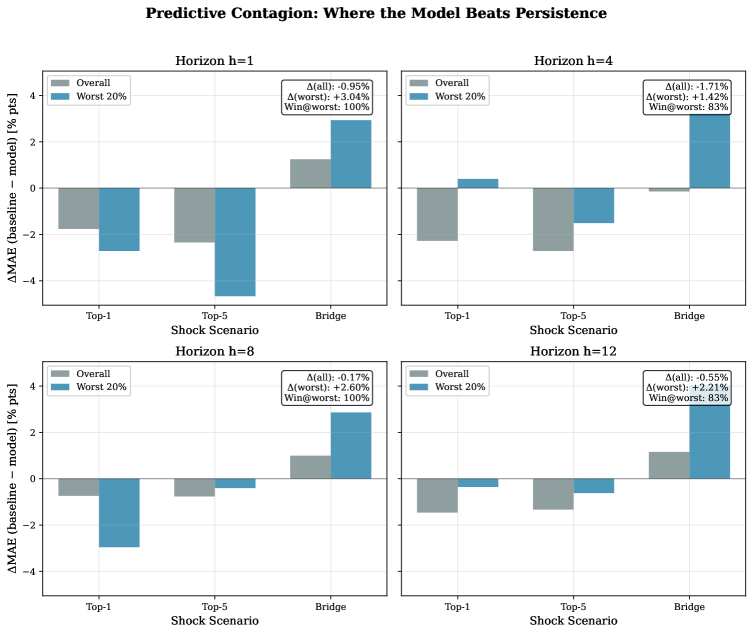

![The aggregate measure of systemic risk, the ASRI, is decomposed into its constituent parts (stablecoin risk, DeFi liquidity risk, contagion risk, and arbitrage opacity), each contributing proportionally to the overall stress. Shifts in these relative contributions during crisis periods reveal the dominant channels of systemic transmission. The decomposition property holds throughout: [latex] \sum_{i} w_{i} S_{i} [/latex] equals the total ASRI at any given time.](https://arxiv.org/html/2602.03874v1/x1.png)