Building a Smarter Cybersecurity Defense with Knowledge Graphs

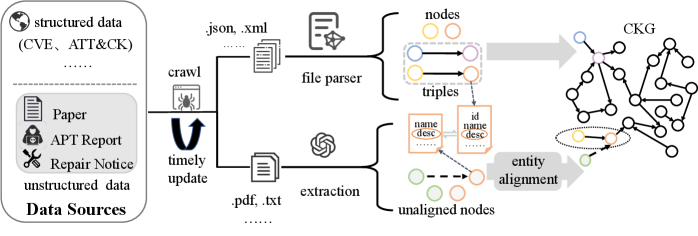

A new framework automatically constructs and expands a comprehensive cybersecurity knowledge graph by intelligently integrating diverse threat intelligence sources.

![VasoMIM extracts vascular anatomy from X-ray angiograms using a Frangi filter, then employs a patch-wise anatomical distribution to prioritize vessel-relevant regions during masking, ultimately optimizing model performance by minimizing a combined loss function [latex]\mathcal{L}_{MIM}[/latex] comprising standard pixel-level reconstruction [latex]\mathcal{L}_{rec}[/latex] and a novel anatomical consistency loss [latex]\mathcal{L}_{cons}[/latex], thereby learning discriminative vascular representations.](https://arxiv.org/html/2602.11536v1/x3.png)

A new self-supervised learning approach improves the analysis of X-ray angiograms, enabling more accurate vascular segmentation and detection.

![Standard uncertainty decomposition falters because methods that derive both aleatoric uncertainty (reflecting ambiguity in the true probability [latex]p^{\ast}[/latex]) and epistemic uncertainty (measuring deviation from [latex]p^{\ast}[/latex]) produce correlated estimates trapped along a diagonal, a limitation that a structurally separated Credal CBM approach circumvents by recovering the inherent geometric independence of these properties.](https://arxiv.org/html/2602.11219v1/x1.png)

A new framework offers a robust method for separating genuine knowledge gaps from inherent data noise in deep learning models.

New research details a method for mathematically guaranteeing the safety of neural network controllers used in spacecraft guidance, moving beyond traditional simulation-based verification.

![In Reddit discussions concerning agentic AI, heightened platform visibility (specifically, engagement scores exceeding a threshold [latex]Q_{0.75}[/latex]) correlates with a delayed search for corroborating evidence, suggesting that initial credibility is often established through visibility itself, allowing nascent narratives to solidify before factual substantiation emerges. This process is particularly observable in threads where verification cues are absent within a defined observation window; such threads are treated as right-censored data.](https://arxiv.org/html/2602.11412v1/mechanism_sketch.png)

New research reveals that public conversations surrounding advanced artificial intelligence often prioritize engagement over rigorous verification, potentially leading to the premature acceptance of claims.

![The evolution of time series forecasting benchmarks reveals a field increasingly reliant on repurposed datasets (indicated by superscripted markers) and characterized by key transitions highlighted along a timeline that now prominently features the Time Series Transformer [latex]TST[/latex], despite a historical pattern of frameworks inevitably accruing technical debt as production use cases challenge theoretical elegance.](https://arxiv.org/html/2602.12147v1/figure/timeline.png)

A new research effort introduces TIME, a comprehensive benchmark designed to rigorously evaluate the next generation of time series forecasting models.

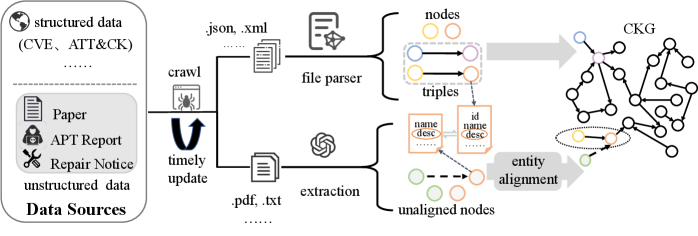

A new technique identifies and isolates malicious participants in collaborative machine learning, even when they work together.

![The GemmaAgent architecture facilitates complex reasoning through the synergistic integration of a large language model (LLM) and specialized tools, enabling it to iteratively refine plans and execute actions based on observed states and [latex] \mathbb{R} [/latex]-valued rewards, ultimately achieving robust task completion.](https://arxiv.org/html/2602.11982v1/x1.png)

Researchers are exploring how artificial intelligence can automatically simplify complex cybersecurity vulnerability descriptions, improving accessibility for a wider audience.

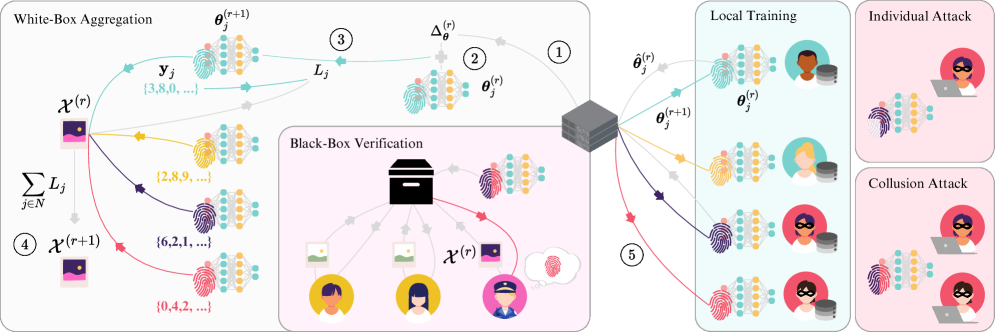

A new method, NSM-Bayes, dramatically improves the speed and robustness of Bayesian inference by leveraging neural networks and simulation.

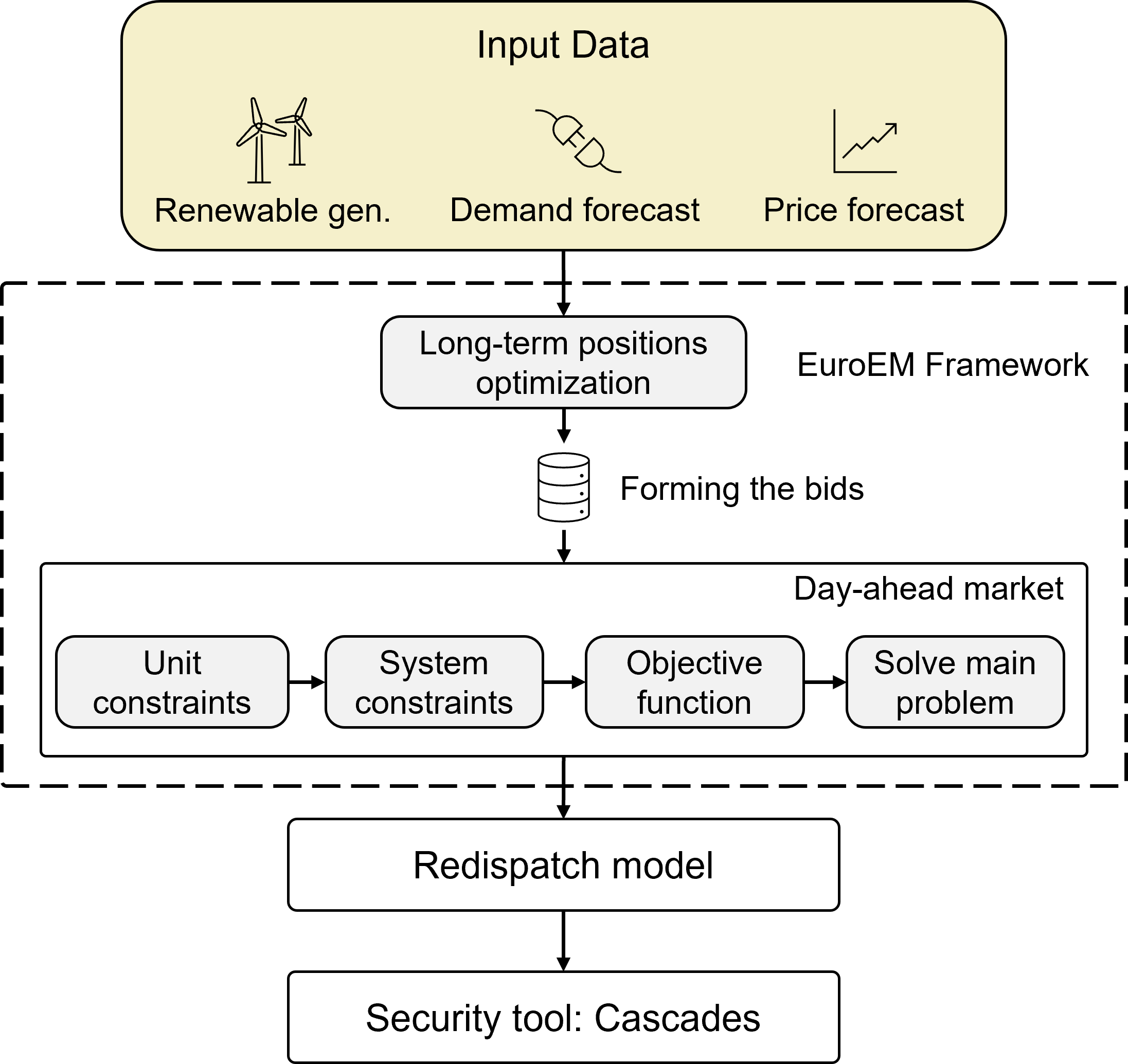

New research reveals that standard power grid security assessments often fail to account for how actual market behavior impacts system resilience.