Seeing Through the Noise: AI-Powered Threat Detection Gets a Critical Upgrade

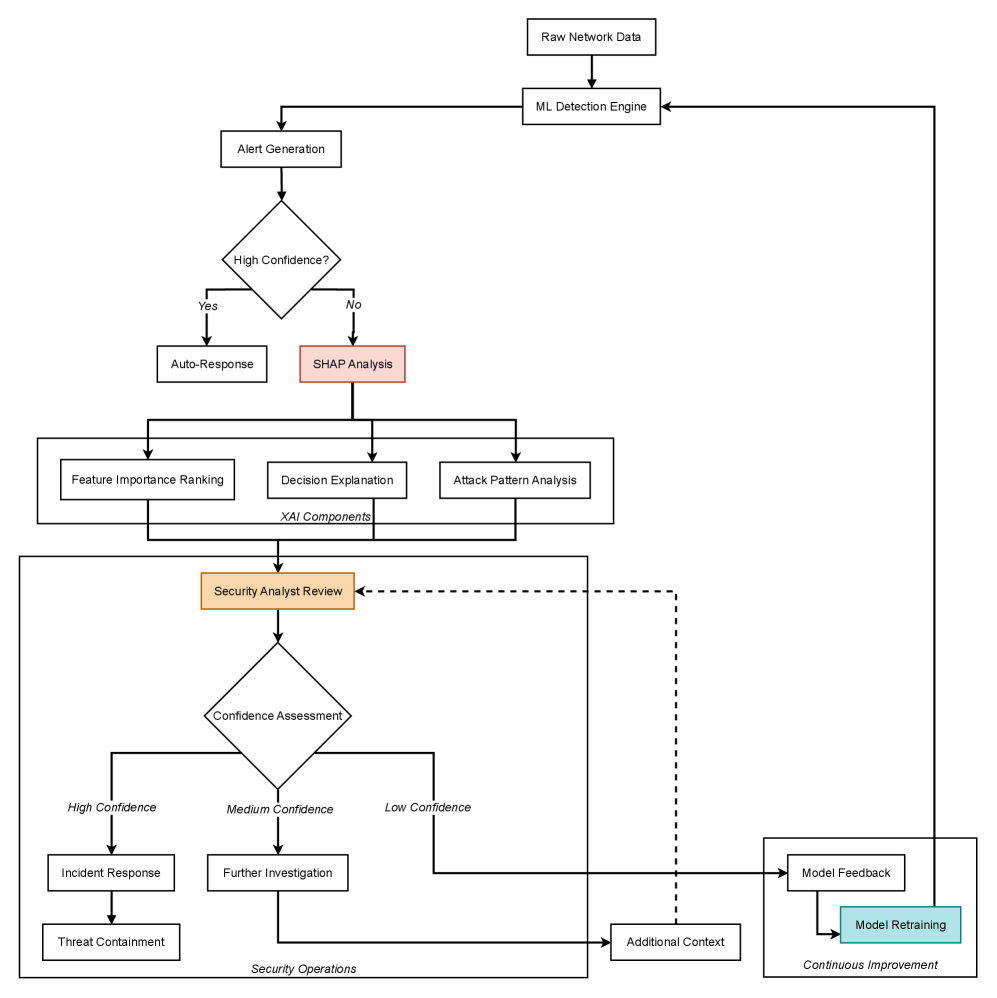

A new framework combines explainable AI with strategic data handling to significantly improve the accuracy and transparency of cybersecurity threat detection.

As large language models become increasingly integrated into autonomous AI agents, ensuring their security requires a fundamental shift towards proactive supply chain oversight.

Researchers have developed a novel diagnostic framework leveraging random matrix theory to assess the underlying quality of crash classification models, moving beyond simple accuracy metrics.

A new framework evaluates the potential for AI-powered mental health support systems to provide unsafe or ineffective care, revealing critical vulnerabilities.

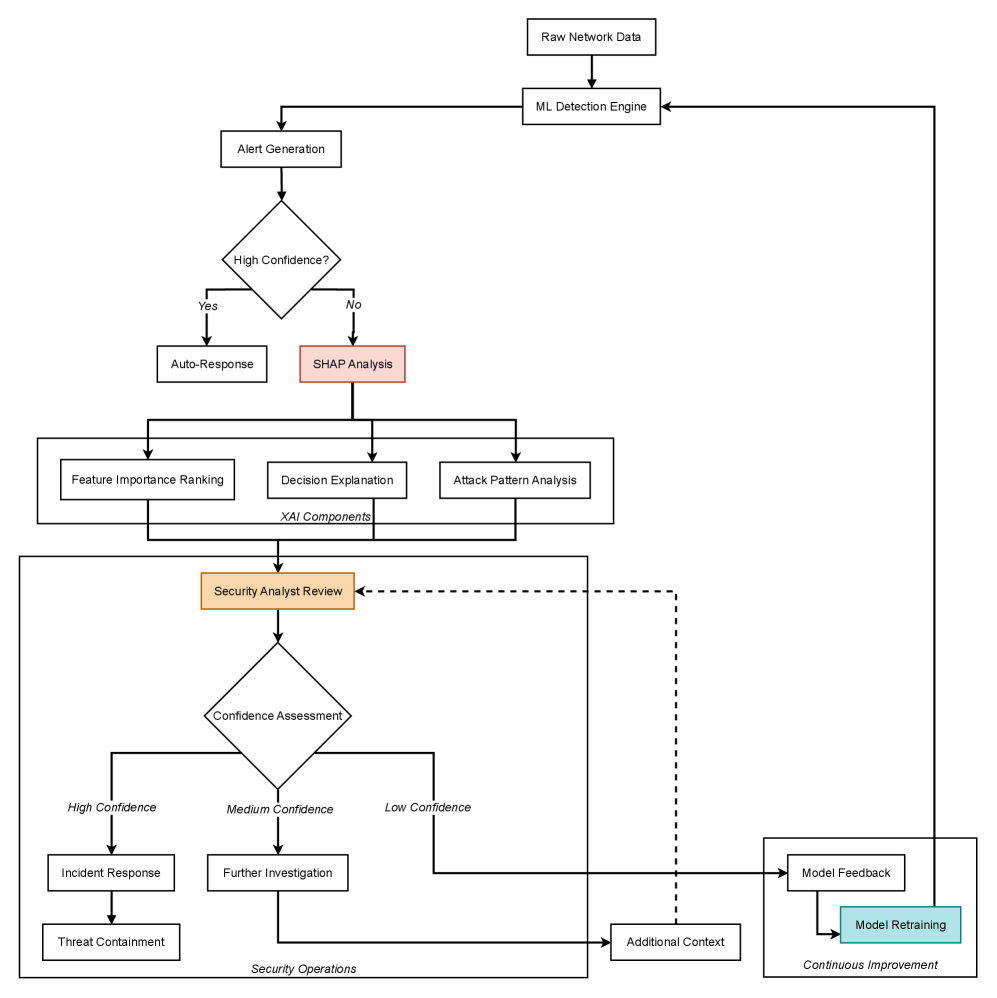

New research reveals that the architecture of stablecoins fundamentally shapes how systemic risk spreads during periods of market turbulence.

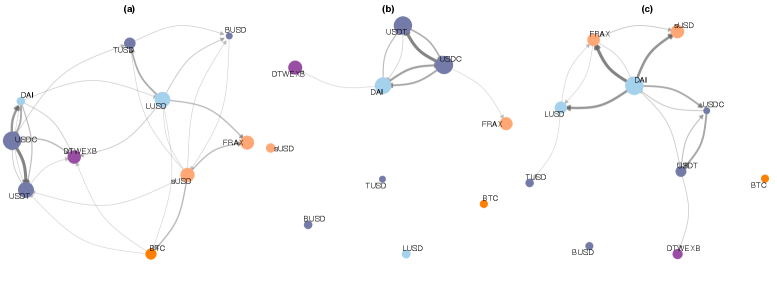

A new study explores whether large language models can effectively translate the complex logic behind credit risk predictions into human-understandable explanations.

A new framework and benchmark suite aim to ensure financial large language models are reliable, transparent, and ready for real-world deployment.

As AI systems become more autonomous, simply improving accuracy isn’t enough: we need to understand and control how failures propagate through complex systems.

A new study rigorously benchmarks the performance of physics-informed neural networks on simulating dynamical systems, highlighting strengths and limitations across different complexities.

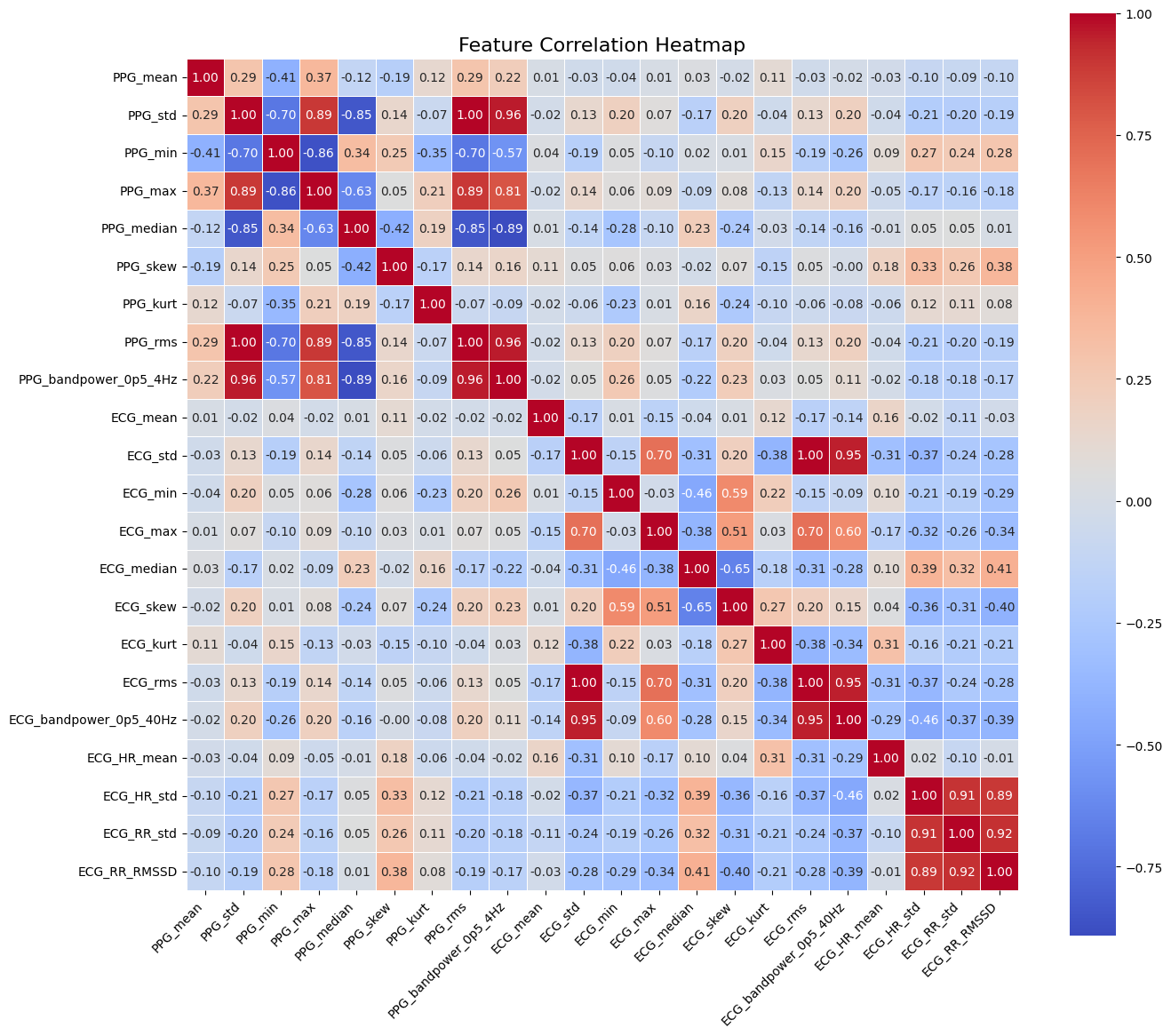

A new machine learning framework leverages combined heart signals to accurately identify atrial fibrillation, a common and dangerous heart condition.