Author: Denis Avetisyan

A new architecture enables secure, verifiable, and economically viable machine learning across distributed edge devices without relying on trusted intermediaries.

This review presents a compositional framework for trustless federated learning leveraging blockchain and incentive mechanisms for decentralized coordination.

While federated learning promises to democratize artificial intelligence by enabling collaborative model training without centralized data collection, current systems lack the robustness needed for truly decentralized and trustworthy operation. The paper, ‘Trustless Federated Learning at Edge-Scale: A Compositional Architecture for Decentralized, Verifiable, and Incentive-Aligned Coordination’, introduces an architecture built on cryptographic techniques to address critical gaps in accountability, incentive alignment, and scalability. By leveraging cryptographic receipts, novelty measurements, and parallel object ownership, the authors outline a path towards verifiable and economically sustainable federated learning at the edge. Could this approach finally unlock the potential of billions of edge devices to collaboratively advance AI, while preserving data privacy and fostering equitable participation?

The Inherent Fragility of Centralized Systems

Conventional machine learning systems often rely on a centralized architecture, where vast amounts of data are aggregated on a single server or within a limited number of data centers. This concentration creates inherent vulnerabilities; a system failure at this central point can disrupt the entire learning process, leading to significant downtime and potential data loss. More critically, this centralized storage of sensitive information presents a lucrative target for malicious actors, increasing the risk of large-scale data breaches and compromising user privacy. The accumulation of data also raises concerns about data ownership and control, as users relinquish direct access to their information. Consequently, the inherent fragility and privacy risks associated with centralized approaches are driving a growing interest in decentralized learning paradigms that distribute data and computation across multiple nodes, enhancing both resilience and security.

Collaborative artificial intelligence development increasingly demands methods that prioritize security, scalability, and data privacy. Traditional models often rely on centralized datasets, creating vulnerabilities to breaches and single points of failure; a compromised central server can halt progress and expose sensitive information. Consequently, research is heavily focused on techniques like federated learning and differential privacy, allowing algorithms to train on distributed datasets without direct data exchange. These approaches not only enhance resilience against attacks but also address growing concerns regarding data ownership and user consent. Successfully implementing such systems requires overcoming significant computational challenges and ensuring that privacy protections do not unduly compromise model accuracy, representing a critical frontier in realizing the full potential of collaborative AI.

A Novel Architecture: PGOT and the Pursuit of Trustless Learning

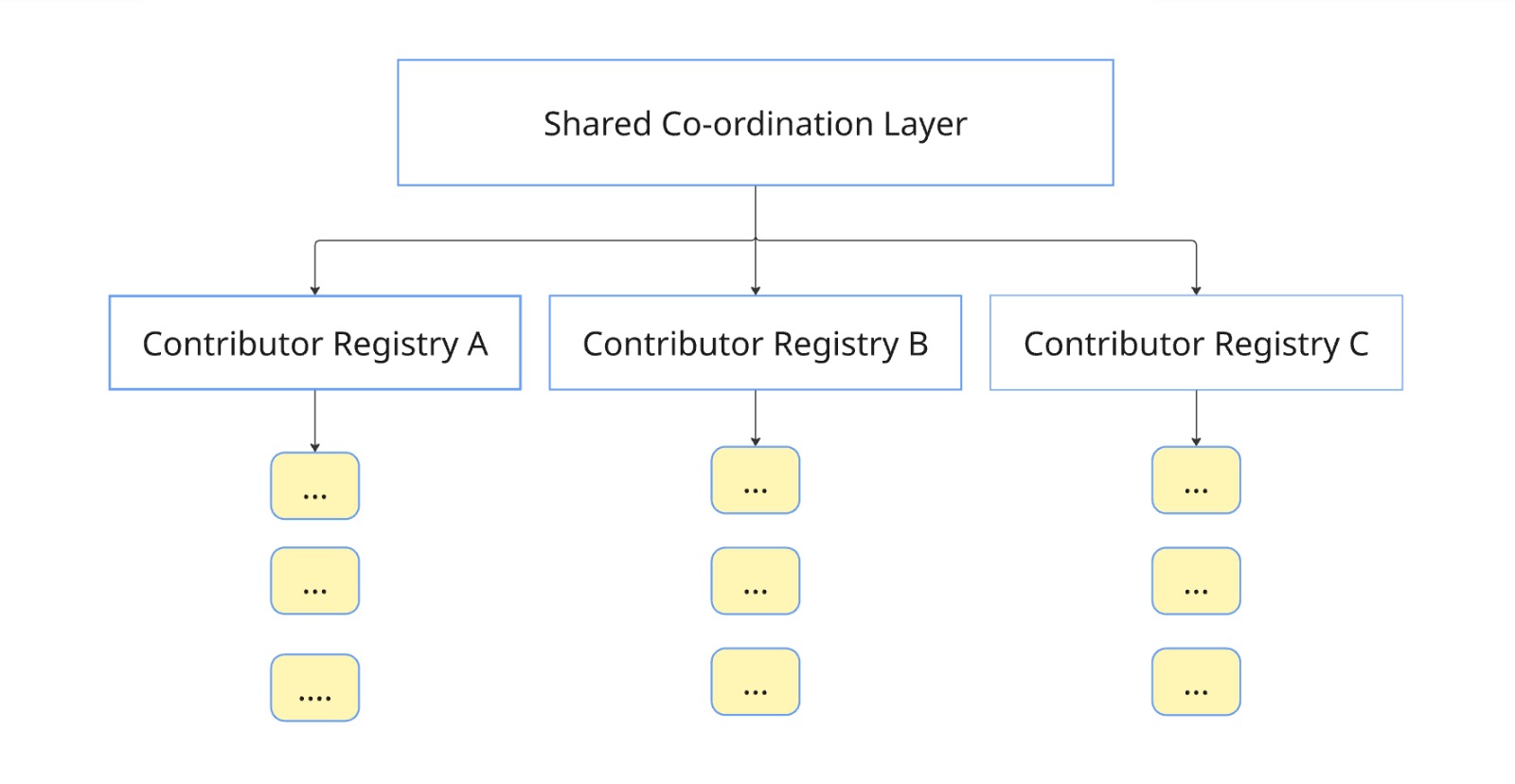

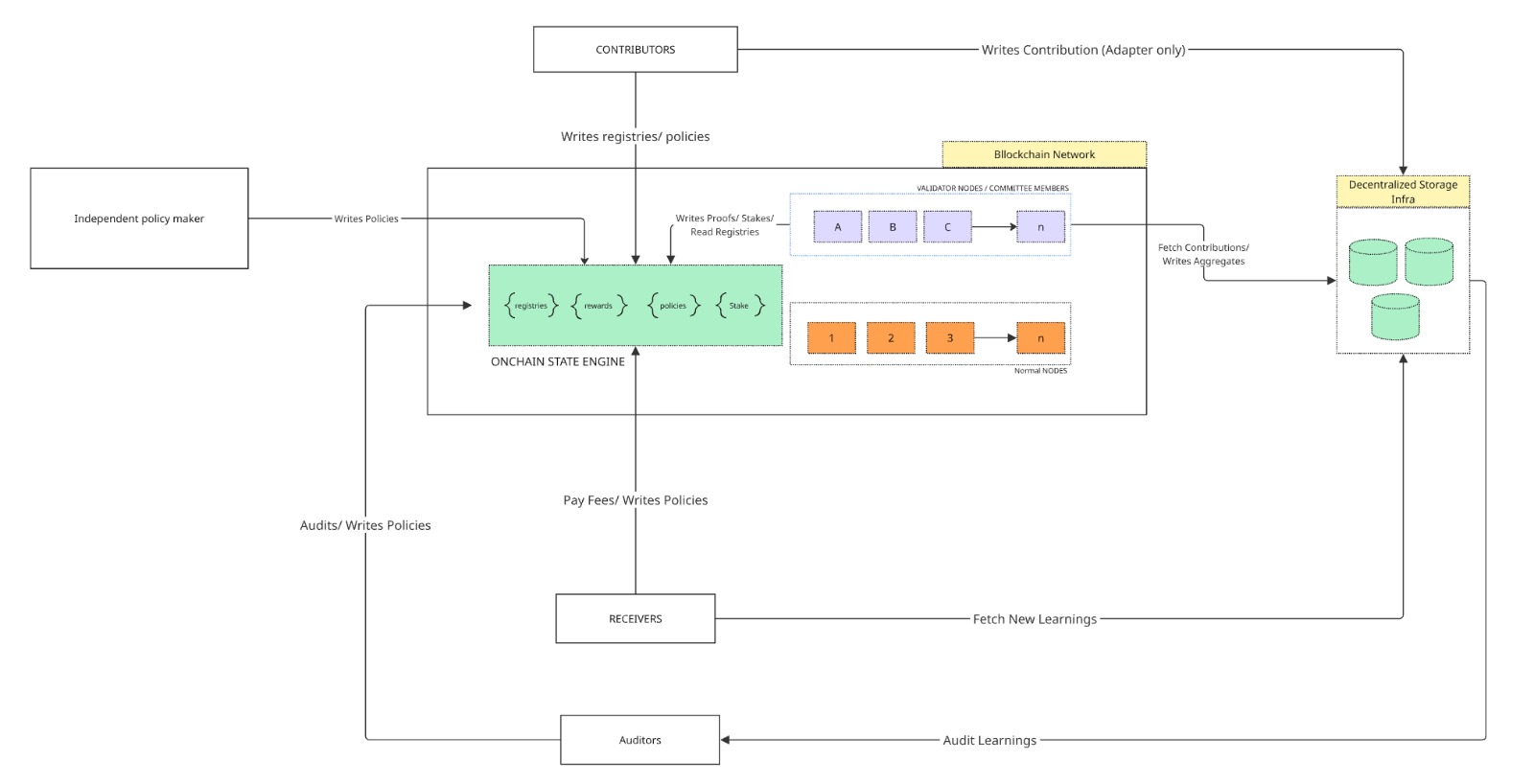

The proposed PGOT architecture builds upon the principles of Federated Learning by incorporating Blockchain Technology to address limitations in coordination and trust. Traditional Federated Learning relies on a central aggregator, creating a single point of failure and potential manipulation. PGOT leverages a blockchain to distribute coordination, enabling secure and verifiable aggregation of model updates from participating nodes. This integration facilitates a trustless environment where contributions are cryptographically verified, ensuring data integrity and preventing malicious actors from compromising the global model. The blockchain component records all model updates and associated contributions, providing an immutable audit trail and enabling incentive mechanisms to reward honest participation.
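
To make the idea of an immutable audit trail concrete, here is a minimal sketch of a hash-chained ledger of model-update records. It is not the paper's implementation: the record fields (`round`, `node`, `update_digest`) and the function names are hypothetical, and a real blockchain adds consensus, signatures, and replication on top of this basic linking.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder predecessor hash for the first record


def record_hash(prev_hash: str, payload: dict) -> str:
    """Hash a record together with the hash of its predecessor."""
    body = json.dumps(payload, sort_keys=True).encode()
    return hashlib.sha256(prev_hash.encode() + body).hexdigest()


def append(ledger: list, payload: dict) -> None:
    """Append a record that commits to everything recorded before it."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS
    ledger.append({
        "payload": payload,
        "prev": prev_hash,
        "hash": record_hash(prev_hash, payload),
    })


def verify(ledger: list) -> bool:
    """Recompute every link; any edited record breaks the chain."""
    prev_hash = GENESIS
    for entry in ledger:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != record_hash(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical records: each stores only a digest of the update, not the update.
ledger = []
for round_id, node in [(1, "node-a"), (1, "node-b"), (2, "node-a")]:
    digest = hashlib.sha256(f"{round_id}:{node}".encode()).hexdigest()
    append(ledger, {"round": round_id, "node": node, "update_digest": digest})

print(verify(ledger))
```

Because each record's hash covers its predecessor's hash, changing any earlier payload invalidates every later link, which is the property that makes after-the-fact edits detectable.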

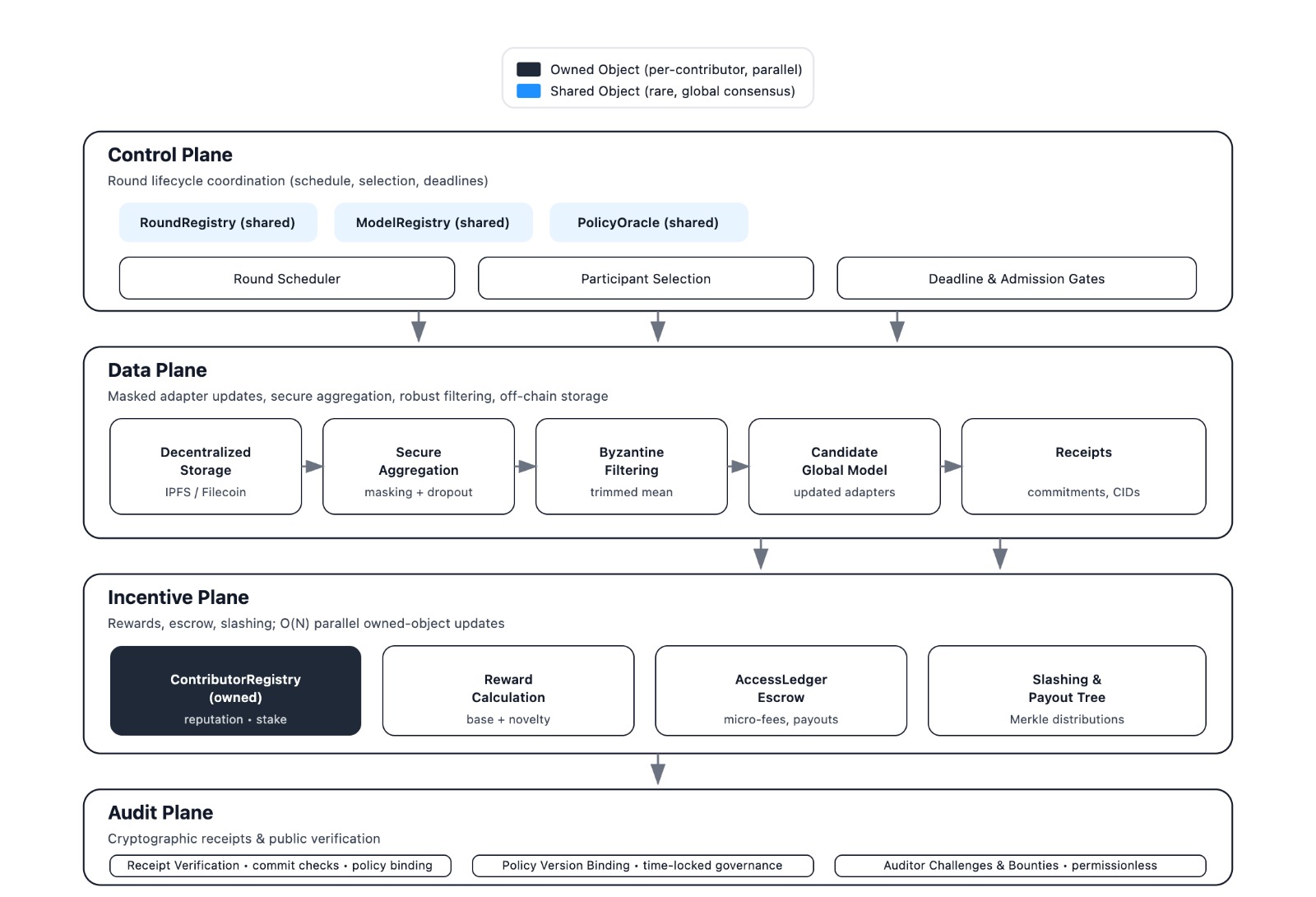

The PGOT architecture relies on four core mechanisms to facilitate federated learning. Proof-Carrying Aggregation verifies the correctness of model updates submitted by participants using cryptographic proofs. Geometric Novelty Decomposition reduces communication overhead by transmitting only the novel components of model changes, based on geometric principles. Object-Centric Coordination organizes the learning process around data objects rather than individual model parameters, improving efficiency and interpretability. Finally, Time-Locked Governance enforces rules and incentivizes participation through time-based constraints on model contributions and reward distribution, ensuring system integrity and fairness.

The PGOT architecture establishes a trustless environment for federated learning by employing cryptographic verification of model updates before aggregation. Each contribution is validated using techniques like zero-knowledge proofs, ensuring only correct computations are incorporated into the global model. This verification process is coupled with an incentive mechanism – likely a token-based system – rewarding participants for honest contributions and penalizing malicious or incorrect submissions. Crucially, this system is designed to preserve data privacy; individual datasets remain local to each participant and are never directly shared or exposed during the verification or aggregation phases. The combination of verifiable computation and incentivization fosters a robust and reliable federated learning process without requiring a central authority or trusting individual participants.

PGOT achieves improved scalability by decoupling state management from consensus frequency. Traditional blockchain systems require full consensus for every transaction or model update, resulting in coordination complexity that scales quadratically with the number of participants, $O(N^2)$, where $N$ is the number of participating nodes. PGOT instead decomposes the global state into smaller, independently verifiable components and employs infrequent, aggregated consensus, which reduces the coordination overhead to linear complexity, $O(N)$. By minimizing the frequency of full consensus rounds and focusing on verifying aggregated contributions, PGOT lowers communication and computational burdens, enabling support for a larger number of participants and more frequent model updates.
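
The gap between the two growth rates can be illustrated with a simple message count. The sketch below assumes one common reading of the quadratic case, in which every participant exchanges a message with every other participant, and models the linear case as one submission per participant; both functions are illustrative and are not taken from the paper.

```python
def all_to_all_messages(n: int) -> int:
    """Every participant sends one message to every other participant."""
    return n * (n - 1)


def aggregated_messages(n: int) -> int:
    """Every participant submits one contribution to be verified in aggregate."""
    return n


for n in (10, 100, 10_000):
    print(n, all_to_all_messages(n), aggregated_messages(n))
```

At 10,000 participants the all-to-all count is 99,990,000 messages against 10,000 submissions, which is why the reduction matters more as the network grows.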

Demonstrating Integrity: Mechanisms for Robust Federated Learning

ProofCarryingAggregation protects both the correctness and the privacy of federated learning model updates by combining SecureAggregation with DifferentialPrivacy. SecureAggregation computes the aggregate model update without revealing any individual participant’s contribution, so neither the aggregator nor other participants can inspect a single client’s update. DifferentialPrivacy adds calibrated noise to the aggregated update, further obscuring individual contributions and providing a quantifiable privacy guarantee. The combination limits data leakage while maintaining model utility, because the noise is scaled to keep its impact on overall performance small. The design also allows the aggregation itself to be verified, confirming the integrity of the process and the validity of the final model update.
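
As an illustration of how an aggregate can be computed without exposing individual updates, the sketch below uses pairwise additive masking, one well-known secure aggregation construction. The article does not say which protocol PGOT uses, so this is an assumption; the modulus, the integer-valued updates, and the noise scale `sigma` are placeholders, and the noise here is not calibrated to any particular privacy budget.

```python
import random

MODULUS = 2**31 - 1  # public constant; all masked arithmetic is done modulo this


def masked_updates(updates, rng):
    """Each pair (i, j) shares a random mask: i adds it, j subtracts it."""
    n = len(updates)
    dim = len(updates[0])
    masked = [list(u) for u in updates]
    for i in range(n):
        for j in range(i + 1, n):
            for d in range(dim):
                mask = rng.randrange(MODULUS)
                masked[i][d] = (masked[i][d] + mask) % MODULUS
                masked[j][d] = (masked[j][d] - mask) % MODULUS
    return masked


def aggregate(masked):
    """Summing the masked vectors cancels every pairwise mask."""
    dim = len(masked[0])
    return [sum(m[d] for m in masked) % MODULUS for d in range(dim)]


def add_gaussian_noise(values, sigma, rng):
    """Add Gaussian noise to the aggregate (sigma is an illustrative value)."""
    return [v + rng.gauss(0.0, sigma) for v in values]


rng = random.Random(0)
updates = [[3, 1, 4], [1, 5, 9], [2, 6, 5]]  # toy integer-encoded updates
masked = masked_updates(updates, rng)
total = aggregate(masked)  # equals the plain sum [6, 12, 18]
noisy_total = add_gaussian_noise(total, sigma=0.5, rng=rng)
print(total, noisy_total)
```

Each masked vector looks random on its own, yet the masks cancel in the sum, so the aggregator learns only the total. A production protocol would also need key agreement between pairs and handling for participants who drop out mid-round.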

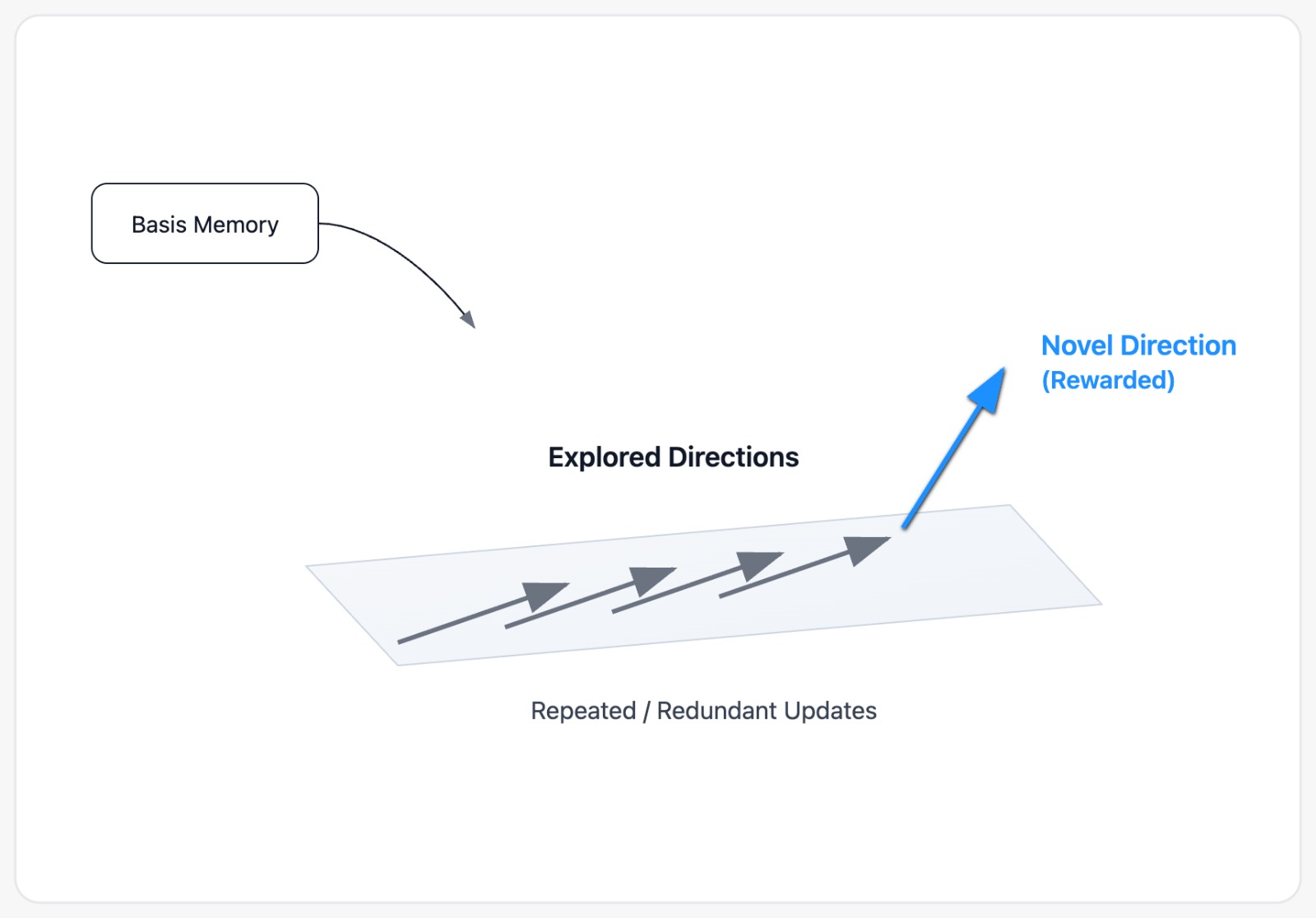

GeometricNoveltyDecomposition mitigates attacks such as ReplayAttack and SybilAttack by quantifying how far a proposed model update lies from previously submitted updates. Parameter updates are embedded in a geometric space and compared by cosine similarity; updates with low similarity to earlier submissions are rewarded, incentivizing exploration of novel regions of the parameter space. Because rewards track genuinely new contributions, resubmitting old parameters (ReplayAttack) or creating multiple identities that submit near-identical updates to amplify a single attacker’s influence (SybilAttack) earns little.
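
A novelty score based on cosine similarity can be sketched in a few lines. The scoring rule below (one minus the highest similarity to any earlier update, floored at zero) is an assumption chosen for illustration; the article does not give the paper's exact formula, and the function names are hypothetical.

```python
import math


def norm(v):
    return math.sqrt(sum(x * x for x in v))


def cosine_similarity(a, b):
    """Cosine of the angle between two nonzero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (norm(a) * norm(b))


def novelty(update, history):
    """Score in [0, 1]: low when the update points the same way as a past one.

    Assumes `history` holds only nonzero updates.
    """
    if norm(update) == 0.0:
        return 0.0  # an all-zero update contributes nothing new
    if not history:
        return 1.0
    closest = max(cosine_similarity(update, past) for past in history)
    return max(0.0, 1.0 - closest)


history = [[1.0, 0.0, 0.0]]
replayed = [1.0, 0.0, 0.0]    # exact resubmission
rescaled = [2.0, 0.0, 0.0]    # same direction, different magnitude
orthogonal = [0.0, 1.0, 0.0]  # a genuinely new direction

for name, update in [("replayed", replayed), ("rescaled", rescaled),
                     ("orthogonal", orthogonal)]:
    print(name, novelty(update, history))
```

Because cosine similarity ignores magnitude, a rescaled copy of an old update scores as low as an exact replay, so duplicating a contribution across several identities would not multiply the reward under this rule.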

The system employs an IncentiveMechanism to reward participants for contributing valuable model updates, utilizing a MicroFee structure to ensure economic viability. This mechanism is designed to function efficiently at a scale of 10,000 contributors operating on a model with 20 million parameters, with a cost of $0.001 per round of updates. This fee covers the computational and communication costs associated with aggregation and validation, ensuring sustainable operation and incentivizing consistent, high-quality contributions from participants.

Low-Rank Adapters improve scalability in federated learning by significantly reducing the communication overhead associated with model updates. Instead of transmitting the full model parameters, each participating client computes and transmits only a low-rank decomposition of its parameter updates. This decomposition, represented by two smaller matrices, drastically reduces the number of values needing transmission; for example, an update to a $d \times k$ weight matrix, which would normally require transmitting $d \cdot k$ values, is reduced to $r(d + k)$ values for two factors of rank $r$, where $r \ll \min(d, k)$. This reduction in communication bandwidth is crucial for deployments with a large number of participants or limited network connectivity, enabling more frequent model updates and faster convergence without being constrained by communication costs.
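
The saving is easy to check numerically. The sketch below assumes the standard low-rank adapter form, in which the update to a $d \times k$ weight matrix is the product of a $d \times r$ factor and an $r \times k$ factor; the layer size and rank are illustrative and are not figures from the paper.

```python
def full_update_size(d: int, k: int) -> int:
    """Values sent when the whole d x k update matrix is transmitted."""
    return d * k


def low_rank_update_size(d: int, k: int, r: int) -> int:
    """Values sent when only a d x r and an r x k factor are transmitted."""
    return r * (d + k)


def matmul(a, b):
    """Plain-Python matrix product, used to rebuild the update from its factors."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


d, k, r = 4096, 4096, 8  # illustrative layer shape and adapter rank
full = full_update_size(d, k)        # 16,777,216 values
low = low_rank_update_size(d, k, r)  # 65,536 values
print(full, low, full // low)

# Tiny rank-1 example: the receiver rebuilds a 3 x 2 update from 5 numbers.
factor_b = [[1], [2], [3]]  # 3 x 1
factor_a = [[4, 5]]         # 1 x 2
print(matmul(factor_b, factor_a))
```

For this illustrative layer the low-rank form sends 256 times fewer values, at the cost of restricting each update to rank at most $r$.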

Towards a Future of Resilient and Scalable Systems

PGOT’s ObjectCentricCoordination represents a significant advancement in addressing scalability challenges within complex computational systems. Rather than processing data serially, this mechanism enables parallel computation by breaking down tasks into independent object-level operations. This approach dramatically reduces communication bottlenecks, as only necessary object data is exchanged between processing units, rather than entire datasets. The result is a substantial increase in throughput and efficiency, allowing the system to handle significantly larger workloads without performance degradation. By focusing on object-level interactions, PGOT minimizes data transfer overhead and maximizes the utilization of available computational resources, paving the way for truly scalable and responsive applications.
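
One way to picture object-level parallelism is a scheduler that groups operations by the objects they touch. The sketch below is an assumption about how such coordination could work, not the paper's protocol: operations that touch disjoint sets of objects share a batch and could run in parallel, while an operation that conflicts with an earlier one is placed in a later batch so their relative order is preserved. The transaction and object names are hypothetical.

```python
def schedule(transactions):
    """Pack transactions into ordered batches with disjoint object sets.

    Each transaction goes into the first batch after the last batch it
    conflicts with, so conflicting transactions keep their submission order.
    """
    batches = []  # each batch is (list of tx ids, set of touched objects)
    for tx_id, objects in transactions:
        target = 0
        for index, (_, touched) in enumerate(batches):
            if not touched.isdisjoint(objects):
                target = index + 1
        if target == len(batches):
            batches.append(([], set()))
        txs, touched = batches[target]
        txs.append(tx_id)
        touched.update(objects)
    return [txs for txs, _ in batches]


transactions = [
    ("t1", {"model_shard_a"}),
    ("t2", {"model_shard_b"}),
    ("t3", {"model_shard_a", "receipt_7"}),  # conflicts with t1
    ("t4", {"receipt_9"}),
]
print(schedule(transactions))  # [['t1', 't2', 't4'], ['t3']]
```

Only `t3` has to wait, because it touches an object that `t1` already uses; the other three operations need no mutual ordering.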

The system’s governance utilizes TimeLockedGovernance coupled with VerifiableComputation to create an immutable and auditable history of model changes. This approach doesn’t simply record what changed, but cryptographically proves the validity of each modification, ensuring no unauthorized or malicious alterations can be introduced. By locking future model states within a time-delayed commitment, the system prevents sudden, unpredictable shifts and allows for community review and potential dispute resolution. This secure record of evolution is vital for maintaining trust and accountability in decentralized applications, particularly where model accuracy and integrity are paramount – effectively creating a “chain of custody” for the model itself, guaranteeing its provenance and preventing tampering over time.
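
The time-delayed commitment can be sketched as a proposal that records a digest of the new policy and only takes effect after a fixed delay. Everything here is an illustrative assumption: time is a logical integer such as a block height, and the `Proposal` type, the policy strings, and the delay value are hypothetical rather than drawn from the paper.

```python
import hashlib
from dataclasses import dataclass


def digest_of(policy_text: str) -> str:
    return hashlib.sha256(policy_text.encode()).hexdigest()


@dataclass(frozen=True)
class Proposal:
    policy_digest: str  # commitment to the exact policy text
    proposed_at: int    # logical time of the proposal, e.g. a block height
    delay: int          # review window before the policy may take effect

    @property
    def activates_at(self) -> int:
        return self.proposed_at + self.delay


def propose(policy_text: str, now: int, delay: int) -> Proposal:
    """Commit to a policy now; it cannot apply before now + delay."""
    return Proposal(digest_of(policy_text), now, delay)


def is_active(proposal: Proposal, policy_text: str, now: int) -> bool:
    """Active only if the text matches the commitment and the delay has passed."""
    matches = digest_of(policy_text) == proposal.policy_digest
    return matches and now >= proposal.activates_at


proposal = propose("reward_rate=0.05", now=100, delay=50)
print(is_active(proposal, "reward_rate=0.05", now=120))  # False: still locked
print(is_active(proposal, "reward_rate=0.05", now=150))  # True: delay elapsed
print(is_active(proposal, "reward_rate=0.50", now=200))  # False: text differs
```

The delay gives participants a window to review a change before it binds them, and the digest prevents the proposer from swapping in a different policy once the window has closed.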

A critical component of ensuring trustworthy and efficient decentralized systems lies in rapid safety verification. Recent advancements demonstrate that safety proxy evaluation, utilizing homomorphic proofs, can now be completed in under ten seconds. This swift verification process allows for real-time assessment of model behavior without revealing sensitive data, a feat previously hampered by computational demands. The speed is achieved through optimized cryptographic techniques and efficient hardware acceleration, enabling continuous monitoring and rapid response to potential risks. Consequently, this breakthrough paves the way for dynamic and adaptive systems capable of maintaining integrity and security even in high-throughput environments, fostering greater confidence in decentralized applications and models.

The system demonstrably incentivizes participation through a cost-effective fee recovery mechanism for receivers. With a minimal transaction fee of just $0.01 per round, receivers consistently recoup their investment by a factor of 3 to 28 times. This substantial return is achieved by leveraging the network’s coordination and verification processes, effectively rewarding accurate computation and data handling. The economic model not only sustains network activity but also promotes a robust and reliable infrastructure for decentralized applications, making participation financially attractive and fostering long-term scalability.

The developed architecture extends beyond theoretical scalability, promising tangible benefits across multiple sectors. In healthcare, it facilitates secure analysis of sensitive patient data, enabling collaborative research without compromising individual privacy, a critical step toward personalized medicine. Simultaneously, decentralized financial modeling gains a robust foundation for transparent and auditable systems, mitigating risks associated with centralized control and fostering innovation in areas like algorithmic trading and risk management. This combination of security, scalability, and verifiability positions the architecture as a foundational technology for building trustworthy and efficient systems in data-intensive applications, opening doors to previously unattainable levels of collaboration and insight.

The pursuit of decentralized systems, as detailed in this architecture for trustless federated learning, inevitably encounters the constraints of time and complexity. This work attempts to build a scaffolding against decay, a compositional framework to maintain verifiable coordination at the edge. As Carl Friedrich Gauss observed, “If others would think as hard as I do, they would not have so little to think about.” The elegance of this system lies not merely in its technical innovations (blockchain integration, incentive mechanisms) but in its acknowledgement that sustained functionality requires diligent construction and constant vigilance against the inevitable erosion of any complex system. Stability, in such endeavors, isn’t a permanent state, but rather a meticulously engineered delay of the inherent disorder.

What Lies Ahead?

The presented architecture, while addressing immediate concerns of trust and incentive in edge-based federated learning, merely shifts the locus of eventual decay. Every abstraction carries the weight of the past; the composability achieved today will inevitably face the pressures of evolving hardware, shifting economic landscapes, and novel adversarial strategies. The challenge isn’t to eliminate trust, but to design systems where the cost of verifying trust remains less than the cost of accepting its absence – a perpetually moving target.

Future work must confront the inherent fragility of economic models within decentralized systems. Incentive mechanisms, however elegantly constructed, are susceptible to unforeseen exploits and the relentless drive toward optimization. A truly resilient system will not seek to prevent defection, but to accommodate it, absorbing shocks and re-equilibrating without catastrophic failure. This necessitates exploring adaptive governance models and mechanisms for dynamic reputation management, acknowledging that consensus is a temporary state, not a permanent one.

Ultimately, the pursuit of ‘trustless’ systems is a paradoxical endeavor. All systems rely on underlying assumptions, and time tests those assumptions relentlessly. Only slow change preserves resilience; the true measure of success will not be the initial deployment of this architecture, but its capacity to gracefully accommodate the inevitable entropy of the future. The focus should be on minimizing the surface area for failure, not eliminating the possibility of it.

Original article: https://arxiv.org/pdf/2511.21118.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2025-11-29 12:54